
Scaling Made AI Legible

A book note on The Scaling Era: An Oral History of AI, 2019–2025.

By Robby Sneiderman.

Scaling made AI legible.

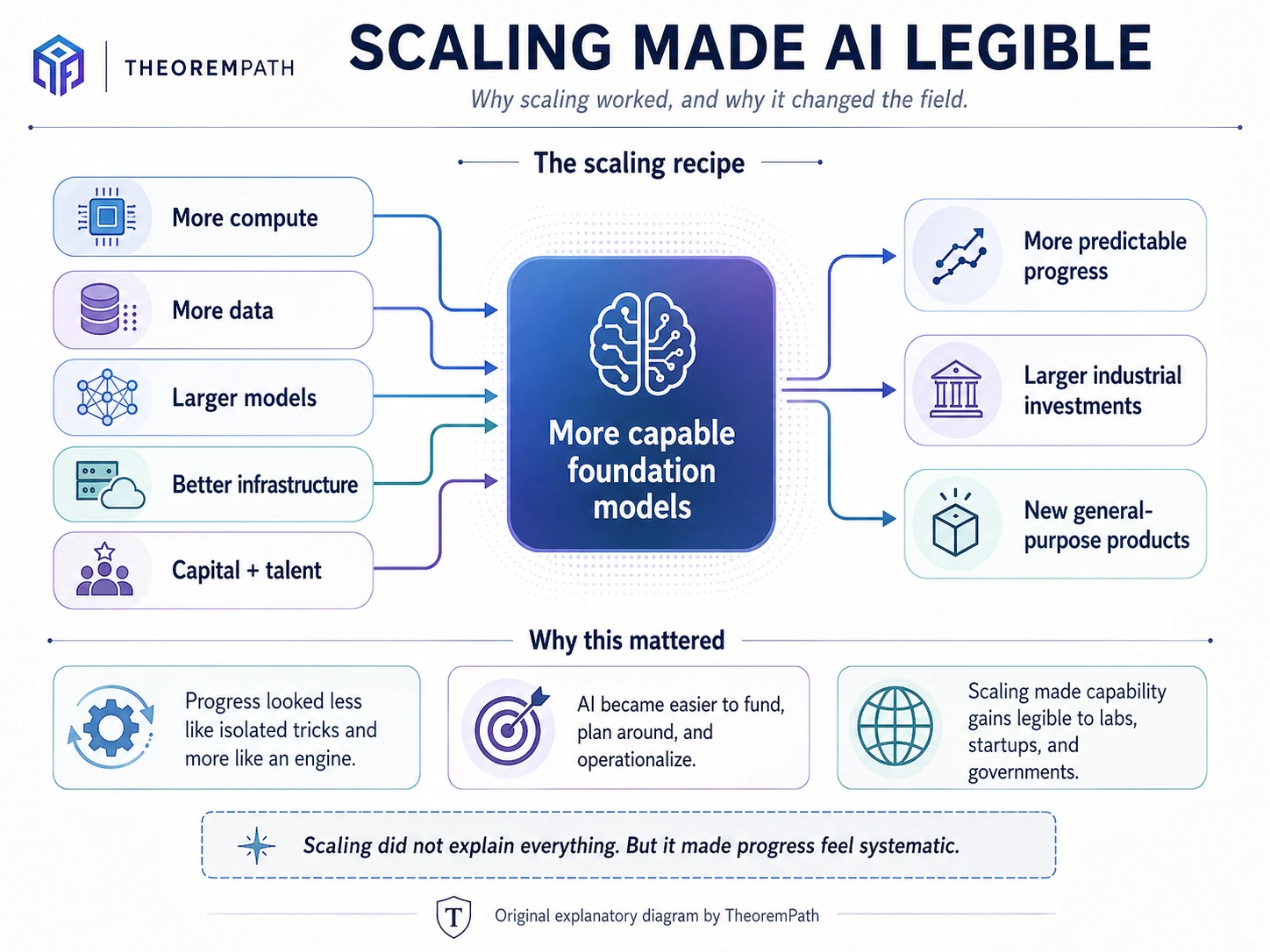

For several years, the field of artificial intelligence had a recipe that was unusually easy to organize around: more compute, more data, larger models, better infrastructure, and better execution. The surprising part was not only that this worked. It was that it worked reliably enough to change the psychology of the field.

AI progress started to feel less like a scattered collection of fragile research tricks and more like an industrial process.

That is why The Scaling Era is a useful book. Published by Stripe Press, The Scaling Era: An Oral History of AI, 2019–2025 is by Dwarkesh Patel with Gavin Leech. Stripe describes it as a set of curated excerpts from Dwarkesh’s interviews with leading AI researchers and company founders, including Ilya Sutskever, Dario Amodei, Demis Hassabis, Eliezer Yudkowsky, and Mark Zuckerberg. The book covers technical questions about large language models, the possibility of AI takeover, explosive economic growth, and what comes next.[1]

A normal technology book often tries to impose a clean thesis too early. The Scaling Era is more useful as a record of a live transition. It shows a field trying to understand what had just happened while the consequences were still unfolding.

The central fact of that period was scaling.

For a while, the most reliable way to make AI systems better was to scale the recipe: more compute and data poured into larger models, with better training runs and infrastructure absorbing most of the field’s talent.

This did not explain everything. It did not remove the need for research. It did not make model behaviour fully understood. But it gave the field an engine.

That engine mattered because it made progress investable. If you could raise enough capital, acquire enough GPUs, hire enough infrastructure talent, and execute at sufficient scale, you had a plausible path to better systems. The frontier still required exceptional people, but the broad direction was legible enough for companies and governments to organize around it.

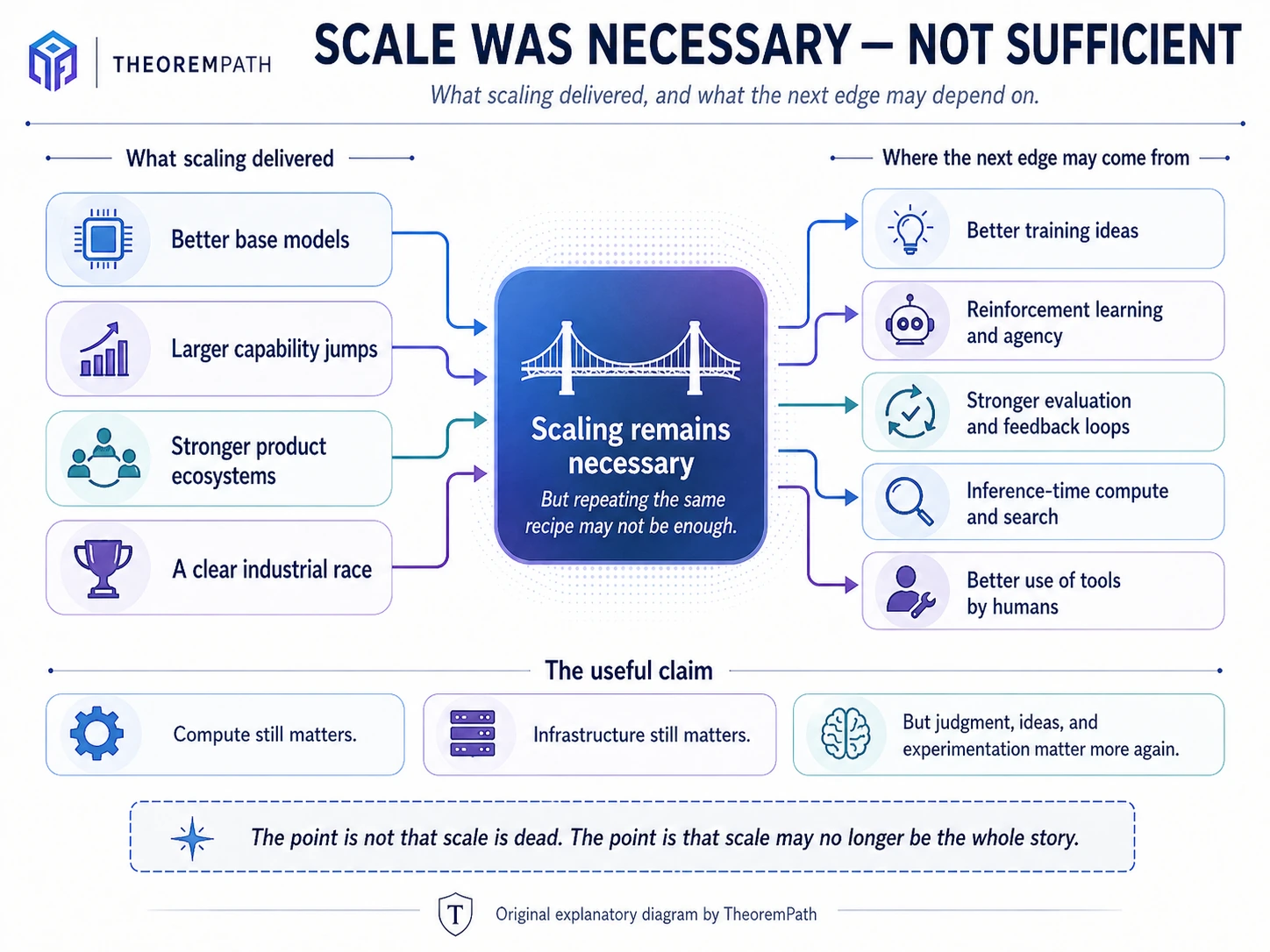

But the important question now is whether that recipe is still enough.

Ilya Sutskever’s recent conversation with Dwarkesh makes the issue sharper. Ilya describes roughly 2012 to 2020 as an age of research, 2020 to 2025 as an age of scaling, and the current moment as a return to research “just with big computers.” His point is not that compute stops mattering. It is that simply making the same recipe 100 times bigger may no longer transform the field in the same way.[2]

The next phase is not “scale is dead.” That is too simple. Compute still matters. Data still matters. Infrastructure still matters. Engineering still matters.

The better claim is that scale may no longer be the whole story.

The next edge may come from better training ideas, better reinforcement learning, better generalization, stronger evaluation, better use of inference-time compute, better value functions, and better ways of making models learn from less.

It may also come from humans learning to use the tools better.

That last point sounds less glamorous than frontier research, but it matters. A model’s capability is not the same thing as a useful workflow. The real world still requires judgment, evaluation, context, integration, and responsibility. A tool can be powerful and still easy to misuse.

This is where the scaling conversation starts to matter outside AI labs.

Most people are not asking whether pretraining has hit a data wall. They are asking whether their job is safe, whether their degree still matters, what they should study, and whether AI will make them more productive or easier to replace.

Those fears are not irrational. The World Economic Forum’s Future of Jobs Report 2025 says technological change, economic uncertainty, demographic shifts, and the green transition are among the major drivers expected to transform the global labour market by 2030; the report draws on more than 1,000 global employers representing over 14 million workers across 22 industry clusters and 55 economies.[3] Gallup also reports that 42% of bachelor’s degree students say AI has caused them to give at least a fair amount of thought to changing their major, and that 16% of currently enrolled students report already changing their major or field of study because of AI’s potential impact.[4]

So the anxiety is real. But the usual framing is weak.

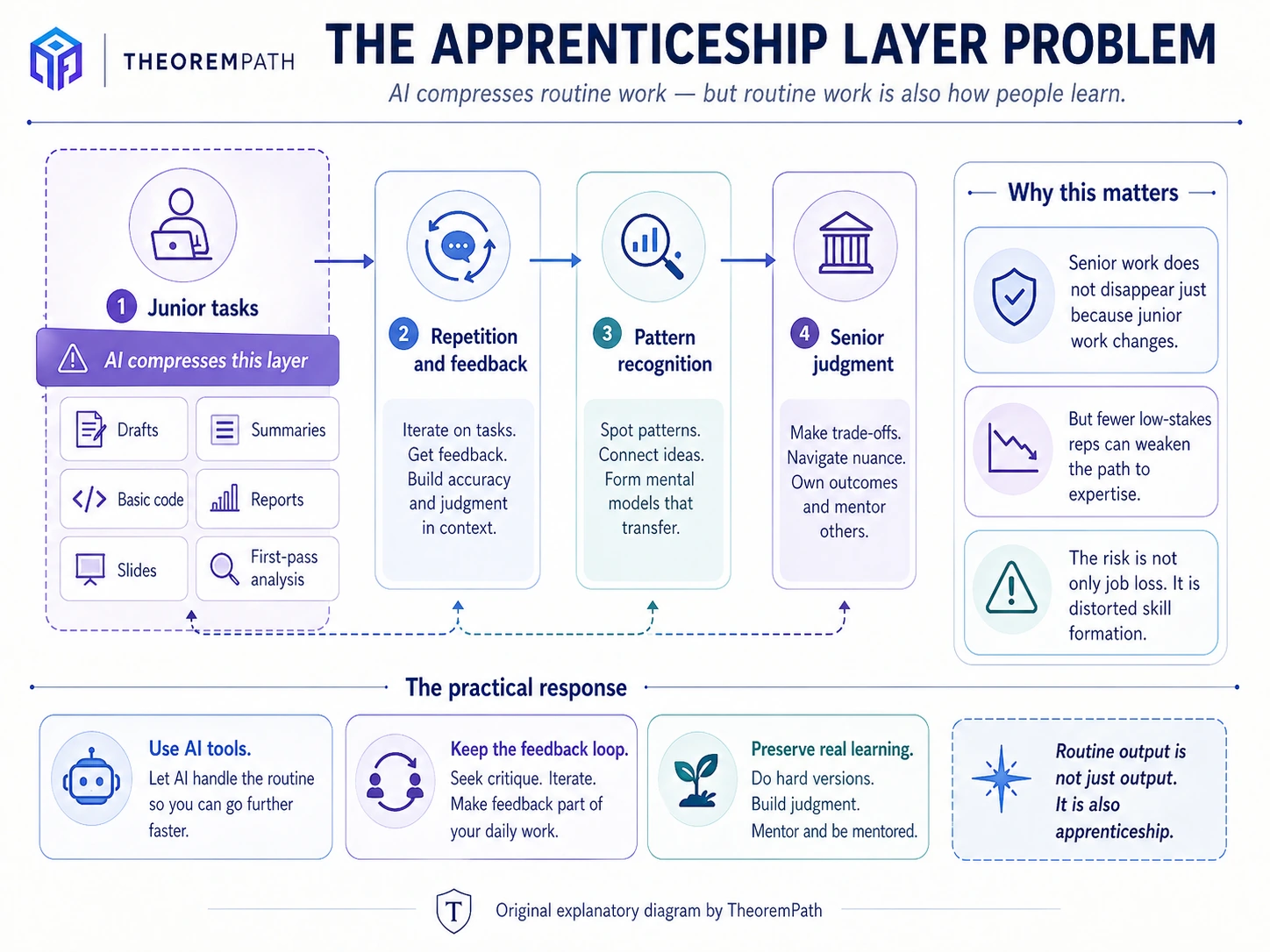

AI is not replacing jobs in one clean motion. It is changing the work inside jobs. Some tasks become faster, cheaper, or less dependent on junior labour. That does not mean every profession disappears. It means the training path can get distorted.

The senior expert may still be valuable, but the early work that teaches people how to become experts may be weakened.

That is the more realistic concern.

A lot of people learn by doing low-level work: drafting, summarizing, writing basic code, preparing reports, cleaning data, making slides, doing first-pass analysis, checking details, and getting feedback. These tasks are not glamorous, but they are how juniors become competent.

If AI absorbs too much of that layer, organizations may become more efficient in the short term while weakening the pipeline that produces future experts.

That is the apprenticeship layer problem.

The risk is not only job loss. It is distorted skill formation.

This matters because the common response to AI is still too defensive. People ask what field AI “cannot touch.” That is the wrong question. AI will touch software, statistics, law, medicine, design, education, public administration, engineering, and business.

The better question is:

Where does human judgment become more valuable when routine production gets cheaper?

Computer science still matters, but not because basic coding will remain scarce. It probably will not. The leverage is in systems, infrastructure, security, machine learning, data engineering, evaluation, and understanding where automated systems fail.

Math and statistics still matter, but not because formulas are safe from automation. Routine analysis will be automated aggressively. The value is in abstraction, uncertainty, causal reasoning, experimental design, model criticism, and knowing when an answer is numerically clean but conceptually wrong.

Writing still matters, but generic writing is in trouble. AI makes bland competence easy. That means structure, judgment, voice, taste, and actual insight matter more.

The same pattern holds almost everywhere. AI compresses routine production. It does not remove responsibility.

This is also where many anti-AI attitudes become unconvincing. There are serious criticisms of AI: reliability, copyright, safety, labour effects, bias, evaluation, institutional dependence, and the risk of weakening skill formation. Those are real issues. But refusing to engage with the tools does not make someone rigorous. It often just means they have not developed taste with the tools yet.

The calculator analogy is imperfect, but useful. A calculator did not remove the need to understand mathematics. It changed where the work sits. You still need to know what problem you are solving, whether the setup is valid, whether the answer makes sense, and what assumptions are hidden in the computation.

AI tools are similar, except the failure modes are richer. They can produce fluent nonsense. They can hide uncertainty. They can make weak reasoning look polished. They can overfit to conventional explanations. They can help you move faster in the wrong direction.

That is exactly why serious use matters.

A person who uses AI casually may see it as a toy or a shortcut. A person who uses it every day for coding, research, debugging, writing, simulation, data analysis, paper reading, and system design starts to see both its power and its limits.

The gap will not be between people who “believe in AI” and people who do not. That framing is too crude. The gap will be between people who have learned how to think and build with these systems, and people who keep treating them as optional.

For students, the answer is not to panic-change majors. The answer is to choose a domain where AI gives leverage, then build enough technical fluency to use that leverage better than peers.

For workers, the answer is not to wait for official training. The answer is to map your own workflow: what can be automated, what can be accelerated, what still requires judgment, and where the tool fails.

For builders, the answer is not to chase every model release. The answer is to build real things, test assumptions, and learn which AI capabilities are reliable enough for production.

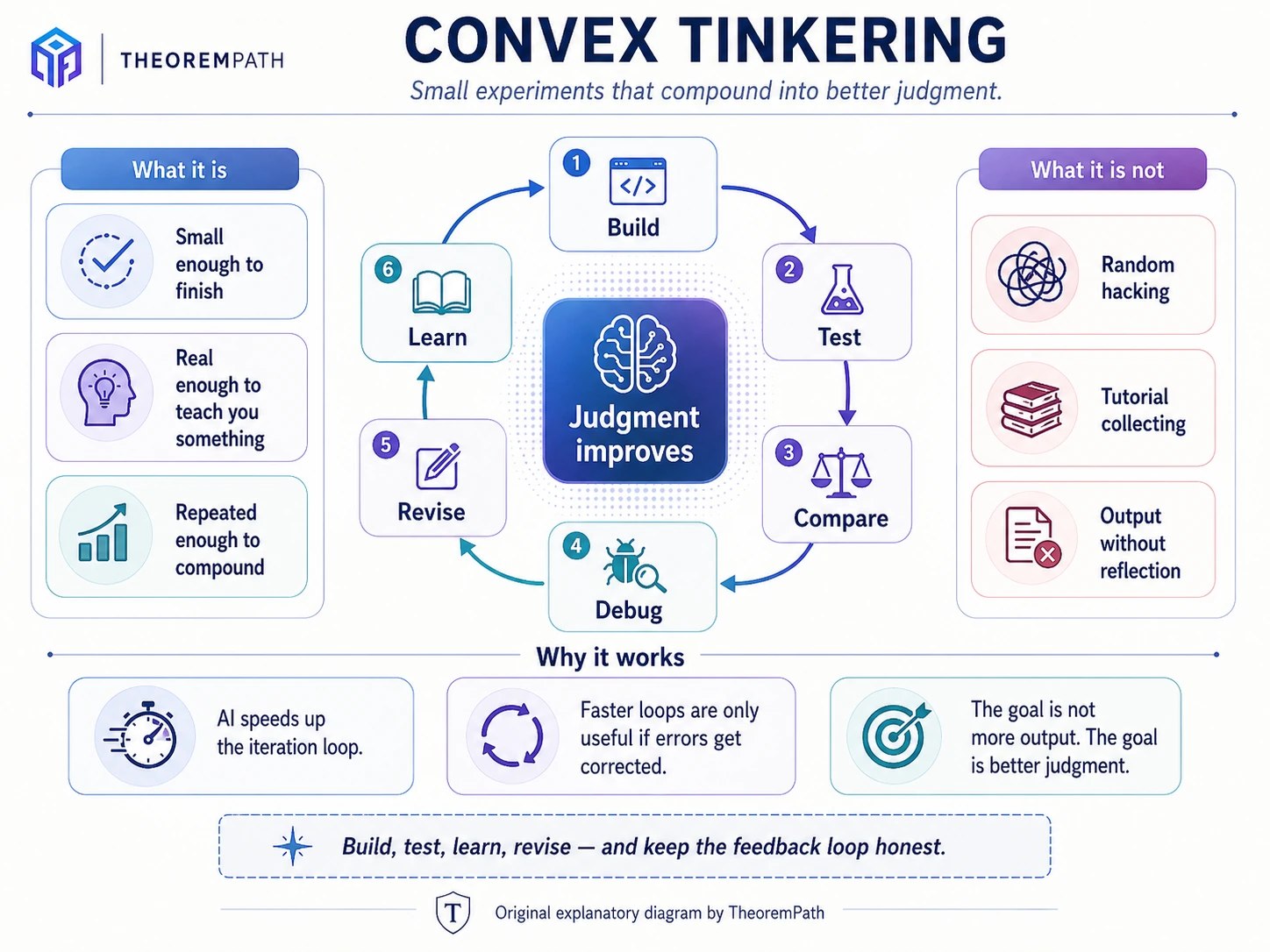

I still like the phrase convex tinkering, but it has to stay plain.

By convex tinkering, I mean small experiments that compound: build, test, compare, debug, revise, learn.

Not random hacking. Not chasing every tool. Not collecting tutorials. The point is to choose projects small enough to finish, but real enough to teach you something. Each attempt should make the next attempt sharper. You hit a wall, understand the wall, and then try again with a better question.

AI changes the iteration loop. You can move faster through documentation, code, simulations, drafts, examples, explanations, and prototypes. But faster iteration only matters if you keep improving your judgment. Otherwise you just generate more output with the same weak thinking underneath.

This connects back to Ilya’s point.

If the next phase of AI progress is more research-shaped, then the important skill is not memorizing a fixed path. It is learning how to investigate: how to ask better questions, how to test ideas, how to notice when an answer is plausible but wrong, and how to use tools without letting them think for you.

That is true inside frontier labs, but it is also true for ordinary technical work.

The scaling era made AI progress feel like a race of bigger runs. Whatever comes next will be messier. It will depend more on ideas, evaluation, taste, and the ability to search through uncertainty.

That is uncomfortable, but clarifying.

The practical response is not panic or worship. It is steadier than that: learn the tools, build real things, test your assumptions, and keep improving your judgment.

Sources and notes

This post was prompted by The Scaling Era: An Oral History of AI, 2019–2025 by Dwarkesh Patel with Gavin Leech, published by Stripe Press. Stripe describes the book as a curated selection of Dwarkesh’s interviews with leading AI researchers and company founders, ranging from technical details of LLMs to AI takeover and explosive economic growth.[1]

The “age of research / age of scaling / research again with big computers” framing comes from Ilya Sutskever’s November 2025 conversation with Dwarkesh Patel. In that transcript, Ilya says that 2012–2020 was roughly an age of research, 2020–2025 an age of scaling, and that with scale now very large, the field is “back to the age of research again, just with big computers.”[2]

The labour-market and education examples are included to motivate the practical section, not as precise forecasts. The World Economic Forum’s Future of Jobs Report 2025 is based on employer perspectives across more than 14 million workers. Gallup’s April 2026 article reports survey results from the Lumina Foundation-Gallup 2026 State of Higher Education Study, including bachelor’s degree students reconsidering majors because of AI and students reporting major changes due to AI’s possible impact.[3][4]

References

- [1] Stripe Press. The Scaling Era: An Oral History of AI, 2019–2025. press.stripe.com/scaling

- [2] Dwarkesh Patel. “Ilya Sutskever — We’re moving from the age of scaling to the age of research.” November 25, 2025. dwarkesh.com

- [3] World Economic Forum. The Future of Jobs Report 2025. Published January 7, 2025. weforum.org

- [4] Stephanie Marken, Gallup. “College Students Weigh AI’s Impact on Majors and Careers.” April 2, 2026. news.gallup.com
