Copulas

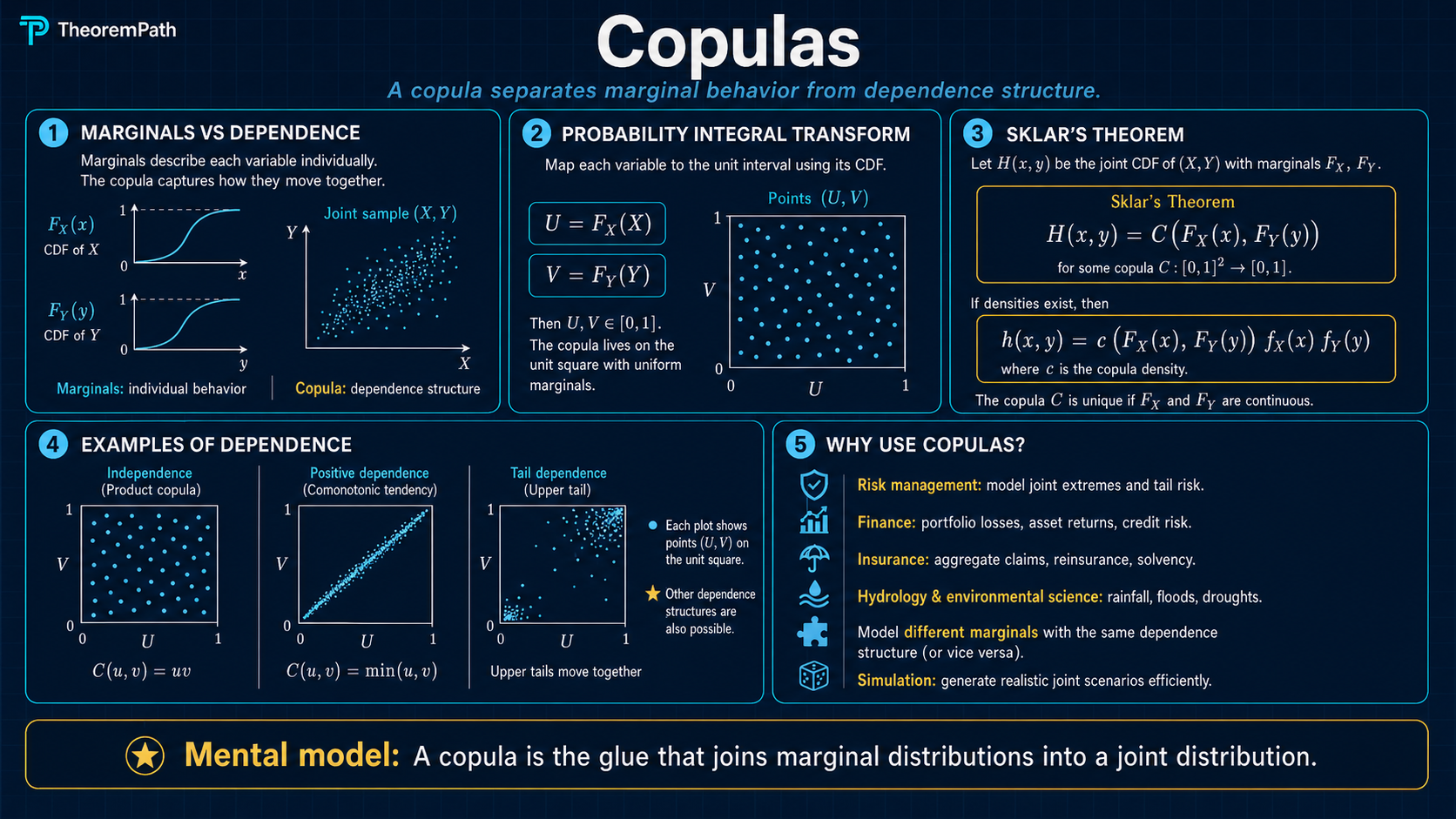

Copulas separate the dependence structure of a multivariate distribution from its marginals. Sklar's theorem guarantees that any joint CDF can be decomposed into marginals and a copula, making dependence modeling modular.

Why This Matters

Correlation does not fully capture dependence: it measures only linear association. Two random variables can have zero correlation yet be strongly dependent, for example $X \sim N(0,1)$ and $Y = X^2$. Copulas give you a complete description of the dependence structure, separated cleanly from the marginal distributions.
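A minimal numerical sketch of the zero-correlation-but-dependent claim, assuming the illustrative pair $X \sim N(0,1)$, $Y = X^2$ (the sample size and seed are arbitrary choices):

```python
import numpy as np

rng = np.random.default_rng(0)
x = rng.standard_normal(200_000)
y = x**2  # y is a deterministic function of x, so the pair is strongly dependent

# Pearson correlation only sees linear association, which is absent here.
pearson = np.corrcoef(x, y)[0, 1]

# The dependence becomes visible once the sign of x is removed.
pearson_abs = np.corrcoef(np.abs(x), y)[0, 1]

print(f"corr(X, X^2)   = {pearson:.3f}")
print(f"corr(|X|, X^2) = {pearson_abs:.3f}")
```

The first number should be close to zero and the second close to one, even though both pairs carry the same underlying randomness.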

This separation is not cosmetic. It is practically essential. In finance, risk models that assumed Gaussian dependence (ignoring tail dependence) contributed to massive underestimates of joint extreme losses. In survival analysis, copulas let you model the dependence between failure times without constraining the marginal survival functions.

Mental Model

Think of building a multivariate distribution in two steps. First, choose the marginal distributions (each variable's individual behavior). Second, choose the copula (how the variables move together). Sklar's theorem says this two-step decomposition always works and always gives you a valid joint distribution.

The copula lives on the unit cube $[0,1]^d$. It takes uniform marginals as inputs and produces a joint distribution on $[0,1]^d$. The probability integral transform maps any continuous marginal to uniform, so the copula captures only the dependence, with all expectation, variance, and higher moment information stripped away.
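The probability integral transform can be checked numerically. The sketch below assumes two illustrative marginals (an exponential and a lognormal, neither taken from the text) and uses `scipy.stats` CDFs to map each sample to the unit interval:

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(1)
n = 100_000

# Two very different continuous marginals (both choices are illustrative).
x_exp = rng.exponential(scale=2.0, size=n)
x_logn = rng.lognormal(mean=0.0, sigma=1.0, size=n)

# Probability integral transform: apply each variable's own CDF.
u_exp = stats.expon.cdf(x_exp, scale=2.0)
u_logn = stats.lognorm.cdf(x_logn, s=1.0)  # s is sigma; scale defaults to exp(mean) = 1

# Uniform(0, 1) has mean 1/2 and variance 1/12, whatever the original marginal was.
for name, u in (("exponential", u_exp), ("lognormal", u_logn)):
    print(f"{name:12s} mean={u.mean():.3f} var={u.var():.4f}")
```

Both transformed samples should show mean near 0.5 and variance near 0.0833, which is the sense in which marginal information is stripped away.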

Formal Setup and Notation

Let $X_1, \dots, X_d$ be random variables with continuous marginal CDFs $F_1, \dots, F_d$ and joint CDF $F$.

Copula

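A copula is a multivariate CDF on $[0,1]^d$ with uniform marginals. That is, $C: [0,1]^d \to [0,1]$ such that:

- $C(u_1, \dots, u_d) = 0$ if any $u_i = 0$

- $C(1, \dots, 1, u_i, 1, \dots, 1) = u_i$ for all $u_i \in [0,1]$

- $C$ is $d$-increasing (assigns nonnegative probability to every hyperrectangle)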
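The three defining properties can be checked on a grid in the bivariate case. This is a rough sketch, not a proof: the helper name `check_bivariate_copula`, the grid size, and the tolerance are all hypothetical choices, and a grid check can only detect violations, never certify a function as a copula.

```python
import numpy as np

def check_bivariate_copula(C, m=51, tol=1e-12):
    """Grid check of groundedness, uniform margins, and the 2-increasing property."""
    g = np.linspace(0.0, 1.0, m)
    U, V = np.meshgrid(g, g, indexing="ij")
    vals = C(U, V)

    # C(0, v) = C(u, 0) = 0
    grounded = np.allclose(vals[0, :], 0.0) and np.allclose(vals[:, 0], 0.0)
    # C(1, v) = v and C(u, 1) = u
    margins = np.allclose(vals[-1, :], g) and np.allclose(vals[:, -1], g)
    # C-volume of each grid cell [u_i, u_{i+1}] x [v_j, v_{j+1}] must be nonnegative
    vol = vals[1:, 1:] - vals[:-1, 1:] - vals[1:, :-1] + vals[:-1, :-1]
    two_increasing = bool(np.all(vol >= -tol))
    return grounded, margins, two_increasing

print(check_bivariate_copula(lambda u, v: u * v))      # independence copula
print(check_bivariate_copula(np.minimum))              # comonotonicity copula
print(check_bivariate_copula(lambda u, v: u * v**2))   # not a copula: C(1, v) != v
```

Each call returns a tuple of three booleans, one per property in the list above.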

Main Theorems

Sklar's Theorem

Statement

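For any joint CDF $F$ with marginal CDFs $F_1, \dots, F_d$, there exists a copula $C$ such that:

$$F(x_1, \dots, x_d) = C(F_1(x_1), \dots, F_d(x_d))$$

If $F_1, \dots, F_d$ are all continuous, then $C$ is unique.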

Intuition

Any joint distribution can be factored into its marginals and a copula. Conversely, you can combine any set of marginals with any copula to get a valid joint distribution. This is the modular decomposition that makes copulas so useful.

Proof Sketch

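Define $C(u_1, \dots, u_d) = F(F_1^{-1}(u_1), \dots, F_d^{-1}(u_d))$, where $F_i^{-1}$ is the quantile function of $X_i$. When the marginals are continuous, the probability integral transform gives $F_i(X_i) \sim \mathrm{Uniform}(0,1)$, so $C$ is the joint CDF of $(F_1(X_1), \dots, F_d(X_d))$ and is a valid copula. Uniqueness follows from the continuity of the marginals: each $F_i$ then takes every value in $(0,1)$, so Sklar's identity pins down $C$ on the whole unit cube.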
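The converse direction (any copula plus any marginals gives a valid joint distribution) can be sketched by simulation. The example below assumes a bivariate Gaussian copula with $\rho = 0.6$ and exponential and gamma marginals; all of these are illustrative choices, not taken from the text.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(2)
rho, n = 0.6, 20_000

# Step 1 (dependence): draw from a bivariate Gaussian copula.
cov = np.array([[1.0, rho], [rho, 1.0]])
z = rng.multivariate_normal(mean=[0.0, 0.0], cov=cov, size=n)
u = stats.norm.cdf(z)                    # each column is Uniform(0, 1)

# Step 2 (marginals): push the uniforms through the chosen quantile functions.
x1 = stats.expon.ppf(u[:, 0])            # standard exponential, mean 1
x2 = stats.gamma.ppf(u[:, 1], a=3.0)     # Gamma with shape 3, mean 3

# The marginals are as chosen, and the dependence is inherited from the copula:
# for a Gaussian copula, Kendall's tau equals (2 / pi) * arcsin(rho).
tau, _ = stats.kendalltau(x1, x2)
tau_theory = 2.0 / np.pi * np.arcsin(rho)
print(f"mean(x1)={x1.mean():.3f}  mean(x2)={x2.mean():.3f}")
print(f"Kendall tau: sample={tau:.3f}  theory={tau_theory:.3f}")
```

Swapping the quantile functions in step 2 changes the marginals without touching the rank dependence, which is the modularity the theorem describes.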

Why It Matters

This theorem is the entire foundation of copula theory. It tells you that modeling marginals and modeling dependence are genuinely separable problems. You can estimate marginals nonparametrically and dependence via a parametric copula, or vice versa.

Failure Mode

When marginals are discrete, the copula is not unique. There are infinitely many copulas consistent with the same joint distribution. This makes copula modeling for discrete data substantially more delicate.

Fréchet-Hoeffding Bounds

Statement

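For any copula $C$ and any $(u_1, \dots, u_d) \in [0,1]^d$:

$$\max\left(\sum_{i=1}^d u_i - (d - 1),\; 0\right) \le C(u_1, \dots, u_d) \le \min(u_1, \dots, u_d)$$

The upper bound (comonotonicity copula) is always a copula. The lower bound is a copula only when $d = 2$.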

Intuition

These are the tightest possible bounds on any copula. The upper bound corresponds to perfect positive dependence (all variables are increasing functions of a single random variable). The lower bound (in $d = 2$) corresponds to perfect negative dependence.

Proof Sketch

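The upper bound follows from $C(u_1, \dots, u_d) \le u_i$ for each $i$: the joint event $\{U_1 \le u_1, \dots, U_d \le u_d\}$ is contained in $\{U_i \le u_i\}$, which has probability $u_i$. The lower bound follows from the union bound (the first inclusion-exclusion inequality): $1 - C(u_1, \dots, u_d) = P\left(\bigcup_i \{U_i > u_i\}\right) \le \sum_i (1 - u_i)$, combined with $C \ge 0$.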

Why It Matters

These bounds provide sanity checks for copula estimation and define the extremes of dependence. Any copula must lie between these bounds pointwise.
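As a worked instance of such a sanity check, take the bivariate independence copula $\Pi(u, v) = uv$ (a standard example, not one introduced above). Both bounds can be verified directly for $u, v \in [0,1]$:

```latex
\begin{aligned}
uv &\le \min(u, v)
  && \text{since } u \le 1 \text{ and } v \le 1, \\
uv - (u + v - 1) &= (1 - u)(1 - v) \ge 0
  && \Rightarrow\quad uv \ge \max(u + v - 1,\, 0).
\end{aligned}
```

The second line also uses $uv \ge 0$ to cover the case where $u + v - 1$ is negative.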

Failure Mode

In dimensions $d \ge 3$, the lower bound is not a valid copula. There is no single copula that achieves maximal negative dependence among all pairs simultaneously when $d \ge 3$.
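The failure in three dimensions can be seen concretely: the lower bound $W(u) = \max(\sum_i u_i - (d-1), 0)$ assigns negative mass to the box $[1/2, 1]^3$, so it cannot be a CDF. A small sketch, with hypothetical helper names `frechet_lower` and `box_volume`:

```python
import itertools
import numpy as np

def frechet_lower(u):
    """Frechet-Hoeffding lower bound W(u) = max(sum(u) - (d - 1), 0)."""
    u = np.asarray(u, dtype=float)
    return max(u.sum() - (len(u) - 1), 0.0)

def box_volume(C, lower, upper):
    """Mass that C assigns to the box [lower, upper], by inclusion-exclusion over vertices."""
    d = len(lower)
    total = 0.0
    for picks in itertools.product((0, 1), repeat=d):
        vertex = [upper[i] if p else lower[i] for i, p in enumerate(picks)]
        sign = (-1) ** (d - sum(picks))   # one factor of -1 per lower endpoint
        total += sign * C(vertex)
    return total

vol_2d = box_volume(frechet_lower, [0.5, 0.5], [1.0, 1.0])
vol_3d = box_volume(frechet_lower, [0.5, 0.5, 0.5], [1.0, 1.0, 1.0])
print("d = 2 volume:", vol_2d)
print("d = 3 volume:", vol_3d)
```

In two dimensions the volume is nonnegative, while in three dimensions the same box receives negative mass, which violates the $d$-increasing property.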

Common Copula Families

Gaussian Copula

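The Gaussian copula with correlation matrix $R$ is:

$$C_R(u_1, \dots, u_d) = \Phi_R\left(\Phi^{-1}(u_1), \dots, \Phi^{-1}(u_d)\right)$$

where $\Phi_R$ is the joint CDF of a multivariate normal with correlation $R$ and $\Phi^{-1}$ is the standard normal quantile function. The Gaussian copula has zero tail dependence (for correlations strictly below 1): as the threshold becomes more extreme, the conditional probability that one variable is extreme given that another is extreme goes to zero.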
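A bivariate Gaussian copula can be evaluated directly from this definition. The sketch below uses `scipy.stats.multivariate_normal` for the joint normal CDF; the function name `gaussian_copula_cdf` is hypothetical, and the inputs are assumed to lie strictly inside $(0, 1)$ so the normal quantiles are finite.

```python
import numpy as np
from scipy import stats

def gaussian_copula_cdf(u, v, rho):
    """Bivariate Gaussian copula at (u, v) with correlation rho, for 0 < u, v < 1."""
    z = np.array([stats.norm.ppf(u), stats.norm.ppf(v)])   # normal quantiles
    cov = np.array([[1.0, rho], [rho, 1.0]])
    # Joint normal CDF (computed by numerical integration, so only approximate).
    return float(stats.multivariate_normal(mean=[0.0, 0.0], cov=cov).cdf(z))

# With rho = 0 the copula reduces to independence: C(u, v) = u * v.
c_indep = gaussian_copula_cdf(0.3, 0.7, rho=0.0)

# At the median, the orthant formula gives 1/4 + arcsin(rho) / (2 * pi).
c_median = gaussian_copula_cdf(0.5, 0.5, rho=0.5)

print(f"C(0.3, 0.7; rho=0)   = {c_indep:.4f}")
print(f"C(0.5, 0.5; rho=0.5) = {c_median:.4f}")
```

The two checks compare against closed-form values, which is a quick way to catch a mis-specified correlation matrix.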

Clayton Copula

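The Clayton copula (bivariate) with parameter $\theta > 0$ is:

$$C_\theta(u, v) = \left(u^{-\theta} + v^{-\theta} - 1\right)^{-1/\theta}$$

It has lower tail dependence $\lambda_L = 2^{-1/\theta}$ and zero upper tail dependence. It captures the tendency for variables to crash together.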
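The lower tail dependence of the Clayton copula can be checked numerically: the ratio $C_\theta(u, u) / u$, which equals $P(U_2 \le u \mid U_1 \le u)$, should approach $2^{-1/\theta}$ as $u \to 0$. A minimal sketch, with $\theta = 2$ as an arbitrary illustrative value:

```python
def clayton(u, v, theta):
    """Bivariate Clayton copula for theta > 0 and 0 < u, v <= 1."""
    return (u ** (-theta) + v ** (-theta) - 1.0) ** (-1.0 / theta)

theta = 2.0
limit = 2.0 ** (-1.0 / theta)

# P(U2 <= u | U1 <= u) = C(u, u) / u for shrinking thresholds u.
ratios = {u: clayton(u, u, theta) / u for u in (1e-2, 1e-4, 1e-6)}
for u, r in ratios.items():
    print(f"u={u:.0e}  C(u,u)/u={r:.6f}")
print(f"2^(-1/theta) = {limit:.6f}")
```

Larger $\theta$ pushes the limit toward one, meaning joint crashes become almost certain once one variable crashes.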

Gumbel Copula

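The Gumbel copula with parameter $\theta \ge 1$ is:

$$C_\theta(u, v) = \exp\left(-\left[(-\ln u)^\theta + (-\ln v)^\theta\right]^{1/\theta}\right)$$

It has upper tail dependence $\lambda_U = 2 - 2^{1/\theta}$ and zero lower tail dependence. It captures the tendency for variables to boom together.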
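A similar numerical check works for the Gumbel copula in the upper tail. The sketch uses the identity $P(U_1 > u, U_2 > u) = 1 - 2u + C(u, u)$, so the conditional probability of a joint exceedance is $(1 - 2u + C(u, u)) / (1 - u)$, which should approach $2 - 2^{1/\theta}$ as $u \to 1$. The value $\theta = 2$ is an arbitrary illustrative choice.

```python
import numpy as np

def gumbel(u, v, theta):
    """Bivariate Gumbel copula for theta >= 1 and 0 < u, v < 1."""
    s = (-np.log(u)) ** theta + (-np.log(v)) ** theta
    return np.exp(-s ** (1.0 / theta))

theta = 2.0
limit = 2.0 - 2.0 ** (1.0 / theta)

def upper_tail_ratio(u):
    # P(U2 > u | U1 > u), written in terms of the copula diagonal.
    return (1.0 - 2.0 * u + gumbel(u, u, theta)) / (1.0 - u)

for u in (0.99, 0.9999, 0.999999):
    print(f"u={u}  ratio={upper_tail_ratio(u):.6f}")
print(f"2 - 2^(1/theta) = {limit:.6f}")

# theta = 1 recovers the independence copula C(u, v) = u * v.
print(gumbel(0.3, 0.7, 1.0))
```

At $\theta = 1$ the limit is zero, consistent with independence having no tail dependence.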

Core Definitions

Tail Dependence

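The upper tail dependence coefficient is:

$$\lambda_U = \lim_{u \to 1^-} P(U_2 > u \mid U_1 > u)$$

The lower tail dependence coefficient is:

$$\lambda_L = \lim_{u \to 0^+} P(U_2 \le u \mid U_1 \le u)$$

where $(U_1, U_2)$ follow the copula $C$. These measure the probability of joint extremes. Tail dependence connects to the study of concentration inequalities and extreme value behavior.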
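For computation, both coefficients can be rewritten in terms of the copula's diagonal. This is a standard identity, stated here as a sketch: it uses $P(U_1 \le u, U_2 \le u) = C(u, u)$, $P(U_1 \le u) = u$, and $P(U_1 > u, U_2 > u) = 1 - 2u + C(u, u)$.

```latex
\lambda_L = \lim_{u \to 0^+} \frac{C(u, u)}{u},
\qquad
\lambda_U = \lim_{u \to 1^-} \frac{1 - 2u + C(u, u)}{1 - u}.
```

These forms make it possible to read off tail dependence from a closed-form copula without simulating.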

Canonical Examples

Gaussian copula misses tail risk

Suppose two asset returns have a Gaussian copula with correlation $\rho$. The probability of both assets losing more than 3 standard deviations is much lower under the Gaussian copula than under a $t$-copula with the same rank correlation, because the Gaussian copula has $\lambda_L = 0$ while the $t$-copula has $\lambda_L > 0$. Using the Gaussian copula can lead to severe underestimation of joint crash risk.
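A Monte Carlo sketch of this comparison follows. The correlation $\rho = 0.5$, the degrees of freedom $\nu = 3$, and the sample size are assumptions made for illustration only. The two copulas share the same correlation parameter and therefore the same Kendall's tau, $(2/\pi)\arcsin\rho$. The threshold is the copula-scale level $q = \Phi(-3)$, the probability of a 3-standard-deviation loss under a normal marginal.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(3)
rho, nu, n = 0.5, 3, 1_000_000       # illustrative assumptions, not from the text
q = stats.norm.cdf(-3.0)             # copula-scale level of a 3-sigma loss

cov = np.array([[1.0, rho], [rho, 1.0]])
z = rng.multivariate_normal([0.0, 0.0], cov, size=n)

# Gaussian copula: both uniforms below q  <=>  both normals below the normal q-quantile.
p_gauss = np.mean(np.all(z < stats.norm.ppf(q), axis=1))

# t-copula: latent t vector = normal / sqrt(chi2 / nu), compared with the t q-quantile.
# The same normal draws are reused; the chi-square mixing variable is independent of them.
w = rng.chisquare(nu, size=n) / nu
t_latent = z / np.sqrt(w)[:, None]
p_t = np.mean(np.all(t_latent < stats.t.ppf(q, df=nu), axis=1))

print(f"P(both below q), Gaussian copula:     {p_gauss:.2e}")
print(f"P(both below q), t-copula (nu={nu}):   {p_t:.2e}")
```

Under these assumptions the $t$-copula estimate should come out several times larger than the Gaussian one, even though each marginal exceedance probability is the same $q$ in both models.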

Common Confusions

Copulas are not just about correlation

A copula captures the full dependence structure, not just linear correlation. Two distributions with the same Pearson correlation can have very different copulas. Rank correlations (Kendall's $\tau$, Spearman's $\rho$) are functions of the copula alone, but even they do not fully determine it.
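Because rank correlations depend only on the copula, they are unchanged by strictly increasing transformations of each variable, whereas Pearson correlation is not. A small sketch, assuming a bivariate normal sample with correlation 0.7 and the illustrative transforms $e^x$ and $y^3$:

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(4)
cov = [[1.0, 0.7], [0.7, 1.0]]
z = rng.multivariate_normal([0.0, 0.0], cov, size=5_000)
x, y = z[:, 0], z[:, 1]

# Strictly increasing transforms change the marginals but not the copula.
x_t, y_t = np.exp(x), y**3

tau_before, _ = stats.kendalltau(x, y)
tau_after, _ = stats.kendalltau(x_t, y_t)
r_before = np.corrcoef(x, y)[0, 1]
r_after = np.corrcoef(x_t, y_t)[0, 1]

print(f"Kendall tau: before={tau_before:.4f}  after={tau_after:.4f}")
print(f"Pearson r:   before={r_before:.4f}  after={r_after:.4f}")
```

Kendall's tau is computed from the ordering of the observations, which the transforms preserve, so only the Pearson value moves.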

The Gaussian copula is not the same as a Gaussian distribution

A Gaussian copula paired with non-Gaussian marginals produces a non-Gaussian joint distribution. The copula only borrows the dependence structure of the Gaussian, not its marginal shape.

Summary

- Sklar's theorem: any joint CDF = copula composed with marginals

- Copulas separate dependence structure from marginal behavior

- Gaussian copulas have zero tail dependence, dangerous for risk

- Clayton captures lower tail dependence, Gumbel captures upper

- Fréchet-Hoeffding bounds define the extremes of possible dependence

Exercises

Problem

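Let $(X_1, X_2)$ have a Gaussian copula with correlation $\rho$ and both marginals standard exponential. Write the joint CDF in terms of $\Phi_\rho$ (the bivariate normal CDF with correlation $\rho$), $\Phi^{-1}$, and the exponential CDF $F(x) = 1 - e^{-x}$.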

Problem

Show that the Clayton copula has lower tail dependence coefficient $\lambda_L = 2^{-1/\theta}$.

References

Canonical:

- Nelsen, An Introduction to Copulas (2006), Chapters 2-5

- Joe, Dependence Modeling with Copulas (2014)

Current:

- McNeil, Frey, Embrechts, Quantitative Risk Management (2015), Chapter 7

- Czado, Analyzing Dependent Data with Vine Copulas (2019), Chapters 1-5

- Aas, Czado, Frigessi, Bakken, "Pair-copula constructions of multiple dependence," Insurance: Mathematics and Economics 44 (2009), 182-198

Next Topics

Natural extensions from copulas:

- Vine copulas: flexible high-dimensional dependence via pair-copula constructions

- Tail dependence estimation: nonparametric approaches to measuring extremal dependence

Last reviewed: April 13, 2026
