Algorithms Foundations

Fast Fourier Transform

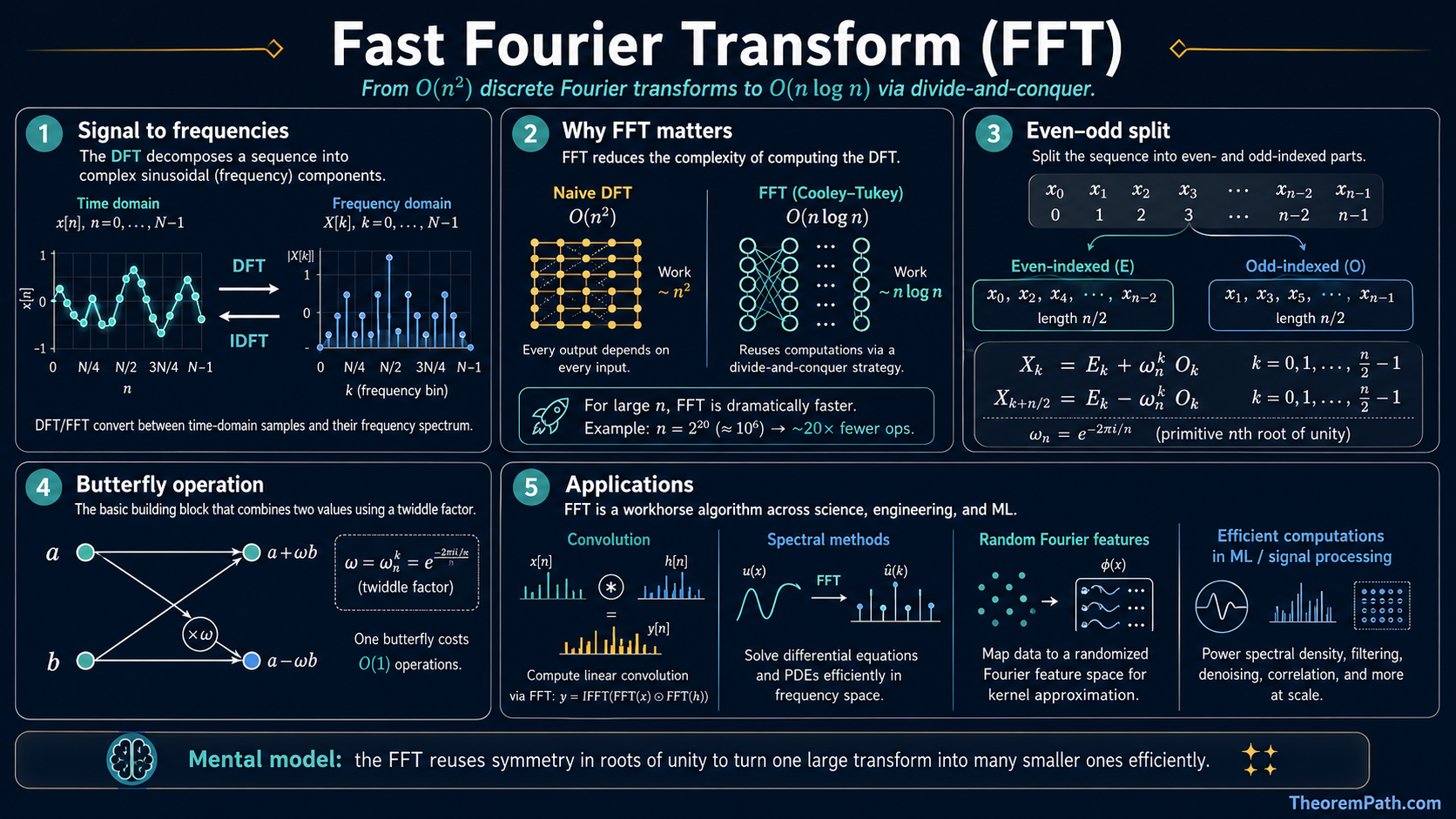

The Cooley-Tukey FFT reduces the discrete Fourier transform from O(n²) to O(n log n), enabling efficient convolution, spectral methods, and Fourier features for kernel approximation.

Why This Matters

The FFT is one of the most important algorithms in all of computational science. For ML specifically: convolutions in CNNs can be computed via FFT, spectral clustering uses eigenvalues of matrices constructed with Fourier-like tools, random Fourier features approximate kernel functions by sampling from the Fourier transform of the kernel, and some efficient attention mechanisms replace quadratic dot-product attention with FFT-based operations.

Hide overviewShow overview

The FFT takes and makes it . Any time you see convolution, correlation, or spectral analysis, the FFT is doing the work underneath.

The Discrete Fourier Transform

Discrete Fourier Transform

Given a sequence , the DFT produces a sequence defined by:

The inverse DFT recovers from :

The DFT decomposes a signal into its frequency components. Each measures how much the signal oscillates at frequency . The naive computation of all values of requires multiplications each, totaling .

Roots of Unity

The -th root of unity is . The DFT can be written as . The key algebraic property: and (when is even). These symmetries are what make the FFT possible.

The Cooley-Tukey FFT

The idea: split the DFT into two half-sized DFTs by separating even-indexed and odd-indexed terms.

Write with even indices and odd indices :

Since , each sum is a DFT of size . Let be the DFT of the even-indexed elements and the DFT of the odd-indexed elements. Then:

This is the butterfly operation. Two DFTs of size plus combining work.

FFT Complexity

Statement

The Cooley-Tukey FFT computes the DFT of complex numbers in arithmetic operations.

Intuition

Each level of recursion halves the problem size and does work for the butterfly combines. There are levels, so total work is .

Proof Sketch

The recurrence is . By the Master theorem with , , : since , we get .

Why It Matters

Reducing to is the difference between feasible and infeasible for large signals. For , this is a factor of roughly . Without the FFT, digital signal processing, medical imaging, and spectral methods in ML would be impractical.

Failure Mode

The standard radix-2 Cooley-Tukey requires to be a power of 2. For other sizes, you can pad with zeros (which changes the implicit periodicity) or use mixed-radix FFT variants. Numerical precision also degrades slightly with very large due to accumulation of floating-point errors in the twiddle factors .

The Convolution Theorem

This is the reason the FFT is so important for ML.

Circular Convolution

The circular convolution of two length- sequences and is:

Naive computation: .

Convolution Theorem

Statement

Let and . Then:

where denotes pointwise multiplication. Equivalently, .

Intuition

Convolution in the time domain becomes pointwise multiplication in the frequency domain. This converts an operation into three FFTs plus pointwise multiplications.

Proof Sketch

Direct computation. Write out , substitute the convolution definition, swap the order of summation, and recognize the inner sum as .

Why It Matters

This is the workhorse behind efficient convolution in signal processing and CNNs. Any convolution with a filter of length applied to a signal of length can be done in instead of by zero-padding to length and using FFTs.

Failure Mode

The theorem applies to circular convolution. For linear convolution (which is what CNNs actually compute), you must zero-pad the inputs to avoid wraparound artifacts. Also, for small filter sizes , direct convolution may beat FFT-based convolution due to FFT overhead.

Connections to ML

CNNs: Convolution layers apply filters to input feature maps. For large spatial dimensions, FFT-based convolution is faster than direct convolution. Libraries like cuDNN choose between direct and FFT implementations based on filter and input size.

Fourier features: The random Fourier features method (Rahimi and Recht, 2007) approximates a shift-invariant kernel by sampling from the Fourier transform of . This relies on Bochner's theorem: a continuous shift-invariant positive definite kernel is the Fourier transform of a non-negative measure.

Efficient attention: Several methods (FNet, for example) replace the self-attention mechanism with Fourier transforms along the sequence dimension, achieving complexity instead of for sequence length .

Spectral methods: Graph neural networks and spectral clustering use eigendecompositions and Fourier transforms on graphs. The graph Fourier transform generalizes the classical DFT to non-Euclidean domains.

Common Confusions

FFT computes the DFT, it is not a different transform

The FFT is an algorithm for computing the DFT. The DFT is the mathematical transform. The FFT and naive DFT produce exactly the same output. The FFT just does it faster.

Circular vs linear convolution

The convolution theorem gives circular convolution, not linear. In CNNs and signal processing, we typically want linear convolution. You must zero-pad to at least length before applying the FFT to get the correct linear convolution result.

FFT is not always faster for small filters

For a 3x3 convolution filter on a 224x224 image (common in CNNs), direct convolution is faster than FFT. The FFT advantage kicks in for larger filters or when computing many convolutions simultaneously.

Summary

- DFT: transforms time domain to frequency domain. Naive cost:

- FFT (Cooley-Tukey): computes DFT in by divide and conquer on even/odd indices

- Convolution theorem: convolution becomes pointwise multiply in frequency domain

- For ML: efficient convolution, random Fourier features, efficient attention, spectral methods

- Practical consideration: FFT beats direct convolution only when filter or signal size is large enough

Exercises

Problem

Compute the DFT of the sequence by hand. Verify that for .

Problem

You need to convolve a signal of length with a filter of length . Compare the operation counts of direct convolution vs FFT-based convolution.

References

Canonical:

- Cooley & Tukey, "An Algorithm for the Machine Calculation of Complex Fourier Series" (1965)

- Cormen, Leiserson, Rivest, Stein, Introduction to Algorithms (CLRS), Chapter 30

Current:

- Oppenheim & Willsky, Signals and Systems, Chapter 8

- Rahimi & Recht, "Random Features for Large-Scale Kernel Machines" (2007)

- Lee-Thorp et al., "FNet: Mixing Tokens with Fourier Transforms" (2021), arXiv:2105.03824

- Bluestein, "A Linear Filtering Approach to the Computation of the Discrete Fourier Transform" (1968)

Next Topics

- Efficient attention variants: some use FFT to avoid quadratic attention cost

- Convolutional neural networks: where convolution meets deep learning

Last reviewed: April 14, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

2- Exponential Function Propertieslayer 0A · tier 1

- Complex Numbers for Fourierlayer 0A · tier 2

Derived topics

4- PDE Fundamentals for Machine Learninglayer 1 · tier 2

- Convolutional Neural Networkslayer 3 · tier 2

- Fourier Neural Operatorlayer 3 · tier 2

- Attention Variants and Efficiencylayer 4 · tier 2