
Mean Field Theory

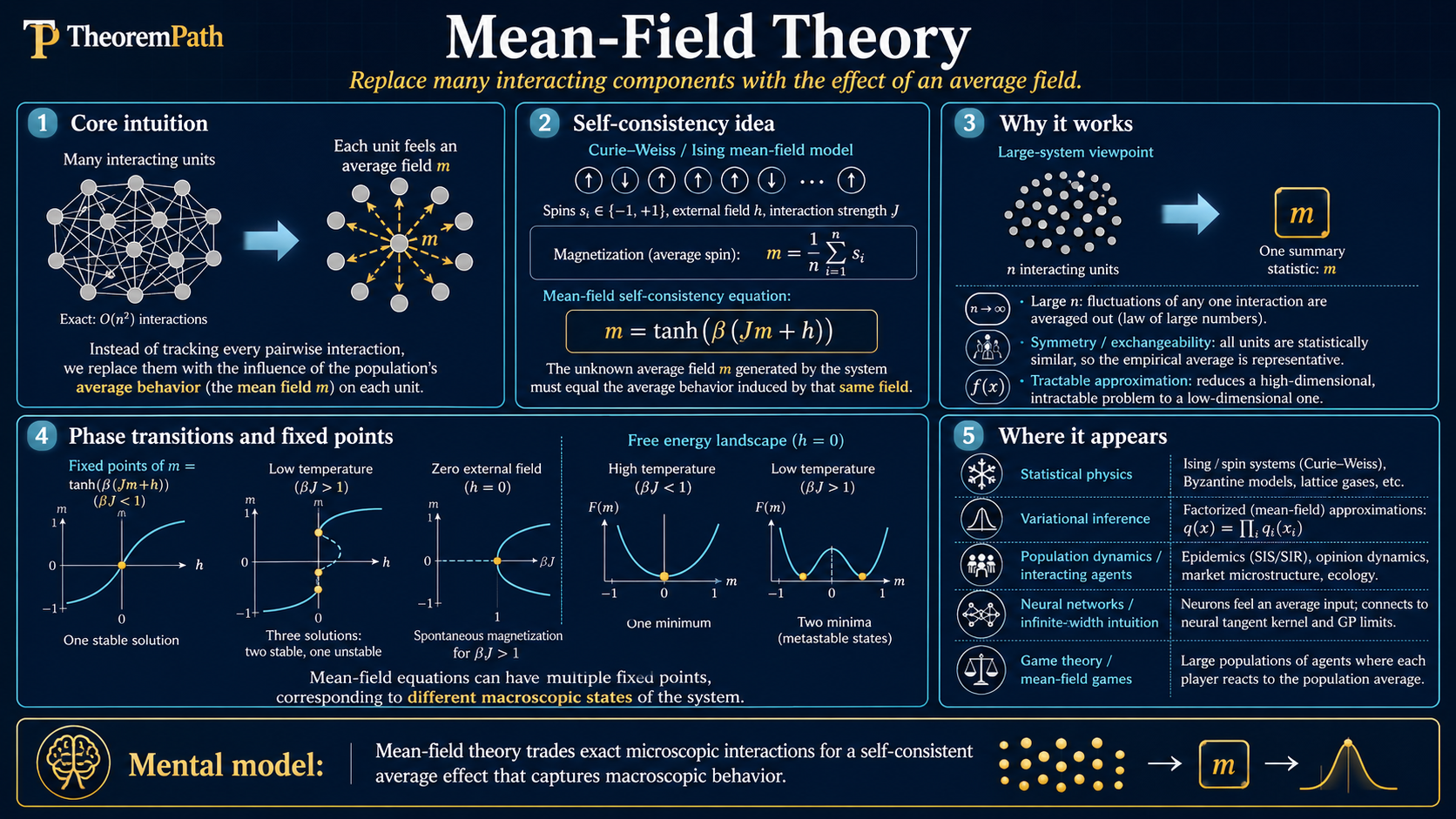

The mean field limit of neural networks: as width goes to infinity under the right scaling, neurons become independent particles whose weight distribution evolves by Wasserstein gradient flow, capturing feature learning that the NTK regime misses.


Why This Matters

The Neural Tangent Kernel shows that infinitely wide networks in the lazy regime behave like kernel methods --- but this is precisely the regime where networks do not learn features. Practical neural networks learn representations, and NTK cannot explain this.


Mean field theory provides an alternative infinite-width limit that does capture feature learning. Under a different parameterization (mean field scaling instead of NTK scaling), the network weights move substantially during training. In the infinite-width limit, individual neurons become independent, and the distribution of weights evolves according to a partial differential equation --- specifically, a Wasserstein gradient flow.

This makes mean field theory one of the main theoretical frameworks for understanding what happens beyond the kernel regime.

Mental Model

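Think of a two-layer neural network as a collection of $N$ "particles" (neurons), each with a weight vector $\theta_i$. Each particle contributes $\frac{1}{N}\phi(x; \theta_i)$ to the output. As $N \to \infty$, the sum becomes an integral over a probability distribution $\rho$ of weights:

$$f(x; \rho) = \int \phi(x; \theta)\, d\rho(\theta)$$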

Training the network is equivalent to moving the particles by gradient descent. In the infinite-width limit, this becomes evolving the distribution by a continuous flow. The distribution moves in the direction that decreases the loss fastest --- this is Wasserstein gradient flow.

The key difference from NTK: in NTK, each particle barely moves (order $1/\sqrt{N}$ displacement). In mean field, particles move substantially (order 1 displacement). This substantial movement is what enables feature learning.

Formal Setup

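Consider a two-layer neural network:

$$f(x; \theta) = \frac{1}{N} \sum_{i=1}^{N} a_i\, \sigma(w_i^\top x)$$

where $\theta_i = (a_i, w_i)$ are the parameters of neuron $i$, and $\sigma$ is an activation function (e.g., ReLU).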

Mean Field Parameterization

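In the mean field parameterization, the network output scales as $1/N$ (one factor of width in the denominator):

$$f(x; \theta) = \frac{1}{N} \sum_{i=1}^{N} \phi(x; \theta_i)$$

where $\phi(x; \theta_i) = a_i\, \sigma(w_i^\top x)$ is the contribution of a single neuron with parameters $\theta_i = (a_i, w_i)$.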

Compare to NTK parameterization: $f(x; \theta) = \frac{1}{\sqrt{N}} \sum_{i=1}^{N} a_i\, \sigma(w_i^\top x)$, where the output scales as $1/\sqrt{N}$ and both $a_i$ and $w_i$ are trained, but each parameter moves only $O(1/\sqrt{N})$ during training. The distinct setup in which only $a$ is trained while the random hidden weights $w$ are frozen is the random-features model, not NTK.

The $1/N$ scaling in mean field is crucial: it means each neuron's contribution is order $1/N$, so the network depends on the distribution of neurons, not on any individual one.
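The difference between the two scalings can be checked numerically. The sketch below is illustrative only: the function names, the Gaussian initialization, the ReLU activation, and the sizes `n` and `d` are assumptions made for the example. It evaluates the same set of neurons under both normalizations and measures how far the output moves when the output weight of a single neuron is bumped by 1.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def neuron_contributions(x, a, w):
    """Per-neuron terms a_i * relu(w_i . x), shape (N,)."""
    return a * relu(w @ x)

def mean_field_output(x, a, w):
    """Mean field scaling: (1/N) * sum over neurons."""
    return neuron_contributions(x, a, w).mean()

def ntk_output(x, a, w):
    """NTK scaling: (1/sqrt(N)) * sum over neurons."""
    return neuron_contributions(x, a, w).sum() / np.sqrt(a.shape[0])

# Assumed setup for illustration: standard Gaussian weights and input.
rng = np.random.default_rng(0)
n, d = 1000, 3
x = rng.normal(size=d)
a = rng.normal(size=n)
w = rng.normal(size=(n, d))

f_mf = mean_field_output(x, a, w)
f_ntk = ntk_output(x, a, w)

# Bump the output weight of neuron 0 by 1 and measure the change in the output.
a_bumped = a.copy()
a_bumped[0] += 1.0
delta_mf = mean_field_output(x, a_bumped, w) - f_mf
delta_ntk = ntk_output(x, a_bumped, w) - f_ntk

print(f"mean field: f = {f_mf:+.4f}, effect of one neuron = {delta_mf:.2e}")
print(f"NTK:        f = {f_ntk:+.4f}, effect of one neuron = {delta_ntk:.2e}")
```

The single-neuron effect under mean field scaling is exactly a factor $\sqrt{N}$ smaller than under NTK scaling, which is why an individual neuron can move by order 1 without changing the output by more than order $1/N$.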

Empirical Measure of Neurons

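The empirical measure of the neuron parameters is:

$$\hat{\rho}_N = \frac{1}{N} \sum_{i=1}^{N} \delta_{\theta_i}$$

where $\delta_{\theta_i}$ is a point mass at $\theta_i$. As $N \to \infty$, if the neurons are initialized i.i.d. from some distribution $\rho_0$, then $\hat{\rho}_N \to \rho_0$ by the law of large numbers. The network output becomes:

$$f(x; \rho) = \int \phi(x; \theta)\, d\rho(\theta)$$

This is a linear functional of the measure $\rho$.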
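The law-of-large-numbers statement can be illustrated with a small Monte Carlo experiment. The sketch below assumes a specific initial distribution chosen only because its integral has a closed form: scalar input, ReLU activation, output weights $a \sim \mathcal{N}(1, 1)$ independent of hidden weights $w \sim \mathcal{N}(0, 1)$. Under that assumption the limiting output at $x > 0$ is $\mathbb{E}[a]\,\mathbb{E}[\max(w, 0)]\,x = x / \sqrt{2\pi}$.

```python
import numpy as np

def mean_field_output(x, a, w):
    """f(x; rho_hat_N) = (1/N) * sum_i a_i * relu(w_i * x) for scalar x."""
    return np.mean(a * np.maximum(w * x, 0.0))

rng = np.random.default_rng(0)
x = 1.0

# Closed-form limit under the assumed rho_0 (a ~ N(1, 1), w ~ N(0, 1), independent).
limit = x / np.sqrt(2.0 * np.pi)

errors = {}
for n in (100, 10_000, 1_000_000):
    a = rng.normal(loc=1.0, scale=1.0, size=n)
    w = rng.normal(size=n)
    errors[n] = abs(mean_field_output(x, a, w) - limit)
    print(f"N = {n:>9,d}   |f - limit| = {errors[n]:.5f}")
```

The error is a random quantity, so individual runs need not shrink monotonically, but its typical size falls like $1/\sqrt{N}$.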

Wasserstein Gradient Flow

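The Wasserstein gradient flow is the continuous-time evolution of a probability measure $\rho_t$ that follows the steepest descent direction of a functional $F[\rho]$ in the Wasserstein-2 metric space:

$$\partial_t \rho_t = \nabla_\theta \cdot \left( \rho_t\, \nabla_\theta \frac{\delta F}{\delta \rho}[\rho_t] \right)$$

where $\frac{\delta F}{\delta \rho}$ is the first variation (functional derivative) of $F$ with respect to $\rho$.

In the neural network context, $F$ is the training loss viewed as a functional of the weight distribution. For a per-example loss $\ell$ and single-neuron output $\phi(x; \theta)$, the first variation at a point $\theta$ is $\frac{\delta F}{\delta \rho}[\rho](\theta) = \mathbb{E}_{(x, y)}\left[ \partial_f \ell(f(x; \rho), y)\, \phi(x; \theta) \right]$, evaluated at the current distribution. Its gradient in $\theta$ is, up to a factor of $N$, the gradient of the loss with respect to a single neuron's parameters.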
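The link between the first variation and per-neuron gradients can be verified by finite differences. The following is a minimal sketch under stated assumptions: scalar inputs, a smooth `tanh` activation, squared loss averaged over a small hypothetical dataset, and a network written as $\frac{1}{N}\sum_i a_i \tanh(w_i x)$. All function names are made up for the example. It checks that $N$ times the gradient of the finite-width loss with respect to one neuron's parameters matches the $\theta$-gradient of the first variation at that neuron.

```python
import numpy as np

def output(a, w, xs):
    """Mean field output f(x_k) = (1/N) sum_i a_i tanh(w_i x_k), shape (m,)."""
    return (a[:, None] * np.tanh(w[:, None] * xs[None, :])).mean(axis=0)

def loss(a, w, xs, ys):
    """Squared loss F = (1/(2m)) sum_k (f(x_k) - y_k)^2."""
    return 0.5 * np.mean((output(a, w, xs) - ys) ** 2)

def grad_first_variation(a, w, xs, ys):
    """Gradient in theta = (a, w) of the first variation, evaluated at each neuron."""
    r = output(a, w, xs) - ys                   # residuals, shape (m,)
    t = np.tanh(w[:, None] * xs[None, :])       # shape (N, m)
    g_a = (t * r).mean(axis=1)
    g_w = (a[:, None] * (1.0 - t**2) * xs * r).mean(axis=1)
    return g_a, g_w

def bump(vec, index, delta):
    """Return a copy of vec with one coordinate shifted by delta."""
    out = vec.copy()
    out[index] += delta
    return out

# Assumed toy data and initialization.
rng = np.random.default_rng(0)
n = 50
a, w = rng.normal(size=n), rng.normal(size=n)
xs = np.linspace(-1.0, 1.0, 20)
ys = np.tanh(3.0 * xs)

g_a, g_w = grad_first_variation(a, w, xs, ys)

# Central finite differences of the loss with respect to neuron 0's parameters.
eps = 1e-5
fd_a = (loss(bump(a, 0, eps), w, xs, ys) - loss(bump(a, 0, -eps), w, xs, ys)) / (2 * eps)
fd_w = (loss(a, bump(w, 0, eps), xs, ys) - loss(a, bump(w, 0, -eps), xs, ys)) / (2 * eps)

print("a-component:", n * fd_a, "vs", g_a[0])
print("w-component:", n * fd_w, "vs", g_w[0])
```

The factor of $N$ is the reason mean field training is usually described with time (or learning rate) rescaled by the width.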

Main Theorems

Mean Field Limit for Two-Layer Networks

Statement

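Consider a two-layer network with $N$ neurons trained by gradient flow on loss $F$. Under regularity conditions on the activation $\sigma$ and the initial distribution $\rho_0$, as $N \to \infty$:

- The empirical measure $\hat{\rho}_N(t)$ converges weakly to a deterministic measure $\rho_t$ for all $t \in [0, T]$

- The limiting measure satisfies the mean field PDE: $\partial_t \rho_t = \nabla_\theta \cdot \left( \rho_t\, \nabla_\theta \frac{\delta F}{\delta \rho}[\rho_t] \right)$

- The network output converges to $f(x; \rho_t) = \int \phi(x; \theta)\, d\rho_t(\theta)$

- Each neuron evolves independently in the limit, following: $\dot{\theta}_t = -\nabla_\theta \frac{\delta F}{\delta \rho}[\rho_t](\theta_t)$, where $\rho_t$ is the population-level distribution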

Intuition

The $1/N$ scaling means each individual neuron has a vanishing effect on the total output. As $N \to \infty$, changing one neuron does not affect the loss gradient seen by other neurons. This is the "propagation of chaos" phenomenon: interacting particles become independent in the many-particle limit. Each neuron follows its own gradient as if the distribution $\rho_t$ were fixed, but $\rho_t$ itself evolves self-consistently as the aggregate of all neurons.

This is analogous to how individual gas molecules become effectively independent in the thermodynamic limit, even though they all interact via the mean field.
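The particle picture translates directly into a simulation: each neuron is moved along the negative gradient of the first variation, and the first variation is recomputed from the current empirical measure at every step. The sketch below is one possible forward-Euler discretization, not the construction used in the proofs. The `tanh` activation, squared loss, target `tanh(3x)`, grid of inputs, step size `dt`, and the function name `simulate` are all assumptions made for illustration.

```python
import numpy as np

def simulate(n=200, steps=2000, dt=0.05, seed=0):
    """Euler discretization of the particle dynamics for f(x) = (1/N) sum_i a_i tanh(w_i x)."""
    rng = np.random.default_rng(seed)
    a, w = rng.normal(size=n), rng.normal(size=n)   # i.i.d. initialization (assumed rho_0)
    xs = np.linspace(-1.0, 1.0, 20)                 # hypothetical training inputs
    ys = np.tanh(3.0 * xs)                          # hypothetical smooth target

    def residuals(a, w):
        t = np.tanh(w[:, None] * xs[None, :])       # shape (N, m)
        return t, (a[:, None] * t).mean(axis=0) - ys

    losses = []
    for _ in range(steps):
        t, r = residuals(a, w)
        losses.append(0.5 * np.mean(r**2))
        # Velocity of each particle: minus the theta-gradient of the first variation.
        # It depends on the other neurons only through the residuals r.
        v_a = -(t * r).mean(axis=1)
        v_w = -(a[:, None] * (1.0 - t**2) * xs * r).mean(axis=1)
        a = a + dt * v_a
        w = w + dt * v_w

    _, r = residuals(a, w)
    losses.append(0.5 * np.mean(r**2))
    return a, w, np.array(losses)

a, w, losses = simulate()
print(f"initial loss = {losses[0]:.4f}, final loss = {losses[-1]:.4f}")
```

Each particle's update uses only its own parameters and the shared residuals, which is the finite-$N$ counterpart of every neuron following its own gradient in a common mean field.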

Proof Sketch

The proof uses propagation of chaos techniques from mathematical physics:

Step 1: Show that the gradient update for neuron $i$ depends on the other neurons only through the empirical measure $\hat{\rho}_N$. The gradient is $\nabla_\theta \frac{\delta F}{\delta \rho}[\hat{\rho}_N](\theta_i)$.

Step 2: Show that $\hat{\rho}_N(t)$ concentrates around a deterministic trajectory $\rho_t$. This uses the law of large numbers for interacting particle systems: as $N \to \infty$, the empirical measure of i.i.d.-initialized particles undergoing mean-field interactions converges to the solution of the mean field PDE.

Step 3: Verify that the limiting PDE is well-posed (existence and uniqueness of solutions) under the regularity assumptions on $\sigma$.

Why It Matters

This theorem says that infinitely wide mean-field networks are described by a PDE, not by a kernel. The distribution $\rho_t$ evolves nontrivially during training: the neurons move to new locations in parameter space. This is feature learning: the features change during training because the hidden weights $w_i$ change. NTK theory, by contrast, freezes the features at their initialization.

The mean field limit shows that feature learning is not a finite-width artifact --- it persists at infinite width under the right scaling.

Failure Mode

The regularity conditions on $\sigma$ typically require smoothness, which excludes ReLU. Extensions to ReLU exist but require more delicate analysis. The convergence rate is typically $O(1/\sqrt{N})$ in Wasserstein distance, which is slow. More critically, the mean field limit applies cleanly only to two-layer networks. Extending to deep networks requires multi-layer mean field theories that are still under active development.

Training Loss Decreases Along Wasserstein Gradient Flow

Statement

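Along the Wasserstein gradient flow $\rho_t$, the training loss is non-increasing:

$$\frac{d}{dt} F[\rho_t] = -\int \left\| \nabla_\theta \frac{\delta F}{\delta \rho}[\rho_t](\theta) \right\|^2 d\rho_t(\theta) \leq 0$$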

The loss decreases at a rate proportional to the expected squared gradient norm under the current weight distribution.

Intuition

This is the infinite-width analogue of "gradient descent decreases the loss." Each neuron moves in its negative gradient direction, and the aggregate effect is a decrease in the loss. The rate of decrease depends on how large the gradients are on average under $\rho_t$. The flow stops (reaches a critical point) when the gradient is zero $\rho_t$-almost everywhere.

Proof Sketch

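By the chain rule in Wasserstein space:

$$\frac{d}{dt} F[\rho_t] = \int \frac{\delta F}{\delta \rho}[\rho_t](\theta)\, \partial_t \rho_t(\theta)\, d\theta$$

Substituting the mean field PDE and integrating by parts:

$$\frac{d}{dt} F[\rho_t] = \int \frac{\delta F}{\delta \rho}[\rho_t]\, \nabla_\theta \cdot \left( \rho_t\, \nabla_\theta \frac{\delta F}{\delta \rho}[\rho_t] \right) d\theta = -\int \left\| \nabla_\theta \frac{\delta F}{\delta \rho}[\rho_t] \right\|^2 \rho_t\, d\theta$$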

The integration by parts moves the divergence operator onto the first variation, producing the squared gradient norm with a negative sign.
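The dissipation identity has a finite-$N$ counterpart that can be checked numerically: after one very small Euler step of the particle dynamics, the change in loss divided by the step size should be close to minus the average squared particle velocity. The sketch below assumes the same illustrative setup as a toy problem (scalar input, `tanh` activation, squared loss, hypothetical target `tanh(3x)`); the helper names are made up for the example.

```python
import numpy as np

xs = np.linspace(-1.0, 1.0, 20)     # hypothetical training inputs
ys = np.tanh(3.0 * xs)              # hypothetical target values

def features_and_residuals(a, w):
    t = np.tanh(w[:, None] * xs[None, :])            # shape (N, m)
    return t, (a[:, None] * t).mean(axis=0) - ys     # f(x_k) - y_k

def loss(a, w):
    _, r = features_and_residuals(a, w)
    return 0.5 * np.mean(r**2)

def velocity(a, w):
    """Minus the theta-gradient of the first variation at each particle."""
    t, r = features_and_residuals(a, w)
    v_a = -(t * r).mean(axis=1)
    v_w = -(a[:, None] * (1.0 - t**2) * xs * r).mean(axis=1)
    return v_a, v_w

rng = np.random.default_rng(0)
n = 500
a, w = rng.normal(size=n), rng.normal(size=n)

v_a, v_w = velocity(a, w)
# Empirical version of the integral of the squared gradient norm against rho_t.
dissipation = np.mean(v_a**2 + v_w**2)

dt = 1e-4
rate = (loss(a + dt * v_a, w + dt * v_w) - loss(a, w)) / dt

print(f"finite-difference dF/dt = {rate:.6f}")
print(f"minus dissipation       = {-dissipation:.6f}")
```

The two numbers agree up to a discretization error of order `dt`, so shrinking the step tightens the match.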

Why It Matters

Non-increase of the loss along the flow is the analogue of monotonic loss decrease for finite-dimensional gradient descent. It does not by itself guarantee convergence to a critical point: that requires additional compactness or regularity assumptions (e.g., a Lyapunov argument plus tightness of $\{\rho_t\}$). Combined with global optimality results for over-parameterized mean field networks under specific structural assumptions, monotone descent is one ingredient in showing that gradient flow on infinitely wide networks finds good solutions. The key advantage over NTK is that whatever progress occurs happens while the features are being learned.

Failure Mode

The critical point reached by the flow may be a saddle point or local minimum, not a global minimum. Global convergence results require additional assumptions (e.g., convexity of the loss functional in Wasserstein space, or specific properties of the activation function).

Mean Field vs. NTK: The Central Comparison

| Property | NTK (Lazy Regime) | Mean Field (Rich Regime) |
|---|---|---|
| Parameterization | $1/\sqrt{N}$ scaling | $1/N$ scaling |
| Weight movement | $O(1/\sqrt{N})$, vanishing | $O(1)$, substantial |
| Features | Frozen at initialization | Learned during training |
| Infinite-width limit | Kernel regression (linear) | Wasserstein gradient flow (nonlinear) |
| Mathematical tool | Kernel theory, RKHS | PDE, optimal transport |
| Feature learning | No | Yes |
| Captures practice | Poorly | Better (but still limited) |

The fundamental reason for the difference: the NTK $1/\sqrt{N}$ scaling means that each neuron's output change during training is order $1/\sqrt{N}$, so the linearization of the network is accurate. The mean field $1/N$ scaling means the output depends on the average over neurons. Each neuron can move substantially (order 1) because its individual contribution to the output is only $1/N$.
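The weight-movement row of the comparison can be probed empirically by training the same small network under both scalings and measuring the mean per-neuron displacement at two widths. This is a rough sketch under several assumptions: scalar input, `tanh` activation, squared loss, a hypothetical target `tanh(3x)`, a paired initialization that makes the initial output zero in both cases, plain gradient descent with step `dt` (multiplied by $N$ in the mean field case), and made-up names such as `mean_displacement`.

```python
import numpy as np

def mean_displacement(n, scaling, steps=400, dt=0.05, seed=0):
    """Train with gradient descent and return the mean distance each neuron moved."""
    rng = np.random.default_rng(seed)
    half = n // 2
    a_half, w_half = rng.normal(size=half), rng.normal(size=half)
    # Paired neurons (a, w) and (-a, w) cancel, so the output is zero at initialization.
    a = np.concatenate([a_half, -a_half])
    w = np.concatenate([w_half, w_half])
    a0, w0 = a.copy(), w.copy()
    xs = np.linspace(-1.0, 1.0, 20)
    ys = np.tanh(3.0 * xs)

    if scaling == "mean_field":
        out_scale, lr = 1.0 / n, dt * n          # 1/N output, learning rate scaled by N
    elif scaling == "ntk":
        out_scale, lr = 1.0 / np.sqrt(n), dt     # 1/sqrt(N) output, O(1) learning rate
    else:
        raise ValueError(f"unknown scaling: {scaling}")

    for _ in range(steps):
        t = np.tanh(w[:, None] * xs[None, :])
        r = out_scale * (a[:, None] * t).sum(axis=0) - ys
        grad_a = out_scale * (t * r).mean(axis=1)
        grad_w = out_scale * (a[:, None] * (1.0 - t**2) * xs * r).mean(axis=1)
        a -= lr * grad_a
        w -= lr * grad_w

    return np.mean(np.sqrt((a - a0) ** 2 + (w - w0) ** 2))

disp = {}
for scaling in ("ntk", "mean_field"):
    for n in (100, 1600):
        disp[(scaling, n)] = mean_displacement(n, scaling)
        print(f"{scaling:>10s}  N = {n:>4d}  mean displacement = {disp[(scaling, n)]:.4f}")
```

With these settings the NTK displacement shrinks by roughly the factor $\sqrt{16} = 4$ when the width grows from 100 to 1600, while the mean field displacement stays at a comparable order-1 value.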

Canonical Examples

Mean field dynamics for a toy problem

Consider fitting a smoothed step $f^*$, for example $f^*(x) = \tanh(\beta x)$ for some moderate $\beta$, with a two-layer ReLU network on a bounded interval such as $[-1, 1]$. (We use a smoothed step rather than a hard step such as $\operatorname{sign}(x)$ because the standard mean-field theorems assume regularity that the discontinuous target violates.) Under NTK: the features stay essentially frozen at their random initialization, so the network can only learn a linear combination of these random features. This is like approximating a smooth step using a fixed random basis.

Under mean field: the weight distribution evolves so that neurons concentrate their kink locations near the transition region of $f^*$ (with a bias absorbed into the input). The neurons discover that placing features near the steep region is useful. This is feature learning in action: the network adapts its features to the target function, rather than relying on random features.

Common Confusions

Mean field does not mean mean field approximation from physics

In statistical physics, "mean field approximation" means replacing interactions with their average, an approximation that becomes exact in certain limits. In the neural network context, the mean field limit is not an approximation of a finite-width network. It is the exact limit as width goes to infinity under the $1/N$ scaling. The name comes from the same mathematical structure (propagation of chaos, interacting particle systems), but it is a theorem, not an approximation.

Mean field is not a replacement for NTK --- they describe different scaling regimes

NTK and mean field describe different limits of the same architecture with different parameterizations. Neither is "wrong." The question is which limit better describes the behavior of a practical network. For very wide networks with small learning rate, NTK is relevant. For networks that learn features (which includes most practical networks), the mean field perspective is more informative.

Mean field theory is currently limited to shallow networks

The cleanest mean field results are for two-layer networks. Deep mean field theory exists (e.g., through tensor programs) but is substantially more complex. The infinite-width limit for deep networks depends on the order in which layers are taken to infinity, and different orderings give different limits.

Summary

- Mean field parameterization uses $1/N$ scaling; NTK uses $1/\sqrt{N}$

- At infinite width, neurons become independent particles (propagation of chaos)

- The weight distribution evolves by Wasserstein gradient flow (a PDE)

- Mean field captures feature learning: weights move $O(1)$, not $O(1/\sqrt{N})$

- NTK freezes features (lazy regime); mean field learns features (rich regime)

- The mean field limit is a PDE, not a kernel --- structurally different mathematical object

- Currently best understood for two-layer networks; deep extensions are active research

- Mean field is a natural framework for understanding why neural networks can outperform kernel methods, though it still captures practice only partially

Exercises

Problem

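Consider a two-layer network $f(x) = \frac{1}{N} \sum_{i=1}^{N} a_i\, \sigma(w_i x)$ with scalar input $x \in \mathbb{R}$. Under the mean field limit, write the network output as an integral over the weight distribution $\rho$ and compute the first variation $\frac{\delta F}{\delta \rho}$ for the squared loss $F[\rho] = \frac{1}{2}\left(f(x_0; \rho) - y_0\right)^2$ at a single data point $(x_0, y_0)$.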

Problem

Explain why the NTK parameterization ($1/\sqrt{N}$ scaling) prevents feature learning in the infinite-width limit, while the mean field parameterization ($1/N$ scaling) allows it. Consider the magnitude of the gradient update for a single neuron's weight in both cases.

Problem

The mean field PDE is a Wasserstein gradient flow. Explain what it means for the loss functional $F[\rho]$ to be displacement convex in the Wasserstein-2 metric, and why displacement convexity would guarantee global convergence of the mean field dynamics to the global minimum. Does displacement convexity hold for typical neural network loss functionals?


References

Canonical:

- Mei, Montanari, Nguyen, "A Mean Field View of the Landscape of Two-Layer Neural Networks" (PNAS 2018)

- Chizat & Bach, "On the Global Convergence of Gradient Descent for Over-Parameterized Models using Optimal Transport" (NeurIPS 2018)

Current:

- Rotskoff & Vanden-Eijnden, "Trainability and Accuracy of Artificial Neural Networks" (CPAM 2022)

- Yang and Hu, "Tensor Programs" series (2020-2023) --- extends mean field ideas to deep networks via the feature learning limit

Next Topics

Mean field theory connects to:

- Neural Tangent Kernel: the contrasting lazy regime that mean field theory goes beyond

- Implicit bias and modern generalization: understanding what solutions mean field dynamics converge to

Last reviewed: April 26, 2026
