Numerical Optimization

Mirror Descent and Frank-Wolfe

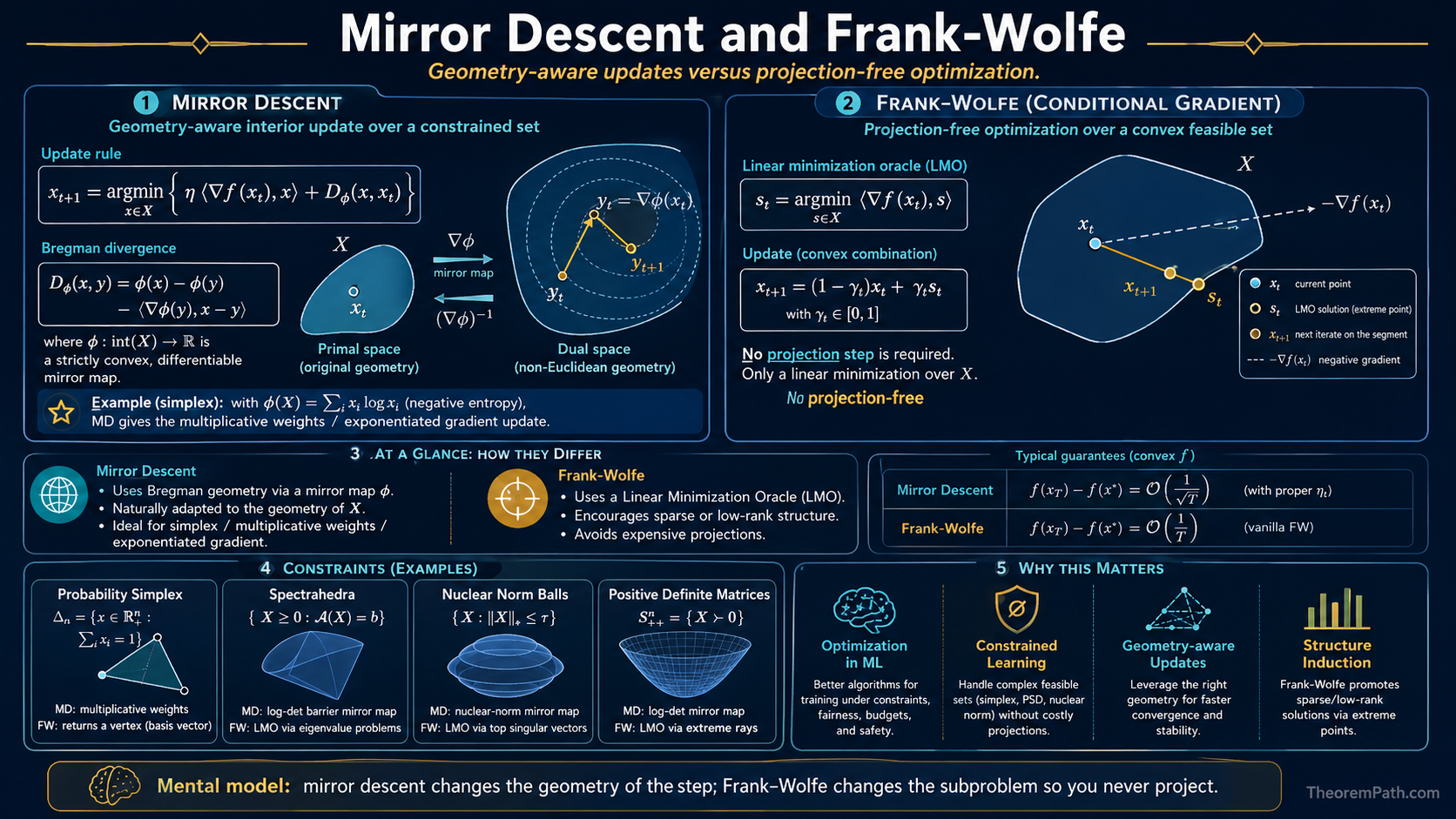

Mirror descent generalizes gradient descent via Bregman divergences, recovering multiplicative weights and exponentiated gradient as special cases. Frank-Wolfe replaces projections with linear minimization, making it projection-free.

Prerequisites

Why This Matters

Standard gradient descent uses the Euclidean distance to measure how far you move at each step. This is natural for unconstrained optimization in , but it is often the wrong geometry for constrained problems. Optimizing over the simplex (probability distributions), positive definite matrices, or combinatorial polytopes calls for a distance measure that respects the constraint geometry.

Hide overviewShow overview

Mirror descent replaces Euclidean distance with a Bregman divergence, adapting the algorithm to the problem geometry. Frank-Wolfe goes further: it avoids projection entirely by solving a linear subproblem over the constraint set at each step.

Bregman Divergences

Bregman Divergence

Given a strictly convex, differentiable function (the mirror map or distance-generating function), the Bregman divergence is:

This measures the gap between and its first-order Taylor approximation at . It is always non-negative by convexity of , but it is generally not symmetric: .

Key examples:

- : Bregman divergence is (squared Euclidean distance). Recovers standard gradient descent.

- (negative entropy): Bregman divergence is the KL divergence . Natural for the simplex.

Mirror Descent

Mirror Descent Update

Given a convex function to minimize over a convex set , the mirror descent update with step size is:

Equivalently, in the "dual space": compute , then "mirror back" via .

The update finds the point in that best balances two objectives: move in the direction of the negative gradient (minimize the linear term) while staying close to (minimize the Bregman divergence term).

Special cases:

- Euclidean mirror map (): recovers projected gradient descent.

- Entropic mirror map () over the simplex: recovers the exponentiated gradient / multiplicative weights update .

Mirror Descent Convergence

Statement

After iterations of mirror descent with step size , where and for all :

This is the rate for convex Lipschitz optimization.

Intuition

The rate depends on the ratio where is the "diameter" in Bregman divergence and is the gradient bound in the dual norm. Choosing to match the geometry of the constraint set can make much smaller than the Euclidean diameter, giving faster effective convergence.

Proof Sketch

The standard mirror descent analysis telescopes the Bregman divergence to the optimum: . Sum from to , use and , then optimize .

Why It Matters

Mirror descent with the entropic mirror map on the simplex achieves regret, compared to for projected gradient descent. The logarithmic dependence on dimension is a huge advantage in high-dimensional settings like online learning with exponentially many experts.

Failure Mode

The choice of mirror map is crucial. A poorly chosen gives a Bregman diameter that is no better (or worse) than Euclidean distance. The analysis also requires that the mirror map be strongly convex with respect to the chosen norm, which may be difficult to verify. For non-Lipschitz objectives, the rate degrades.

Frank-Wolfe (Conditional Gradient)

Frank-Wolfe Update

The Frank-Wolfe (conditional gradient) update for minimizing a smooth convex function over a compact convex set :

- Solve the linear minimization oracle (LMO):

- Update: where

The step direction always points into the feasible set, so no projection is needed.

The LMO is often much cheaper than projection. Over the nuclear norm ball, the LMO requires a single top singular vector computation (), while projection requires a full SVD (). Over polytopes, the LMO is a linear program.

Frank-Wolfe Convergence Rate

Statement

With step sizes , the Frank-Wolfe algorithm satisfies:

where .

Intuition

The rate is slower than the of accelerated gradient descent, but each iteration only requires a linear minimization, not a projection. The trade-off is worth it when the LMO is cheap and projection is expensive.

Proof Sketch

By smoothness, . Since minimizes the linear term over , we have by convexity. Substituting and setting gives the result by induction.

Why It Matters

Frank-Wolfe iterates are sparse: each iterate is a convex combination of at most vertices of . This makes it natural for problems where sparse solutions are desirable (e.g., sparse optimization, low-rank matrix completion). The method also never leaves the feasible set, which matters when feasibility has physical meaning.

Failure Mode

The rate cannot be improved in general for Frank-Wolfe without modifications (away steps, pairwise steps). The "zig-zagging" phenomenon arises when the optimum lies on the boundary of , and especially on a low-dimensional face: the iterates oscillate between vertices of the active face instead of moving cleanly along it, which is precisely the case the away-step and pairwise variants are designed to fix. For strongly convex objectives, vanilla Frank-Wolfe does not achieve the linear rate that projected gradient descent achieves.

Common Confusions

Mirror descent is not just projected gradient descent with a different norm

While projected gradient descent in the norm exists, it is not the same as mirror descent with the entropic mirror map. Mirror descent changes the geometry of the update step itself (using Bregman divergence instead of squared distance), not just the metric used in the projection. The two coincide only when .

Frank-Wolfe and mirror descent solve different bottlenecks

Mirror descent replaces Euclidean distance with a better geometry but still requires a Bregman projection. Frank-Wolfe eliminates projection entirely by using a linear oracle. If projection is cheap (e.g., onto a ball), use projected gradient descent or mirror descent. If projection is expensive but linear minimization is cheap (e.g., over a polytope or nuclear norm ball), use Frank-Wolfe.

Canonical Examples

Multiplicative weights from mirror descent

On the probability simplex , use the negative entropy . The mirror descent update for a linear loss gives:

This is the multiplicative weights update, with regret. The (instead of ) dependence on dimension is the key advantage over Euclidean methods.

Exercises

Problem

Verify that the Bregman divergence for is the squared Euclidean distance , and that the mirror descent update reduces to the projected gradient descent update.

Problem

Consider Frank-Wolfe on the ball in . What does the linear minimization oracle compute? What is the sparsity of the iterate after steps if ?

References

Canonical:

- Nemirovski & Yudin, Problem Complexity and Method Efficiency in Optimization (1983), Chapter 5

- Frank & Wolfe, "An algorithm for quadratic programming" (1956)

- Bubeck, "Convex Optimization: Algorithms and Complexity" (2015), Sections 4.2, 4.3

Current:

- Jaggi, "Revisiting Frank-Wolfe: Projection-Free Sparse Convex Optimization" (2013)

- Hazan, Introduction to Online Convex Optimization (2016), Chapter 5

Next Topics

- Projected gradient descent: the standard projection-based approach that mirror descent generalizes

- Coordinate descent: another projection-free approach for structured problems

Last reviewed: April 26, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

4- Convex Optimization Basicslayer 1 · tier 1

- Convex Dualitylayer 2 · tier 1

- Projected Gradient Descentlayer 2 · tier 2

- Online Convex Optimizationlayer 3 · tier 2

Derived topics

1- Coordinate Descentlayer 2 · tier 2

Graph-backed continuations