Mathematical Infrastructure

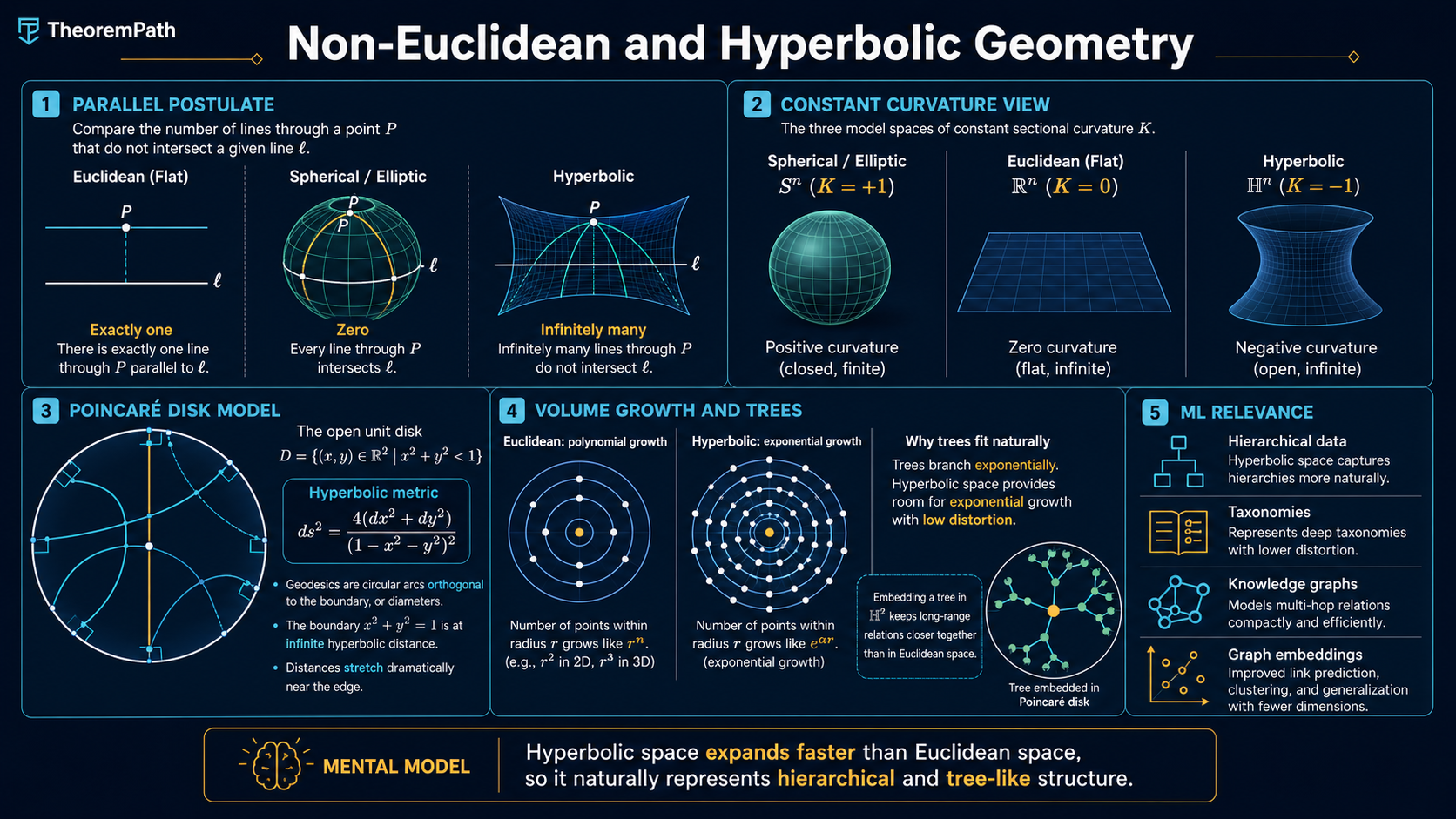

Non-Euclidean and Hyperbolic Geometry

The geometry that drops the parallel postulate. Hyperbolic and spherical models, sectional curvature, the Poincare disk, and why hyperbolic spaces embed tree-structured data with low distortion. The grounding for graph embeddings on curved spaces.

Why This Matters

Hierarchies, taxonomies, and trees are everywhere in ML data: WordNet, biological phylogenies, file systems, citation graphs, knowledge graphs. Embedding them faithfully in with the Euclidean metric is provably hard. The reason is geometric. Trees grow exponentially in the number of nodes per shell of radius ; Euclidean balls in have polynomial volume ; so the embedding must distort distances. In contrast, hyperbolic space has exponential volume growth, matching the combinatorial structure of trees, which is why hyperbolic embeddings (Nickel-Kiela 2017, Sala et al. 2018) achieve much lower distortion than Euclidean ones at the same dimension.

Hide overviewShow overview

This page is the geometric grounder. It states the parallel postulate, names the three constant-curvature model spaces, defines the Poincare disk metric, proves the volume-growth gap, and lists the operational consequences for graph and representation learning. It is not a substitute for a Riemannian geometry course; it is the prerequisite chain for hyperbolic-embeddings-for-graphs.

The Parallel Postulate and the Three Geometries

Euclid's fifth postulate, Playfair's version: through a point not on a given line there is exactly one line parallel to the given one. Dropping this postulate while keeping the others yields two consistent alternatives: zero parallels (spherical / elliptic geometry) or infinitely many parallels (hyperbolic geometry). The three constant-curvature model spaces in dimension are:

- , the round -sphere of constant sectional curvature .

- , Euclidean space, .

- , hyperbolic -space, .

A general Riemannian manifold has curvature that varies from point to point and from plane to plane in the tangent space. The constant-curvature spaces are the simplest models and serve as the local "tangent geometry" of the general case via the Gauss-Bonnet machinery.

Sectional Curvature

For a Riemannian manifold and a -plane in the tangent space at , the sectional curvature is the Gaussian curvature at of the small surface obtained by exponentiating at . The three model spaces above are exactly the simply connected complete Riemannian manifolds whose sectional curvature is constant in and .

The Poincare Disk Model

The cleanest model of for ML purposes is the Poincare disk. Take the open unit disk and equip it with the conformal metric The Riemannian distance between two points is The boundary of the disk is at infinite hyperbolic distance from any interior point: as , . Distances near the boundary stretch. Euclidean straight lines through the origin and Euclidean circular arcs that meet the boundary orthogonally are the geodesics (length-minimizing paths) of the model.

The conformal factor is what makes hyperbolic geometry so different from Euclidean geometry near the boundary, and is also the source of its embedding power. The same point that looks "small" in the Euclidean picture has enormous hyperbolic neighbourhood, so a polynomial number of dimensions can host an exponential number of well-separated points.

Volume Growth: The Embedding Argument

Volume Growth of a Ball

Statement

For the geodesic ball of radius in , where is the surface area of the unit -sphere. For large , .

For comparison, (polynomial) and (bounded).

Intuition

In hyperbolic space the metric coefficient grows exponentially with the radial distance , whereas in Euclidean space the coefficient is the polynomial . Volumes of shells therefore grow exponentially, which gives hyperbolic space "room" for a tree of branching factor to embed at depth .

Why It Matters

A complete binary tree of depth has nodes and pairwise graph distances up to . Embedding it isometrically in requires in the worst case (Bourgain), or significant distortion. In a tree of depth embeds with constant distortion: Sala et al. (2018) show that -ary trees embed in with distortion . The volume-growth match is the geometric reason. This is the launching pad for hyperbolic-embeddings-for-graphs.

Failure Mode

Hyperbolic embeddings help only when the data is approximately tree-like. For grid-structured or feature-grid data the hyperbolic gain disappears and the Euclidean baseline is at least as good. Gromov's -hyperbolicity of the data graph is the right diagnostic: small favours hyperbolic embedding, large does not.

Operations in the Poincare Ball

Practical hyperbolic ML works in the Poincare ball (the -dimensional analog of the disk). The basic operations live in the gyrovector formalism (Ungar 2008, Ganea et al. 2018):

- Mobius addition : the analog of vector addition.

- Exponential map : maps a tangent vector at to a point in . Replaces the Euclidean update .

- Logarithmic map : the inverse, mapping a target point to the tangent vector that reaches it.

- Hyperbolic distance : a curvature- rescaling of the Poincare formula above.

A hyperbolic neural-network layer applies a linear map in the tangent space at the origin, then exponentiates back to the ball. Optimization uses Riemannian gradient methods with the hyperbolic metric.

Spherical Geometry, Briefly

The sphere is the constant-positive-curvature counterpart. Key facts: geodesics are great circles; the diameter is ; volumes are bounded. In ML, spherical geometry shows up via L2-normalized embeddings (face recognition with cosine similarity, normalized text embeddings) and in the von Mises-Fisher distribution for directional data. The closed, finite nature of is a feature, not a bug, for problems with naturally bounded similarity.

Common Confusions

Hyperbolic distance is not Euclidean distance scaled

Two points near the centre of the Poincare disk have hyperbolic distance close to twice their Euclidean distance, but two points equally close in the Euclidean sense near the boundary can be infinitely far apart in the hyperbolic metric. The conformal factor depends on position. Reading off similarity from Euclidean distance in a hyperbolic embedding is a category error.

Negative curvature is not 'inverse' positive curvature

Spherical geometry is closed, bounded, and self-intersecting (great circles meet); hyperbolic geometry is open, unbounded, and tree-like. They are not each other's mirror image. Sign of curvature controls volume growth and geodesic spreading; the qualitative behaviour is asymmetric. Concretely, balls in saturate, balls in explode.

Exercises

Problem

Compute the Poincare-disk distance between the origin and the point for . Show that as the distance diverges to infinity, and compute the leading-order rate.

Problem

Show that a complete binary tree of depth requires at least Euclidean dimensions to embed with constant multiplicative distortion, but embeds in with constant distortion. Sketch the argument from volume growth.

References

- Manfredo P. do Carmo. Riemannian Geometry. Birkhauser, 1992. Chapters 4 and 8: sectional curvature, model spaces, volume comparison. Standard graduate Riemannian geometry text.

- James W. Anderson. Hyperbolic Geometry (2nd ed.). Springer, 2005. Chapters 1-4: Poincare disk, upper-half-plane model, isometries, distance formulas. Most accessible standalone hyperbolic-geometry reference.

- James W. Cannon, William J. Floyd, Richard Kenyon, Walter R. Parry. Hyperbolic Geometry. In Flavors of Geometry, MSRI Publications 31, 1997. Survey-style introduction with all five standard models and careful proofs.

- Maximilian Nickel, Douwe Kiela. Poincare Embeddings for Learning Hierarchical Representations. NeurIPS 2017. The paper that brought hyperbolic embeddings to mainstream representation learning. arXiv:1705.08039

- Frederic Sala, Christopher De Sa, Albert Gu, Christopher Re. Representation Tradeoffs for Hyperbolic Embeddings. ICML 2018. Distortion bounds for tree embeddings; precision-vs-dimension analysis. arXiv:1804.03329

- Octavian Ganea, Gary Becigneul, Thomas Hofmann. Hyperbolic Neural Networks. NeurIPS 2018. Mobius addition, exp/log maps, and hyperbolic layers in the gyrovector formalism. arXiv:1805.09112

Related Topics

Last reviewed: April 18, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

2- Metric Spaces, Convergence, and Completenesslayer 0A · tier 1

- Vectors, Matrices, and Linear Mapslayer 0A · tier 1

Derived topics

4- Distance Metrics Comparedlayer 1 · tier 2

- Hyperbolic Embeddings for Graphslayer 2 · tier 2

- Riemannian Optimization and Manifold Constraintslayer 3 · tier 2

- Information Geometrylayer 3 · tier 3