Modern Generalization

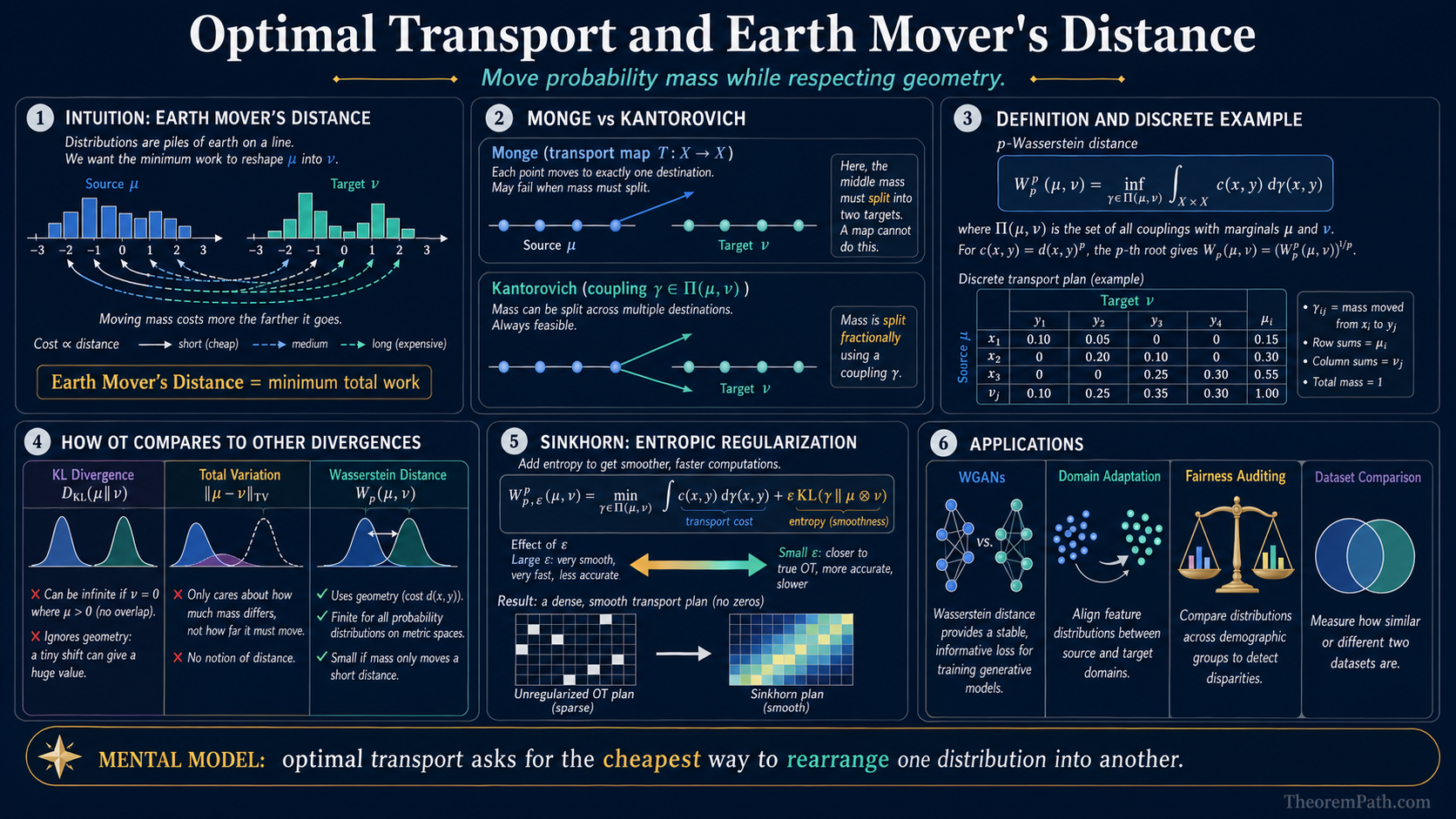

Optimal Transport and Earth Mover's Distance

The Monge and Kantorovich formulations of optimal transport, the linear programming dual, Sinkhorn regularization, and applications to WGANs, domain adaptation, and fairness.

Prerequisites

Why This Matters

Optimal transport (OT) gives you a principled way to measure the distance between probability distributions that respects the geometry of the underlying space. Unlike KL divergence or total variation, the Wasserstein distance does not blow up when distributions have non-overlapping support. This property made it central to Wasserstein GANs, and it continues to appear in domain adaptation, fairness auditing, and dataset comparison.

Hide overviewShow overview

Formal Setup

Let and be probability measures on a metric space . We want to quantify the "cost" of transforming into .

Monge Problem

Find a transport map such that (the pushforward of under equals ) and the total transport cost is minimized:

where is a cost function (typically ).

The Monge problem is hard: it requires a deterministic map, which may not exist (e.g., transporting a Dirac mass to a sum of two Dirac masses).

Kantorovich Relaxation

Replace the deterministic map with a coupling (joint distribution) on with marginals and :

where is the set of all joint distributions with first marginal and second marginal . For , the -th root of this quantity is the -Wasserstein distance .

The Kantorovich relaxation is a linear program: minimize a linear objective over a convex polytope of couplings.

Main Theorems

Kantorovich Duality

Statement

The Kantorovich primal has a dual:

For the case with , the supremum is over all 1-Lipschitz functions .

Intuition

The primal asks: what is the cheapest way to move mass from to ? The dual asks: what is the largest price difference a 1-Lipschitz "potential function" can extract between the two distributions? Strong duality says these two quantities are equal.

Proof Sketch

This follows from LP duality for the discrete case, or from Fenchel-Rockafellar convex duality in the continuous case. The constraint introduces dual variables (one for each marginal constraint), which become the potential functions and . The 1-Lipschitz constraint arises from , and at optimality (the -transform).

Why It Matters

The dual is what makes Wasserstein GANs work. Instead of solving a combinatorial transport problem, the WGAN critic learns a 1-Lipschitz function that maximizes . This is exactly the Kantorovich dual.

Failure Mode

Computing the exact via the dual requires enforcing the Lipschitz constraint on . Weight clipping (original WGAN) is crude and causes training instability. Gradient penalty (WGAN-GP) is better but adds computational cost. Spectral normalization provides a tighter constraint but still does not guarantee exact 1-Lipschitz behavior.

Sinkhorn Algorithm

Exact OT in discrete settings costs via network simplex. This is prohibitive for large datasets.

Entropic Regularization

Add an entropy penalty to the Kantorovich problem:

where is the entropy of the coupling. As , this recovers the original OT problem.

Sinkhorn Convergence

Statement

The Sinkhorn algorithm (alternating row and column normalization of ) converges linearly to the unique optimal entropic coupling. Each iteration costs . To approximate the unregularized OT cost within additive error , the sharp iteration complexity is (Altschuler, Weed, Rigollet 2017, arXiv:1705.09634), giving a total time of . Some expositions state a looser bound for convergence in the KL sense at fixed regularization; the form is the correct complexity for approximating the original OT problem.

Intuition

Entropic regularization makes the optimal coupling strictly positive and unique. The Sinkhorn iteration is coordinate ascent on the dual, alternating between optimizing the two marginal constraints. The matrix acts as a soft assignment kernel.

Proof Sketch

The entropically regularized dual decomposes into two blocks of variables (one per marginal). Each block update has a closed-form solution involving a row or column normalization of . Convergence follows from the contraction property of these updates in Hilbert's projective metric.

Why It Matters

Sinkhorn reduced OT computation from (exact LP) to for an -approximate OT cost (Altschuler, Weed, Rigollet 2017), making it practical for mini-batch computation in ML pipelines. It is differentiable, enabling end-to-end training with OT-based losses.

Failure Mode

Small is needed for accuracy but causes numerical instability (exponentials of large numbers). Log-domain stabilization is necessary in practice. Large gives fast convergence but a blurred, inaccurate transport plan.

Applications in ML

Wasserstein GANs. The original GAN loss uses JS divergence, which has zero gradient when generator and data supports do not overlap. The loss has informative gradients everywhere, stabilizing training. The WGAN critic approximates the Kantorovich dual.

Domain adaptation. If source and target domains have distributions and , bounding generalization on the target requires a measure of distribution shift. OT provides this measure while respecting feature geometry.

Fairness. Measuring whether a model treats two demographic groups similarly can be framed as measuring the OT distance between the conditional output distributions for each group.

Sliced Wasserstein. Computing in high dimensions is expensive. The sliced Wasserstein distance projects distributions onto random 1D lines (where OT reduces to sorting, costing ) and averages. This is a valid metric and scales to high-dimensional settings.

Common Confusions

Wasserstein distance is not just another f-divergence

KL divergence, JS divergence, and total variation are all -divergences. The Wasserstein distance is not. It requires a metric on the underlying space and metrizes weak convergence (convergence in distribution), while -divergences do not. This is precisely why gives useful gradients when supports do not overlap.

Earth movers distance is W1, not Wp for arbitrary p

The name "earth mover's distance" specifically refers to with the ground metric cost . The distance (quadratic cost) has different properties and a different dual formulation involving the Brenier theorem.

Exercises

Problem

For two discrete distributions and on with ground metric , compute by writing out the coupling polytope and solving the LP.

Problem

Show that the Kantorovich dual for on a finite metric space reduces to a linear program. How many constraints does the LP have if both distributions are supported on points?

References

Canonical:

- Villani, Optimal Transport: Old and New (2009), Chapters 1-6

- Peyre & Cuturi, Computational Optimal Transport (2019), Chapters 2-4

Current:

- Arjovsky, Chintala, Bottou, "Wasserstein GAN" (2017)

- Cuturi, "Sinkhorn Distances" (NeurIPS 2013)

- Altschuler, Weed, Rigollet, "Near-linear time approximation algorithms for optimal transport via Sinkhorn iteration" (NeurIPS 2017, arXiv:1705.09634), tight complexity analysis

Last reviewed: April 14, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

2- Convex Dualitylayer 2 · tier 1

- Wasserstein Distanceslayer 4 · tier 3

Derived topics

0No published topic currently declares this as a prerequisite.