
Radon-Nikodym and Conditional Expectation

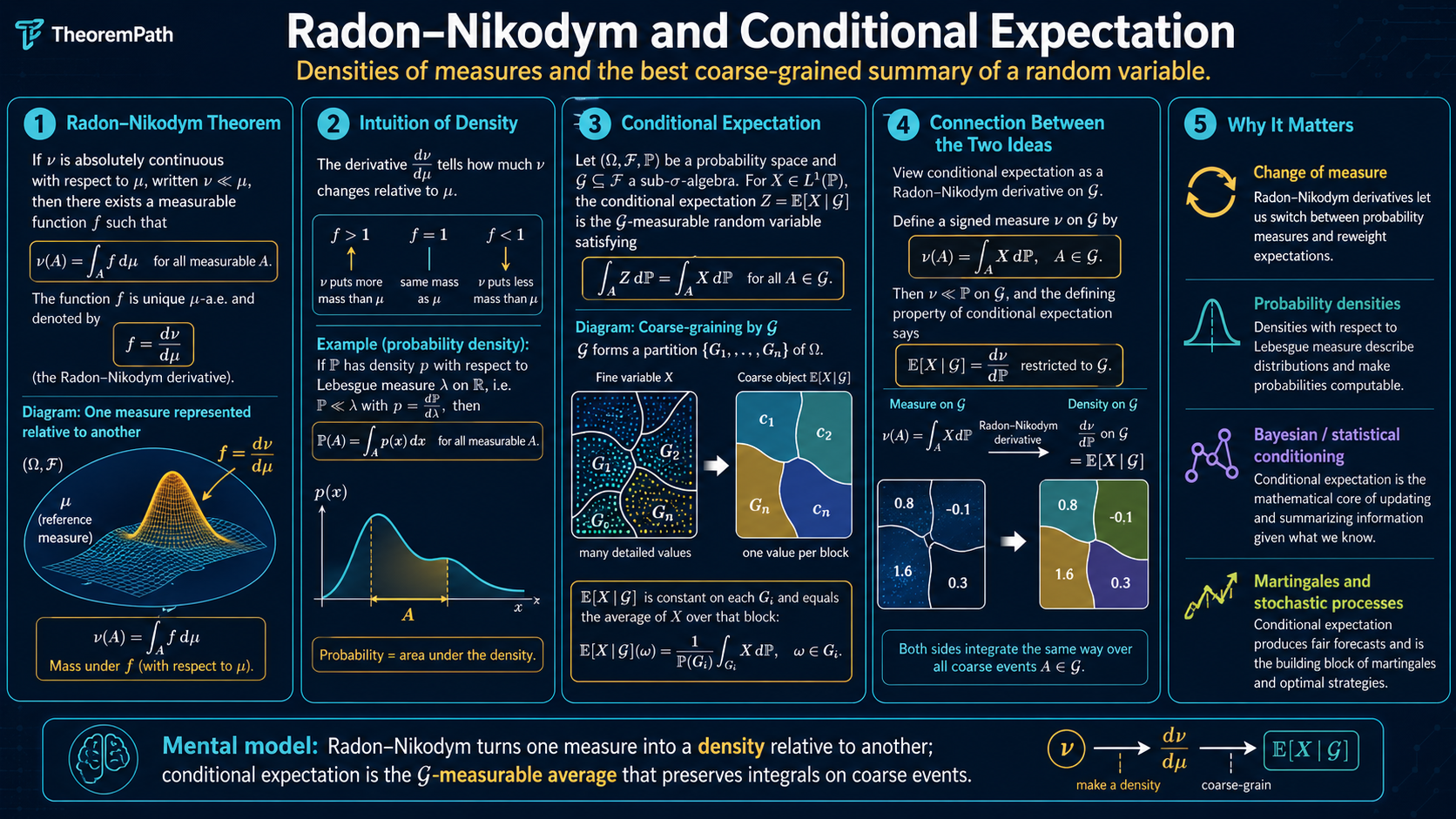

The Radon-Nikodym theorem: what 'density' really means. Absolute continuity, the Radon-Nikodym derivative, conditional expectation as a projection, tower property, and why this undergirds likelihood ratios, importance sampling, and KL divergence.

Prerequisites

- Measure-Theoretic Probability

Why This Matters

The word "density" appears on nearly every page of a statistics or ML textbook. But what is a density? It is not just "the derivative of the CDF." Rigorously, a density is a Radon-Nikodym derivative: the rate at which one measure accumulates mass relative to another. This single concept unifies:


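- Likelihood ratios: $p_\theta(x)/p_{\theta_0}(x)$ is literally the Radon-Nikodym derivative $dP_\theta/dP_{\theta_0}$

- Importance sampling: reweighting samples from $Q$ by $dP/dQ$

- KL divergence: $\mathrm{KL}(P\,\|\,Q) = \int \log\frac{dP}{dQ}\,dP$

- Bayesian posteriors: the posterior density is the prior density times the likelihood, normalized

- Conditional expectation: $E[X\mid\mathcal G]$ is defined via the Radon-Nikodym theorem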

If you skip this topic, you will use "density" as a vague synonym for "PDF on $\mathbb R$." You will not understand why likelihood ratios require absolute continuity, why importance sampling can fail catastrophically, or what conditional expectation actually is beyond the formula $E[X\mid Y = y] = \int x\,f_{X\mid Y}(x\mid y)\,dx$.

Mental Model

Think of two measures $\mu$ and $\nu$ on the same space. If $\nu$ is "compatible" with $\mu$, meaning that whenever $\mu$ says a set has zero size, $\nu$ agrees, then $\nu$ can be expressed as a "weighted version" of $\mu$. The weight function is the Radon-Nikodym derivative $d\nu/d\mu$. It tells you, at each point, how much more (or less) $\nu$ cares about that region than $\mu$ does.

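A PDF is precisely this: it tells you how much the probability measure $P$ weighs each region compared to Lebesgue measure $\lambda$. The familiar formula $P([a, b]) = \int_a^b f(x)\,dx$ is just $P(A) = \int_A \frac{dP}{d\lambda}\,d\lambda$ with $A = [a, b]$ and $f = dP/d\lambda$.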
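The identity $P(A) = \int_A \frac{dP}{d\lambda}\,d\lambda$ can be sanity-checked numerically. A minimal sketch, assuming $P$ is the standard normal and $A = [-1, 2]$ (both arbitrary choices): it computes $P(A)$ from the CDF via `math.erf` and compares it with a midpoint-rule integral of the density against Lebesgue measure. The helper names are illustrative.

```python
import math

def normal_pdf(x):
    """Density of N(0, 1) with respect to Lebesgue measure."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def normal_cdf(x):
    """P(X <= x) for X ~ N(0, 1), written via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def integrate(f, a, b, n=10_000):
    """Midpoint-rule approximation of the Lebesgue integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))

a, b = -1.0, 2.0
prob_A = normal_cdf(b) - normal_cdf(a)    # P(A) for A = [a, b], from the CDF
integral_A = integrate(normal_pdf, a, b)  # ∫_A f dλ, from the density
print(f"P(A) = {prob_A:.8f}   integral of f over A = {integral_A:.8f}")
```

The two numbers agree to within the quadrature error, which is the whole content of "$f$ is the density of $P$ with respect to $\lambda$."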

Two viewpoints run through this page. In the Radon-Nikodym view, you compare one measure against a reference measure and read off a density ratio. In the conditional-expectation view, you keep the same underlying probability space but collapse information down to a coarser $\sigma$-algebra $\mathcal G$, replacing fine variation in $X$ by the unique (up to $P$-null sets) $\mathcal G$-measurable function whose integral matches that of $X$ on every $\mathcal G$-measurable set.

Formal Setup

Absolute Continuity

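Let $\mu$ and $\nu$ be measures on a measurable space $(\Omega, \mathcal F)$. We say $\nu$ is absolutely continuous with respect to $\mu$, written $\nu \ll \mu$, if and only if:

$$\mu(A) = 0 \implies \nu(A) = 0 \qquad \text{for every } A \in \mathcal F.$$

Equivalently: every $\mu$-null set is also $\nu$-null. If $\nu$ assigns positive measure to some set that $\mu$ considers negligible, then $\nu$ is not absolutely continuous with respect to $\mu$.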

Examples:

- Any probability distribution with a PDF is absolutely continuous with respect to Lebesgue measure

- A discrete distribution (point masses) is not absolutely continuous with respect to Lebesgue measure

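- Two Gaussians $\mathcal N(\mu_1, \sigma_1^2)$ and $\mathcal N(\mu_2, \sigma_2^2)$ with $\sigma_1, \sigma_2 > 0$ are mutually absolutely continuous (each is absolutely continuous with respect to the other), because both have strictly positive densities on all of $\mathbb R$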
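On a finite space, a measure is determined by the mass it puts on each point, so $\nu \ll \mu$ reduces to a pointwise check: every point with $\mu(\{\omega\}) = 0$ must also have $\nu(\{\omega\}) = 0$. A minimal sketch, representing a measure as a Python dict from points to non-negative masses (an illustrative convention, with missing points having mass zero):

```python
def is_absolutely_continuous(nu, mu):
    """True iff nu << mu for measures on a finite set.

    Measures are dicts from points to non-negative masses (missing = 0).
    A set is mu-null iff all of its points are mu-null, so checking
    single points is enough.
    """
    points = set(nu) | set(mu)
    return all(nu.get(w, 0.0) == 0.0 for w in points if mu.get(w, 0.0) == 0.0)

mu = {"a": 0.5, "b": 0.5, "c": 0.0}
nu = {"a": 0.9, "b": 0.1}
rho = {"a": 0.2, "c": 0.8}

print(is_absolutely_continuous(nu, mu))   # True: nu vanishes wherever mu does
print(is_absolutely_continuous(rho, mu))  # False: rho charges "c", a mu-null point
```

On uncountable spaces such as $\mathbb R$ this pointwise check is not enough (Lebesgue measure gives every single point mass zero), which is why the definition is stated for sets.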

Singular Measures

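Two measures $\mu$ and $\nu$ on $(\Omega, \mathcal F)$ are mutually singular, written $\mu \perp \nu$, if and only if there exists a set $A \in \mathcal F$ such that $\mu(A) = 0$ and $\nu(A^c) = 0$. They "live on disjoint sets."

Example: Lebesgue measure and the counting measure on $\mathbb Z$ (both viewed as measures on $\mathbb R$) are mutually singular: take $A = \mathbb Z$, which has Lebesgue measure zero and whose complement has counting measure zero. Any discrete distribution is singular with respect to any continuous distribution: take $A$ to be the countable support of the discrete one.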
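Singularity can also be checked pointwise on a finite space: $\mu \perp \nu$ exactly when $\mu$ puts no mass on the support of $\nu$, and that support is then a witness set $A$ with $\mu(A) = 0$ and $\nu(A^c) = 0$. A minimal sketch under an illustrative dict convention (points mapped to non-negative masses); `singular_witness` is a hypothetical helper name:

```python
def singular_witness(mu, nu):
    """Return A with mu(A) = 0 and nu(A^c) = 0 if mu ⊥ nu, else None.

    Measures are dicts from points to non-negative masses on a finite set
    (missing points have mass 0). The candidate A is the support of nu,
    so nu(A^c) = 0 automatically; mu ⊥ nu exactly when mu(A) = 0.
    """
    support_nu = {w for w, m in nu.items() if m > 0}
    if all(mu.get(w, 0.0) == 0.0 for w in support_nu):
        return support_nu
    return None

mu = {0: 0.3, 2: 0.7}  # lives on the even points 0, 2
nu = {1: 0.5, 3: 0.5}  # lives on the odd points 1, 3
print(singular_witness(mu, nu))        # {1, 3}
print(singular_witness(mu, {0: 1.0}))  # None: both measures charge the point 0
```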

Main Theorems

Radon-Nikodym Theorem

Statement

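If $\nu \ll \mu$ and both $\mu$ and $\nu$ are $\sigma$-finite, then there exists a measurable function $f : \Omega \to [0, \infty)$ such that for every $A \in \mathcal F$:

$$\nu(A) = \int_A f\,d\mu.$$

The function $f$ is called the Radon-Nikodym derivative of $\nu$ with respect to $\mu$, written $f = \frac{d\nu}{d\mu}$. It is unique $\mu$-almost everywhere.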
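On a finite space with $\nu \ll \mu$, the Radon-Nikodym derivative is just the pointwise mass ratio $f(\omega) = \nu(\{\omega\})/\mu(\{\omega\})$ wherever $\mu(\{\omega\}) > 0$; its value on $\mu$-null points is arbitrary, which is what "unique only $\mu$-almost everywhere" means. The sketch below (illustrative helper names, measures as dicts of point masses) builds $f$ and checks $\nu(A) = \int_A f\,d\mu$ for every subset $A$.

```python
from itertools import chain, combinations

def radon_nikodym(nu, mu):
    """Return dnu/dmu on a finite space as a dict, assuming nu << mu.

    Measures are dicts from points to non-negative masses (missing = 0).
    Where mu > 0 the derivative is the mass ratio nu/mu; on mu-null points
    any value works (uniqueness is only mu-a.e.), and we use 0.
    """
    f = {}
    for w in set(nu) | set(mu):
        m, n = mu.get(w, 0.0), nu.get(w, 0.0)
        if m > 0.0:
            f[w] = n / m
        elif n > 0.0:
            raise ValueError(f"nu is not << mu: it charges the mu-null point {w!r}")
        else:
            f[w] = 0.0
    return f

def all_subsets(points):
    """Every subset of a finite collection of points, as tuples."""
    pts = list(points)
    return chain.from_iterable(combinations(pts, k) for k in range(len(pts) + 1))

mu = {"a": 0.25, "b": 0.25, "c": 0.5}
nu = {"a": 0.5, "b": 0.5}
f = radon_nikodym(nu, mu)

# Defining identity: nu(A) = ∫_A f dmu = sum over w in A of f(w) * mu({w}).
for A in all_subsets(mu):
    lhs = sum(nu.get(w, 0.0) for w in A)
    rhs = sum(f[w] * mu[w] for w in A)
    assert abs(lhs - rhs) < 1e-12

print(sorted(f.items()))  # [('a', 2.0), ('b', 2.0), ('c', 0.0)]
```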

Intuition

The Radon-Nikodym derivative is the "local ratio" of two measures. At each point $\omega$, $f(\omega) = \frac{d\nu}{d\mu}(\omega)$ tells you how much more mass $\nu$ puts near $\omega$ than $\mu$ does. Where $\nu$ concentrates more mass than $\mu$, $f$ is large; where $\nu$ puts less, $f$ is small.

When $\mu$ is Lebesgue measure on $\mathbb R$ and $\nu$ is a probability measure with a density, $d\nu/d\mu$ is exactly the PDF. The Radon-Nikodym theorem says that densities exist whenever absolute continuity holds, and not just on $\mathbb R$: on any $\sigma$-finite measure space.
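For measures on $\mathbb R$ with continuous, strictly positive densities, the "local ratio" picture can be made literal: $\frac{d\nu}{d\mu}(x) = \lim_{r \to 0} \frac{\nu([x-r, x+r])}{\mu([x-r, x+r])}$. The sketch below assumes, for illustration, $\nu = \mathcal N(1, 1)$ and $\mu = \mathcal N(0, 1)$, whose derivative is $\exp(x - 1/2)$ (the ratio of the two Gaussian densities), and watches the interval ratio approach it as $r$ shrinks.

```python
import math

def normal_cdf(x, mean):
    """CDF of N(mean, 1)."""
    return 0.5 * (1.0 + math.erf((x - mean) / math.sqrt(2.0)))

def interval_mass(a, b, mean):
    """Mass that N(mean, 1) assigns to the interval [a, b]."""
    return normal_cdf(b, mean) - normal_cdf(a, mean)

def local_ratio(x, r):
    """nu([x-r, x+r]) / mu([x-r, x+r]) for nu = N(1, 1) and mu = N(0, 1)."""
    return interval_mass(x - r, x + r, 1.0) / interval_mass(x - r, x + r, 0.0)

x = 0.7
exact = math.exp(x - 0.5)  # dnu/dmu(x): ratio of the N(1,1) and N(0,1) densities
for r in (1.0, 0.1, 0.01):
    print(f"r={r:<5} interval ratio={local_ratio(x, r):.6f}  dnu/dmu(x)={exact:.6f}")
```

Note that the reference measure here is $\mathcal N(0, 1)$, not Lebesgue measure: the same local-ratio idea works for any pair with $\nu \ll \mu$ and well-behaved densities.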

Proof Sketch

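(Hilbert space proof for finite measures): Consider the measure $\rho = \mu + \nu$. On $L^2(\rho)$, the map $h \mapsto \int h\,d\nu$ is a bounded linear functional (by Cauchy-Schwarz, since $\nu \le \rho$ and $\rho$ is finite: $\left|\int h\,d\nu\right| \le \int |h|\,d\rho \le \rho(\Omega)^{1/2}\,\|h\|_{L^2(\rho)}$). By the Riesz representation theorem, there exists $g \in L^2(\rho)$ with $\int h\,d\nu = \int h\,g\,d\rho$ for all $h \in L^2(\rho)$.

One shows $0 \le g \le 1$ $\rho$-a.e. (by testing with indicator functions). Rewriting the identity as $\int h\,(1-g)\,d\nu = \int h\,g\,d\mu$ and taking $h = \mathbf 1_{\{g = 1\}}$ gives $\mu(\{g = 1\}) = 0$, so absolute continuity of $\nu$ with respect to $\mu$ ensures $\{g = 1\}$ has $\nu$-measure zero. Set $f = g/(1-g)$ on $\{g < 1\}$ and $f = 0$ on $\{g = 1\}$. Taking $h = \mathbf 1_A\,(1 + g + \dots + g^n)$ and letting $n \to \infty$ (monotone convergence) then gives $\nu(A) = \int_A f\,d\mu$ for every $A \in \mathcal F$.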
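On a finite space every step of this Hilbert-space argument can be computed by hand, which makes a useful check on the sketch. With $\rho = \mu + \nu$, the Riesz representer is $g(\omega) = \nu(\{\omega\})/\rho(\{\omega\})$, it lies in $[0, 1]$, the set $\{g = 1\}$ is exactly where $\mu$ vanishes, and $g/(1-g)$ recovers the mass ratio $\nu/\mu$. The dict representation and the helper name `von_neumann_density` are illustrative assumptions, not part of the proof.

```python
def von_neumann_density(nu, mu):
    """Follow the Hilbert-space proof on a finite space; return (g, f).

    rho = mu + nu. On a finite space the Riesz representer g of the functional
    h -> ∫ h dnu on L^2(rho) is the pointwise ratio nu/rho, because
    ∫ h dnu = sum_w h(w) nu(w) = sum_w h(w) g(w) rho(w).
    Then f = g / (1 - g) on {g < 1}. Points with rho = 0 carry no mass and are skipped.
    """
    g, f = {}, {}
    for w in set(nu) | set(mu):
        rho_w = mu.get(w, 0.0) + nu.get(w, 0.0)
        if rho_w == 0.0:
            continue
        g[w] = nu.get(w, 0.0) / rho_w
        assert 0.0 <= g[w] <= 1.0  # the proof's claim 0 <= g <= 1
        if mu.get(w, 0.0) == 0.0:
            # Here g(w) = 1: {g = 1} is exactly where mu vanishes, and nu charges
            # this point, so nu is not << mu.
            raise ValueError(f"nu charges the mu-null point {w!r}")
        f[w] = g[w] / (1.0 - g[w])
    return g, f

mu = {"a": 0.2, "b": 0.3, "c": 0.5}
nu = {"a": 0.6, "b": 0.3, "c": 0.1}
g, f = von_neumann_density(nu, mu)
for w in sorted(f):
    # g/(1-g) = (nu/rho)/(mu/rho) = nu/mu, so the last two columns agree
    print(w, round(g[w], 4), round(f[w], 6), round(nu[w] / mu[w], 6))
```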

Why It Matters

The Radon-Nikodym theorem is the rigorous foundation for:

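- PDFs: $f = dP/d\lambda$, where $\lambda$ is Lebesgue measure. The "density" is not a property of $P$ alone; it is a relationship between $P$ and a reference measure.

- Likelihood ratios: $dP_\theta/dP_{\theta_0}$ is the likelihood ratio, and it is meaningful only when $P_\theta \ll P_{\theta_0}$. If $P_\theta$ assigns positive probability to a region that $P_{\theta_0}$ considers impossible, the likelihood ratio does not exist.

- Importance sampling: $E_P[h(X)] = E_Q\!\left[h(X)\,\frac{dP}{dQ}(X)\right]$. This requires $P \ll Q$; if $Q$ assigns zero probability to a region where $P$ is positive, you will never sample there and the estimator is biased.

- KL divergence: $\mathrm{KL}(P\,\|\,Q) = \int \log\frac{dP}{dQ}\,dP$. This requires $P \ll Q$; if not, $\mathrm{KL}(P\,\|\,Q)$ is defined to be $+\infty$.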
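To see $\mathrm{KL}(P\,\|\,Q) = \int \log\frac{dP}{dQ}\,dP$ at work, the sketch below assumes two univariate Gaussians $P = \mathcal N(m_1, s_1^2)$ and $Q = \mathcal N(m_2, s_2^2)$, averages the log likelihood ratio over samples from $P$, and compares the result with the standard closed form $\log\frac{s_2}{s_1} + \frac{s_1^2 + (m_1 - m_2)^2}{2 s_2^2} - \frac12$. Parameter values, seed and sample size are arbitrary illustrative choices.

```python
import math
import random

def normal_logpdf(x, mean, sd):
    """Log of the N(mean, sd^2) density with respect to Lebesgue measure."""
    z = (x - mean) / sd
    return -0.5 * z * z - math.log(sd) - 0.5 * math.log(2.0 * math.pi)

def kl_gaussian_closed_form(m1, s1, m2, s2):
    """KL(N(m1, s1^2) || N(m2, s2^2)) in closed form."""
    return math.log(s2 / s1) + (s1**2 + (m1 - m2) ** 2) / (2.0 * s2**2) - 0.5

def kl_monte_carlo(m1, s1, m2, s2, n=200_000, seed=0):
    """Estimate KL(P || Q) = E_P[log dP/dQ] by averaging over X_i ~ P.

    Both measures have Lebesgue densities, so dP/dQ is the ratio of the
    two PDFs (chain rule), and log dP/dQ is a difference of log-densities.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        x = rng.gauss(m1, s1)
        total += normal_logpdf(x, m1, s1) - normal_logpdf(x, m2, s2)
    return total / n

m1, s1, m2, s2 = 0.0, 1.0, 1.0, 2.0
exact = kl_gaussian_closed_form(m1, s1, m2, s2)
estimate = kl_monte_carlo(m1, s1, m2, s2)
print(f"closed form {exact:.4f}, Monte Carlo {estimate:.4f}")
```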

Failure Mode

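Without absolute continuity, the Radon-Nikodym derivative does not exist. If $\nu = \delta_0$ is a point mass at $0$ and $\mu = \lambda$ is Lebesgue measure, then $\lambda(\{0\}) = 0$ but $\delta_0(\{0\}) = 1$, so $\delta_0$ is not absolutely continuous with respect to $\lambda$. There is no function $f$ such that $\delta_0(A) = \int_A f\,d\lambda$ for all Borel sets $A$ (the right side is $0$ for $A = \{0\}$). This is why you cannot write a "PDF" for a discrete distribution with respect to Lebesgue measure.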

Conditional Expectation

Conditional Expectation (Measure-Theoretic)

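Let $(\Omega, \mathcal F, P)$ be a probability space, $X$ an integrable random variable ($E|X| < \infty$), and $\mathcal G \subseteq \mathcal F$ a sub-sigma-algebra. The conditional expectation $E[X\mid\mathcal G]$ is the $\mathcal G$-measurable random variable satisfying:

$$\int_G E[X\mid\mathcal G]\,dP = \int_G X\,dP \qquad \text{for all } G \in \mathcal G.$$

It exists (by the Radon-Nikodym theorem applied to the signed measure $G \mapsto \int_G X\,dP$ on $\mathcal G$, with reference measure $P$ restricted to $\mathcal G$) and is unique $P$-almost surely.

Why is this the right definition? The condition says that $E[X\mid\mathcal G]$ is the "best guess" of $X$ given only the information in $\mathcal G$, in the sense that it has the same integral as $X$ over every $\mathcal G$-measurable set. It is a projection of $X$ onto the space of $\mathcal G$-measurable functions (an orthogonal projection when $X \in L^2$).

When $\mathcal G = \sigma(Y)$ (the sigma-algebra generated by a random variable $Y$), we write $E[X\mid Y]$, which is a function of $Y$: $E[X\mid Y] = \varphi(Y)$ for some measurable $\varphi$. In the special case where $X$ and $Y$ have a joint density $f_{X,Y}$, this reduces to the familiar formula $E[X\mid Y = y] = \int x\,\frac{f_{X,Y}(x, y)}{f_Y(y)}\,dx$ wherever $f_Y(y) > 0$.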
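When $\mathcal G$ is generated by a finite partition of a finite $\Omega$, the defining property pins $E[X\mid\mathcal G]$ down completely: on each block it must equal the $P$-weighted average of $X$ over that block. The sketch below uses a toy setup of our own (a fair die, $X$ the face value, $\mathcal G$ generated by the partition into odd and even faces), computes the conditional expectation, and checks the integral condition on every $G \in \mathcal G$, i.e. every union of blocks. `fractions.Fraction` keeps the arithmetic exact.

```python
from fractions import Fraction
from itertools import combinations

def cond_exp_partition(X, P, blocks):
    """E[X | sigma(blocks)] on a finite space, as a dict omega -> value.

    X and P are dicts over the sample points; blocks is a list of disjoint sets
    covering Omega, each assumed to have positive probability. On each block the
    conditional expectation equals the P-weighted average of X over that block.
    """
    Z = {}
    for B in blocks:
        p_B = sum(P[w] for w in B)
        avg = sum(X[w] * P[w] for w in B) / p_B
        for w in B:
            Z[w] = avg
    return Z

omega = range(1, 7)
P = {w: Fraction(1, 6) for w in omega}  # fair die
X = {w: w for w in omega}               # X = face value
blocks = [{1, 3, 5}, {2, 4, 6}]         # G = sigma(odd, even)
Z = cond_exp_partition(X, P, blocks)

# Defining property: ∫_G Z dP = ∫_G X dP for every G in sigma(blocks),
# i.e. for every union of blocks (including the empty set and Omega).
for k in range(len(blocks) + 1):
    for chosen in combinations(blocks, k):
        G = set().union(*chosen)
        assert sum(Z[w] * P[w] for w in G) == sum(X[w] * P[w] for w in G)

print({w: str(Z[w]) for w in omega})  # {1: '3', 2: '4', 3: '3', 4: '4', 5: '3', 6: '4'}
```

The result is a random variable (a function of the outcome), taking the value 3 on odd faces and 4 on even faces.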

Existence of Conditional Expectation

Statement

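For any integrable random variable $X$ and sub-sigma-algebra $\mathcal G \subseteq \mathcal F$, there exists an integrable, $\mathcal G$-measurable random variable $Z$ satisfying $\int_G Z\,dP = \int_G X\,dP$ for all $G \in \mathcal G$. This $Z$ is unique $P$-a.s. and is denoted $E[X\mid\mathcal G]$.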

Intuition

Think of $L^2(\Omega, \mathcal F, P)$ as a Hilbert space. The square-integrable $\mathcal G$-measurable functions form a closed subspace. For $X \in L^2$, $E[X\mid\mathcal G]$ is the orthogonal projection of $X$ onto this subspace: the element of the subspace closest to $X$ in $L^2$ norm, which is the best $\mathcal G$-measurable predictor of $X$ in the mean-squared-error sense.
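On a finite space, the $\mathcal G$-measurable predictors are exactly the functions constant on each block of the partition generating $\mathcal G$, so the projection claim can be checked by hand. A minimal sketch on a hypothetical four-point space: the block averages give the smallest mean squared error, perturbing any block value makes it strictly worse, and the residual $X - E[X\mid\mathcal G]$ integrates to zero over each block (orthogonality to each indicator $\mathbf 1_B$).

```python
from fractions import Fraction

# Hypothetical finite probability space; G is generated by two blocks.
P = {"w1": Fraction(1, 8), "w2": Fraction(3, 8), "w3": Fraction(1, 4), "w4": Fraction(1, 4)}
X = {"w1": 4, "w2": 0, "w3": 1, "w4": 5}
blocks = [("w1", "w2"), ("w3", "w4")]

def block_average(B):
    """P-weighted average of X over block B: the value of E[X|G] on B."""
    return sum(X[w] * P[w] for w in B) / sum(P[w] for w in B)

def mse(values):
    """E[(X - Z)^2] for the G-measurable Z that equals values[i] on blocks[i]."""
    return sum(P[w] * (X[w] - v) ** 2 for B, v in zip(blocks, values) for w in B)

best = [block_average(B) for B in blocks]

# Any other G-measurable guess has strictly larger mean squared error.
for delta in (Fraction(-1), Fraction(-1, 10), Fraction(1, 10), Fraction(1)):
    for i in range(len(blocks)):
        perturbed = list(best)
        perturbed[i] += delta
        assert mse(perturbed) > mse(best)

# Orthogonality: the residual X - E[X|G] integrates to 0 over each block.
for B, v in zip(blocks, best):
    assert sum(P[w] * (X[w] - v) for w in B) == 0

print([str(v) for v in best], str(mse(best)))  # ['1', '3'] 7/2
```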

Proof Sketch

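Define $\nu(G) = \int_G X\,dP$ for $G \in \mathcal G$. This is a finite signed measure on $(\Omega, \mathcal G)$ that is absolutely continuous with respect to $P|_{\mathcal G}$, the restriction of $P$ to $\mathcal G$ (since $P(G) = 0$ implies $\int_G X\,dP = 0$ when $X$ is integrable). By the Radon-Nikodym theorem for signed measures (or by applying the theorem separately to $X^+$ and $X^-$), $\nu$ has a density $Z = d\nu/dP|_{\mathcal G}$ with respect to $P|_{\mathcal G}$. Because the derivative is taken on the measurable space $(\Omega, \mathcal G)$, $Z$ is $\mathcal G$-measurable, and $\int_G Z\,dP = \nu(G) = \int_G X\,dP$ for every $G \in \mathcal G$, which is exactly the defining property.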

Why It Matters

Conditional expectation is the central object in:

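- Bayesian statistics: the posterior mean is $E[\theta\mid X]$

- Martingale theory: a martingale $(M_n)$ with respect to a filtration $(\mathcal F_n)$ satisfies $E[M_{n+1}\mid\mathcal F_n] = M_n$

- Dynamic programming: the Bellman equation involves $E[V(S_{t+1})\mid S_t, A_t]$

- Regression: $E[Y\mid X]$ is the regression function, the optimal predictor of $Y$ given $X$ under squared loss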

Failure Mode

The naive formula $E[X\mid Y = y] = \int x\,\frac{f_{X,Y}(x, y)}{f_Y(y)}\,dx$ requires $f_Y(y) > 0$ and a well-defined conditional density. For general random variables (for example, when $(X, Y)$ has no joint density), this formula does not work. The measure-theoretic definition handles all cases but is less intuitive. When working with conditional expectations in proofs, always use the defining property ($\int_G E[X\mid\mathcal G]\,dP = \int_G X\,dP$ for all $G \in \mathcal G$) rather than the density formula.

Properties of Conditional Expectation

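The following properties are used constantly in probability and ML theory. Let $X$ and $Y$ be integrable random variables and let $\mathcal H \subseteq \mathcal G \subseteq \mathcal F$ be sub-sigma-algebras. All equalities and inequalities below hold $P$-almost surely.

Tower property (law of iterated expectations):

$$E\big[E[X\mid\mathcal G]\,\big|\,\mathcal H\big] = E[X\mid\mathcal H].$$

The coarser information wins: averaging first over the finer $\mathcal G$ and then over the coarser $\mathcal H$ is the same as averaging over $\mathcal H$ directly. The special case $\mathcal H = \{\emptyset, \Omega\}$ gives $E\big[E[X\mid\mathcal G]\big] = E[X]$.

Linearity: $E[aX + bY\mid\mathcal G] = a\,E[X\mid\mathcal G] + b\,E[Y\mid\mathcal G]$ for constants $a, b$.

Pull-out property: If $Z$ is $\mathcal G$-measurable and $ZX$ is integrable, then $E[ZX\mid\mathcal G] = Z\,E[X\mid\mathcal G]$.

Jensen's inequality for conditional expectation: If $\varphi$ is convex and $\varphi(X)$ is integrable, then $\varphi\big(E[X\mid\mathcal G]\big) \le E[\varphi(X)\mid\mathcal G]$.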
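All four properties can be verified exactly on a finite space with nested partitions. The sketch below assumes a uniform measure on twelve points, a fine partition generating $\mathcal G$ and a coarser one generating $\mathcal H \subseteq \mathcal G$ (each coarse block is a union of fine blocks), arbitrary integer-valued $X$ and $Y$, and a $Z$ that is constant on the blocks of $\mathcal G$. It checks the tower property, linearity, pull-out, and conditional Jensen for $\varphi(x) = x^2$; `fractions.Fraction` makes `==` comparisons exact.

```python
from fractions import Fraction

omega = list(range(12))
P = {w: Fraction(1, 12) for w in omega}  # uniform probability (illustrative choice)

def cond_exp(X, blocks):
    """E[X | sigma(blocks)] for a partition of omega into positive-probability blocks."""
    out = {}
    for B in blocks:
        avg = sum(X[w] * P[w] for w in B) / sum(P[w] for w in B)
        out.update({w: avg for w in B})
    return out

G_blocks = [{0, 1}, {2, 3, 4}, {5}, {6, 7}, {8, 9, 10, 11}]  # generates G (finer)
H_blocks = [{0, 1, 2, 3, 4}, {5, 6, 7, 8, 9, 10, 11}]        # generates H: unions of G-blocks

X = {w: (w * w) % 7 for w in omega}                     # arbitrary integrable X
Y = {w: 3 - w for w in omega}                           # arbitrary integrable Y
Z = {w: Fraction(len(B)) for B in G_blocks for w in B}  # constant on G-blocks, so G-measurable

E_X_G = cond_exp(X, G_blocks)
E_Y_G = cond_exp(Y, G_blocks)

# Tower property: E[E[X|G] | H] = E[X|H].
assert cond_exp(E_X_G, H_blocks) == cond_exp(X, H_blocks)

# Linearity: E[2X + 5Y | G] = 2 E[X|G] + 5 E[Y|G].
lin = cond_exp({w: 2 * X[w] + 5 * Y[w] for w in omega}, G_blocks)
assert lin == {w: 2 * E_X_G[w] + 5 * E_Y_G[w] for w in omega}

# Pull-out: E[Z X | G] = Z E[X|G].
ZX = {w: Z[w] * X[w] for w in omega}
assert cond_exp(ZX, G_blocks) == {w: Z[w] * E_X_G[w] for w in omega}

# Conditional Jensen with phi(x) = x^2: phi(E[X|G]) <= E[phi(X) | G].
E_X2_G = cond_exp({w: X[w] ** 2 for w in omega}, G_blocks)
assert all(E_X_G[w] ** 2 <= E_X2_G[w] for w in omega)

print("tower, linearity, pull-out and Jensen all hold exactly on this example")
```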

Why "Density" Is Not Just a PDF on R

A common source of confusion: students think "density" always means a function that integrates to 1 with respect to Lebesgue measure. But a density is a Radon-Nikodym derivative, and the reference measure can be anything:

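- PDF on $\mathbb R^d$: $f = dP/d\lambda$, where $\lambda$ is Lebesgue measure

- PMF on $\mathbb Z$: $p = dP/d\kappa$, where $\kappa$ is counting measure. The PMF is the Radon-Nikodym derivative of $P$ with respect to counting measure, since $P(A) = \sum_{k \in A} p(k) = \int_A p\,d\kappa$

- Likelihood ratio: $dP_1/dP_0$ is a density of one probability measure with respect to another

- Change of variables: if $Y = g(X)$ for a diffeomorphism $g$, the density of $Y$ with respect to Lebesgue measure involves the Jacobian, $f_Y(y) = f_X\big(g^{-1}(y)\big)\,\lvert\det Dg^{-1}(y)\rvert$, but this is just the chain rule for Radon-Nikodym derivatives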

The unified view: a "density" is always $d\nu/d\mu$ for some pair of measures. For $\sigma$-finite $\mu$ and $\nu$, the Radon-Nikodym theorem says this exists if and only if $\nu \ll \mu$.

| Quantity | Reference measure | Radon-Nikodym derivative | What it means |
|---|---|---|---|
| PDF on $\mathbb R^d$ | Lebesgue measure $\lambda$ | $f = dP/d\lambda$ | ordinary continuous density |
| PMF on a countable set | counting measure $\kappa$ | $p = dP/d\kappa$ | discrete mass function |
| Likelihood ratio | baseline model $P_0$ | $dP_1/dP_0$ | how one model reweights another |
| Conditional expectation | $P$ restricted to $\mathcal G$ | $E[X\mid\mathcal G] = d\nu/dP$ on $\mathcal G$, where $\nu(G) = \int_G X\,dP$ | the best $\mathcal G$-measurable average of $X$ |
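The change-of-variables bullet can be checked numerically. Assuming, for illustration, $X \sim \mathcal N(0, 1)$ and $Y = e^X$, the distribution of $Y$ has Lebesgue density $f_Y(y) = \varphi(\log y)\cdot\frac1y$, where the factor $1/y$ is the Jacobian of the inverse map $y \mapsto \log y$. The sketch compares $\int_a^b f_Y\,dy$ (midpoint rule) with $P(a \le Y \le b) = \Phi(\log b) - \Phi(\log a)$ for an arbitrary interval; helper names are illustrative.

```python
import math

def std_normal_pdf(x):
    """Density of N(0, 1) with respect to Lebesgue measure."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def std_normal_cdf(x):
    """CDF of N(0, 1) via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def density_of_exp_x(y):
    """Lebesgue density of Y = exp(X), X ~ N(0,1): f_X(log y) * |d(log y)/dy|."""
    return std_normal_pdf(math.log(y)) / y

def midpoint_integral(f, a, b, n=20_000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))

a, b = 0.5, 3.0
direct = std_normal_cdf(math.log(b)) - std_normal_cdf(math.log(a))  # P(a <= Y <= b)
via_density = midpoint_integral(density_of_exp_x, a, b)             # ∫_a^b f_Y dλ
print(f"{direct:.8f} {via_density:.8f}")
```

Dropping the $1/y$ factor makes the two numbers disagree, which is the Jacobian term doing its job.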

Canonical Examples

Gaussian likelihood ratio

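Let $P_0 = \mathcal N(\mu_0, \sigma^2)$ and $P_1 = \mathcal N(\mu_1, \sigma^2)$. Both have strictly positive densities on all of $\mathbb R$, so each assigns zero probability exactly to the Lebesgue-null sets; hence they are mutually absolutely continuous ($P_1 \ll P_0$ and $P_0 \ll P_1$). The likelihood ratio is:

$$\frac{dP_1}{dP_0}(x) = \frac{\exp\!\big(-(x - \mu_1)^2/(2\sigma^2)\big)}{\exp\!\big(-(x - \mu_0)^2/(2\sigma^2)\big)} = \exp\!\left(\frac{(\mu_1 - \mu_0)\,x}{\sigma^2} - \frac{\mu_1^2 - \mu_0^2}{2\sigma^2}\right).$$

This ratio is a sufficient statistic for the pair $\{P_0, P_1\}$, and by the Neyman-Pearson lemma, rejecting when it exceeds a threshold gives the most powerful test of $H_0: \mu = \mu_0$ versus $H_1: \mu = \mu_1$. In importance sampling, if you draw $X_1, \dots, X_n \sim P_0$ and want $E_{P_1}[h(X)]$, you compute $\frac1n \sum_{i=1}^n h(X_i)\,\frac{dP_1}{dP_0}(X_i)$.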
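The importance-sampling recipe for this Gaussian pair can be sketched directly. Assuming, for illustration, $P_0 = \mathcal N(\mu_0, \sigma^2)$ and $P_1 = \mathcal N(\mu_1, \sigma^2)$ with $\mu_0 = 0$, $\mu_1 = 1$, $\sigma = 1$, and $h(x) = x$ (so the target $E_{P_1}[h(X)]$ equals $\mu_1 = 1$), the code draws only from $P_0$ and reweights each draw by the closed-form likelihood ratio $dP_1/dP_0$. Seed and sample size are arbitrary.

```python
import math
import random

def likelihood_ratio(x, mu0, mu1, sigma):
    """dP1/dP0 at x for P0 = N(mu0, sigma^2) and P1 = N(mu1, sigma^2)."""
    return math.exp((mu1 - mu0) * x / sigma**2 - (mu1**2 - mu0**2) / (2 * sigma**2))

def importance_estimate(h, mu0, mu1, sigma, n=200_000, seed=1):
    """Estimate E_{P1}[h(X)] using only samples from P0, reweighted by dP1/dP0."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        x = rng.gauss(mu0, sigma)
        total += h(x) * likelihood_ratio(x, mu0, mu1, sigma)
    return total / n

mu0, mu1, sigma = 0.0, 1.0, 1.0
est = importance_estimate(lambda x: x, mu0, mu1, sigma)  # target: E_{P1}[X] = mu1 = 1
print(f"importance-sampling estimate of E_P1[X]: {est:.4f}")
```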

Conditional expectation of a Gaussian given a linear observation

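Let $(X, Y)$ be jointly Gaussian with $E[X] = \mu_X$, $E[Y] = \mu_Y$, $\operatorname{Var}(X) = \sigma_X^2$, $\operatorname{Var}(Y) = \sigma_Y^2 > 0$, and $\operatorname{Corr}(X, Y) = \rho$. Then:

$$E[X\mid Y] = \mu_X + \rho\,\frac{\sigma_X}{\sigma_Y}\,(Y - \mu_Y).$$

This is an affine function of $Y$. The conditional variance is $\operatorname{Var}(X\mid Y) = \sigma_X^2(1 - \rho^2)$, which does not depend on $Y$. For jointly Gaussian pairs, the conditional expectation is always linear (affine) in the conditioning variable, and the conditional variance is always constant. This is the foundation of linear regression.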
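A simulation can check both claims. A minimal sketch, assuming the illustrative parameters $\mu_X = 1$, $\mu_Y = -2$, $\sigma_X = 2$, $\sigma_Y = 0.5$, $\rho = 0.6$: it builds $(X, Y)$ from two independent standard normals, then keeps the draws whose $Y$ lands in a narrow window around each of two values $y_0$ (a crude stand-in for conditioning on $Y = y_0$). In each window the sample mean of $X$ should track $\mu_X + \rho\frac{\sigma_X}{\sigma_Y}(y_0 - \mu_Y)$, and the sample variance should stay near $\sigma_X^2(1 - \rho^2) = 2.56$ in both windows.

```python
import math
import random

def conditional_mean(y, mu_x, mu_y, sd_x, sd_y, rho):
    """E[X | Y = y] for a bivariate Gaussian: affine in y."""
    return mu_x + rho * sd_x / sd_y * (y - mu_y)

def simulate(n, mu_x, mu_y, sd_x, sd_y, rho, seed=2):
    """Draw n pairs (X, Y) with the given means, sds and correlation,
    built from two independent standard normals z1, z2."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        z1, z2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        y = mu_y + sd_y * z1
        x = mu_x + sd_x * (rho * z1 + math.sqrt(1.0 - rho * rho) * z2)
        pairs.append((x, y))
    return pairs

params = dict(mu_x=1.0, mu_y=-2.0, sd_x=2.0, sd_y=0.5, rho=0.6)
pairs = simulate(200_000, **params)
theory_var = params["sd_x"] ** 2 * (1 - params["rho"] ** 2)

def bin_stats(y0, half_width=0.025):
    """Sample mean and variance of X among draws with |Y - y0| < half_width."""
    xs = [x for x, y in pairs if abs(y - y0) < half_width]
    m = sum(xs) / len(xs)
    v = sum((x - m) ** 2 for x in xs) / len(xs)
    return m, v

for y0 in (-2.25, -1.75):
    m, v = bin_stats(y0)
    print(f"y0={y0}: mean {m:.3f} (formula {conditional_mean(y0, **params):.3f}), "
          f"var {v:.3f} (theory {theory_var:.3f})")
```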

Common Confusions

Density is not an intrinsic property of a distribution

The density depends on the reference measure. The standard normal has density $\varphi(x) = \frac{1}{\sqrt{2\pi}}e^{-x^2/2}$ with respect to Lebesgue measure, but density $1$ with respect to itself. A Bernoulli distribution has no density with respect to Lebesgue measure (it is singular), but has a perfectly good density (its PMF) with respect to counting measure. The question "what is the density?" is incomplete without specifying "with respect to what?"

Conditional expectation is a random variable, not a number

$E[X\mid Y]$ is a function of $Y$, hence a random variable. Only when you evaluate it at a specific value $Y = y$ do you get a number $E[X\mid Y = y]$. A common mistake is to treat $E[X\mid\mathcal G]$ as if it were a fixed number. It is not: it depends on the outcome $\omega$ through the information in $\mathcal G$.

Tower property requires the inclusion G contains H

The tower property requires $\mathcal H \subseteq \mathcal G$: the outer conditioning must be on coarser information. If $\mathcal G$ and $\mathcal H$ are not nested, $E\big[E[X\mid\mathcal G]\,\big|\,\mathcal H\big]$ need not equal $E[X\mid\mathcal H]$. A common error is to apply the property when the nesting condition fails.

Summary

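- Absolute continuity $\nu \ll \mu$ means $\nu$ cannot assign positive mass where $\mu$ assigns zero

- Radon-Nikodym: if $\nu \ll \mu$ and both are $\sigma$-finite, then $\nu(A) = \int_A \frac{d\nu}{d\mu}\,d\mu$ for every measurable $A$

- A "density" is always a Radon-Nikodym derivative with respect to some reference measure

- Conditional expectation $E[X\mid\mathcal G]$ is defined as the Radon-Nikodym derivative of $G \mapsto \int_G X\,dP$ with respect to $P$ on $\mathcal G$

- Tower property: $E\big[E[X\mid\mathcal G]\,\big|\,\mathcal H\big] = E[X\mid\mathcal H]$ when $\mathcal H \subseteq \mathcal G$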

- Likelihood ratios, importance sampling weights, and KL divergence are all functions of Radon-Nikodym derivatives

- Conditional expectation is a random variable (function of the conditioning information), not a fixed number

Exercises

Problem

Let and . Compute the Radon-Nikodym derivative and verify that .

Problem

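Use the tower property to prove that if $\hat\theta$ is an unbiased estimator of $\theta$ (i.e., $E_\theta[\hat\theta] = \theta$ for all $\theta$) and $T$ is a sufficient statistic, then $\tilde\theta = E[\hat\theta\mid T]$ is also unbiased and has variance at most that of $\hat\theta$ (Rao-Blackwell theorem).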

Problem

Give an example where $P \ll Q$ but the importance sampling estimator of $E_P[h(X)]$ with $X_1, \dots, X_n \sim Q$ has infinite variance, even though $E_P[h(X)]$ is finite. What property of $Q$ causes this, and what does it imply for practical importance sampling?


Next Topics

Building on the Radon-Nikodym theorem:

- Maximum likelihood estimation: the likelihood function is a Radon-Nikodym derivative

- Importance sampling: reweighting by $dP/dQ$ to estimate expectations under $P$ using samples from $Q$

- Concentration inequalities: the first application of measure-theoretic tools to bounding tail probabilities

Last reviewed: April 23, 2026
