ML Methods

Recurrent Neural Networks

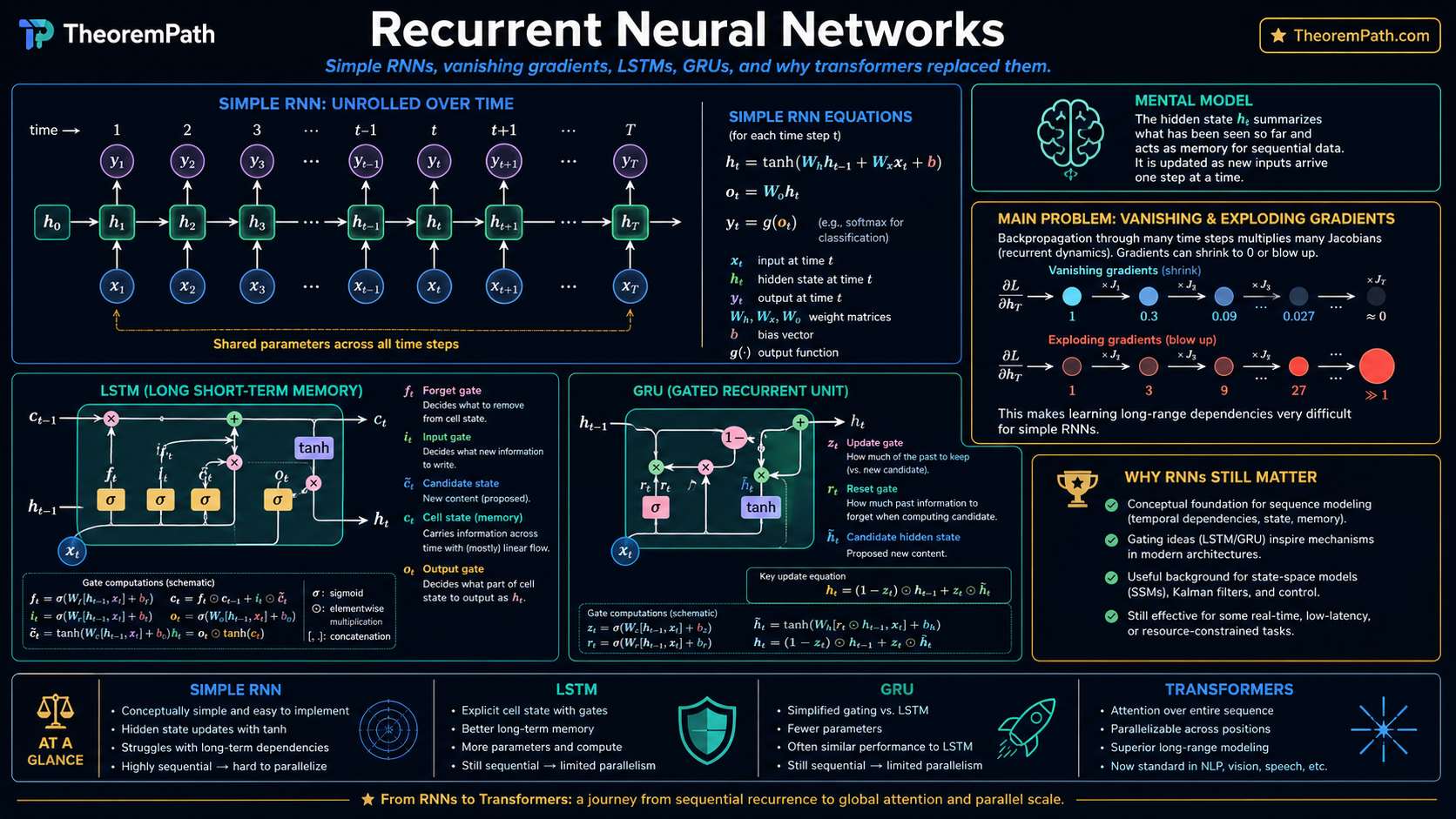

Sequential processing via hidden state recurrence: the simple RNN, vanishing and exploding gradients, LSTM gating mechanisms, and why transformers have largely replaced RNNs.

Prerequisites

Why This Matters

RNNs are the conceptual foundation for sequence modeling in deep learning. Even though transformers have largely replaced them in practice, understanding RNNs is essential because: (1) the vanishing gradient problem they suffer from motivates the design of LSTMs, GRUs, and ultimately attention mechanisms; (2) gating mechanisms invented for LSTMs appear throughout modern architectures; (3) RNN-like state machines are resurgent in efficient inference (state space models, linear attention).

Hide overviewShow overview

Mental Model

A feedforward network processes each input independently. An RNN maintains a hidden state that summarizes everything seen so far. At each time step, the new hidden state is a function of the previous state and the current input. This is a learned dynamical system. The hidden state is the systems memory.

The Simple RNN

Simple (Elman) RNN

The simple RNN update rule is:

where is the hidden state, is the input at time , is the recurrent weight matrix, is the input weight matrix, and is the bias. The output at each step is typically .

The same parameters are shared across all time steps. this is weight sharing over time, analogous to weight sharing over space in CNNs.

Backpropagation Through Time (BPTT)

Training an RNN requires computing gradients through the entire sequence. Unrolling the recurrence for steps produces a very deep feedforward network with shared weights. The gradient of the loss at time with respect to the hidden state at time involves a product of Jacobians:

where . This product of matrices is the source of both vanishing and exploding gradients.

The Vanishing/Exploding Gradient Problem

Gradient Scaling via Eigenvalues of W_h

Statement

For the simple RNN, the norm of the gradient scales approximately as (ignoring the tanh derivative, which is bounded by 1). Specifically:

- If the largest singular value , gradients vanish exponentially: as

- If , gradients can explode exponentially

With the tanh derivative included (which is in ), vanishing is the dominant failure mode.

Intuition

Multiplying a matrix by itself many times amplifies its dominant eigenvalue. If that eigenvalue is less than 1, repeated multiplication drives the product to zero. If greater than 1, it explodes. Since , the tanh activation only makes vanishing worse, never better.

Proof Sketch

Bound since . If (as an operator norm), this bound decays exponentially with .

Why It Matters

Vanishing gradients mean the RNN cannot learn long-range dependencies: the gradient signal from a target at time cannot reach the hidden state at time . This is the fundamental limitation that motivated LSTMs, GRUs, and eventually transformers.

Failure Mode

This analysis uses worst-case norm bounds. In practice, gradients can be well-behaved even when if the network operates in a regime where the effective Jacobian spectral radius stays near 1 (the "edge of chaos"). But simple RNNs are fragile. slight changes push them into vanishing or exploding regimes.

Gradient clipping is a common remedy for exploding gradients: if , rescale . This prevents catastrophic parameter updates but does not solve vanishing gradients.

LSTM: Long Short-Term Memory

LSTM Cell

The LSTM introduces a separate cell state that acts as an information highway, controlled by three gates:

Forget gate (what to erase from cell state):

Input gate (what new information to write):

Cell state update (highway with gated read/write):

Output gate (what to expose as hidden state):

Here is the sigmoid function and is element-wise multiplication. All gates output values in .

The cell state is the critical innovation. When and , the cell state passes through unchanged. gradients flow backward without decay. This is the "highway" that solves vanishing gradients: the gradient through the cell state is , and when forget gates are near 1, this is approximately the identity.

GRU: Gated Recurrent Unit

GRU Cell

The GRU simplifies the LSTM by merging the cell state and hidden state, using two gates instead of three:

Reset gate:

Update gate:

Candidate state:

Hidden state update:

The update gate interpolates between keeping the old state and adopting the new candidate. It simultaneously plays the roles of the LSTM forget and input gates.

GRUs have fewer parameters than LSTMs ( vs. for recurrent weights) and often perform comparably. Neither consistently outperforms the other across all tasks.

Why Transformers Replaced RNNs

RNNs process sequences step by step. The computation at time depends on time . This creates two problems:

- Sequential bottleneck: cannot parallelize across time steps during training. Transformers process all positions simultaneously.

- Long-range dependencies: even LSTMs struggle with very long sequences (hundreds to thousands of steps). Transformers connect every position to every other via self-attention with path length.

However, RNNs retain advantages for online/streaming settings and when memory is limited (constant state size vs. growing KV cache). State space models (Mamba, etc.) combine RNN-like recurrence with transformer-like performance.

Canonical Examples

Simple RNN language model

Given a vocabulary of words, embed each word as , update , and predict the next word via . Parameters: , , , plus embeddings in . Total parameters.

LSTM forget gate initialization

A practical trick: initialize the forget gate bias to a positive value (e.g., 1 or 2). This makes at initialization, allowing information to flow through the cell state from the start. Without this, the network may learn to forget everything before it learns what to remember.

Common Confusions

RNN hidden state is not memory in the human sense

The hidden state is a fixed-size vector that must compress the entire history into dimensions. It is not a content-addressable memory. it cannot selectively recall specific past inputs. This fundamental bottleneck is what attention mechanisms solve by allowing the model to look back at all previous hidden states.

Vanishing gradients do not mean zero gradients

Vanishing gradients mean that the gradient contribution from distant time steps becomes negligible compared to recent ones. The total gradient is not zero. It is dominated by short-range dependencies. The RNN can still learn, but it effectively ignores long-range patterns.

Summary

- Simple RNN: . same weights at every time step

- Gradient scales as : vanishing if , exploding if

- LSTM adds a cell state highway with forget/input/output gates to preserve long-range gradient flow

- GRU simplifies LSTM to two gates (reset and update) with comparable performance

- Transformers replaced RNNs due to parallelism and superior long-range modeling, but RNN-like models are resurging via state space models

Exercises

Problem

An LSTM has hidden dimension . How many parameters are in the recurrent weight matrices (ignore biases and input weights)? Compare to a simple RNN with the same hidden dimension.

Problem

Show that when all forget gates and all input gates , the LSTM cell state for all . What does this imply about the gradient ?

References

Canonical:

- Hochreiter & Schmidhuber, "Long Short-Term Memory" (1997)

- Cho et al., "Learning Phrase Representations using RNN Encoder-Decoder" (2014)

- Goodfellow, Bengio, Courville, Deep Learning (2016), Chapter 10

Current:

- Gu & Dao, "Mamba: Linear-Time Sequence Modeling with Selective State Spaces" (2023)

Next Topics

The natural next steps from RNNs:

- Transformers: the attention-based architecture that replaced RNNs

- Attention mechanisms: the key innovation enabling long-range dependencies

Last reviewed: April 13, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

3- Feedforward Networks and Backpropagationlayer 2 · tier 1

- Convolutional Neural Networkslayer 3 · tier 2

- Macroeconomic Time-Series Forecastinglayer 4 · tier 3

Derived topics

9- Attention Mechanisms Historylayer 3 · tier 2

- Deep Learning for Time Serieslayer 3 · tier 2

- Speech and Audio MLlayer 3 · tier 2

- Forgetting Transformer (FoX)layer 4 · tier 2

- Mamba and State-Space Modelslayer 4 · tier 2

+4 more on the derived-topics page.