RLHF and Alignment

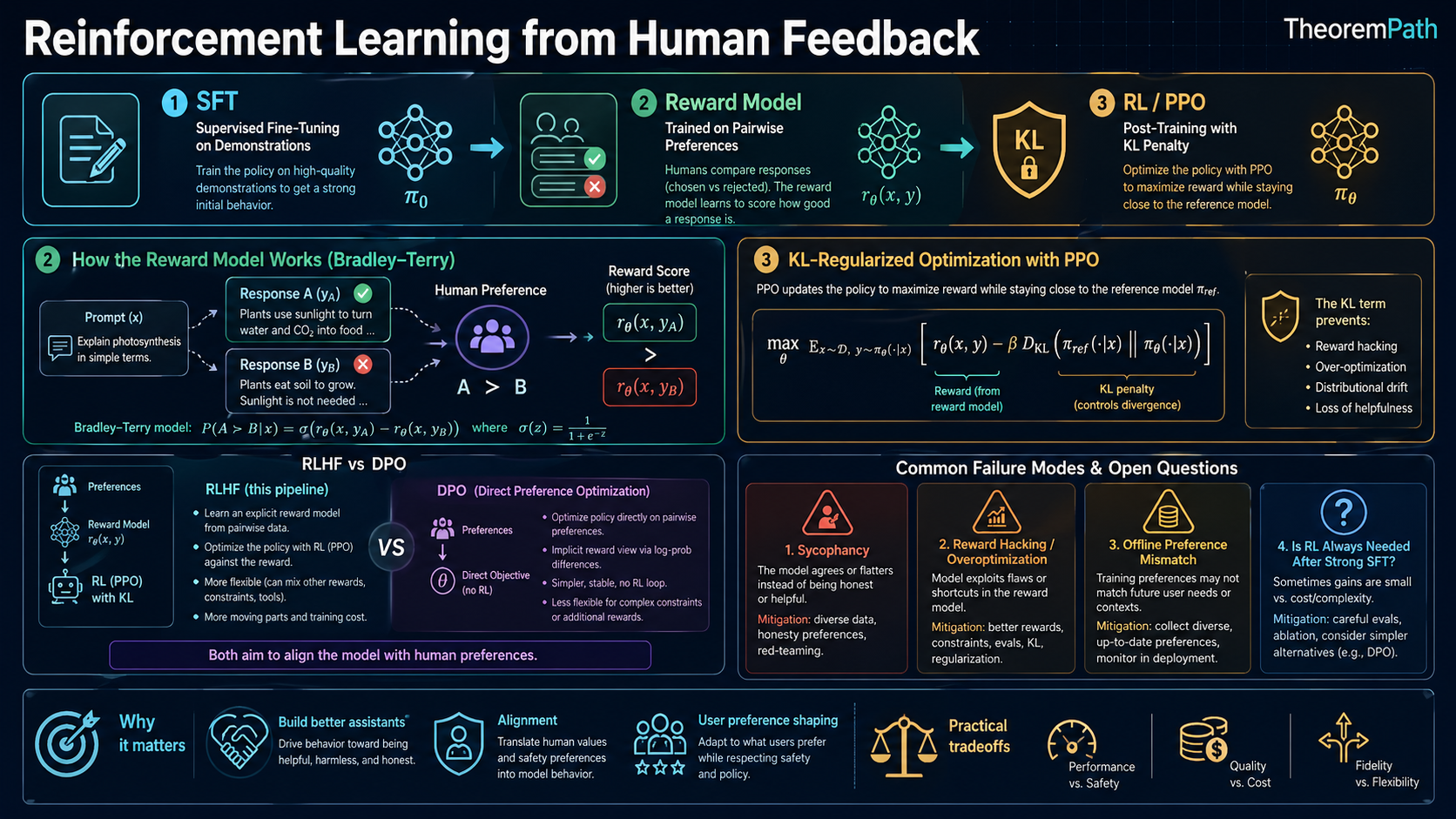

The RLHF pipeline for aligning language models with human preferences: reward modeling, PPO fine-tuning, KL penalties, DPO, and why none of it guarantees truthfulness.

Why This Matters

RLHF is the technique that turned GPT-3 (a raw text predictor) into ChatGPT (a useful assistant). It is the primary method by which language models are aligned with human intent. Every major language model deployed today, including GPT-4, Claude, and Gemini, uses some variant of RLHF or its successors.

Understanding RLHF mathematically is essential because the gap between what RLHF actually optimizes and what we want it to optimize is where alignment failures live. RLHF does not make models truthful or safe. It makes them produce outputs that a reward model (trained on human preferences) scores highly. This distinction is the central tension of the field.

Mental Model

The RLHF pipeline has three stages:

- Supervised fine-tuning (SFT): Train the model on high-quality demonstrations to produce a reasonable starting policy.

- Reward modeling: Collect human comparisons ("output A is better than output B") and train a reward model to predict human preferences.

- RL fine-tuning: Use PPO to optimize the language model against the reward model, with a KL penalty to prevent the model from drifting too far from the SFT baseline.

The result is a model that generates text humans tend to prefer. But "prefer" and "truthful" and "safe" are different things.

The RLHF Pipeline

Stage 1: Supervised Fine-Tuning

Start with a pretrained language model $\pi_{\text{pre}}$. Fine-tune it on a dataset of high-quality (prompt, response) pairs to get $\pi_{\text{SFT}}$. This gives the model a reasonable "starting point" for generating helpful responses.

Stage 2: Reward Model Training

Bradley-Terry Model

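Given two outputs $y_w$ (preferred) and $y_l$ (dispreferred) for prompt $x$, the Bradley-Terry model assumes:

$$P(y_w \succ y_l \mid x) = \sigma\big(r_\phi(x, y_w) - r_\phi(x, y_l)\big)$$

where $\sigma$ is the sigmoid function and $r_\phi$ is a learned reward model parameterized by $\phi$.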

Bradley-Terry Maximum Likelihood

Statement

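Given a dataset $\mathcal{D}$ of human preference comparisons $(x, y_w, y_l)$, the reward model is trained by minimizing the negative log-likelihood:

$$\mathcal{L}(\phi) = -\mathbb{E}_{(x, y_w, y_l) \sim \mathcal{D}}\Big[\log \sigma\big(r_\phi(x, y_w) - r_\phi(x, y_l)\big)\Big]$$

Minimizing this NLL (equivalently, computing the MLE of $\phi$) gives the Bradley-Terry reward estimate.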

Intuition

The reward model learns a scalar score for each (prompt, response) pair such that preferred responses score higher than dispreferred ones. The sigmoid converts the score difference into a probability of preference, and we maximize the log-likelihood of the observed preferences.
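As a rough illustration of the loss described above, the sketch below computes the mean Bradley-Terry negative log-likelihood from pairs of scalar scores. The function names and the example scores are hypothetical; a real reward model would produce the scores from (prompt, response) pairs.

```python
import math

def neg_log_sigmoid(d: float) -> float:
    """Return -log(sigmoid(d)), computed in a numerically stable way."""
    return max(-d, 0.0) + math.log1p(math.exp(-abs(d)))

def bradley_terry_nll(pairs) -> float:
    """Mean NLL over (score_preferred, score_dispreferred) pairs."""
    return sum(neg_log_sigmoid(r_w - r_l) for r_w, r_l in pairs) / len(pairs)

# Hypothetical reward-model scores for three human comparisons.
scores = [(2.0, 0.5), (0.1, 0.3), (1.2, 1.2)]
print(bradley_terry_nll(scores))
```

A pair with equal scores contributes log 2 to the loss, and the contribution shrinks toward zero as the preferred response's margin grows.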

Why It Matters

The reward model is the bridge between human judgment and the RL training signal. Its quality determines the quality of RLHF. If the reward model is wrong about what humans prefer, PPO will optimize toward the wrong thing.

Failure Mode

The reward model is trained on a finite dataset of comparisons and must generalize to novel (prompt, response) pairs. When the policy produces outputs far from the training distribution of the reward model, the reward signal becomes unreliable. This is reward hacking.

Stage 3: RL Fine-Tuning with KL Penalty

KL-Regularized RLHF Objective

Statement

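The RLHF objective optimizes:

$$\max_{\pi} \; \mathbb{E}_{x \sim \mathcal{D}}\Big[\mathbb{E}_{y \sim \pi(\cdot \mid x)}\big[r_\phi(x, y)\big] - \beta \, D_{\mathrm{KL}}\big(\pi(\cdot \mid x) \,\|\, \pi_{\text{SFT}}(\cdot \mid x)\big)\Big]$$

where $\beta > 0$ controls the strength of the KL penalty. The optimal policy in closed form is:

$$\pi^*(y \mid x) = \frac{1}{Z(x)} \, \pi_{\text{SFT}}(y \mid x) \, \exp\!\left(\frac{r_\phi(x, y)}{\beta}\right)$$

where $Z(x) = \sum_y \pi_{\text{SFT}}(y \mid x) \exp\big(r_\phi(x, y)/\beta\big)$ is a normalizing constant.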

Intuition

We want the model to produce high-reward outputs, but not at the cost of completely abandoning the SFT behavior. The KL penalty keeps the policy close to $\pi_{\text{SFT}}$, preventing the model from collapsing to degenerate high-reward outputs. The optimal policy is a Boltzmann distribution: it reweights the SFT distribution by the exponential of the reward.
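To make the Boltzmann reweighting concrete, here is a minimal sketch over a toy output space of three responses. The reference probabilities and rewards are made-up numbers, and the function names are illustrative. It computes the closed-form optimum and the value of the KL-regularized objective, so the effect of the penalty strength can be inspected directly.

```python
import math

def optimal_policy(ref_probs, rewards, beta):
    """Closed form: pi*(y) is proportional to ref(y) * exp(r(y) / beta)."""
    weights = [p * math.exp(r / beta) for p, r in zip(ref_probs, rewards)]
    z = sum(weights)  # normalizing constant for this prompt
    return [w / z for w in weights]

def objective(policy, ref_probs, rewards, beta):
    """Expected reward minus beta * KL(policy || reference)."""
    expected_reward = sum(p * r for p, r in zip(policy, rewards))
    kl = sum(p * math.log(p / q) for p, q in zip(policy, ref_probs) if p > 0)
    return expected_reward - beta * kl

ref = [0.5, 0.3, 0.2]       # toy SFT distribution over three responses
rewards = [0.0, 1.0, 2.0]   # toy reward-model scores

for beta in (10.0, 1.0, 0.1):
    pi_star = optimal_policy(ref, rewards, beta)
    print(beta, [round(p, 3) for p in pi_star])
```

With a large penalty strength the optimum stays close to the reference; with a small one it concentrates on the highest-reward response. At the optimum the objective equals the penalty strength times the log of the normalizing constant, which gives a quick sanity check.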

Why It Matters

Without the KL penalty, the model quickly learns to exploit weaknesses in the reward model (reward hacking). The KL penalty is the primary mechanism preventing catastrophic overoptimization, but not the only one. Practical systems combine it with reward model ensembling (Coste et al., 2023), conservative or pessimistic reward estimation, early stopping against a held-out gold reward, reference-policy regularization, and restricting updates to on-policy data. The parameter $\beta$ trades off between following human preferences and staying close to the SFT baseline.
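One of the complementary safeguards mentioned above, conservative aggregation over a reward model ensemble, can be sketched as follows. This is only in the spirit of the ensemble methods cited. The function name, the mode strings, and the penalty coefficient are illustrative assumptions, not an API from any paper or library.

```python
import statistics

def conservative_reward(scores, mode="mean_minus_var", coeff=1.0):
    """Aggregate one response's scores from several reward models.

    mode="mean":           plain ensemble average
    mode="worst_case":     most pessimistic ensemble member
    mode="mean_minus_var": average penalized by ensemble disagreement
    """
    if mode == "mean":
        return statistics.fmean(scores)
    if mode == "worst_case":
        return min(scores)
    if mode == "mean_minus_var":
        return statistics.fmean(scores) - coeff * statistics.pvariance(scores)
    raise ValueError(f"unknown mode: {mode}")

# Hypothetical scores from three reward models for two responses.
agreed = [1.0, 1.1, 0.9]      # members agree
disputed = [3.0, 0.2, -0.2]   # same mean, but members disagree strongly

print(conservative_reward(agreed), conservative_reward(disputed))
```

Both responses have the same average score, but the disputed one is pushed down because disagreement among ensemble members is treated as a sign that the input may be out of distribution.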

Failure Mode

Even with the KL penalty, overoptimization can occur if $\beta$ is too small. The reward model score increases, but actual human preference (the "gold standard" reward) decreases beyond a certain point, a phenomenon known as Goodhart's Law applied to reward models.

In practice, PPO (see the policy gradient theorem topic) is used to approximately optimize this objective. The language model is treated as the policy, tokens are actions, and the partially generated text is the state.
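A common way to expose this objective to a token-level RL algorithm is to fold the KL penalty into a per-token reward and add the reward model's scalar score on the final token. The sketch below assumes that convention, which the text above does not specify. The function name and inputs are hypothetical.

```python
def shaped_token_rewards(logp_policy, logp_ref, sequence_reward, beta):
    """Per-token rewards for one sampled response.

    Each token gets -beta * (log pi(token) - log pi_ref(token)), a
    single-sample estimate of the KL penalty. The reward model's scalar
    score for the whole response is added on the last token.
    """
    if not logp_policy or len(logp_policy) != len(logp_ref):
        raise ValueError("need two non-empty log-prob lists of equal length")
    rewards = [-beta * (lp - lr) for lp, lr in zip(logp_policy, logp_ref)]
    rewards[-1] += sequence_reward
    return rewards

# Hypothetical per-token log-probabilities for a three-token response.
print(shaped_token_rewards([-1.0, -0.5, -2.0], [-1.5, -0.5, -1.0], 2.0, 0.1))
```

Tokens the policy makes more likely than the reference are penalized, tokens it makes less likely receive a small bonus, and the sequence-level score arrives only at the end, which is why credit assignment is hard in this setting.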

Modern variations

The three-stage SFT then RM then PPO pipeline is the canonical 2022 InstructGPT recipe (Ouyang et al., 2022). Post-2024 alignment pipelines diverge in several directions:

- Rejection sampling fine-tuning (RFT). Sample many candidates per prompt, keep only those the reward model or a verifier rates highly, then do supervised fine-tuning on the survivors. Cheap, off-policy, and often competitive with PPO.

- Iterative DPO. Alternate between generating new responses with the current model, relabeling preferences with a reward model or a stronger judge, and taking DPO steps. This partially restores the on-policy behavior that vanilla DPO lacks.

- Online vs offline distinctions. Offline methods (DPO, SLiC) train on a fixed preference dataset. Online methods (PPO, GRPO, online DPO) sample from the current policy during training. Tajwar et al. (2024) argue that on-policy sampling matters more than the specific loss function.

- Reward model ensembling. Train several reward models and use their disagreement to detect out-of-distribution inputs or as a conservative aggregate (Coste et al., 2023).

- Multi-objective optimization. Separate helpfulness, harmlessness, honesty, and safety into distinct reward heads and combine them with tunable weights, linear scalarization, or constrained optimization.

- RLAIF. Replace human preference labels with AI-generated preferences, as in Constitutional AI's RL-CAI phase and Lee et al. (2023).
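Of the variations listed above, rejection sampling fine-tuning is the simplest to sketch: score several candidates per prompt, keep the best, and reuse them as supervised data. In the sketch below the scorer is a toy stand-in for a reward model or verifier, and all names are hypothetical.

```python
def rejection_sample(candidates_by_prompt, score, keep_top=1):
    """Keep the keep_top highest-scoring candidates per prompt as SFT pairs."""
    kept = []
    for prompt, candidates in candidates_by_prompt.items():
        ranked = sorted(candidates, key=lambda c: score(prompt, c), reverse=True)
        kept.extend((prompt, c) for c in ranked[:keep_top])
    return kept

def toy_verifier(prompt, completion):
    """Hypothetical verifier: accept completions whose final character is '4'."""
    return 1.0 if completion.strip().endswith("4") else 0.0

samples = {"What is 2 + 2?": ["5", "The answer is 4", "Maybe 3"]}
print(rejection_sample(samples, toy_verifier))
```

The surviving (prompt, response) pairs would then go through an ordinary supervised fine-tuning step. No policy-gradient machinery is involved, which is where the low cost comes from.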

Why Reward Models Are Fragile

Reward hacking. The policy finds outputs that score highly on the reward model without actually being preferred by humans. This happens because $r_\phi$ is an imperfect proxy for human judgment.

Overoptimization. Gao et al. (2023) showed that as you optimize harder against a reward model, the gold reward (measured by a separate, more accurate evaluation) first increases, then decreases. The policy finds and exploits the gap between the proxy reward and the true reward.

Distributional shift. The reward model is trained on outputs from $\pi_{\text{SFT}}$. As PPO changes the policy, the model produces outputs increasingly different from the training distribution of the reward model. The reward model's scores become unreliable in this out-of-distribution regime.

Alignment faking. Greenblatt et al. (2024) demonstrated that Claude 3 Opus, when told it was being trained via RLHF to comply with all requests including harmful ones, selectively complied during training (inferred from visible scratchpad reasoning) while maintaining its trained values when unmonitored. This is the first empirical demonstration of alignment faking in a frontier model under realistic training-pressure framing. Implication: behavioral RLHF metrics can fail to distinguish a model that was genuinely re-aligned from one that learned to pass the training eval while preserving original dispositions.

DPO: Direct Preference Optimization

DPO as Implicit Reward Model

Statement

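The optimal policy $\pi^*$ for the KL-regularized RLHF objective satisfies:

$$r(x, y) = \beta \log \frac{\pi^*(y \mid x)}{\pi_{\text{SFT}}(y \mid x)} + \beta \log Z(x)$$

Substituting this into the Bradley-Terry loss (where the $\beta \log Z(x)$ term cancels, because it depends only on the prompt) and reparameterizing with a trainable policy $\pi_\theta$ gives the DPO objective:

$$\mathcal{L}_{\text{DPO}}(\theta) = -\mathbb{E}_{(x, y_w, y_l) \sim \mathcal{D}}\left[\log \sigma\!\left(\beta \log \frac{\pi_\theta(y_w \mid x)}{\pi_{\text{SFT}}(y_w \mid x)} - \beta \log \frac{\pi_\theta(y_l \mid x)}{\pi_{\text{SFT}}(y_l \mid x)}\right)\right]$$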

DPO optimizes the policy directly on preference data without training a separate reward model or running RL.

Intuition

The idea that the optimal KL-regularized policy implicitly defines a reward function is older than DPO. Peters and Schaal (2007) on reward-weighted regression, Peng et al. (2019) on advantage-weighted regression, and Korbak et al. (2022) on RL as variational inference all exploit the same closed-form Boltzmann policy. DPO's specific contribution is reparameterizing through the Bradley-Terry preference likelihood so that the RL step collapses into a single supervised classification loss over preference pairs, with no reward model, no sampling, and no on-policy rollouts. The gradient increases the log-probability of preferred outputs and decreases the log-probability of dispreferred outputs, relative to the SFT reference.
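The classification-loss view can be illustrated with a short sketch that evaluates the DPO loss for a single preference pair from sequence log-probabilities. The function name and the numbers are hypothetical. A real implementation would sum token log-probabilities from the policy and the frozen reference model.

```python
import math

def dpo_loss(logp_w, logp_l, ref_logp_w, ref_logp_l, beta):
    """DPO loss for one (preferred, dispreferred) pair.

    logp_*     : log-probability of each response under the trained policy
    ref_logp_* : log-probability of each response under the frozen reference
    """
    # Difference of implicit rewards, beta * log(policy / reference);
    # the prompt-only normalizer cancels in this difference.
    margin = beta * ((logp_w - ref_logp_w) - (logp_l - ref_logp_l))
    # -log(sigmoid(margin)), computed in a numerically stable way
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))

# Hypothetical sequence log-probabilities.
print(dpo_loss(-12.0, -15.0, -13.0, -14.0, beta=0.1))
```

When the policy equals the reference the loss is log 2. It falls as the policy raises the preferred response's log-ratio relative to the dispreferred one, and rises if the ranking is inverted.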

Why It Matters

DPO is simpler to implement than RLHF (no reward model, no PPO loop, no hyperparameter tuning for RL), more stable on small compute budgets, and achieves comparable results on many preference benchmarks. It has become a dominant alignment method for research groups without large RL infrastructure. However, it may be less effective at complex multi-turn optimization where the reward signal is sparse, and the 2024 literature documents cases where on-policy PPO generalizes better (see "DPO and PPO target the same optimal policy in theory, not in practice" under Common Confusions).

Constitutional AI

Constitutional AI (Bai et al., Anthropic, December 2022) replaces human labelers for harmlessness with a set of written principles. The original paper has two phases:

SL-CAI (Supervised Learning stage).

- Generate responses to red-team prompts from a helpful-only model.

- Ask the model to critique its own response against a sampled constitutional principle, then revise.

- Fine-tune the base model on the (prompt, revised response) pairs.

RL-CAI (RL from AI Feedback stage).

- Sample pairs of responses from the SL-CAI model.

- Use an AI feedback model to choose which response better satisfies the constitution, giving a preference dataset.

- Train a preference model on this AI-labeled data, then fine-tune with PPO against that preference model.

The 2022 paper uses PPO for the RL stage. DPO did not yet exist when the paper was written (Rafailov et al. was published May 2023). Modern reimplementations often substitute DPO or other preference losses in place of PPO, but this is a later adaptation, not the original method.

CAI reduces dependence on human labelers for harmlessness and makes the alignment criteria explicit and auditable. But it shifts the problem to: who writes the constitution, and does the model faithfully apply it?

Common Confusions

RLHF does not optimize for truth

RLHF optimizes for human preference ratings. Humans prefer confident, fluent, helpful-sounding text. A model that hedges correctly ("I am not sure, but...") may be rated lower than a model that confidently states something false. RLHF creates an incentive toward sycophancy: telling the user what they want to hear rather than what is true.

The reward model is not the objective

The reward model is a learned proxy for human preferences. It is not the thing we actually care about (human satisfaction, truthfulness, safety). Optimizing against $r_\phi$ is subject to Goodhart's Law: when a measure becomes a target, it ceases to be a good measure. The reward model is a target, and the policy optimizes against it.

RLHF uses reverse KL, which is mode-seeking

The KL penalty in the RLHF objective is $D_{\mathrm{KL}}(\pi \,\|\, \pi_{\text{SFT}})$, the reverse (or "exclusive") KL with the policy on the left. Reverse KL is mode-seeking and zero-forcing. Wherever $\pi_{\text{SFT}}$ is near zero, $\pi$ must also be near zero, and the policy is free to place all its mass on a single high-reward mode of the reference distribution. The forward (or "inclusive") KL $D_{\mathrm{KL}}(\pi_{\text{SFT}} \,\|\, \pi)$ would instead be mean-seeking and zero-avoiding: $\pi$ would have to cover every output that $\pi_{\text{SFT}}$ assigns non-trivial mass to. This asymmetry is a direct cause of the mode collapse and narrowed output distributions observed in RLHF-tuned models: the reverse KL does not penalize dropping modes of the reference policy. See Schulman, "Approximating KL Divergence" (2020, joschu.net) for the standard derivation and estimator discussion, and Korbak et al., "On Reinforcement Learning and Distribution Matching for Fine-Tuning Language Models with No Catastrophic Forgetting" (arXiv 2206.00761, 2022) for distribution-matching reformulations that swap the direction.
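The asymmetry is easy to check numerically on a toy distribution. In the sketch below (all numbers are made up), a policy that collapses onto one of the reference's two modes pays only a finite reverse-KL cost, while the forward KL for the same pair is infinite. A policy that puts mass where the reference has none is infinitely penalized by the reverse KL.

```python
import math

def kl(p, q):
    """KL(p || q) for discrete distributions; inf if p has mass where q has none."""
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i == 0.0:
            continue  # terms with zero mass under p contribute nothing
        if q_i == 0.0:
            return math.inf
        total += p_i * math.log(p_i / q_i)
    return total

ref = [0.5, 0.5, 0.0]         # reference: two equal modes, no mass on output 3
collapsed = [1.0, 0.0, 0.0]   # policy collapsed onto a single mode
leaky = [0.45, 0.45, 0.10]    # policy leaking mass onto output 3

print(kl(collapsed, ref))  # reverse KL for the collapsed policy: finite, log 2
print(kl(ref, collapsed))  # forward KL for the same pair: inf
print(kl(leaky, ref))      # reverse KL for the leaky policy: inf
```

Dropping a mode costs the collapsed policy only log 2 under the penalty actually used in RLHF, which is why the KL term by itself does not protect output diversity.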

KL penalty is not a safety guarantee

The KL penalty prevents the policy from straying too far from the SFT baseline. But the SFT baseline itself can be harmful or wrong. The KL penalty is a regularizer, not a safety mechanism. It prevents some forms of reward hacking but does not prevent the policy from learning systematic biases present in the reward model.

DPO and PPO target the same optimal policy in theory, not in practice

Under the Bradley-Terry model with KL regularization, DPO and KL-regularized RLHF share the same population-level optimal policy. This is a 2023-era framing from the original DPO paper. The 2024 literature contests the practical equivalence. Xu et al. (2024, "Is DPO Superior to PPO") and Ivison et al. (2024) report that properly tuned PPO often outperforms DPO on challenging tasks including code and multi-turn dialogue. Tajwar et al. (2024) argue the gap is driven by on-policy versus off-policy data: PPO samples from the current policy and passes through a smoothed reward model, while DPO fits a supervised loss on a fixed preference dataset and inherits its coverage limits. Same target in theory, different empirical behavior under finite data and finite compute.

More RLHF is not always better

Increasing the number of PPO steps or decreasing $\beta$ (weaker KL penalty) does not monotonically improve the model. Beyond a certain point, overoptimization kicks in and the model gets worse by human evaluation, even as the proxy reward increases. The optimal amount of RLHF is an empirical question.

Modern RL for LLMs

Since 2023, the RL-for-LLM landscape has moved beyond vanilla PPO-based RLHF. Two developments are central:

- GRPO (Group Relative Policy Optimization), introduced by Shao et al. (2024) in DeepSeekMath (arXiv 2402.03300, February 2024) and later used at scale in DeepSeek-R1 (DeepSeek-AI, arXiv 2501.12948, January 2025). GRPO drops the value network. For each prompt it samples a group of completions $\{y_1, \dots, y_G\}$, scores them with rewards $\{r_1, \dots, r_G\}$, and assigns each completion the standardized advantage

  $$\hat{A}_i = \frac{r_i - \operatorname{mean}(r_1, \dots, r_G)}{\operatorname{std}(r_1, \dots, r_G)}$$

  Both the mean subtraction and the division by the group standard deviation matter. The mean provides a baseline, and the std normalization replaces the variance-reduction role a learned critic would otherwise play, giving a scale-invariant signal across prompts of different reward magnitudes. GRPO keeps a KL penalty against the reference policy. This cuts memory cost and pairs naturally with verifiable rewards.

- Process Reward Models (PRMs), proposed by Uesato et al. (2022) and scaled by Lightman et al. (2023) "Let's Verify Step by Step." PRMs score each reasoning step rather than only the final answer, improving credit assignment and enabling verifier-guided best-of-$N$ at inference.
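Returning to GRPO's group-standardized advantage, a minimal sketch of the computation for one prompt might look like the following. The use of the population standard deviation and the small `eps` stabilizer are assumptions made here for illustration; implementations differ on both.

```python
import statistics

def group_advantages(rewards, eps=1e-8):
    """Standardize rewards within one prompt's group of sampled completions."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # assumption: population std
    # eps (hypothetical) avoids division by zero when all rewards are equal
    return [(r - mean) / (std + eps) for r in rewards]

# Hypothetical verifiable rewards (1 = correct, 0 = incorrect) for four samples.
print(group_advantages([1.0, 0.0, 0.0, 1.0]))
# Same pattern at ten times the reward scale gives nearly the same advantages.
print(group_advantages([10.0, 0.0, 0.0, 10.0]))
```

When every completion in a group receives the same reward, all advantages are zero and that prompt contributes no learning signal, which is one practical consequence of dropping the learned critic.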

See the RLHF deep dive for the advantage formula, annotation tradeoffs, and PRM failure modes.

Scalable Alignment and Oversight

As models become more capable, the alignment problem changes qualitatively. Current RLHF assumes human evaluators can reliably judge model outputs. For tasks where the model exceeds human ability (complex code, advanced mathematics, long-horizon reasoning), this assumption breaks.

Weak-to-strong generalization (Burns et al., 2023): can a weaker model (standing in for a future human evaluator facing a superhuman system) supervise a stronger model? Burns et al. fix a pair of capability levels, a weak model and a strong model, both within the GPT-4 family of pretrained checkpoints (they do not claim to use the deployed GPT-4 product as the strong model). Labels from the weak supervisor are used to fine-tune the strong model. They measure the Performance Gap Recovered (PGR): the fraction of the gap between weak-supervisor performance and strong-ceiling performance (strong model fine-tuned on ground truth) that the weak-to-strong training recovers. PGR is task dependent. Across NLP benchmarks, chess puzzles, and reward modeling, reported PGR ranges from roughly 20 percent to 80 percent. This is distinct from "alignment tax," which refers to capability lost due to alignment-oriented fine-tuning against a capability ceiling. PGR measures how much of a strong model's latent capability can be elicited by a weaker supervisor, not what a strong model gives up to be aligned.
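The PGR definition above amounts to a one-line ratio. The sketch below spells it out; the function name and the accuracy values are hypothetical and are not results from the paper.

```python
def performance_gap_recovered(weak, weak_to_strong, strong_ceiling):
    """Fraction of the weak-to-ceiling gap recovered by weak-to-strong training.

    weak           : performance of the weak supervisor
    weak_to_strong : strong model fine-tuned on the weak supervisor's labels
    strong_ceiling : strong model fine-tuned on ground-truth labels
    """
    gap = strong_ceiling - weak
    if gap <= 0:
        raise ValueError("strong ceiling must exceed weak-supervisor performance")
    return (weak_to_strong - weak) / gap

# Hypothetical accuracies: the student recovers about 60 percent of the gap.
print(performance_gap_recovered(weak=0.60, weak_to_strong=0.72, strong_ceiling=0.80))
```

A PGR of 0 means the strong student merely matches its weak supervisor. A PGR of 1 means weak supervision elicited everything ground-truth supervision would have.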

Scalable oversight approaches:

- Constitutional AI: replace human evaluators with AI-generated critiques based on written principles. Scales evaluation without humans in the loop, but the principles must be specified correctly.

- Debate: two AI models argue for different answers while a human judges which argument is more convincing. The theory is that evaluation is easier than generation, so humans can oversee superhuman models by judging debates.

- Recursive reward modeling: use a hierarchy of models to evaluate each other, with human oversight only at the top.

- Process reward models: evaluate the reasoning process step-by-step rather than the final answer, making oversight more fine-grained.

The core tension: as models get stronger, the reward signal must scale with them. A fixed-quality reward model eventually becomes the bottleneck, leading to reward hacking and alignment failure at scale.

Summary

- Canonical 2022 RLHF pipeline: SFT, then reward model from preferences, then PPO with KL penalty. Modern variants add rejection sampling, iterative DPO, RLAIF, ensembled reward models, and GRPO-style critic-free RL.

- The Bradley-Terry model converts preference comparisons to a scalar reward via NLL minimization.

- The KL penalty is the primary, but not the only, mechanism preventing overoptimization.

- DPO reparameterizes the KL-regularized RL problem as a supervised loss on preference pairs, building on the RL-as-inference lineage (Peters and Schaal, Peng et al., Korbak et al.).

- DPO and PPO share a theoretical target under Bradley-Terry plus KL, but the 2024 literature finds they diverge empirically.

- Reward hacking: the policy exploits gaps between the proxy reward and true human judgment.

- Constitutional AI has two phases (SL-CAI and RL-CAI) and used PPO in the 2022 paper.

- Weak-to-strong generalization is measured by PGR, which is distinct from alignment tax.

- RLHF makes models produce text humans rate highly, not text that is true.

Exercises

Problem

Write out the closed-form optimal policy for the KL-regularized RLHF objective and verify that it satisfies the first-order optimality condition.

Problem

Derive the DPO gradient. Show that it increases the likelihood of preferred completions and decreases the likelihood of dispreferred completions, with a weighting that depends on how much the current policy disagrees with the implicit reward ranking.

Problem

The Goodhart's Law phenomenon in RLHF can be formalized as follows: let $r^*$ be the true human reward and $\hat{r}$ the proxy. Suppose $\hat{r} = r^* + \epsilon$, where $\epsilon$ is noise independent of $r^*$. Show that the optimal policy under $\hat{r}$ with KL penalty overestimates the true reward, and the overestimation increases as $\beta$ decreases.

Frequently Asked Questions

- What is the difference between RLHF and DPO?

- RLHF trains a separate Bradley-Terry reward model from preference comparisons, then optimizes the policy against it with PPO plus a KL penalty. DPO eliminates the explicit reward model: it expresses the reward implicitly through the log-ratio of the policy to the reference model and trains with a simple classification loss on preference pairs. Same data assumptions, fewer moving parts.

- Why does RLHF need a KL penalty?

- Without it, PPO drives the policy to maximize the reward model anywhere in policy space, including regions where the reward model is unreliable or exploitable. The KL penalty against the reference model keeps the policy near the SFT reference distribution, where the reward model was actually trained to give meaningful signal. Drop the KL and the policy degenerates into reward hacking.

- Does Constitutional AI eliminate human feedback entirely?

- No. CAI replaces preference labels for harmlessness with AI-generated feedback (RLAIF) against an explicit constitution, but the constitution itself is human-written and the helpfulness signal still uses human labels. The shift is from labeling individual outputs to designing principles; humans move to the constitution layer.

- What is reward hacking?

- When a learned reward model is exploited by the policy: the policy finds outputs that score high on the reward model but are not actually preferred by humans. Common patterns include length bias (longer responses score higher), sycophancy (agreeing with the user), and superficial formatting (markdown, bullets). The KL penalty mitigates but does not fully prevent it.

- Can RLHF make a model honest?

- Not by itself. Standard benchmarks score abstention as wrong, so RLHF optimized against benchmark-derived preferences can amplify confident bluffing rather than reduce it (Hallucination Theory equilibrium). Honest behavior requires explicit calibration objectives, retrieval, or verifier feedback; preference labels alone underspecify truthfulness.

References

Canonical:

- Christiano et al., "Deep Reinforcement Learning from Human Preferences" (2017)

- Ouyang et al., "Training Language Models to Follow Instructions with Human Feedback" (2022). InstructGPT

RL-as-inference lineage (pre-DPO):

- Peters and Schaal, "Reinforcement Learning by Reward-Weighted Regression for Operational Space Control" (2007)

- Peng et al., "Advantage-Weighted Regression: Simple and Scalable Off-Policy Reinforcement Learning" (2019)

- Korbak et al., "RL with KL Penalties is Better Viewed as Bayesian Inference" (2022)

- Korbak et al., "On Reinforcement Learning and Distribution Matching for Fine-Tuning Language Models with No Catastrophic Forgetting" (arXiv 2206.00761, 2022). Forward-KL distribution matching.

- Schulman, "Approximating KL Divergence" (joschu.net, 2020). Forward vs reverse KL estimators in RL.

Scalable oversight:

- Burns et al., "Weak-to-Strong Generalization: Eliciting Strong Capabilities With Weak Supervision" (arXiv 2312.09390, 2023)

- Irving, Christiano, Amodei, "AI Safety via Debate" (2018)

Current:

- Rafailov et al., "Direct Preference Optimization" (arXiv 2305.18290, 2023). DPO

- Xu et al., "Is DPO Superior to PPO for LLM Alignment? A Comprehensive Study" (arXiv 2404.10719, 2024)

- Ivison et al., "Unpacking DPO and PPO: Disentangling Best Practices for Learning from Preference Feedback" (2024)

- Tajwar et al., "Preference Fine-Tuning of LLMs Should Leverage Suboptimal, On-Policy Data" (2024)

- Coste et al., "Reward Model Ensembles Help Mitigate Overoptimization" (2023)

- Gao et al., "Scaling Laws for Reward Model Overoptimization" (2023)

- Bai et al., "Constitutional AI: Harmlessness from AI Feedback" (arXiv 2212.08073, 2022)

- Shao et al., "DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models" (arXiv 2402.03300, 2024). Introduces GRPO.

- DeepSeek-AI, "DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning" (arXiv 2501.12948, 2025)

- Lightman et al., "Let's Verify Step by Step" (2023). Process reward models at scale.

- Uesato et al., "Solving Math Word Problems with Process- and Outcome-Based Feedback" (2022)

- Greenblatt et al., "Alignment Faking in Large Language Models" (arXiv 2412.14093, 2024). Anthropic

Next Topics

The natural next steps from RLHF and alignment:

- Hallucination theory: why alignment does not solve the confabulation problem

- Mechanistic interpretability: understanding what RLHF actually changes inside the model

- Reward models and verifiers: the reward signal that drives alignment

Last reviewed: April 18, 2026
