
Robust Statistics and M-Estimators

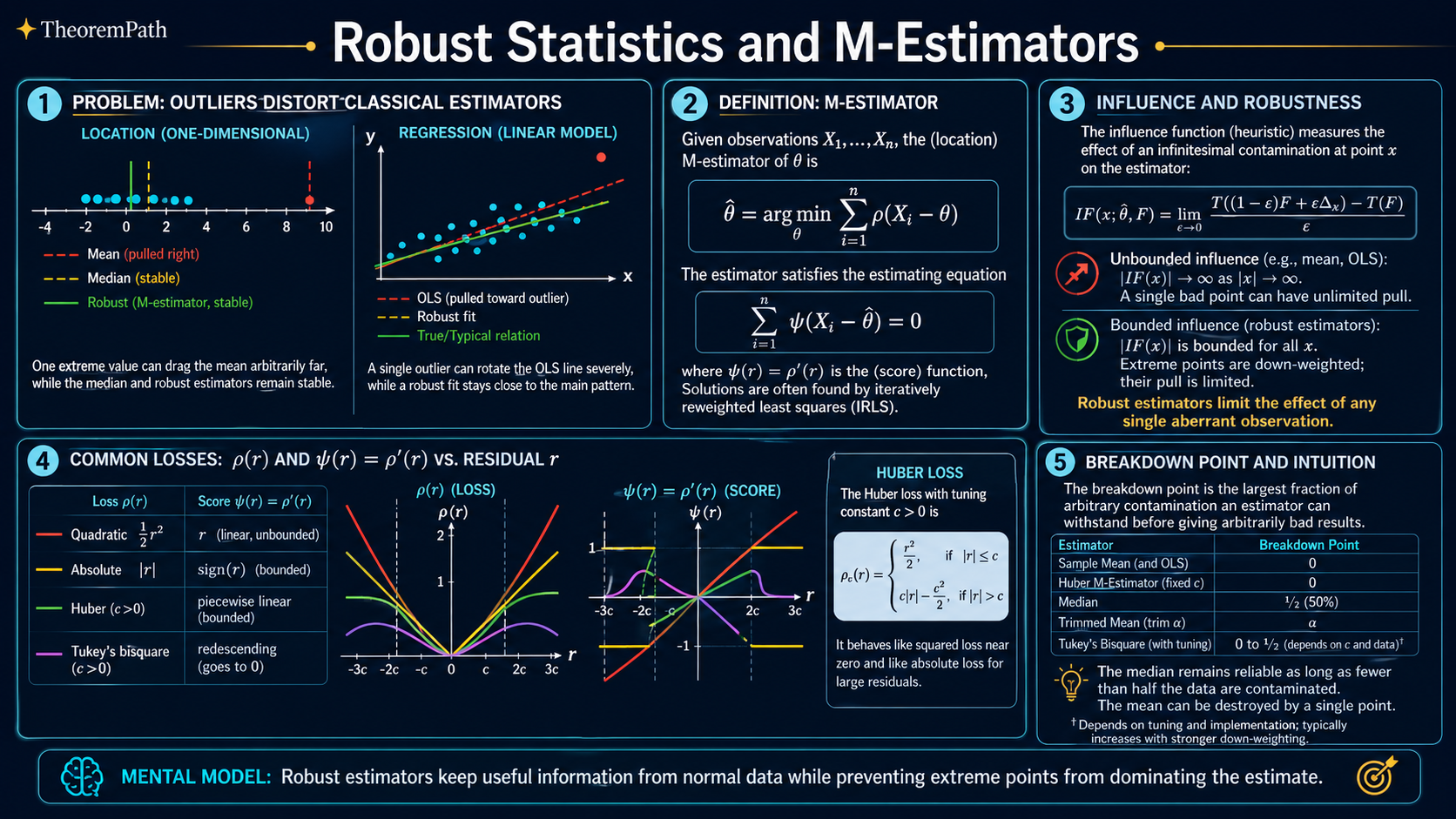

When data has outliers or model assumptions are wrong, classical estimators break. M-estimators generalize MLE to handle contamination gracefully.


Why This Matters

Real data is messy. Sensor readings glitch. Labels get corrupted. Distributions have heavier tails than your model assumes. Classical estimators like the sample mean and ordinary least squares are exquisitely sensitive to such problems: a single outlier can drag the estimate arbitrarily far from the truth.


Robust statistics asks: can we build estimators that work reasonably well under mild assumptions, degrading gracefully when those assumptions are violated? M-estimators are the main tool for doing this. If you have ever used Huber loss, you have used an M-estimator.

Mental Model

Think of the sample mean as a tug-of-war: every data point pulls with equal force. An outlier at pulls just as hard as a normal observation. The median, by contrast, only cares about order, not magnitude, so outliers cannot exert unbounded influence.

M-estimators sit on a spectrum between these extremes. By choosing a loss function that grows less aggressively than the quadratic, you limit the pull of outliers while still using magnitude information from well-behaved observations.

Formal Setup and Notation

Let $X_1, \dots, X_n$ be i.i.d. observations from some distribution $F$.

M-Estimator

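An M-estimator of a location parameter $\theta$ is any value $\hat\theta$ that minimizes:

$$\hat\theta = \arg\min_{\theta} \sum_{i=1}^{n} \rho(X_i - \theta)$$

where $\rho$ is a loss function. Equivalently, when $\rho$ is differentiable, $\hat\theta$ solves the estimating equation:

$$\sum_{i=1}^{n} \psi(X_i - \hat\theta) = 0$$

where $\psi = \rho'$ is the derivative of $\rho$, called the $\psi$-function. The influence function of $\hat\theta$ is proportional to $\psi$.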
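As a minimal sketch of the estimating-equation view, the snippet below solves $\sum_i \psi(X_i - \theta) = 0$ by bisection. It assumes $\psi$ is nondecreasing and that the data are already on unit scale. The function names, the threshold `k = 1.345`, and the sample are illustrative assumptions, not taken from the text.

```python
import numpy as np

def huber_psi(r, k=1.345):
    """Huber psi-function: identity on [-k, k], clipped outside."""
    return np.clip(r, -k, k)

def m_estimate_location(x, psi, tol=1e-10, max_iter=200):
    """Solve sum_i psi(x_i - theta) = 0 by bisection.

    Assumes psi is nondecreasing, so the sum is nonincreasing in theta
    and changes sign somewhere between min(x) and max(x).
    """
    x = np.asarray(x, dtype=float)
    lo, hi = x.min(), x.max()
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if psi(x - mid).sum() > 0:
            lo = mid   # residuals pull upward: theta is too small
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

# Hypothetical sample with one gross outlier.
x = np.array([1.8, 2.1, 1.9, 2.3, 2.0, 50.0])

theta_mean = m_estimate_location(x, lambda r: r)   # psi(r) = r recovers the mean
theta_huber = m_estimate_location(x, huber_psi)    # clipped psi stays near the bulk
print(theta_mean, theta_huber)
```

With $\psi(r) = r$ the root is the sample mean, which the outlier drags to roughly 10. With the clipped $\psi$ the outlier contributes at most `k`, so the root stays close to 2.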

Influence Function

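The influence function of a statistical functional $T$ at distribution $F$ is:

$$\mathrm{IF}(x; T, F) = \lim_{\epsilon \to 0^+} \frac{T\big((1-\epsilon)F + \epsilon\,\delta_x\big) - T(F)}{\epsilon}$$

where $\delta_x$ is a point mass at $x$. This measures how much a single contamination point at $x$ shifts the estimate.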
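A finite-sample analogue of the influence function is the sensitivity curve: append one extra point $x$ to a sample and rescale the change in the estimate. The sketch below compares the mean and the median this way. The helper name `sensitivity_curve`, the seed, and the simulated sample are assumptions made for illustration.

```python
import numpy as np

def sensitivity_curve(estimator, sample, x_grid):
    """SC(x) = (n + 1) * (T(sample + [x]) - T(sample)).

    The rescaled change in the estimate when one extra point x is appended.
    """
    sample = np.asarray(sample, dtype=float)
    n = sample.size
    base = estimator(sample)
    return np.array([(n + 1) * (estimator(np.append(sample, x)) - base)
                     for x in x_grid])

rng = np.random.default_rng(0)     # fixed seed, illustrative only
sample = rng.normal(size=99)       # hypothetical clean sample
x_grid = np.array([-1000.0, -10.0, 0.0, 10.0, 1000.0])

sc_mean = sensitivity_curve(np.mean, sample, x_grid)      # grows linearly in x
sc_median = sensitivity_curve(np.median, sample, x_grid)  # flattens for large |x|
print(sc_mean)
print(sc_median)
```

For the mean the curve equals $x$ minus the sample mean, so it is unbounded. For the median, moving the added point from 10 to 1000 changes nothing, which is what a bounded influence function looks like in a finite sample.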

Breakdown Point

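The breakdown point of an estimator $\hat\theta$ is the largest fraction of the data that can be replaced by arbitrary values before the estimator becomes unbounded (or otherwise useless):

$$\epsilon^*_n(\hat\theta) = \max\left\{ \frac{m}{n} : \sup_{X'_m} \big|\hat\theta(X'_m)\big| < \infty \right\}$$

where the supremum runs over all samples $X'_m$ obtained by replacing $m$ of the $n$ observations with arbitrary values.

The sample mean has breakdown point $0$ (one outlier can make it infinite). The median has breakdown point approaching $1/2$ as $n \to \infty$ (the best possible).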
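The contrast between the mean and the median can be seen numerically. The sketch below replaces `m` observations with one huge value and recomputes both estimators. This is a single crude adversary, not the supremum over all corruptions, so it only illustrates breakdown rather than proving it. The helper `corrupt`, the seed, and the simulated data are assumptions.

```python
import numpy as np

def corrupt(sample, m, value=1e12):
    """Replace the first m observations with one huge value."""
    out = np.array(sample, dtype=float)
    out[:m] = value
    return out

rng = np.random.default_rng(1)
clean = rng.normal(loc=5.0, scale=1.0, size=21)   # hypothetical data, n = 21

for m in (0, 1, 10, 11):
    bad = corrupt(clean, m)
    print(f"m={m:2d}  mean={np.mean(bad):.3g}  median={np.median(bad):.3g}")
```

With $n = 21$, a single replaced point already ruins the mean, while the median stays near 5 until 11 points (a majority) are replaced.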

Core Definitions

Common functions and their properties:

The quadratic loss $\rho(r) = r^2/2$ yields the sample mean. Its $\psi$-function is $\psi(r) = r$, which is unbounded, so outliers have unlimited influence.

The absolute loss $\rho(r) = |r|$ yields the sample median. Its $\psi$-function is $\psi(r) = \operatorname{sign}(r)$, which is bounded, giving robustness.

The Huber loss with threshold $k$ is:

$$\rho_k(r) = \begin{cases} \tfrac{1}{2} r^2 & \text{if } |r| \le k \\ k|r| - \tfrac{1}{2} k^2 & \text{if } |r| > k \end{cases}$$

Its $\psi$-function is $\psi_k(r) = \max(-k, \min(k, r))$. This acts like squared loss for small residuals (efficient) and like absolute loss for large residuals (robust). The parameter $k$ controls the tradeoff: $k = 1.345$ gives 95% efficiency at the Gaussian while still being robust.

Tukey's bisquare (biweight) goes further: $\psi(r) = 0$ for $|r| > c$, completely ignoring extreme outliers. This gives a redescending $\psi$-function. The tradeoff is that the optimization problem becomes non-convex.
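The four $\psi$-functions above can be written down directly, which makes the difference in outlier handling easy to inspect. This is a sketch: the function names are made up here, `k = 1.345` is the Huber threshold mentioned above, and `c = 4.685` is the tuning constant conventionally used for the bisquare, assumed here rather than taken from the text.

```python
import numpy as np

def psi_quadratic(r):
    """psi for squared loss: unbounded, grows linearly with the residual."""
    return np.asarray(r, dtype=float)

def psi_absolute(r):
    """psi for absolute loss: only the sign of the residual matters."""
    return np.sign(r)

def psi_huber(r, k=1.345):
    """psi for Huber loss: linear inside [-k, k], constant outside."""
    return np.clip(r, -k, k)

def psi_tukey(r, c=4.685):
    """psi for Tukey's bisquare: redescends to exactly 0 beyond |r| > c."""
    r = np.asarray(r, dtype=float)
    return np.where(np.abs(r) <= c, r * (1.0 - (r / c) ** 2) ** 2, 0.0)

r = np.array([0.5, 2.0, 10.0, 1000.0])   # small, moderate, large, extreme residuals
for name, psi in [("quadratic", psi_quadratic), ("absolute", psi_absolute),
                  ("huber", psi_huber), ("tukey", psi_tukey)]:
    print(f"{name:9s}", psi(r))
```

At a residual of 1000 the quadratic $\psi$ returns 1000, Huber returns `k`, and the bisquare returns 0, matching the ordering from unbounded to bounded to redescending.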

Main Theorems

Influence Function of an M-Estimator

Statement

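For an M-estimator with $\psi$-function $\psi$, the influence function at distribution $F$ is:

$$\mathrm{IF}(x; T, F) = \frac{\psi(x - \theta_0)}{\mathbb{E}_F\big[\psi'(X - \theta_0)\big]}$$

where $\theta_0 = T(F)$ is the true parameter value under $F$.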

Intuition

The numerator $\psi(x - \theta_0)$ is how hard a contamination point at $x$ pulls on the estimator. The denominator $\mathbb{E}_F[\psi'(X - \theta_0)]$ is a normalizing factor from the population. If $\psi$ is bounded, the influence function is bounded, and no single contamination point can move the estimator far.

Proof Sketch

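Write the estimating equation at the contaminated distribution $F_\epsilon = (1-\epsilon)F + \epsilon\,\delta_x$. Differentiate with respect to $\epsilon$ at $\epsilon = 0$ using implicit differentiation. The result drops out directly from the chain rule applied to $\int \psi\big(y - T(F_\epsilon)\big)\, dF_\epsilon(y) = 0$.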
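The implicit-differentiation step can be written out as follows. This is a sketch that assumes $\psi$ is differentiable and that differentiation and integration can be exchanged. The shorthand $F_\epsilon = (1-\epsilon)F + \epsilon\,\delta_x$ and $\theta_\epsilon = T(F_\epsilon)$ is introduced here for convenience.

$$
\begin{aligned}
0 &= (1-\epsilon)\int \psi(y - \theta_\epsilon)\,dF(y) + \epsilon\,\psi(x - \theta_\epsilon) \\
0 &= -\int \psi(y - \theta_0)\,dF(y) \;-\; \frac{d\theta_\epsilon}{d\epsilon}\bigg|_{\epsilon=0} \int \psi'(y - \theta_0)\,dF(y) \;+\; \psi(x - \theta_0) \\
\frac{d\theta_\epsilon}{d\epsilon}\bigg|_{\epsilon=0} &= \frac{\psi(x - \theta_0)}{\mathbb{E}_F\big[\psi'(X - \theta_0)\big]}
\end{aligned}
$$

The second line is the derivative of the first at $\epsilon = 0$. Its first integral vanishes because $\theta_0$ solves the uncontaminated estimating equation, which leaves the stated ratio.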

Why It Matters

This theorem is the main diagnostic tool for robustness. Before using an estimator in practice, compute its influence function. If the IF is unbounded, a single outlier can cause arbitrarily large bias. Bounded IF is the minimum requirement for robustness.

Failure Mode

The influence function is a local measure: it describes the effect of infinitesimal contamination. It does not tell you what happens when 10% of your data is corrupted. For that, you need the breakdown point.

Maximum Breakdown Point

Statement

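For any translation-equivariant estimator $\hat\theta$ of a location parameter based on $n$ observations, the breakdown point satisfies:

$$\epsilon^*_n(\hat\theta) \le \frac{\lfloor (n-1)/2 \rfloor}{n}$$

when the breakdown point counts the largest number of replaced observations that still leaves the estimate bounded. The sample median achieves this bound, so the maximum possible breakdown point is at most $1/2$ (with equality in the limit $n \to \infty$).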

Intuition

If at least half the data is corrupted, the corrupted points can form a majority and dictate the estimate. No translation-equivariant estimator can survive corruption of the majority.

Proof Sketch

Donoho and Huber (1983). Replace observations with copies of . Any translation-equivariant estimator must follow these points to infinity because they now form a (weak) majority. For the sample median, replacing fewer than points leaves the median pinned by the uncorrupted majority.

Why It Matters

This sets a fundamental limit on robustness. It also explains why the median is special: it achieves the highest possible breakdown point for location estimation.

Canonical Examples

Huber M-estimator for location

Suppose you observe . The sample mean is , dragged far from the bulk by the outlier at .

The Huber M-estimator with downweights the outlier. Iteratively solving converges to , which reflects the bulk of the data. The outlier's contribution is clipped to instead of pulling with force .
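The iterative solve can be sketched with iteratively reweighted averaging, where each point gets weight $\psi(r)/r$. The data below are made up for illustration and are not the sample from this example. The threshold `k = 1.345`, the MAD-based scale (held fixed), and the function name are likewise assumptions.

```python
import numpy as np

def huber_location(x, k=1.345, tol=1e-10, max_iter=100):
    """Huber location estimate by iteratively reweighted averaging.

    The scale is fixed at the normalized MAD of the data; residuals are
    divided by it before comparing against the threshold k.
    """
    x = np.asarray(x, dtype=float)
    theta = np.median(x)
    scale = 1.4826 * np.median(np.abs(x - theta))   # MAD, rescaled for Gaussian data
    for _ in range(max_iter):
        r = (x - theta) / scale
        # Huber weight psi(r)/r: 1 inside the threshold, k/|r| outside.
        w = np.minimum(1.0, k / np.maximum(np.abs(r), 1e-12))
        new_theta = np.sum(w * x) / np.sum(w)
        converged = abs(new_theta - theta) < tol
        theta = new_theta
        if converged:
            break
    return theta, w

# Hypothetical data: six readings near 10 and one gross outlier.
data = np.array([9.8, 10.1, 10.0, 10.3, 9.9, 10.2, 100.0])
theta_hat, weights = huber_location(data)
print(np.mean(data), theta_hat)
print(weights)
```

On this made-up sample the mean is about 22.9, while the Huber estimate stays near 10.1. The six inliers keep weight 1 and the outlier's weight falls below 0.01, so its pull is clipped rather than removed.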

Robust regression with Huber loss

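In linear regression $y_i = x_i^\top \beta + \varepsilon_i$, replace the squared loss with Huber loss:

$$\hat\beta = \arg\min_{\beta} \sum_{i=1}^{n} \rho_k\big(y_i - x_i^\top \beta\big)$$

This is robust to outliers in $y$ (vertical outliers). For protection against leverage points (outliers in $x$), you need more sophisticated methods like MM-estimators or least trimmed squares.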
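One common way to fit this objective is iteratively reweighted least squares (IRLS). The sketch below uses NumPy only and re-estimates the residual scale by the MAD at each iteration, which is one practical choice among several. The function name, the simulated data, and the 10% contamination level are assumptions.

```python
import numpy as np

def huber_regression(X, y, k=1.345, n_iter=50):
    """Huber regression by IRLS, with a MAD scale re-estimated each iteration."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    beta = np.linalg.lstsq(X, y, rcond=None)[0]          # start from OLS
    for _ in range(n_iter):
        r = y - X @ beta
        scale = 1.4826 * np.median(np.abs(r - np.median(r)))
        u = np.abs(r) / max(scale, 1e-12)                # standardized residuals
        w = np.minimum(1.0, k / np.maximum(u, 1e-12))    # Huber weights psi(u)/u
        sw = np.sqrt(w)
        # Weighted least squares via row scaling by sqrt(w).
        beta = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)[0]
    return beta

rng = np.random.default_rng(2)
n = 200
x = rng.uniform(0, 10, size=n)
X = np.column_stack([np.ones(n), x])                     # intercept + slope
y = 1.0 + 2.0 * x + rng.normal(scale=0.5, size=n)        # true line: 1 + 2x
y[:20] += 50.0                                           # 10% vertical outliers

beta_ols = np.linalg.lstsq(X, y, rcond=None)[0]
beta_huber = huber_regression(X, y)
print(beta_ols, beta_huber)
```

The outliers here are vertical only. If the corrupted points also had extreme `x` values, their weights would not necessarily shrink, which is the leverage-point problem noted above.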

Common Confusions

Robustness is not just about outlier removal

Robust estimators do not simply remove outliers and then apply classical methods. They use all the data but reweight observations continuously based on residual size. This is more principled: you do not need to choose a hard threshold for what counts as an outlier.

Efficiency and robustness are not mutually exclusive

A common misconception is that robust estimators are much less efficient at the Gaussian. The Huber estimator with achieves 95% asymptotic efficiency at the Gaussian while having a breakdown point of roughly . For higher breakdown, use MM-estimators which achieve both high efficiency and .
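The 95% efficiency figure can be checked by simulation. The sketch below draws many Gaussian samples, computes the mean and a Huber location estimate for each, and compares their variances. It assumes known unit scale and the threshold `k = 1.345`. The sample size, number of replications, and seed are arbitrary choices.

```python
import numpy as np

def huber_location_batch(X, k=1.345, n_iter=100):
    """Huber location estimate for each row of X, assuming known unit scale."""
    theta = np.median(X, axis=1)
    for _ in range(n_iter):
        # Fixed-point step: move theta by the average clipped residual.
        theta = theta + np.clip(X - theta[:, None], -k, k).mean(axis=1)
    return theta

rng = np.random.default_rng(3)
X = rng.normal(size=(4000, 100))      # 4000 simulated Gaussian samples, n = 100

var_mean = X.mean(axis=1).var()
var_huber = huber_location_batch(X).var()
efficiency = var_mean / var_huber     # relative efficiency of Huber vs. the mean
print(efficiency)
```

The ratio should land close to 0.95, up to Monte Carlo error, meaning the Huber estimate costs about 5% in variance when the data really are Gaussian.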

Summary

- M-estimators generalize MLE by minimizing $\sum_i \rho(X_i - \theta)$ for a chosen loss function $\rho$

- The influence function measures sensitivity to contamination: bounded IF means no single point can cause unbounded bias

- Breakdown point measures the fraction of data that can be corrupted before the estimator fails; $1/2$ is the maximum

- Huber loss is the workhorse: quadratic for small residuals, linear for large ones, controlled by threshold $k$

- In ML, Huber loss and similar robust losses are standard in regression, reinforcement learning, and any setting with noisy labels

Exercises

Problem

Compute the influence function of the sample mean (i.e., the M-estimator with $\psi(r) = r$) and verify that it is unbounded.

Problem

Show that the Huber $\psi$-function yields a bounded influence function. What is the maximum influence?

Problem

The Huber M-estimator has breakdown point approximately (it can survive one outlier but not two). Explain why, and describe how MM-estimators achieve breakdown point while maintaining high Gaussian efficiency.

References

Canonical:

- Huber & Ronchetti, Robust Statistics (2nd ed., 2009), Chapters 1-4

- Hampel, Ronchetti, Rousseeuw, Stahel, Robust Statistics (1986)

Current:

- Maronna, Martin, Yohai, Salibián-Barrera, Robust Statistics: Theory and Methods (2nd ed., 2019)

- van der Vaart, Asymptotic Statistics (1998), Chapter 5 (M- and Z-estimators)

- Diakonikolas & Kane, Algorithmic High-Dimensional Robust Statistics (2023), Chapters 1-3

- Lugosi & Mendelson, "Mean estimation and regression under heavy-tailed distributions: a survey," FoCM 19 (2019), 1145-1190

Next Topics

Natural continuations from robust statistics:

- Empirical risk minimization: the general framework for choosing loss functions

- Hypothesis testing for ML: testing under model misspecification

Last reviewed: April 13, 2026


Required prerequisites

- Maximum Likelihood Estimation: Theory, Information Identity, and Asymptotic Efficiency (layer 0B, tier 1)

- Skewness, Kurtosis, and Higher Moments (layer 1, tier 1)

- Minimax and Saddle Points (layer 2, tier 2)

- Winsorization (layer 1, tier 3)
