LLM Construction

Scaling Compute-Optimal Training

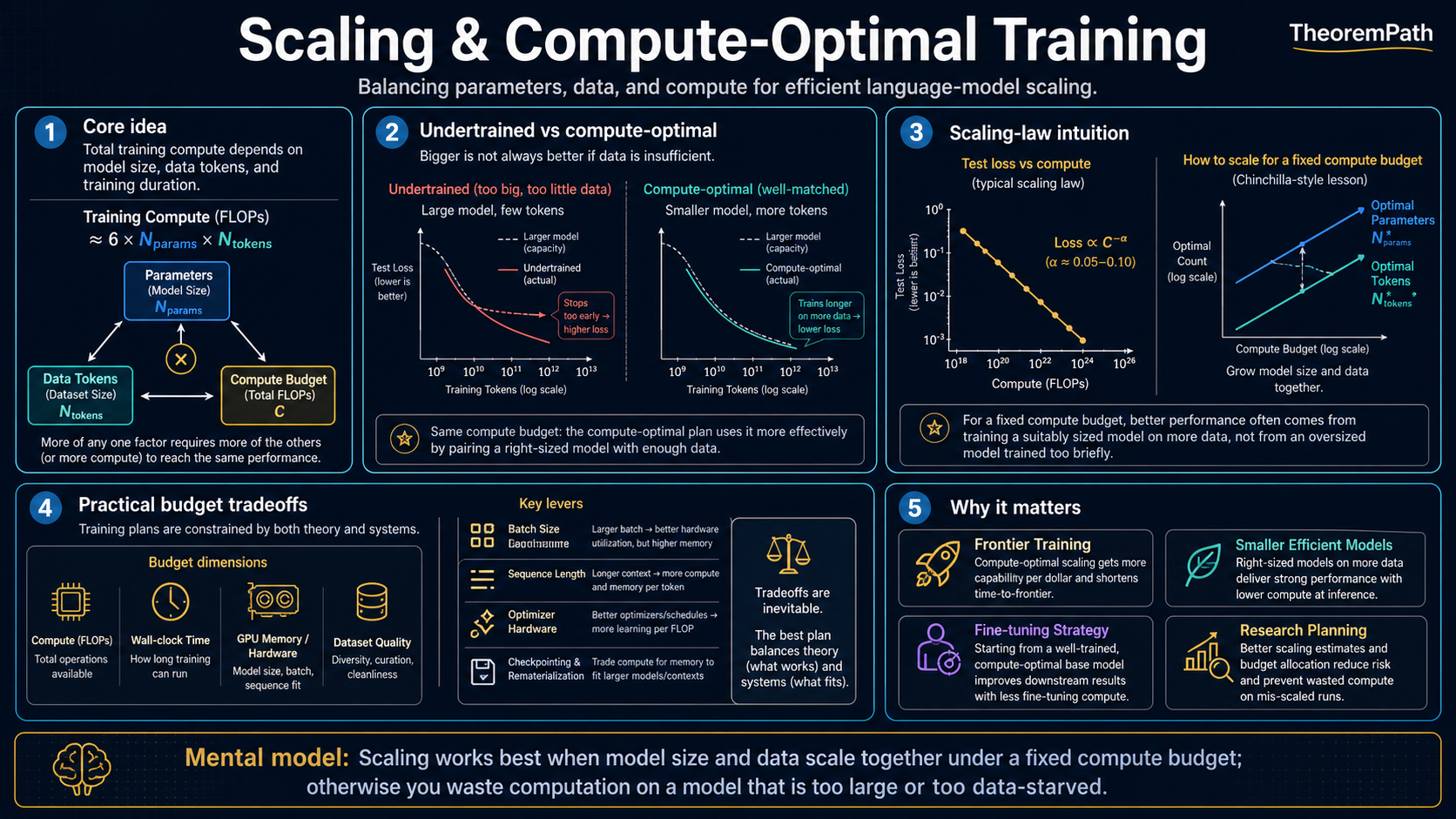

Chinchilla scaling: how to optimally allocate a fixed compute budget between model size and training data, why many models were undertrained, and the post-Chinchilla reality of data quality and inference cost.

Why This Matters

Training a large language model costs millions of dollars. The question of how to spend that compute budget is not academic. Chinchilla (Hoffmann et al., 2022) showed that many existing models, including Gopher (280B parameters), were significantly undertrained: they used too many parameters relative to the amount of training data. The practical consequence was that a smaller, properly trained model (Chinchilla, 70B) outperformed a larger undertrained one (Gopher, 280B) while using the same total compute.

This reshaped how labs allocate training budgets and shifted attention toward data quality, data efficiency, and the previously underappreciated cost of inference.

Background: Kaplan Scaling

Kaplan et al. (2020) established power-law relationships between loss and three quantities: parameters $N$, data $D$, and compute $C$.

Kaplan Scaling Laws

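For a fixed architecture (decoder-only transformer), cross-entropy loss on held-out data follows:

$$L(N) \propto N^{-\alpha_N}, \qquad L(D) \propto D^{-\alpha_D}, \qquad L(C) \propto C^{-\alpha_C}$$

where the exponents $\alpha_N$, $\alpha_D$, and $\alpha_C$ are fit empirically. Kaplan concluded that loss is more sensitive to parameters $N$ than to data $D$: you should scale parameters faster than data.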

The Kaplan recommendation: for a 10x increase in compute, increase $N$ by about 5.5x and $D$ by about 1.8x. This led to the trend of building very large models trained on relatively little data (e.g., GPT-3 at 175B parameters trained on 300B tokens).
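The exponents in these laws are fit empirically. A minimal sketch of such a fit, assuming a pure power law $y = k \cdot x^{-\alpha}$ with no irreducible-loss offset, is ordinary least squares in log-log space. The helper name `fit_power_law` and the synthetic data are illustrative assumptions, not values from Kaplan et al.

```python
import math

def fit_power_law(xs, ys):
    """Fit y = k * x**(-alpha) by least squares on (log x, log y).

    Returns (alpha, k). Assumes every x and y is strictly positive.
    """
    log_x = [math.log(x) for x in xs]
    log_y = [math.log(y) for y in ys]
    n = len(log_x)
    mean_x = sum(log_x) / n
    mean_y = sum(log_y) / n
    cov_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(log_x, log_y))
    var_x = sum((a - mean_x) ** 2 for a in log_x)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return -slope, math.exp(intercept)

# Synthetic, noise-free losses generated with alpha = 0.08 and k = 5.0 (made-up values).
model_sizes = [1e6, 1e7, 1e8, 1e9, 1e10]
losses = [5.0 * n ** -0.08 for n in model_sizes]

alpha_hat, k_hat = fit_power_law(model_sizes, losses)
print(f"alpha ~ {alpha_hat:.3f}, k ~ {k_hat:.2f}")
```

On real training runs the fitted exponent depends on which points are included and on how each run was trained, so a fit like this is only as reliable as the experimental protocol behind the data.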

Chinchilla Optimal Allocation

Chinchilla Compute-Optimal Allocation

Statement

For a fixed compute budget $C$, the loss-minimizing allocation scales parameters and data roughly equally:

$$N_{\text{opt}} \propto C^{0.5}, \qquad D_{\text{opt}} \propto C^{0.5}$$

The optimal token-to-parameter ratio is therefore roughly constant, empirically $D/N \approx 20$. A model with $N$ parameters should be trained on roughly $20N$ tokens.
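This rule of thumb can be turned into a quick calculator. The sketch below assumes the common approximation $C \approx 6ND$ for training FLOPs and a fixed ratio of 20 tokens per parameter; `chinchilla_allocation` is a hypothetical helper name.

```python
import math

def chinchilla_allocation(compute_flops, tokens_per_param=20.0, flops_per_param_token=6.0):
    """Split a training budget C into (N, D), assuming C = 6*N*D and D = r*N."""
    # C = 6 * N * (r * N)  =>  N = sqrt(C / (6 * r))
    n_params = math.sqrt(compute_flops / (flops_per_param_token * tokens_per_param))
    n_tokens = tokens_per_param * n_params
    return n_params, n_tokens

# Round trip: the budget implied by a 70B-parameter model at 20 tokens per parameter.
budget = 6.0 * 70e9 * (20.0 * 70e9)
n_params, n_tokens = chinchilla_allocation(budget)
print(f"N ~ {n_params:.3g} parameters, D ~ {n_tokens:.3g} tokens")
```

Because both quantities scale as the square root of compute under these assumptions, a 100x larger budget yields a model only 10x larger, trained on 10x more tokens.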

Intuition

If you have many parameters and very little data, you overfit. If you have vast data but a tiny model, you underfit. The optimal point balances these two sources of error. Chinchilla found this balance is roughly equal scaling, not the parameter-heavy allocation Kaplan suggested.

Proof Sketch

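Model the loss as $L(N, D) = E + \frac{A}{N^{\alpha}} + \frac{B}{D^{\beta}}$, where $E$ is irreducible loss. Using $C \approx 6ND$, substitute $D = C/(6N)$ and minimize over $N$. Setting $\partial L / \partial N = 0$ gives $N_{\text{opt}} \propto C^{\beta/(\alpha+\beta)}$ and, correspondingly, $D_{\text{opt}} \propto C^{\alpha/(\alpha+\beta)}$. Chinchilla found $\alpha \approx \beta$, giving exponent $\approx 0.5$ for both $N$ and $D$.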
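The minimization in this sketch can be checked numerically. The code below assumes the parametric form $L(N, D) = E + A/N^{\alpha} + B/D^{\beta}$ with $C = 6ND$, and compares the closed-form optimum $N_{\text{opt}} = \left(\frac{\alpha A}{\beta B}\right)^{1/(\alpha+\beta)} (C/6)^{\beta/(\alpha+\beta)}$ against a brute-force search over model sizes. The constants are illustrative assumptions chosen to be of a plausible magnitude, not authoritative fitted values.

```python
import math

# Illustrative constants for L(N, D) = E + A / N**ALPHA + B / D**BETA (assumed values).
E, A, B = 1.69, 406.4, 410.7
ALPHA, BETA = 0.34, 0.28

def loss(n_params, n_tokens):
    return E + A / n_params ** ALPHA + B / n_tokens ** BETA

def n_opt_closed_form(compute_flops):
    """Solve dL/dN = 0 after substituting D = C / (6 * N)."""
    prefactor = (ALPHA * A / (BETA * B)) ** (1.0 / (ALPHA + BETA))
    return prefactor * (compute_flops / 6.0) ** (BETA / (ALPHA + BETA))

def n_opt_grid(compute_flops, lo=1e7, hi=1e13, steps=20001):
    """Brute-force the same minimum on a log-spaced grid of model sizes."""
    best_n, best_loss = lo, float("inf")
    for i in range(steps):
        n = lo * (hi / lo) ** (i / (steps - 1))
        current = loss(n, compute_flops / (6.0 * n))
        if current < best_loss:
            best_n, best_loss = n, current
    return best_n

budget = 1e21
analytic = n_opt_closed_form(budget)
numeric = n_opt_grid(budget)
print(f"closed form: {analytic:.3e}, grid search: {numeric:.3e}")
print(f"exponent on C for N: {BETA / (ALPHA + BETA):.3f}")
```

With these particular constants the exponent on $C$ comes out near 0.45 rather than exactly 0.5; exactly equal scaling corresponds to $\alpha = \beta$.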

Why It Matters

This directly changed how labs train models. GPT-3 (175B, 300B tokens) had a tokens-per-parameter ratio of about 1.7, far below the Chinchilla-optimal 20. Llama 1 (65B, 1.4T tokens) and Llama 2 (70B, 2T tokens) trained at ratios at or above the Chinchilla guideline (about 22 and 29 tokens per parameter, respectively). The result: smaller models that perform as well as or better than larger undertrained models, at lower inference cost.
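The ratios quoted here are simple divisions, and it can help to see them side by side. A short sketch using only the parameter and token counts given in the text, with 20 tokens per parameter as the reference guideline:

```python
# (parameters, training tokens) as quoted in the text above.
models = {
    "GPT-3": (175e9, 300e9),
    "Llama 1 65B": (65e9, 1.4e12),
    "Llama 2 70B": (70e9, 2e12),
}
CHINCHILLA_RATIO = 20.0

ratios = {name: tokens / params for name, (params, tokens) in models.items()}
for name, ratio in ratios.items():
    relative = ratio / CHINCHILLA_RATIO
    print(f"{name}: {ratio:.1f} tokens/param ({relative:.2f}x the 20:1 guideline)")
```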

Failure Mode

The Chinchilla result assumes that all tokens are equally valuable and that data is abundant. In practice, high-quality text data is finite. When you run out of quality data, the model cannot absorb more tokens effectively. This shifts the problem from "how many tokens" to "which tokens."

Kaplan vs Chinchilla

Kaplan vs Chinchilla Exponent Discrepancy

Statement

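Kaplan found optimal allocation exponents of $N_{\text{opt}} \propto C^{0.73}$ and $D_{\text{opt}} \propto C^{0.27}$, favoring parameters over data. Chinchilla found $N_{\text{opt}} \propto C^{0.50}$ and $D_{\text{opt}} \propto C^{0.50}$, favoring equal allocation. The discrepancy comes from differences in experimental methodology.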
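The practical gap between the two recipes grows with the size of the scale-up. The sketch below assumes allocation exponents of roughly 0.73 and 0.27 for Kaplan (consistent with the 5.5x and 1.8x figures quoted earlier for a 10x compute increase) and 0.50 and 0.50 for Chinchilla; `scale_up` is a hypothetical helper.

```python
def scale_up(compute_multiplier, n_exponent, d_exponent):
    """Return (parameter multiplier, token multiplier) for a given compute multiplier."""
    return compute_multiplier ** n_exponent, compute_multiplier ** d_exponent

# Assumed exponents: N_opt ~ C**a, D_opt ~ C**b, with a + b = 1 so that C ~ N * D.
recipes = {"Kaplan": (0.73, 0.27), "Chinchilla": (0.50, 0.50)}

results = {}
for name, (a, b) in recipes.items():
    n_mult, d_mult = scale_up(100.0, a, b)
    results[name] = (n_mult, d_mult)
    print(f"{name}: 100x compute -> {n_mult:.1f}x parameters, {d_mult:.1f}x tokens")
```

In both cases the two multipliers multiply back to the compute multiplier; the recipes differ only in how that product is split.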

Intuition

Kaplan's experiments did not train each model to convergence. Models with more parameters appeared to improve loss more per FLOP because they had not yet reached their optimal loss for that amount of data. Chinchilla trained each model fully, revealing that data had been undervalued.

Proof Sketch

Not a formal proof. The key methodological differences: (1) Kaplan used a fixed number of training steps for models of different sizes; Chinchilla varied both model size and training duration. (2) Kaplan used a fixed learning rate schedule; Chinchilla tuned the schedule per run. (3) Chinchilla used three independent estimation approaches (fixed $N$ varying $D$, IsoFLOP profiles, and parametric loss fitting) that agreed on the equal-scaling result.

Why It Matters

This is a cautionary tale about empirical scaling research. Both groups fit power laws to real data and reached different conclusions because of experimental design choices. The lesson: scaling law exponents are not universal constants. They depend on the training protocol, architecture, and data distribution.

Failure Mode

Neither Kaplan nor Chinchilla accounts for data quality. A model trained on 20N tokens of noisy web scrapes may perform worse than one trained on 5N tokens of curated text. The Chinchilla ratio of 20 tokens per parameter is a rough guideline, not a physical law.

Post-Chinchilla Reality

Data quality dominates data quantity. Llama 3 (2024) trained on 15T tokens for a 70B model (ratio: ~214 tokens per parameter), far exceeding the Chinchilla-optimal 20. This works because the data was heavily filtered and deduplicated. The Chinchilla analysis assumed constant data quality; real training benefits from spending more compute on better data even past the "optimal" ratio.

Inference cost matters. Chinchilla minimizes loss for a fixed training FLOP budget. But a model is trained once and served many times. Because per-token inference compute grows roughly linearly with parameter count, a 70B model costs roughly 3.5x more per token to serve than a 20B model. If your total lifetime cost is dominated by inference (which it often is at scale), you may prefer a smaller model trained longer, even if it uses more training FLOPs than Chinchilla-optimal. This is sometimes called "inference-aware scaling."

Overtraining is common and deliberate. Llama models are deliberately "overtrained" relative to Chinchilla: more tokens per parameter than the compute-optimal ratio. This increases training cost but yields a smaller model that is cheaper to serve. The trade-off is rational when inference volume is high.
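A back-of-the-envelope model makes the trade-off concrete. The sketch below assumes training costs about $6ND$ FLOPs and inference costs about $2N$ FLOPs per served token (forward pass only); it ignores attention cost, batching, and hardware utilization, so treat the numbers as rough. All function names are hypothetical.

```python
def training_flops(n_params, n_tokens):
    return 6.0 * n_params * n_tokens

def inference_flops(n_params, served_tokens):
    return 2.0 * n_params * served_tokens

def inference_share(n_params, train_tokens, served_tokens):
    """Fraction of lifetime FLOPs spent on inference."""
    train = training_flops(n_params, train_tokens)
    serve = inference_flops(n_params, served_tokens)
    return serve / (train + serve)

# Per-token serving cost ratio between a 70B and a 20B model under this approximation.
per_token_ratio = inference_flops(70e9, 1) / inference_flops(20e9, 1)

# A 70B model trained at 20 tokens per parameter (1.4T tokens).
train_tokens = 20 * 70e9
share_at_break_even = inference_share(70e9, train_tokens, 3 * train_tokens)
share_at_heavy_use = inference_share(70e9, train_tokens, 30 * train_tokens)
print(per_token_ratio, share_at_break_even, share_at_heavy_use)
```

Under these assumptions inference FLOPs equal training FLOPs once the model has served three times as many tokens as it was trained on, independent of model size. Past that point, shrinking $N$ saves more than the extra training tokens cost.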

Repeating data has diminishing returns. Muennighoff et al. (2023) showed that repeating training data for up to about 4 epochs is nearly as effective as adding unique data, but returns diminish quickly beyond that, and performance can eventually degrade. This creates a practical constraint: if you run out of unique high-quality data, more compute does not help much.

Common Confusions

Chinchilla optimal does not mean actually optimal

Chinchilla-optimal minimizes loss for a given training compute budget. It does not account for inference cost, data quality variation, or the finite supply of training data. A model that is "Chinchilla-optimal" may be suboptimal for actual deployment.

Scaling laws predict loss, not capability

A 0.01-nat improvement in cross-entropy loss can push a model across a qualitative capability threshold (like arithmetic) or might have no noticeable effect on downstream tasks. Scaling laws describe smooth loss curves, but capabilities can emerge discontinuously (though the sharpness of emergence is debated).
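One proposed explanation for apparent discontinuities (the argument of Schaeffer et al., listed in the references) is that all-or-nothing metrics are sharply nonlinear in per-token loss. As a toy illustration: the probability a model assigns to an entire answer of $k$ tokens is $e^{-k \cdot \ell}$, where $\ell$ is the average per-token cross-entropy in nats over that answer. The answer length and loss values below are made up.

```python
import math

def sequence_probability(avg_loss_nats, answer_length):
    """Probability assigned to a whole answer, given its average per-token loss."""
    return math.exp(-avg_loss_nats * answer_length)

# Loss falls smoothly; the whole-answer probability stays near zero, then rises quickly.
losses = [1.0, 0.8, 0.6, 0.4, 0.2, 0.1]
curve = [sequence_probability(loss, answer_length=20) for loss in losses]
for loss, p in zip(losses, curve):
    print(f"avg loss {loss:.1f} nats -> whole-answer probability {p:.2e}")
```

Plotted on a linear axis, this curve looks flat and then abrupt even though the underlying loss improves gradually, which is one reason the sharpness of emergence is contested.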

Exercises

Problem

You have a compute budget of $C$ FLOPs and use the approximation $C \approx 6ND$. Using the Chinchilla-optimal ratio $D = 20N$, compute the optimal model size $N$ and number of training tokens $D$.

Problem

Suppose inference costs $c_{\text{inf}}$ per token and you will serve $T$ total inference tokens over the model's lifetime. Training costs $c_{\text{train}}$ total. The total cost is $c_{\text{train}} + c_{\text{inf}} \cdot T$ (where inference cost $c_{\text{inf}}$ scales linearly with $N$). For a fixed target loss $L^*$, how does the optimal $N$ change compared to pure Chinchilla-optimal?

References

Canonical:

- Hoffmann et al., "Training Compute-Optimal Large Language Models" (NeurIPS 2022)

- Kaplan et al., "Scaling Laws for Neural Language Models" (2020)

Current:

- Touvron et al., "Llama 2: Open Foundation and Fine-Tuned Chat Models" (2023)

- Muennighoff et al., "Scaling Data-Constrained Language Models" (NeurIPS 2023)

- Sardana & Frankle, "Beyond Chinchilla-Optimal: Accounting for Inference in Language Model Scaling Laws" (arXiv:2401.00448, 2024) -- formalizes the inference-aware scaling argument used implicitly by the Llama overtraining recipe

- Besiroglu et al., "Chinchilla Scaling: A Replication Attempt" (arXiv:2404.10102, 2024) -- replication of Hoffmann et al. recovers the roughly equal scaling of parameters and data but flags reporting errors in the original loss-fit table

- Schaeffer, Miranda, Koyejo, "Are Emergent Abilities of Large Language Models a Mirage?" (NeurIPS 2023) -- argues many "emergent" capabilities are artifacts of discontinuous metrics; relevant to the loss-vs-capability confusion above

- Krajewski et al., "Scaling Laws for Fine-Grained Mixture of Experts" (ICML 2024) -- joint scaling law for MoE that subsumes Chinchilla as the dense limit

Foundational context:

- Sutton, "The Bitter Lesson" (2019) -- the methodological backdrop for why scaling-law work matters at all

Last reviewed: April 26, 2026
