LLM Construction

Sparse Attention and Long Context

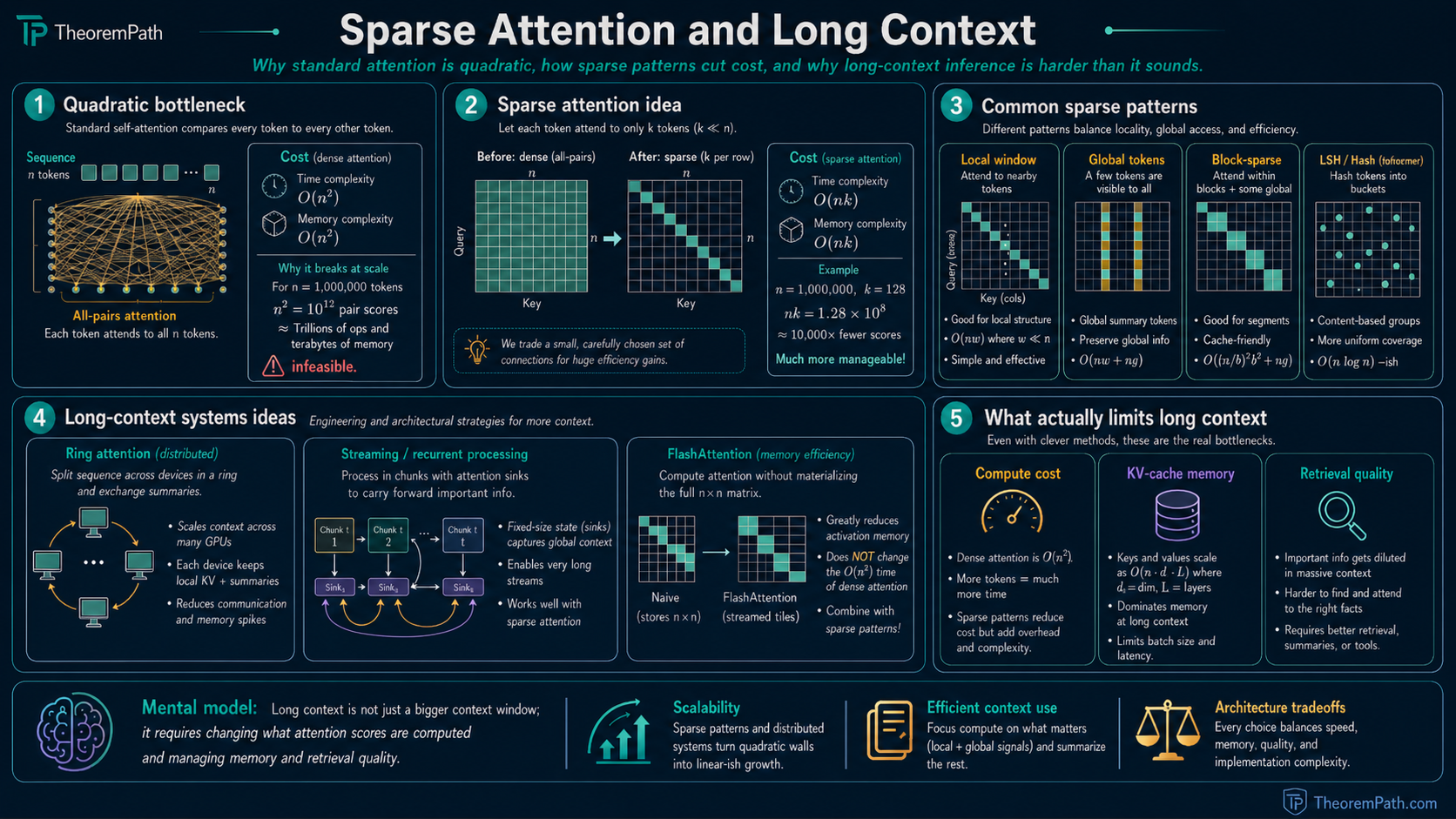

Standard attention is O(n²). Sparse patterns (Longformer, Sparse Transformer, Reformer), ring attention for distributed sequences, streaming with attention sinks, and why extending context is harder than it sounds.

Prerequisites

Why This Matters

Standard self-attention computes all pairwise interactions between tokens, costing in time and memory. For a context of 1 million tokens, this means operations per attention layer. This is not feasible.

Hide overviewShow overview

Long-context capability is a central bottleneck for LLMs. Longer context enables processing entire codebases, full books, and long conversations. But "just make the context longer" hits three walls: quadratic compute cost, memory for storing the KV cache, and degraded retrieval quality as context grows.

The Quadratic Bottleneck

Standard Attention Complexity

For a sequence of tokens with head dimension , the attention computation requires:

- Time: for the matrix multiply and the attention-weighted sum

- Memory: to store the attention matrix (or with FlashAttention recomputation, but time remains )

FlashAttention reduces the memory bottleneck from to by tiling and recomputation, but the time complexity remains. To break the quadratic barrier, you must change which attention scores are computed.

Sparse Attention Patterns

Sparse Attention Reduces Complexity

Statement

If each token attends to at most other tokens (where ), the attention computation requires time and memory for the sparse attention matrix. When or , this is subquadratic in .

Intuition

Instead of computing all attention scores, compute only of them. The question is which to choose. Different methods answer this differently, and the quality of the answer determines whether the approximation is good enough.

Proof Sketch

Direct counting. Each of tokens computes attention scores (each costing for the dot product), applies softmax over values, and computes a weighted sum of value vectors (each of dimension ). Total: .

Why It Matters

This is the foundation of all efficient attention methods. The specific value of and the choice of which tokens each position attends to define the design space.

Failure Mode

If the sparsity pattern misses tokens that carry critical information, the model performs worse than full attention regardless of computational savings. Sparse attention trades off expressiveness for efficiency. The right tradeoff depends on the task.

Local Window Attention (Longformer)

Each token attends to a fixed window of surrounding tokens: token attends to tokens . Complexity: , linear in for fixed .

Longformer (Beltagy et al., 2020) adds a small number of "global" tokens that attend to all positions and are attended to by all positions. This combines local context (from windows) with global context (from designated tokens like [CLS]).

Limitation: information must propagate through hops to travel across the full sequence. For a 100k-token sequence with , this requires about 200 layers of propagation.

Strided Attention (Sparse Transformer)

Child et al. (2019) use two interleaved patterns: a local window pattern and a strided pattern where token attends to tokens for stride . Each pattern has connections per token, giving total complexity.

The strided pattern enables direct long-range connections without multi-hop propagation.

Hash-Based Attention (Reformer)

LSH Attention Approximation

Statement

Reformer (Kitaev et al., 2020) uses locality-sensitive hashing (LSH) to assign queries and keys to buckets. Tokens only attend within their bucket. If the hash function satisfies where is monotonically increasing, then the expected attention pattern concentrates on the highest-scoring key-query pairs. With buckets of average size , the complexity is .

Intuition

Similar queries and keys are likely to land in the same hash bucket. Since attention weights are dominated by the largest dot products (after softmax), ignoring low-similarity pairs has minimal effect on the output.

Proof Sketch

By the LSH property, query-key pairs with high cosine similarity collide with high probability. Softmax concentrates mass on large dot products exponentially. The attention output is therefore approximated well by only summing over tokens in the same bucket, up to an error that decreases with the number of hash rounds.

Why It Matters

LSH attention is when bucket size is . It adapts the sparsity pattern to the data rather than using a fixed pattern.

Failure Mode

Hash collisions are stochastic: important keys may land in different buckets from their queries. Multiple hash rounds reduce this risk but increase cost. In practice, Reformer has been largely superseded by FlashAttention and other approaches that keep full attention but optimize memory and compute constants.

Ring Attention: Distributing Long Sequences

Ring attention (Liu et al., 2023) distributes a long sequence across devices. Each device holds tokens. The devices are arranged in a ring. Each device computes attention for its local tokens against one block of KV pairs, then passes the KV block to the next device. After rounds, each device has attended to all tokens.

Total memory per device: for the attention matrix (or with FlashAttention). This scales context length linearly with the number of devices.

Streaming with Attention Sinks (StreamingLLM)

Xiao et al. (2023) found empirically that pretrained LLMs allocate disproportionate attention to the first few tokens regardless of their content, naming this pattern "attention sinks." Why this happens mechanistically is still debated; one common hypothesis is that softmax forces attention mass to land somewhere even when no later token is informative, and the first tokens become the default sink. StreamingLLM keeps a fixed-size window of recent tokens plus the first few tokens (the sinks), enabling arbitrarily long inference streams with fixed memory, at the cost of losing access to middle-of-context information.

Classical Sparse Patterns, Further Coverage

BigBird

Zaheer et al. (2020) combine three components: a local window, a small set of global tokens (as in Longformer), and a random attention pattern where each token attends to uniformly sampled positions. The random edges act as shortcuts across the sequence. Total complexity is per layer for fixed window, global, and random budgets. The paper also proves that BigBird attention is a universal approximator of sequence-to-sequence functions and is Turing-complete, matching the expressivity of full attention.

Linear Attention Lineage

Several 2020 methods replaced softmax attention with kernelizable forms that give cost.

- Linear Attention (Katharopoulos et al., 2020). Replace with for a feature map . The right-hand product is computed first in time, avoiding the matrix. Autoregressive inference becomes an RNN-style recurrence.

- Performers (Choromanski et al., 2020) use random features (FAVOR+) to approximate the softmax kernel with positive orthogonal features, giving an unbiased estimator of standard attention at cost.

- Linformer (Wang et al., 2020) projects keys and values to a fixed low-rank dimension via a learned linear map, reducing complexity to . Assumes the attention matrix is approximately low-rank.

Hyena and H3

- H3 (Fu et al., 2022). Hungry Hungry Hippos. A state-space layer that closed much of the quality gap between SSMs and Transformers and directly motivated Mamba.

- Hyena (Poli et al., 2023). A sub-quadratic operator built from long implicit convolutions combined with data-controlled element-wise gating. Competitive with attention at moderate scales.

Non-Attention Alternatives for Long Context

The dominant long-context research direction since 2023 is not sparse attention but replacing attention with mechanisms that are linear in sequence length by construction.

Selective State-Space Models: Mamba

Mamba (Gu and Dao, 2023) extends structured state-space models with input-dependent parameters. A vanilla SSM applies a fixed linear recurrence , . Mamba makes , , and the discretization step functions of the input token, giving a selection mechanism that lets the model choose what to remember and what to ignore. Training is via a hardware-aware parallel scan, and autoregressive inference is per token since the recurrent state has fixed size independent of context length. At roughly 3B parameters, Mamba matches Transformer language-modeling quality and exceeds it on long-context synthetic tasks.

Mamba-2 (Dao and Gu, 2024) introduces structured state-space duality (SSD), showing that a restricted family of SSMs is equivalent to a form of masked attention. This duality makes the SSM update expressible as matrix multiplications that map onto tensor cores, recovering the training throughput of attention. SSD also enables hybrid Mamba-Transformer architectures where a few attention layers are interleaved with many SSM layers.

RWKV and RetNet

- RWKV (Peng et al., 2023). A receptance-weighted key-value design that mixes linear-attention-like updates with time-decay gating. Trains in parallel like a Transformer and runs inference as an RNN with constant state.

- RetNet (Sun et al., 2023). Introduces a retention mechanism with an explicit exponential decay matrix. The same operator admits parallel, recurrent, and chunkwise-recurrent forms, giving Transformer-style training and RNN-style inference in the same architecture.

Gated Linear Attention

GLA (Yang et al., 2024) adds data-dependent gating to linear attention, closing much of the quality gap with softmax attention. A hardware-efficient chunkwise algorithm keeps training throughput competitive with FlashAttention-style implementations.

Positional Encoding Scaling and Benchmarks

Transformers with RoPE (rotary position embeddings) trained at context length do not automatically generalize to much longer contexts. A family of methods rescales or reinterprets RoPE frequencies to extend the usable range.

Position Interpolation

Chen et al. (2023) linearly rescale position indices so that position at inference maps to . This compresses the rotary frequencies into the range the model saw during training. Combined with a short fine-tune, it extended Llama from 2k to 32k context.

NTK-Aware and Dynamic NTK

Community posts in mid-2023 (u/bloc97 and u/emozilla on r/LocalLLaMA) proposed NTK-aware scaling, which modifies the RoPE base rather than the positions, leaving high-frequency components intact while stretching low-frequency ones. Dynamic NTK adjusts the base on the fly as the observed sequence length grows. Both ideas were later formalized and benchmarked in follow-up papers.

YaRN and LongRoPE

- YaRN (Peng et al., 2023), Yet another RoPE extensioN, combines NTK-aware interpolation with frequency-dependent scaling: low-frequency dimensions are interpolated, high-frequency dimensions are preserved, and a temperature adjustment compensates for attention-score magnitudes at longer lengths. Achieves strong perplexity at context lengths the base model never saw.

- LongRoPE (Ding et al., 2024) searches for non-uniform per-dimension scaling factors using evolutionary search, extending Llama-family models to 2M tokens with short fine-tunes.

Long-Context Benchmarks

Perplexity at long context is a weak signal; it can stay flat while task performance collapses. Modern benchmarks probe specific long-context capabilities.

- NIAH (Needle in a Haystack, Kamradt 2023, github.com/gkamradt/LLMTest_NeedleInAHaystack). Insert a single fact at a random depth of a long document and ask the model to recover it. Ad-hoc but widely cited.

- RULER (Hsieh et al., 2024). A synthetic benchmark covering single-needle and multi-needle retrieval, variable tracking, multi-hop aggregation, and long-context QA. Quantifies the "effective context length" below the claimed window.

- InfiniteBench (Zhang et al., 2024). Realistic tasks at 100k+ tokens including code debugging, math, and long-document retrieval.

Production Long-Context Systems

- Gemini 1.5 (Google, 2024 technical report). Deployed 1M token context with strong NIAH recall, later extended to 2M for the Pro tier.

- Claude 3 (Anthropic, 2024). 200k token context as the enterprise default across the Opus, Sonnet, and Haiku tiers.

Serving these context lengths is as much a KV-cache engineering problem as an attention-mechanism problem. MLA, SGLang's RadixAttention, FP8 KV quantization, and KV-cache selection methods like SnapKV dominate the wall-clock cost at long context, independent of whether attention is dense, sparse, or an SSM.

Why Extending Context is Hard

Three problems compound:

1. Quadratic cost. Even with FlashAttention, wall-clock time grows fast. Doubling context quadruples attention compute.

2. Lost-in-the-middle. Liu et al. (2023) showed empirically that LLMs retrieve information well from the beginning and end of a long context but poorly from the middle. The phenomenon is robust across models, but the mechanism is still open. Candidate explanations include positional encoding extrapolation, training-data position bias, and attention-sink interactions; the paper itself documents the effect without committing to a single cause.

3. Distributional shift. Models trained on 4k context and extended to 128k via positional interpolation may have degraded quality at the extended lengths. The model has never seen token interactions at distance 100k during training.

Common Confusions

FlashAttention does not reduce attention complexity

FlashAttention is an exact algorithm that reduces memory from to through tiling and recomputation. It does not change the time complexity. It is an IO optimization, not an algorithmic one. Sparse attention methods change the algorithm itself.

Longer context does not mean better retrieval

A model with 1M token context can hold a full book, but it may not reliably answer questions about a specific paragraph in the middle. Context length is a capacity bound, not a guarantee of utilization. Retrieval quality within context is a separate problem.

Canonical Examples

Longformer vs full attention on document classification

For a 4096-token document with window size and 8 global tokens, Longformer computes attention scores. Full attention computes attention scores. Longformer uses about 6.5% of the compute while matching full attention accuracy on document classification tasks (Beltagy et al., 2020).

Summary

- Standard attention costs time; this is the primary bottleneck for long context

- Sparse patterns (local, strided, hash-based) reduce to or

- Ring attention distributes sequences across devices for linear memory scaling

- Attention sinks enable streaming inference at the cost of mid-context access

- Longer context does not guarantee better retrieval due to the lost-in-the-middle effect

- Most production systems combine efficient attention with retrieval (RAG) rather than relying on context length alone

Exercises

Problem

A model uses local window attention with . How many attention layers are needed for information at token 0 to influence the output at token 8192?

Problem

You have 8 devices with ring attention and want to process a 1M-token sequence. Each device has 80GB of memory. The KV cache stores 2 floats (K and V) per token per head in fp16 (2 bytes each). The model has 32 layers and 32 heads with head dimension 128. Does the KV cache fit on each device?

References

Canonical:

- Vaswani et al., "Attention Is All You Need" (2017), Section 3 (baseline complexity)

- Child et al., "Generating Long Sequences with Sparse Transformers" (2019)

- Dao et al., "FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness" (2022), arXiv:2205.14135

- Dao, "FlashAttention-2: Faster Attention with Better Parallelism and Work Partitioning" (2023), arXiv:2307.08691

Current, sparse and efficient attention:

- Beltagy et al., "Longformer: The Long-Document Transformer" (2020), arXiv:2004.05150

- Kitaev et al., "Reformer: The Efficient Transformer" (2020), arXiv:2001.04451

- Zaheer et al., "Big Bird: Transformers for Longer Sequences" (2020), arXiv:2007.14062

- Katharopoulos et al., "Transformers are RNNs: Fast Autoregressive Transformers with Linear Attention" (2020), arXiv:2006.16236

- Choromanski et al., "Rethinking Attention with Performers" (2020), arXiv:2009.14794

- Wang et al., "Linformer: Self-Attention with Linear Complexity" (2020), arXiv:2006.04768

- Liu et al., "Ring Attention with Blockwise Transformers for Near-Infinite Context" (2023), arXiv:2310.01889

- Xiao et al., "Efficient Streaming Language Models with Attention Sinks" (2023), arXiv:2309.17453

- Liu et al., "Lost in the Middle: How Language Models Use Long Contexts," TACL 2024 (arXiv:2307.03172)

Non-attention long-context architectures:

- Fu et al., "Hungry Hungry Hippos: Towards Language Modeling with State Space Models" (2022), arXiv:2212.14052

- Poli et al., "Hyena Hierarchy: Towards Larger Convolutional Language Models" (2023), arXiv:2302.10866

- Gu and Dao, "Mamba: Linear-Time Sequence Modeling with Selective State Spaces" (2023), arXiv:2312.00752

- Dao and Gu, "Transformers are SSMs: Generalized Models and Efficient Algorithms Through Structured State Space Duality" (2024), arXiv:2405.21060

- Peng et al., "RWKV: Reinventing RNNs for the Transformer Era" (2023), arXiv:2305.13048

- Sun et al., "Retentive Network: A Successor to Transformer for Large Language Models" (2023), arXiv:2307.08621

- Yang et al., "Gated Linear Attention Transformers with Hardware-Efficient Training" (2024), arXiv:2312.06635

Positional encoding scaling:

- Chen et al., "Extending Context Window of Large Language Models via Positional Interpolation" (2023), arXiv:2306.15595

- Peng et al., "YaRN: Efficient Context Window Extension of Large Language Models" (2023), arXiv:2309.00071

- Ding et al., "LongRoPE: Extending LLM Context Window Beyond 2 Million Tokens" (2024), arXiv:2402.13753

- bloc97 and emozilla, NTK-aware and Dynamic NTK scaling (2023), community posts on r/LocalLLaMA

Long-context benchmarks:

- Kamradt, "Needle in a Haystack - Pressure Testing LLMs" (2023), github.com/gkamradt/LLMTest_NeedleInAHaystack

- Hsieh et al., "RULER: What's the Real Context Size of Your Long-Context Language Models?" (2024), arXiv:2404.06654

- Zhang et al., "Bench: Extending Long Context Evaluation Beyond 100K Tokens" (InfiniteBench, 2024), arXiv:2402.13718

KV-cache and serving optimization:

- Liu et al., "DeepSeek-V2: A Strong, Economical, and Efficient Mixture-of-Experts Language Model" (2024), arXiv:2405.04434 (introduces Multi-Head Latent Attention / MLA)

- Li et al., "SnapKV: LLM Knows What You are Looking for Before Generation" (2024), arXiv:2404.14469

- Zheng et al., "SGLang: Efficient Execution of Structured Language Model Programs" (2024), arXiv:2312.07104 (introduces RadixAttention for KV prefix sharing)

Production systems:

- Google, "Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context" (2024), technical report

- Anthropic, "Introducing the next generation of Claude" (Claude 3, 2024), product announcement

Next Topics

- Context engineering: system-level strategies for managing what goes into the context window

Last reviewed: April 27, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

2- Attention Mechanism Theorylayer 4 · tier 2

- Gemini and Google Modelslayer 5 · tier 2

Derived topics

2- Forgetting Transformer (FoX)layer 4 · tier 2

- Context Engineeringlayer 5 · tier 2

Graph-backed continuations