Learning Theory Core

VC Dimension

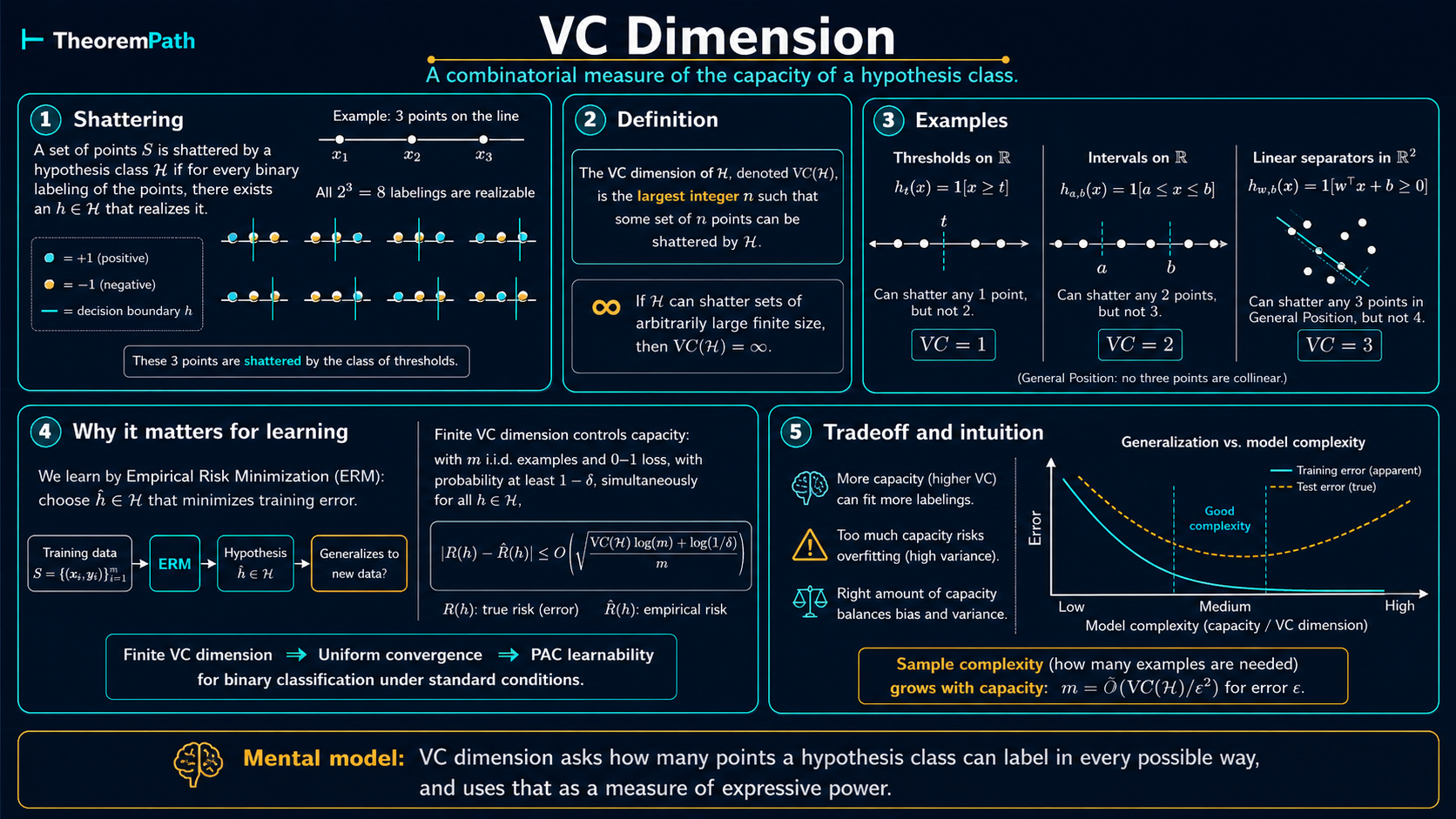

The Vapnik-Chervonenkis dimension: a combinatorial measure of hypothesis class complexity that characterizes learnability in binary classification.

Prerequisites

Why This Matters

Uniform convergence tells you that controlling hypothesis class complexity gives generalization. VC dimension tells you how to measure that complexity for infinite classes.

Hide overviewShow overview

The finite-class bound depends on , which is infinite for continuous hypothesis classes like linear classifiers. VC dimension replaces the crude counting argument with a combinatorial measure: how many points can the class label in all possible ways? This turns out to be the right notion of complexity for binary classification. Finite VC dimension is both necessary and sufficient for PAC learnability.

Mental Model

Imagine placing points on a table and asking: can my hypothesis class produce every possible labeling of these points? If I put down 3 points and my class can label them in all ways, the class "shatters" those 3 points. The VC dimension is the largest number of points you can shatter.

A class with high VC dimension can fit many patterns, including spurious ones. A class with low VC dimension is constrained. It cannot memorize arbitrary labelings, so good performance on training data is more likely to reflect genuine structure.

Formal Setup and Notation

We work with binary classification: and . The loss is the 0-1 loss .

For a set , the restriction of to is:

This is the set of all labeling patterns that can produce on .

Core Definitions

Shattering

A hypothesis class shatters a set if and only if for every labeling , there exists such that for all .

Equivalently, . The restriction of to contains all possible binary labelings.

Growth Function

The growth function (or shattering coefficient) of is:

It counts the maximum number of distinct labelings can produce on any set of points. Always , with equality if and only if can shatter some set of size .

VC Dimension

The VC dimension of , denoted or , is the largest such that .

if and only if can shatter arbitrarily large sets.

Critical asymmetry: To show , you exhibit one set of size that is shattered. To show , you must show that no set of size can be shattered. The existential vs. universal quantifiers make lower bounds easier than upper bounds.

Main Theorems

Sauer-Shelah Lemma

Statement

If , then for all :

Formalization note. The claim-level Lean wrapper verifies the binomial-sum form above by translating the restriction of a binary hypothesis class to a finite sample into a finite family of subsets. The common analytic estimate

for is a downstream corollary, not part of the current verified Lean claim. The form requires ; for , it can be smaller than the actual bound and is not valid.

Intuition

Once exceeds the VC dimension, the hypothesis class cannot produce all labelings. The Sauer-Shelah lemma quantifies exactly how constrained the class becomes: the number of achievable labelings grows only polynomially, not exponentially. This is the phase transition that makes uniform convergence possible for finite-VC-dimension classes.

Proof Sketch

By induction on . The formal object is the restriction on the set . The key step: for any restriction on points with VC dimension , partition the labelings by what happens when you project out one point . The labelings where each projection onto appears with only one value of the -coordinate form a restriction of VC dimension at most on the remaining points. The labelings where both values of the -coordinate appear (both extensions are achieved) form a restriction of VC dimension at most on points, because if this class shattered a set of size , adding would give a shattered set of size for the original class. Apply the inductive hypothesis to both parts and use the identity . Rigorous versions are usually presented via Pajor's trace argument; see Alon, Ben-David, Cesa-Bianchi, and Haussler (1997) or Pajor (1985).

Why It Matters

Without Sauer-Shelah, you cannot plug VC dimension into uniform convergence bounds. The lemma is the bridge: it converts the combinatorial statement "VC dimension is " into the quantitative bound , which can then be used in place of in union-bound-style arguments. The transition from exponential to polynomial growth is what makes learning possible.

Failure Mode

The Sauer-Shelah bound is tight in the worst case (there exist classes achieving equality), but can be very loose for specific hypothesis classes. For example, the class of thresholds on has VC dimension 1 and growth function , which matches the Sauer-Shelah bound . But for more structured classes the bound can significantly overestimate.

VC Generalization Bound

Statement

Let have VC dimension . For any distribution and any , with probability at least over an i.i.d. sample of size :

where is a universal constant independent of , , , and . The original VC proof (Vapnik 1998) gives up to logarithmic factors; Mohri, Rostamizadeh, and Talwalkar (2018), Theorem 3.22, give an explicit form suitable for numerical sample-size calculations. In particular, the sample complexity of uniform convergence (and hence ERM learning) is:

Intuition

Replace in the finite-class bound with . Since is finite for learnable classes, this gives a meaningful bound even for infinite hypothesis classes. For a continuous class, is infinite; finite VC dimension gives in place of , which is what makes learning with infinite classes (like all halfspaces) possible.

Proof Sketch

The proof uses the symmetrization technique in four steps:

- Double sampling. Introduce a "ghost sample" of the same size. Show that .

- Rademacher symmetrization. Swap labels between and using random signs . The supremum does not change in expectation because the swapped version has the same distribution.

- Conditioning and growth function. Condition on the points and use the growth function to bound the number of effective distinct hypotheses: at most .

- Sauer-Shelah + Hoeffding. Replace with and apply Hoeffding to the resulting finite set of hypotheses. Union bound over at most effective hypotheses.

Why It Matters

This is the central theorem of classical learning theory for binary classification. Combined with the fundamental theorem of statistical learning, it shows a clean equivalence—a binary hypothesis class is PAC-learnable if and only if its VC dimension is finite. The sample complexity scales linearly in (up to log factors).

Failure Mode

The VC bound is distribution-free (worst case over all ). This makes it robust but often extremely loose. For "nice" distributions (e.g., data with large margin), data-dependent bounds like Rademacher complexity can be much tighter. More critically, the VC bound gives vacuous guarantees for modern neural networks, which have VC dimension proportional to the number of parameters. often in the billions.

Binary VC Bridge for Zero-One Loss

Statement

For binary classifiers with zero-one loss , the number of distinct loss patterns on a sample equals the number of distinct binary labeling patterns (the trace):

As a corollary, the effective class satisfies the Sauer-Shelah bound directly from the binary trace's VC dimension :

This removes the "user-supplied growth assumption" caveat from the VC-style Rademacher bounds for binary classification with 0-1 loss.

Intuition

A binary classifier's loss pattern on a sample is determined entirely by its prediction pattern: knowing which points are classified as 0 vs 1 determines which points incur loss 0 vs loss 1. The map from prediction patterns to loss vectors is injective (distinct predictions yield distinct losses), and the map from prediction patterns to trace sets is also injective. Since both the effective class and the trace are images of the same prediction-pattern set through injective maps, they have equal cardinality.

Proof Sketch

Both the effective class and the binary trace factor through the prediction pattern :

- effectiveClass = image of prediction patterns under (map )

- binaryClassTrace = image of prediction patterns under (map )

Both toLossVec and toFilterSet are injective on , so .

Why It Matters

Without this bridge, the VC-style Rademacher sample-complexity chain requires the user to supply an external growth bound on the effective class. This theorem closes that gap for binary classification with 0-1 loss: the Sauer-Shelah bound on the binary trace directly bounds the effective loss-pattern class, making the full chain self-contained.

Failure Mode

The equality holds only for 0-1 loss on binary classifiers. For real-valued loss functions or multi-class classifiers, the effective class can differ from the binary trace; the bridge does not generalize without additional structure (e.g., a contraction argument for Lipschitz losses).

Proof Ideas and Templates Used

The VC bound proof introduces two major techniques:

-

Symmetrization (ghost sample trick): replace the unknown population risk with the empirical risk on a second independent sample. This converts a probability-vs-expectation gap into an empirical-vs-empirical gap, which is easier to control.

-

Effective hypothesis counting: on any fixed set of points, the infinite class induces at most distinct labelings. Sauer-Shelah bounds this by . Now you have a finite union bound with polynomial (not infinite) cost.

These two ideas recur throughout learning theory, and are also the foundation for Rademacher complexity bounds.

Canonical Examples

Thresholds on the real line: VC dimension 1

Let be the class of one-sided thresholds.

Lower bound (): A single point is shattered: take for the labeling and for the labeling .

Upper bound (): Take any two points . The labeling would require a threshold with and , which forces , contradicting . So no two points are shattered.

Therefore . The growth function matches the Sauer-Shelah bound exactly: , equal to .

Halfplanes in R^2 have VC dimension 3

Let be the set of all halfplanes in : .

Lower bound (): Take three non-collinear points, e.g., the vertices of an equilateral triangle. Non-collinearity is essential: for three collinear points, the middle point cannot be separated from both endpoints by a halfplane, so is unachievable.

For non-collinear points, check all labelings: and are achieved by halfplanes excluding or containing all three; the three singleton-positive labelings each isolate one vertex on one side of a line (possible by the symmetry of the equilateral triangle); the three singleton-negative labelings follow by flipping the halfplane orientation. All 8 labelings are achievable.

Upper bound (): Take any 4 points in . If one point lies inside the convex hull of the other three, the labeling that assigns the opposite label from all others cannot be achieved by a halfplane (because is a convex combination of points with the opposite label). If no point is in the convex hull of the others (the 4 points form a convex quadrilateral), the "alternating" labeling on consecutive vertices cannot be achieved. Either way, no set of 4 points is shattered.

Therefore . More generally, halfspaces in have VC dimension .

General via Radon's theorem. The clean proof of the upper bound for arbitrary uses Radon's theorem (Radon 1921): any points in can be partitioned into two disjoint sets whose convex hulls intersect. If a halfspace labels as and as , a point in would lie on both sides, a contradiction. So no points are shattered. See Shalev-Shwartz and Ben-David, Understanding Machine Learning, Theorem 9.2.

Intervals on the real line: VC dimension 2

.

Shatters any 2 points : use for ; for ; for ; for (a bounded interval disjoint from both points).

Cannot shatter any 3 points : the labeling requires an interval containing and but not , which is impossible for a single interval. So .

The sin-classifier: one parameter, infinite VC dimension

Consider . Despite having only one parameter , this class has infinite VC dimension.

Infinite VC dimension means arbitrarily large shattered sets exist, not that every point configuration is shatterable. For every , there exist -point configurations that are shattered by this class. The classical construction takes for : for any target labeling , one can choose large enough so that matches at each sample point. This shows VC dimension can be much larger than the number of parameters. See Vapnik, Statistical Learning Theory (1998), §4.8, or Anthony and Bartlett, Neural Network Learning, Theorem 7.8.

Extensions to Real-Valued Function Classes

VC dimension is defined for binary function classes. For real-valued function classes (regression, scoring functions, neural network outputs before thresholding), two standard extensions apply:

- Pseudo-dimension (Pollard 1984; Kearns and Schapire 1994): the VC dimension of the class of thresholded functions . Used for uniform convergence bounds on real-valued losses.

- Fat-shattering dimension (Kearns and Schapire 1994; Bartlett, Long, and Williamson 1996): a scale-sensitive refinement requiring the threshold separation to exceed a margin . Useful for margin bounds and continuous classes where pseudo-dimension is too coarse.

Bartlett, Harvey, Liaw, and Mehrabian (2019), cited in the references, actually proves pseudo-dimension bounds for piecewise-linear networks; the same bounds transfer to VC dimension for thresholded classifiers.

PAC Learnability Characterization

The fundamental theorem of statistical learning states that for a binary class with 0-1 loss:

- is realizable-PAC-learnable iff iff ERM on is a realizable PAC learner (Shalev-Shwartz and Ben-David, Understanding Machine Learning, Theorem 6.7).

- is agnostic-PAC-learnable iff iff ERM on is an agnostic PAC learner (Shalev-Shwartz and Ben-David, Theorem 6.8).

Finite VC dimension characterizes learnability in both the realizable setting (where some has zero risk) and the agnostic setting (no such assumption). In both cases the same combinatorial quantity, , governs sample complexity.

Common Confusions

VC dimension is not the number of parameters

A common misconception is that VC dimension equals the number of parameters. While this happens to be true for halfspaces ( parameters, VC dimension ), it is not true in general. The sin-classifier above has 1 parameter but infinite VC dimension. Conversely, there exist high-dimensional parameterizations with low VC dimension due to structural constraints. The relationship between parameters and VC dimension is nuanced and class-specific.

Shattering requires some set, not every set

means there exists a set of size that is shattered, not that every set of size is shattered. When proving a lower bound, you only need to find one shatterable set. When proving an upper bound, you must show that no set of size can be shattered. this requires a universal argument, which is typically harder.

VC dimension is worst-case; Rademacher complexity is data-dependent

VC dimension is a combinatorial worst-case measure: it is a single number that depends only on , not on the data distribution. Rademacher complexity is an average-case measure: it measures how well the class correlates with random noise on the specific data at hand. When the data has structure (e.g., large margin), Rademacher complexity often captures this while VC dimension does not. In typical regimes — favorable distributions, margin structure, or rich function classes — Rademacher bounds are tighter, and worst-case Rademacher complexity is in fact bounded by the VC-based growth function via Massart's lemma, so the standard Rademacher route gives bounds at least as good as the symmetrization-based VC route. But "always at least as loose" is too strong: the two bound paths use different proof routes, different absolute constants, and different factors, so for specific a particular VC inequality can come out smaller than a particular Rademacher inequality. The right phrasing is that Rademacher complexity is the more refined measure, and its bounds typically dominate; "always tighter" requires fixing the proof template.

Summary

- VC dimension = size of the largest set the class can shatter

- Sauer-Shelah: once past the VC dimension, growth is polynomial (), not exponential ()

- VC generalization bound: sample complexity for uniform convergence

- Finite VC dimension realizable-PAC-learnability agnostic-PAC-learnability (for binary classification with 0-1 loss), and in both cases ERM is a valid learner

- Halfspaces in :

- VC dimension does not equal the number of parameters in general

- VC dimension is worst-case and distribution-free; Rademacher complexity is distribution-aware and often tighter

Exercises

Problem

Compute the VC dimension of the class of all unions of intervals on the real line: where each .

Problem

Let and be hypothesis classes with VC dimensions and . Show that .

Problem

A deep ReLU neural network with total weights and layers has VC dimension (Bartlett, Harvey, Liaw, and Mehrabian 2019, arXiv:1703.02930). The form applies to shallow (constant-depth) networks and underestimates the VC dimension of deep networks. If a network has parameters, layers, and is trained on examples, what does the VC bound say about generalization? Why is this problematic given that such networks often achieve low test error in practice?

Related Comparisons

References

Canonical:

- Vapnik & Chervonenkis, "On the Uniform Convergence of Relative Frequencies of Events to their Probabilities" (1971). Proved the growth-function bound before Sauer and Shelah.

- Sauer, "On the Density of Families of Sets" (1972). Independent proof of the growth-function bound.

- Shelah, "A Combinatorial Problem; Stability and Order for Models and Theories in Infinitary Languages" (1972). Independent proof from model theory. The result is commonly called the Sauer-Shelah lemma (sometimes Sauer-Shelah-Perles, with Perles credited for an unpublished earlier proof).

- Radon, "Mengen konvexer Körper, die einen gemeinsamen Punkt enthalten" (1921). Radon's theorem, used for the halfspace VC upper bound.

- Shalev-Shwartz & Ben-David, Understanding Machine Learning, Chapters 6-7 and Theorem 9.2

Current:

- Zhang, Bengio, Hardt, Recht, Vinyals, "Understanding deep learning requires rethinking generalization" (ICLR 2017)

- Bartlett, Harvey, Liaw, Mehrabian, "Nearly-tight VC-dimension and pseudodimension bounds for deep neural networks" (JMLR 2019, arXiv:1703.02930). Gives pseudo-dimension bounds for deep piecewise-linear networks.

Supporting:

- Vapnik, Statistical Learning Theory (1998), §4.8. Sin-classifier example and explicit VC constants.

- Anthony & Bartlett, Neural Network Learning: Theoretical Foundations (1999), Theorem 7.8.

- Mohri, Rostamizadeh, Talwalkar, Foundations of Machine Learning (2018), Chapters 2-4 and Theorem 3.22 for explicit VC constants.

- Pollard, Convergence of Stochastic Processes (1984). Pseudo-dimension.

- Kearns & Schapire, "Efficient Distribution-Free Learning of Probabilistic Concepts" (1994). Fat-shattering dimension.

- Alon, Ben-David, Cesa-Bianchi, Haussler, "Scale-sensitive dimensions, uniform convergence, and learnability" (JACM 1997). Rigorous trace-based proofs.

- Pajor, Sous-espaces des espaces de Banach (1985). Trace argument for Sauer-Shelah.

Next Topics

From VC dimension, the natural next steps are:

- Rademacher complexity: data-dependent complexity that can give tighter, distribution-aware bounds

- Algorithmic stability: an alternative to uniform convergence that can explain generalization when VC bounds are vacuous

Last reviewed: April 26, 2026

Claim evidence

Selected claims on this topic have machine-checked support.

Collapsed by default because this is audit material. Open it to see exact theorem names, claim scopes, and source roles.

Click to expand1

claim matches

1

dependency proofs

0

incomplete markers

Claim evidence

Selected claims on this topic have machine-checked support.

Collapsed by default because this is audit material. Open it to see exact theorem names, claim scopes, and source roles.

Click to expand1

claim matches

1

dependency proofs

0

incomplete markers

This is claim-level evidence, not a whole-page badge. The checked theorem must match the recorded claim scope. Supporting lemmas stay labeled as dependency proofs, not full claim matches.

Binary Vc Zero One Loss Bridge

Formal support is recorded for this governed claim.

Formal record

- Checked theorem

- TheoremPath.LearningTheory.BinaryVCBridge.effectiveClass_zeroOneLoss_card_eq_binaryClassTrace

- Claim scope

- effectiveClass(zeroOneLoss h, x).card = binaryClassTrace(h, x).card AND effectiveClass.card ≤ Σ {k≤vcDim} C(n,k)

- Proof scope

- binary vc bridge zero one loss equality and sauer shelah corollary

Sauer Shelah Lemma

Formal support is recorded for this governed claim.

Formal record

- Checked theorem

- TheoremPath.LearningTheory.VCDimension.sauerShelahFiniteSetFamily

- Claim scope

- finite set family sauer shelah binomial sum

- Proof scope

- exact mathlib wrapper for sauer shelah binomial sum

- Mathlib theorem

- Finset.card_le_card_shatterer, Finset.card_shatterer_le_sum_vcDim

See the public evidence page for the display rules and representative Lean mapping examples.

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

12- Sets, Functions, and Relationslayer 0A · tier 1

- Concentration Inequalitieslayer 1 · tier 1

- PAC Learning Frameworklayer 1 · tier 1

- Understanding Machine Learning (Shalev-Shwartz, Ben-David)layer 1 · tier 1

- Empirical Risk Minimizationlayer 2 · tier 1

Derived topics

5- Sample Complexity Boundslayer 2 · tier 1

- Algorithmic Stabilitylayer 3 · tier 1

- Rademacher Complexitylayer 3 · tier 1

- Implicit Bias and Modern Generalizationlayer 4 · tier 1

- Glivenko-Cantelli Theoremlayer 2 · tier 2