ML Methods

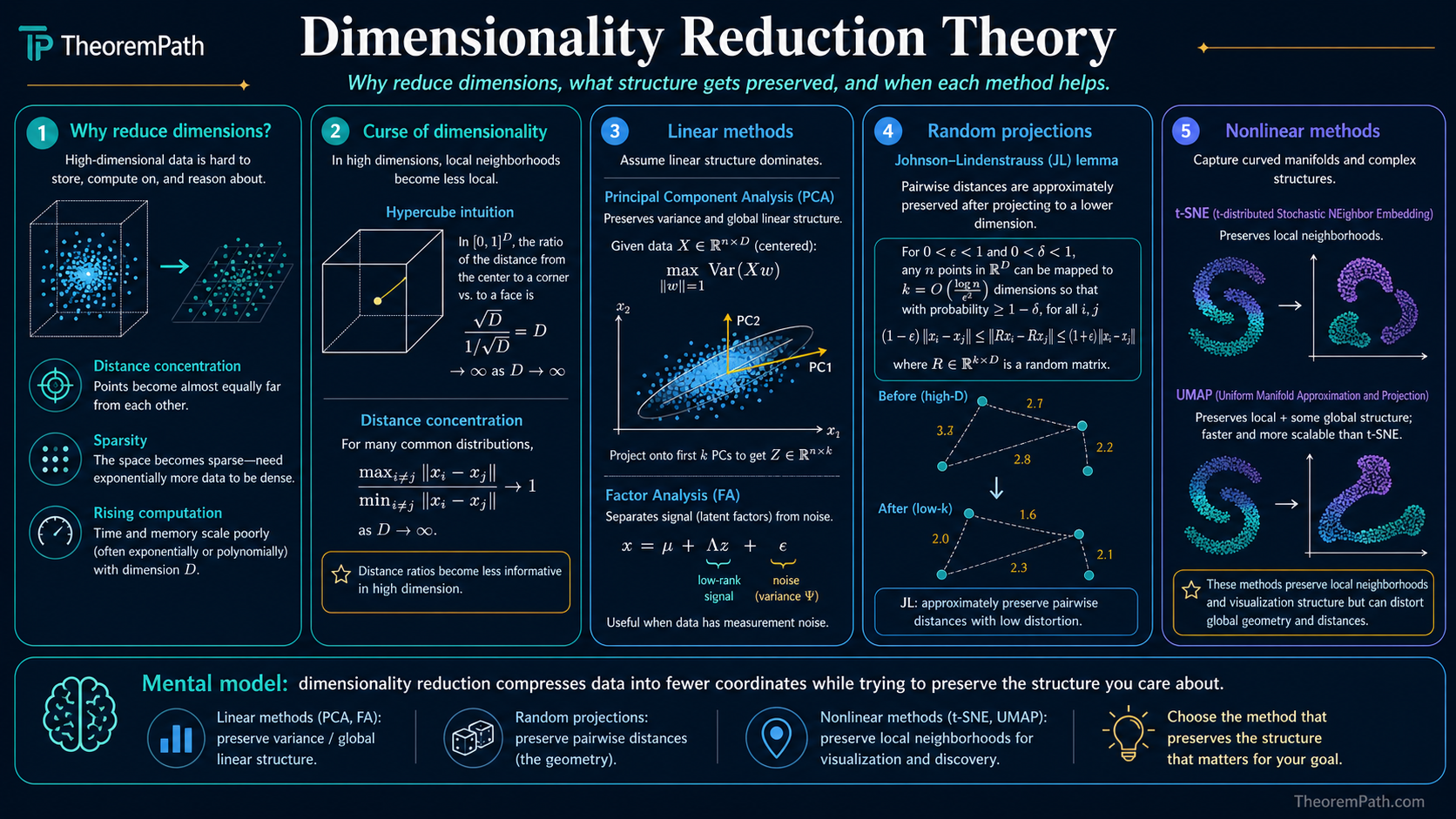

Dimensionality Reduction Theory

Why and how to reduce dimensions: the curse of dimensionality, PCA, random projections (JL lemma), t-SNE, UMAP, and when each method preserves the structure you care about.

Prerequisites

Why This Matters

High-dimensional data creates three problems. First, distance metrics become meaningless: in high dimensions, the ratio of nearest-neighbor to farthest-neighbor distance converges to 1. Second, the volume of the space grows exponentially, so data becomes sparse and density estimation requires exponentially more samples. Third, computation scales with dimension. Dimensionality reduction addresses all three by mapping data to a lower-dimensional space while preserving relevant structure.

Hide overviewShow overview

The Curse of Dimensionality

Curse of Dimensionality

As the dimension increases while the number of samples stays fixed, data becomes increasingly sparse. For a unit hypercube in , the fraction of volume within distance of the boundary is , which approaches 1 as . Nearly all data points are near the boundary. Neighborhoods that are "local" in low dimensions become global in high dimensions.

Concretely: to maintain the same density of samples in a -dimensional space as in a 1-dimensional space with points, you need points. With 100 samples, a 10-dimensional space already feels empty.

Linear Methods

PCA (Review)

PCA projects onto the top eigenvectors of the covariance matrix, maximizing preserved variance. It is optimal among linear projections for reconstruction error (Eckart-Young theorem). PCA preserves global structure (large distances, principal directions of variance) but can miss nonlinear structure (curved manifolds).

Factor Analysis

Factor analysis models where is a low-dimensional latent and with diagonal . Unlike PCA, factor analysis separates signal variance () from noise variance (), making it more appropriate when features have different noise levels.

Random Projections

Johnson-Lindenstrauss Lemma

Statement

For any set of points in and any , there exists a linear map with such that for all pairs :

Moreover, a random matrix with entries drawn i.i.d. from satisfies this with high probability.

Intuition

You can compress points to dimensions while preserving all pairwise distances up to a multiplicative factor. The target dimension depends on and , not on the original dimension . A random Gaussian matrix works: you do not need to look at the data.

Proof Sketch

For a fixed pair , the projected distance is a sum of independent chi-squared random variables (after normalizing). By concentration (sub-exponential tail bounds), this sum stays within of its mean with probability at least . Set and take a union bound over all pairs.

Why It Matters

The JL lemma justifies random projections as a dimensionality reduction technique. It is data-independent (no training), fast ( matrix-vector multiply, or with sparse/structured matrices), and provides provable guarantees. It is the theoretical backbone of locality sensitive hashing and compressed sensing.

Failure Mode

The JL lemma preserves pairwise distances, not cluster structure, manifold geometry, or class boundaries. The reduced dimension can still be large for big datasets. Also, the constant in matters: for and , you need dimensions, which may not be low enough for visualization.

Nonlinear Methods

t-SNE

t-SNE Cost Function

Statement

t-SNE minimizes the KL divergence between the high-dimensional similarity distribution and the low-dimensional similarity distribution :

where (symmetrized) in high dimensions and in low dimensions, using a Student-t kernel with one degree of freedom.

Intuition

t-SNE converts distances to probabilities. Nearby points in high-D get high ; the algorithm finds low-D positions where the match. The heavy-tailed Student-t kernel in low-D addresses the crowding problem: it allows moderate-distance points in high-D to be placed far apart in low-D without penalty, making room for clusters to separate.

Proof Sketch

The KL divergence penalizes cases where is large but is small (nearby points in high-D placed far apart in low-D). It does not penalize the reverse as strongly (far points placed nearby). This asymmetry preserves local structure but not global distances.

Why It Matters

t-SNE produces visually striking 2D/3D embeddings that reveal cluster structure. It is the standard visualization tool for high-dimensional data in biology (single-cell RNA-seq), NLP (word embeddings), and computer vision.

Failure Mode

t-SNE does not preserve global structure: distances between clusters are meaningless. Different random initializations produce different layouts. Cluster sizes in the visualization do not reflect true cluster sizes. Perplexity (effectively, the neighborhood size) must be tuned, and different values can produce qualitatively different visualizations. t-SNE is for visualization, not for downstream ML tasks.

UMAP

UMAP (Uniform Manifold Approximation and Projection) builds a weighted -nearest-neighbor graph in high-D, then optimizes low-D positions to preserve the graph structure using a cross-entropy-like loss. UMAP tends to preserve global structure better than t-SNE (the graph-based approach maintains some inter-cluster relationships), runs faster on large datasets, and can embed into dimensions higher than 2 or 3 for downstream use.

The theoretical justification via Riemannian geometry and fuzzy simplicial sets is less straightforward than t-SNE's probabilistic framing, and recent work (Chari & Pachter, 2023) argues UMAP's theoretical claims are overstated. In practice, UMAP often produces useful embeddings, but treat it as a heuristic rather than a principled algorithm.

Kernel PCA

Apply PCA in a reproducing kernel Hilbert space (RKHS) defined by kernel . This captures nonlinear structure while retaining the eigenvalue framework of PCA. The kernel matrix serves as the Gram matrix; the top eigenvectors of the centered kernel matrix give the embedding. Common kernels: RBF (Gaussian), polynomial.

Autoencoders

Learn and by minimizing reconstruction error . With linear activations and , this recovers PCA. With nonlinear activations, it learns nonlinear manifolds. Variational autoencoders add a probabilistic structure to the latent space.

When to Use Which

| Method | Preserves | Speed | Downstream use | Deterministic |

|---|---|---|---|---|

| PCA | Global variance | Fast | Yes | Yes |

| Random projection | Pairwise distances | Fast | Yes | No |

| t-SNE | Local neighborhoods | Slow | No (visualization only) | No |

| UMAP | Local + some global | Moderate | Yes (with care) | No |

| Kernel PCA | Nonlinear kernel structure | Moderate | Yes | Yes |

| Autoencoder | Learned reconstruction | Slow (training) | Yes | Yes (given weights) |

Common Confusions

t-SNE cluster distances are meaningless

Two clusters that are far apart in a t-SNE plot may or may not be far apart in the original space. The KL divergence objective prioritizes preserving local structure; global distances are sacrificed. Never interpret inter-cluster distance or relative cluster size from a t-SNE plot.

PCA components are not features

The first principal component is a linear combination of all original features. It is not a single feature. Interpreting PC1 as "the most important feature" is incorrect. You must examine the loadings (coefficients) to understand what each component represents.

Explained variance does not mean explained signal

PCA maximizes explained variance, but variance is not the same as task-relevant information. A high-variance direction could be noise. LDA (Linear Discriminant Analysis) maximizes class separability instead, which is more relevant for classification tasks.

Summary

- The curse of dimensionality makes high-dimensional data sparse and distances meaningless; reduction is often necessary

- PCA: linear, global, fast, preserves variance; optimal for reconstruction

- JL lemma: random projections preserve pairwise distances in dimensions with no data dependence

- t-SNE: nonlinear, local, for visualization only; do not interpret global structure

- UMAP: faster than t-SNE, preserves more global structure, usable for downstream tasks

- Choose the method based on what structure you need to preserve and whether the output is for visualization or for further computation

Exercises

Problem

You have points in . Using the Johnson-Lindenstrauss lemma with , what is the minimum target dimension for random projection? Use .

Problem

Explain why t-SNE with high perplexity (e.g., perplexity = ) produces embeddings that look more like PCA, while low perplexity (e.g., perplexity = 5) produces tighter, more separated clusters. Connect this to the bandwidth in the Gaussian kernel.

Classical Manifold Learning Methods

These methods predate t-SNE and UMAP. They assume data lies on or near a smooth low-dimensional manifold embedded in high-dimensional space.

Isomap (Tenenbaum et al., 2000) approximates geodesic distances on the manifold using shortest paths in a k-nearest-neighbor graph, then applies classical multidimensional scaling (MDS) to the geodesic distance matrix. It preserves global geometry well when the manifold is convex (no "holes"). Failure mode: short-circuit edges in the graph (connecting points that are close in ambient space but far along the manifold) destroy the distance estimates.

Locally Linear Embedding (LLE) (Roweis & Saul, 2000) assumes each point can be reconstructed as a linear combination of its neighbors. It finds reconstruction weights by solving subject to . Then it finds low-dimensional coordinates that preserve these weights: . The second step is an eigenvalue problem. LLE captures local geometry but does not preserve global distances.

Laplacian Eigenmaps (Belkin & Niyogi, 2003) builds a weighted graph from the data (weights from a Gaussian kernel), computes the graph Laplacian , and embeds using the smallest non-trivial eigenvectors of the generalized eigenvalue problem . This is equivalent to finding coordinates that minimize , placing connected points close together. The connection to spectral clustering is direct: the same eigenvectors that give good embeddings also give good cluster assignments.

Diffusion Maps (Coifman & Lafon, 2006) use a random walk on the data graph to define a diffusion distance that captures the intrinsic geometry of the manifold. The diffusion distance between two points measures connectivity through many paths, not just the shortest path (unlike Isomap). This makes diffusion maps robust to noise and sampling density variation. The embedding coordinates are the top eigenvectors of the diffusion operator , where controls the scale of the geometry captured.

| Method | Preserves | Assumes | Cost | Weakness |

|---|---|---|---|---|

| PCA | Global variance | Linear subspace | Misses nonlinear structure | |

| Isomap | Geodesic distances | Convex manifold | Short-circuit edges | |

| LLE | Local linear structure | Locally linear manifold | No global guarantees | |

| Laplacian Eigenmaps | Graph connectivity | Smooth manifold | Scale-dependent | |

| Diffusion Maps | Multi-scale geometry | Smooth manifold | Bandwidth selection | |

| t-SNE | Local neighborhoods | None (heuristic) | Distances unreliable | |

| UMAP | Local + some global | Manifold (contested) | Theory incomplete |

Kernel PCA

Kernel PCA extends PCA to nonlinear feature spaces using the kernel trick. Instead of computing eigenvectors of the covariance matrix , it computes eigenvectors of the kernel matrix .

The kernel matrix is the Gram matrix in the feature space : . The top eigenvectors of give the principal components in feature space, projected back to kernel evaluations. For a Gaussian kernel, this captures nonlinear structure that linear PCA misses.

Kernel PCA is exact (not heuristic) and has a clear connection to the RKHS theory. Its limitation is the cost of computing and storing the kernel matrix, and the cost of the eigendecomposition. For , approximate methods (Nystrom, random features) are needed.

References

Canonical:

- Johnson & Lindenstrauss, "Extensions of Lipschitz mappings into a Hilbert space" (1984)

- van der Maaten & Hinton, "Visualizing Data using t-SNE" (JMLR 2008), Sections 2-3

Manifold learning:

- Tenenbaum, de Silva, Langford, "A Global Geometric Framework for Nonlinear Dimensionality Reduction" (Science, 2000). Isomap.

- Roweis & Saul, "Nonlinear Dimensionality Reduction by Locally Linear Embedding" (Science, 2000). LLE.

- Belkin & Niyogi, "Laplacian Eigenmaps for Dimensionality Reduction and Data Representation" (Neural Computation, 2003)

- Coifman & Lafon, "Diffusion Maps" (Applied and Computational Harmonic Analysis, 2006)

- Scholkopf, Smola, Muller, "Kernel Principal Component Analysis" (Neural Computation, 1998)

Current:

- McInnes et al., "UMAP: Uniform Manifold Approximation and Projection" (2018)

- Vershynin, High-Dimensional Probability (2018), Chapter 5 (JL lemma)

- Chari & Pachter, "The specious art of single-cell genomics" (2023)

Next Topics

- Kernels and RKHS: the theoretical framework behind kernel PCA and nonlinear embeddings

- Autoencoders: learning nonlinear dimensionality reduction with neural networks

- Riemannian optimization: when the embedding space itself has manifold structure

Last reviewed: April 14, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

3- Eigenvalues and Eigenvectorslayer 0A · tier 1

- Principal Component Analysislayer 1 · tier 1

- Measure Concentration and Geometric Functional Analysislayer 3 · tier 1

Derived topics

2- Autoencoderslayer 2 · tier 2

- Kernels and Reproducing Kernel Hilbert Spaceslayer 3 · tier 2

Graph-backed continuations