Optimization Function Classes

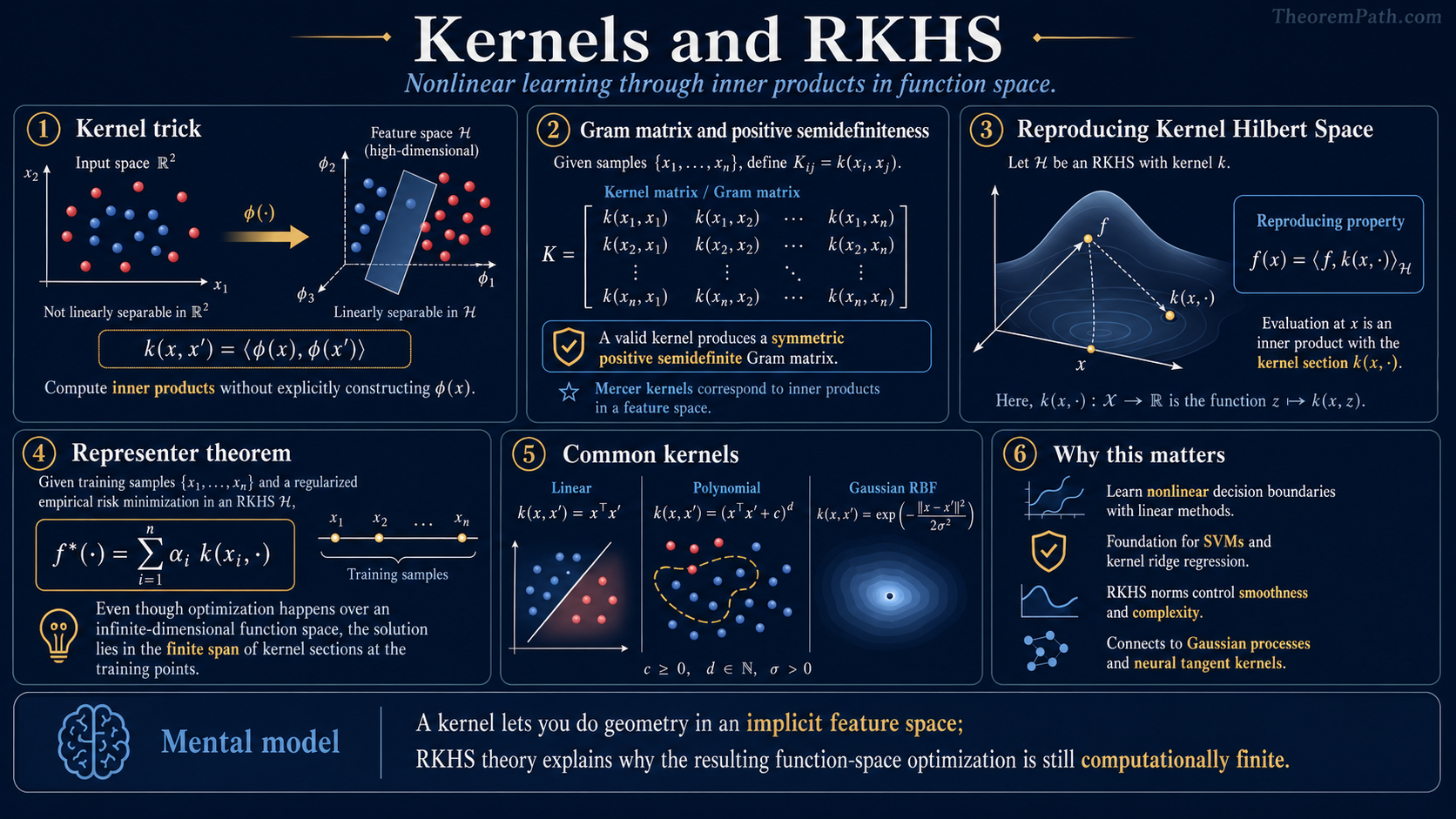

Kernels and Reproducing Kernel Hilbert Spaces

Kernel functions, Mercer's theorem, the RKHS reproducing property, and the representer theorem: the mathematical framework that enables learning in infinite-dimensional function spaces via finite-dimensional computations.

Why This Matters

Kernel methods solved one of the earliest fundamental problems in machine learning: how to learn nonlinear decision boundaries using algorithms designed for linear models. The kernel trick replaces inner products with kernel evaluations , implicitly mapping data into a high-dimensional (potentially infinite-dimensional) feature space without ever computing the feature vectors.

Hide overviewShow overview

But kernels are more than a computational trick. The theory of Reproducing Kernel Hilbert Spaces (RKHS) provides a rigorous framework for function-space learning: instead of optimizing over a finite-dimensional parameter vector, you optimize over an infinite-dimensional space of functions, and the representer theorem guarantees that the solution is finite-dimensional anyway.

Understanding RKHS is also essential for modern theory: Gaussian processes are the Bayesian counterpart of kernel methods, neural tangent kernels connect deep learning to kernel regression, and RKHS norms appear in interpolation theory and approximation bounds.

Mental Model

Imagine you want to learn a nonlinear function of . One approach: map to a feature vector in a higher-dimensional space and learn a linear function there. If includes all monomials of degree , then a linear function in feature space is a polynomial of degree in the original space.

The problem: can be enormous (or infinite-dimensional). The kernel trick observes that many algorithms only access the data through inner products . If you can compute directly (without constructing ), you get nonlinear learning at linear cost.

An RKHS is the function space associated with a kernel. It is the space of functions that "the kernel can represent," equipped with a norm that measures function complexity.

Formal Setup

Positive Definite Kernel

A function is a positive definite (p.d.) kernel if and only if it is symmetric () and for any points and any :

Equivalently, the Gram matrix is positive semidefinite for every finite collection of points.

Reproducing Kernel Hilbert Space (RKHS)

Given a p.d. kernel , the RKHS is the unique Hilbert space of functions satisfying:

- Containment: for all

- Reproducing property: for all

The reproducing property says: evaluating at is the same as taking an inner product with the "kernel function centered at ." This is what makes the kernel trick work.

RKHS Norm

The RKHS norm measures the complexity of within the function space. For :

More generally, in the Mercer expansion , we have (see Mercer's Theorem below). The finite-span formula is the specialization when lies in .

Functions with small RKHS norm are "smooth" relative to the kernel. The RKHS norm plays the role of the regularizer in kernel methods.

Main Theorems

Mercer's Theorem

Statement

Let be a continuous positive definite kernel on a compact set . Then there exist orthonormal eigenfunctions and non-negative eigenvalues (with ) such that:

The convergence is absolute and uniform on . The RKHS consists of functions with .

Intuition

Mercer's theorem says every (continuous, p.d.) kernel has a spectral decomposition. a "basis" of features ordered by importance. The feature map explicitly constructs the (possibly infinite-dimensional) feature space in which .

The eigenvalues control the "effective dimensionality" of the kernel. Fast eigenvalue decay (e.g., exponential for the Gaussian kernel) means the kernel effectively lives in a low-dimensional space despite having infinitely many features.

Proof Sketch

Define the integral operator by . By positive definiteness, is a positive, self-adjoint, compact operator (compactness follows from continuity of and compactness of ). By the spectral theorem for compact self-adjoint operators, has a countable set of non-negative eigenvalues with orthonormal eigenfunctions . The kernel expansion follows from the spectral decomposition of .

Why It Matters

Mercer's theorem justifies the "implicit feature space" interpretation of kernels. It also connects kernel methods to approximation theory: the eigenvalue decay rate of determines the approximation power of the RKHS, which in turn determines the generalization rate of kernel learning. Faster decay means the effective dimension is lower, so fewer samples are needed.

Failure Mode

Mercer's theorem requires compactness of and continuity of . For non-compact domains, you need the more general theory of positive definite functions and the Moore-Aronszajn theorem, which guarantees the existence of an RKHS for any p.d. kernel, not just continuous ones on compact sets.

The Representer Theorem

Statement

Consider the regularized empirical risk minimization problem over an RKHS :

where is a strictly increasing function. Then every minimizer has the finite representation:

for some coefficients .

Intuition

Even though is infinite-dimensional, the optimal function is a finite linear combination of kernel evaluations at the training points. The regularizer penalizes complexity (RKHS norm), so any component of orthogonal to the span of contributes to the norm without affecting the training loss. A strictly increasing regularizer therefore "kills" this orthogonal component at optimality.

This reduces an infinite-dimensional optimization problem to a finite-dimensional one: find minimizing .

Proof Sketch

Decompose any as where is in the span of the kernel functions at training points and is orthogonal.

By the reproducing property: . So does not affect any and hence does not affect the loss.

By Pythagorean theorem: . Since is strictly increasing, with equality only when .

Therefore, setting does not increase the regularizer (strictly decreases it whenever ) while keeping the loss unchanged; therefore every minimizer satisfies .

Why It Matters

The representer theorem is what makes kernel methods computationally feasible. Without it, optimizing over an RKHS would require searching through an infinite-dimensional space. With it, you solve an problem (or linear system for squared loss). The cost is for exact methods, which is why kernel methods scale poorly to large (a separate problem from the theoretical power of the method).

Failure Mode

The representer theorem as stated requires a strictly increasing regularizer. Without regularization (), the standard argument no longer forces : minimizers with nonzero orthogonal components may also attain the optimum, so the conclusion "every minimizer lies in " weakens to "some minimizer lies in that span" (the empirical loss is invariant to on the training points). For squared loss with there is still a representer-style solution via the normal equations; the failure is uniqueness, not existence. Also, the representation involves terms, so the model complexity grows with the dataset size. This is both a feature (the model adapts) and a bug (prediction cost is per test point).

Standard Kernels

The most common kernels and their properties:

Linear kernel: . RKHS = linear functions. Mercer eigenvalues depend on the data distribution. No nonlinearity.

Polynomial kernel: with . For , the RKHS on a compact set equals the polynomials of degree in variables (dimension ), equipped with a specific weighted-coefficient norm derived from multinomial coefficients (Steinwart & Christmann 2008, Example 4.11). For the kernel is homogeneous and the RKHS consists of homogeneous polynomials of degree exactly .

Gaussian (RBF) kernel: . The RKHS is infinite-dimensional. On compact domains or under Gaussian input measure the Mercer eigenvalues decay geometrically ( for some ; see Rasmussen-Williams 2006 Section 4.3 eq. 4.42, Zhu et al. 1998). Universal (Steinwart 2001; Micchelli-Xu-Zhang 2006): the RKHS is dense in in the sup-norm, so any continuous function on a compact can be approximated arbitrarily well by RKHS elements. Universal does not mean the RKHS contains every continuous function. Bandwidth controls smoothness.

Laplacian kernel : this is the Matern kernel with up to rescaling. The RKHS on is the Sobolev space (up to norm equivalence; Wendland 2005 Ch. 10, Fasshauer 2011). Sample functions are continuous but generally non-differentiable in the classical sense; they have square-integrable weak derivatives of fractional order. Used when the target function is rough.

Connection to SVMs

The support vector machine (SVM) is regularized ERM with hinge loss in an RKHS:

By the representer theorem, . The dual problem (via Lagrangian duality from convex optimization) produces the "support vector" formulation: at optimality, the solution is typically sparse, with most . The nonzero correspond to the support vectors. For soft-margin SVM with box constraint , KKT conditions split these into (i) margin points with lying exactly on the margin (), and (ii) margin violators with lying inside the margin or misclassified (). The "closest to the decision boundary" picture applies only to hard-margin SVM on separable data (Cortes & Vapnik 1995). Sparsity of the dual solution is a consequence of the hinge loss's non-differentiable kink at margin and the KKT complementary-slackness conditions (Steinwart-Christmann 2008 Thm. 5.28).

This sparsity is a bonus: prediction cost is rather than , where is the number of support vectors.

Common Confusions

The representer theorem is structural, not a recommendation to use kernel methods

The representer theorem says: "if you regularize with RKHS norm and your loss only depends on function values at training points, then the optimum is a kernel expansion." It does not say kernel methods are the best approach. In high dimensions with large datasets, neural networks typically outperform kernel methods despite lacking a representer theorem. The theorem is about the structure of the solution, not its quality.

Confusing "the solution has a nice form" with "the method is good" is a common error. The representer theorem is a mathematical fact, not practical advice.

The kernel trick is not about avoiding computation of phi(x)

A common oversimplification: "the kernel trick avoids computing the high-dimensional feature map." More precisely, the kernel trick avoids the explicit dependence on feature dimension. The cost instead depends on (number of training points) through the Gram matrix. For large , this can be worse than working with explicit features when the feature dimension is moderate. The kernel trick trades feature dimension for sample size.

RKHS norm is not just any smoothness measure

The RKHS norm measures smoothness relative to the kernel. For the Gaussian kernel, large RKHS norm means the function has high-frequency content. For the polynomial kernel, it means large coefficients on high-degree monomials. Different kernels induce different notions of "complexity." Choosing the wrong kernel means the RKHS norm does not correspond to the relevant notion of smoothness for your problem.

Canonical Examples

Kernel ridge regression

Kernel ridge regression minimizes . By the representer theorem, and . Substituting:

Taking the gradient and setting to zero: . This is solvable in time. The solution is the Bayesian posterior mean under a Gaussian process prior with covariance kernel .

Polynomial kernel for quadratic features

With on , the feature map is . Computing costs (one inner product), while computing explicitly costs . For degree- polynomial kernel in dimensions, the feature space has dimension , which grows rapidly, but always costs .

Exercises

Problem

Verify the reproducing property for the linear kernel . What is the RKHS? What is the RKHS norm of ?

Problem

Show that the Gram matrix of a p.d. kernel is positive semidefinite. Why does this imply for all ?

Problem

Prove that without a regularizer (), the representer theorem does not hold. Construct a loss function and kernel where the minimizer of over is not in the span of .

Related Comparisons

References

Canonical:

- Scholkopf & Smola, Learning with Kernels (2002), Chapters 1-4

- Aronszajn, "Theory of Reproducing Kernels" (1950), Trans. AMS

- Kimeldorf & Wahba, "Some Results on Tchebycheffian Spline Functions" (J. Math. Anal. Appl., 1971). Original representer theorem for spline smoothing.

- Scholkopf, Herbrich, Smola, "A Generalized Representer Theorem" (COLT 2001). Extension to arbitrary strictly monotonic regularizers and pointwise-dependent losses: the form used in this page.

- Cortes & Vapnik, "Support-Vector Networks" (1995), Machine Learning

- Rasmussen & Williams, Gaussian Processes for Machine Learning (2006), Section 4.3

- Wendland, Scattered Data Approximation (2005), Chapter 10

Current:

-

Steinwart & Christmann, Support Vector Machines (2008), Chapters 4-5 (Example 4.11, Theorem 5.28)

-

Steinwart, "On the influence of the kernel on the consistency of support vector machines" (2001), JMLR

-

Micchelli, Xu & Zhang, "Universal Kernels" (2006), JMLR

-

Fasshauer, "Positive Definite Kernels: Past, Present and Future" (2011), Dolomites Research Notes on Approximation

-

Zhu, Williams, Rohwer & Morciniec, "Gaussian regression and optimal finite dimensional linear models" (1998), Neural Networks and Machine Learning

-

Bartlett & Mendelson, "Rademacher and Gaussian Complexities" (2002), JMLR. for RKHS Rademacher bounds

-

Boyd & Vandenberghe, Convex Optimization (2004), Chapters 2-5

-

Nesterov, Introductory Lectures on Convex Optimization (2004), Chapters 1-3

Next Topics

The natural next step from kernels and RKHS:

- Implicit bias and modern generalization: how minimum-norm solutions in RKHS connect to the implicit bias of gradient descent in deep learning

Last reviewed: April 13, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

14- Characteristic Functionslayer 1 · tier 1

- Convex Optimization Basicslayer 1 · tier 1

- Gram Matrices and Kernel Matriceslayer 1 · tier 1

- Ridge Regressionlayer 1 · tier 1

- Convex Dualitylayer 2 · tier 1

Derived topics

12- Kernel Density Estimationlayer 2 · tier 1

- Local Polynomial Regressionlayer 2 · tier 1

- Nadaraya-Watson Kernel Regressionlayer 2 · tier 1

- Smoothing Splineslayer 2 · tier 1

- Thin-Plate Splineslayer 2 · tier 1

+7 more on the derived-topics page.