Document Intelligence

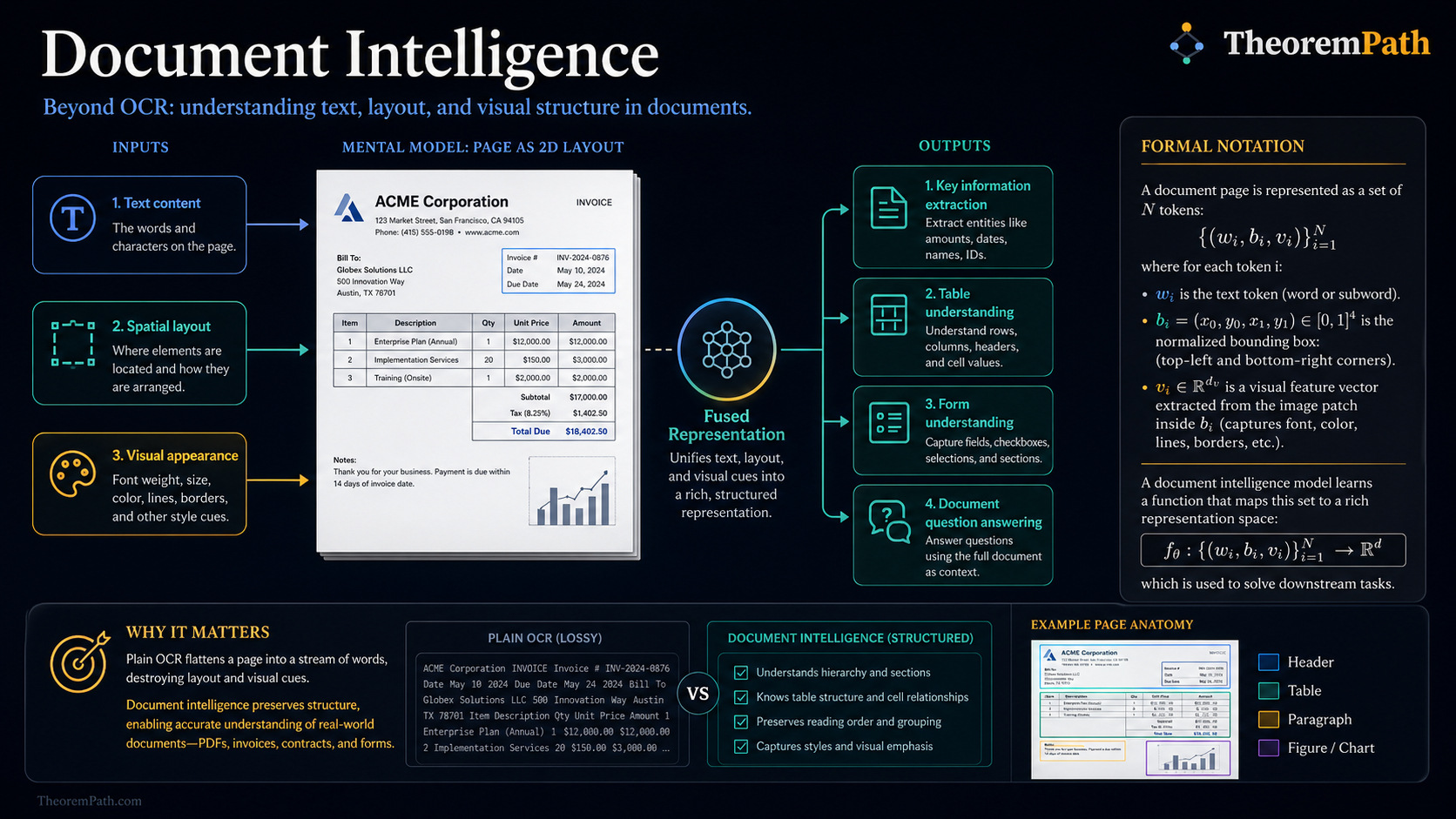

Beyond OCR: understanding document layout, tables, figures, and structure using models that combine text, spatial position, and visual features to extract structured information from PDFs, invoices, and contracts.

Why This Matters

Most enterprise data lives in documents: PDFs, scanned invoices, legal contracts, financial reports, medical records. These documents have rich spatial structure (headers, tables, columns, figures) that plain text extraction destroys. Document intelligence recovers this structure and extracts typed, queryable information from unstructured documents.

OCR converts images to text. Document intelligence goes further: it understands what the text means based on where it appears, how it is formatted, and what surrounds it. A number in a table cell means something different from the same number in a page header.

Mental Model

A document page is a 2D spatial arrangement of text, images, and graphical elements. Understanding a document requires three types of information:

- Text content: what words appear on the page

- Spatial layout: where each word is positioned (bounding boxes)

- Visual appearance: font size, boldness, color, surrounding lines and borders

Document intelligence models fuse all three modalities to produce a unified representation of the document.

Formal Setup and Notation

Document Representation

A document page is represented as a set of tokens $\{(t_i, b_i, v_i)\}_{i=1}^{N}$ where:

- $t_i$ is the text token (from OCR or digital extraction)

- $b_i = (x_0, y_0, x_1, y_1)$ is the normalized bounding box

- $v_i$ is a visual feature vector from the image patch containing token $t_i$

A document model learns $f_\theta$ mapping each token to a contextualized representation $h_i \in \mathbb{R}^d$ that incorporates text, position, and visual context.
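As a rough illustration of the text and position parts of this representation, the sketch below stores a token with a bounding box rescaled to integer bins. The `Token` class, the `normalize_bbox` helper, and the 0 to 1000 scale are assumptions made for this example, and the visual feature vector is omitted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    """One token: its text plus a normalized bounding box (hypothetical container)."""
    text: str
    bbox: tuple[int, int, int, int]  # (x0, y0, x1, y1), each an integer bin in 0..1000


def normalize_bbox(box, page_width, page_height, scale=1000):
    """Rescale page coordinates to integer bins in [0, scale]."""
    x0, y0, x1, y1 = box

    def to_bin(value, extent):
        # Clamp so slightly out-of-page boxes still map to a valid bin.
        return max(0, min(scale, int(scale * value / extent)))

    return (to_bin(x0, page_width), to_bin(y0, page_height),
            to_bin(x1, page_width), to_bin(y1, page_height))


# A word on a 612 x 792 point page (US Letter).
token = Token("Total", normalize_bbox((306, 700, 360, 716), page_width=612, page_height=792))
print(token.bbox)  # (500, 883, 588, 904)
```

Normalizing by page size makes boxes comparable across pages scanned at different resolutions.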

Key Information Extraction (KIE)

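Given a document with tokens $\{(t_i, b_i, v_i)\}_{i=1}^{N}$ and a predefined schema $\mathcal{S}$ of field types (e.g., invoice number, date, total amount), KIE assigns each token to a field or to "none":

$$y_i \in \mathcal{S} \cup \{\text{none}\}, \quad i = 1, \dots, N$$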

This is sequence labeling with spatial context. Standard BIO tagging applies, but the "sequence" is a 2D spatial arrangement, not a 1D text stream. The loss function for this classification is typically cross-entropy.
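To make the BIO convention concrete, here is a minimal sketch that groups per-token tags into field values. The function name `decode_bio` and the field names are hypothetical, and the tags are written by hand rather than predicted by a model.

```python
def decode_bio(tokens, tags):
    """Group BIO-tagged tokens into (field, text) spans."""
    spans, current_field, current_words = [], None, []
    for token, tag in zip(tokens, tags):
        prefix, _, field = tag.partition("-")
        if prefix == "I" and field == current_field:
            current_words.append(token)  # continue the open span
            continue
        if current_field is not None:
            spans.append((current_field, " ".join(current_words)))
        if prefix in ("B", "I"):  # a stray I- tag is treated as opening a new span
            current_field, current_words = field, [token]
        else:  # "O": outside any field
            current_field, current_words = None, []
    if current_field is not None:
        spans.append((current_field, " ".join(current_words)))
    return spans


tokens = ["Invoice", "No", "INV-001", "Date", "2024-01-05", "Acme", "Corp"]
tags = ["O", "O", "B-INVOICE_NUMBER", "O", "B-DATE", "B-VENDOR", "I-VENDOR"]
fields = decode_bio(tokens, tags)
print(fields)
# [('INVOICE_NUMBER', 'INV-001'), ('DATE', '2024-01-05'), ('VENDOR', 'Acme Corp')]
```

The decoder assumes tokens arrive in reading order; on a 2D page, that ordering is itself something the pipeline has to produce.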

Core Definitions

The layout analysis step segments a document page into regions: text blocks, tables, figures, headers, footers. Each region is classified by type. This is an object detection problem applied to document images.
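Because layout analysis is framed as object detection, predicted regions are commonly compared to ground-truth regions by intersection over union (IoU). A minimal sketch, assuming axis-aligned boxes given as `(x0, y0, x1, y1)`:

```python
def iou(a, b):
    """Intersection over union of two axis-aligned boxes (x0, y0, x1, y1)."""
    ax0, ay0, ax1, ay1 = a
    bx0, by0, bx1, by1 = b
    inter_w = max(0, min(ax1, bx1) - max(ax0, bx0))
    inter_h = max(0, min(ay1, by1) - max(ay0, by0))
    inter = inter_w * inter_h
    union = (ax1 - ax0) * (ay1 - ay0) + (bx1 - bx0) * (by1 - by0) - inter
    return inter / union if union > 0 else 0.0


predicted_table = (0, 0, 10, 10)
labeled_table = (5, 0, 15, 10)
print(iou(predicted_table, labeled_table))  # overlap 50 / union 150
```

A detection is typically counted as correct when its IoU with a labeled region of the same type exceeds a chosen threshold such as 0.5.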

Table structure recognition identifies rows, columns, and cells within a detected table region. This is harder than it appears: tables can have merged cells, implicit borders (no visible lines), and nested headers.

Reading order determines the sequence in which text regions should be read. For single-column documents this is trivial (top to bottom). For multi-column layouts, magazine-style pages, or documents with sidebars, reading order detection is a nontrivial graph problem.
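The sketch below shows why a plain top-to-bottom sort fails on a two-column page. It assumes a strict two-column layout with no full-width blocks, which is a deliberate simplification; the function name and the block format are hypothetical, and real pages need a more general method.

```python
def two_column_reading_order(blocks, page_width):
    """Order (text, (x0, y0, x1, y1)) blocks: left column first, then right."""
    midpoint = page_width / 2

    def key(block):
        _, (x0, y0, x1, _y1) = block
        column = 0 if (x0 + x1) / 2 < midpoint else 1  # column by horizontal center
        return (column, y0, x0)

    return [text for text, _ in sorted(blocks, key=key)]


blocks = [
    ("right-top", (320, 50, 580, 100)),
    ("left-bottom", (30, 300, 290, 350)),
    ("left-top", (30, 50, 290, 100)),
    ("right-bottom", (320, 300, 580, 350)),
]
# Naive top-to-bottom sort interleaves the two columns.
naive = [text for text, box in sorted(blocks, key=lambda b: (b[1][1], b[1][0]))]
ordered = two_column_reading_order(blocks, page_width=612)
print(naive)    # ['left-top', 'right-top', 'left-bottom', 'right-bottom']
print(ordered)  # ['left-top', 'left-bottom', 'right-top', 'right-bottom']
```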

Main Theorems

Layout-Aware Masked Language Modeling

Statement

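LayoutLM-style models extend masked language modeling (MLM) to incorporate spatial position, building on the transformer architecture. Writing $t_i$ for the text of token $i$ and $b_i = (x_0, y_0, x_1, y_1)$ for its bounding box, the pretraining objective is:

$$\mathcal{L}_{\text{MLM}} = -\sum_{i \in \mathcal{M}} \log p_\theta\left(t_i \mid t_{\setminus \mathcal{M}},\, b_1, \dots, b_N\right)$$

where $\mathcal{M}$ is the set of masked token positions and $t_{\setminus \mathcal{M}}$ denotes the unmasked text tokens. The model must predict masked tokens using both textual context (surrounding words) and spatial context (bounding box positions of all tokens).

The spatial embedding maps each coordinate to a learned embedding: $e(b_i) = E_x(x_0) + E_y(y_0) + E_x(x_1) + E_y(y_1)$, where $E_x, E_y$ are learned embedding tables over discretized coordinate bins.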
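A minimal sketch of the spatial embedding lookup, assuming one table per axis shared by both box corners (as in the original LayoutLM) and integer coordinates in 0..1000. The tables here are randomly initialized stand-ins; in a real model they are parameters learned during pretraining, and `NUM_BINS`, `DIM`, and the function names are illustrative choices.

```python
import random

NUM_BINS = 1001  # assumed: coordinates are integers in 0..1000 inclusive
DIM = 8          # tiny embedding width, for illustration only


def make_table(num_rows, dim, rng):
    """Stand-in for a learned embedding table (random values, not trained)."""
    return [[rng.gauss(0.0, 0.02) for _ in range(dim)] for _ in range(num_rows)]


rng = random.Random(0)
x_table = make_table(NUM_BINS, DIM, rng)  # shared by x0 and x1
y_table = make_table(NUM_BINS, DIM, rng)  # shared by y0 and y1


def spatial_embedding(bbox):
    """Sum the four coordinate embeddings for one bounding box."""
    x0, y0, x1, y1 = bbox
    rows = (x_table[x0], y_table[y0], x_table[x1], y_table[y1])
    return [sum(values) for values in zip(*rows)]


embedding = spatial_embedding((500, 883, 588, 904))
print(len(embedding))  # 8
```

In the full model this vector is added to the token's text embedding before the first transformer layer, so two occurrences of the same word at different positions receive different inputs.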

Intuition

By conditioning on spatial position during pretraining, the model learns that tokens at the top of a page are likely headers, tokens aligned in columns are likely table entries, and tokens in the upper-right corner of an invoice are likely dates or invoice numbers. This spatial prior transfers to downstream extraction tasks.

Proof Sketch

No formal proof. This is an architectural design choice motivated by the observation that document understanding requires spatial reasoning. The empirical validation is that LayoutLM models outperform text-only models on document understanding benchmarks by 5-15% F1 on standard KIE tasks.

Why It Matters

This pretraining objective is what separates document AI from standard NLP. By encoding position as a first-class input, the model can distinguish between identical text in different spatial contexts. The word "Total" at the bottom of a column means something different from "Total" in a paragraph heading.

Failure Mode

The spatial embedding assumes a fixed page coordinate system. Documents with unusual layouts (foldouts, rotated pages, free-form designs) break the spatial prior. The model also assumes OCR bounding boxes are accurate; noisy OCR with incorrect positions degrades performance significantly.

Key Approaches

LayoutLM Family

LayoutLM (v1, v2, v3) progressively integrates more modalities:

- LayoutLM v1: Text embeddings + 2D position embeddings, pretrained with spatial MLM

- LayoutLM v2: Adds visual tokens from document image via CNN backbone

- LayoutLM v3: Unified multimodal pretraining with text, layout, and image objectives

Each version improves on KIE, document classification, and document VQA benchmarks. The v3 model uses a shared transformer for all three modalities rather than separate encoders.

Table Extraction Pipeline

A practical table extraction system operates in stages:

- Table detection: Locate tables in the page (object detection)

- Structure recognition: Identify rows, columns, merged cells

- Cell content extraction: OCR or text extraction within each cell

- Semantic typing: Determine header rows, data types, relationships

Each stage introduces errors that propagate. End-to-end models that jointly detect and parse tables (e.g., TableFormer) reduce this cascading error.
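A back-of-the-envelope sketch of the cascading effect: if stage errors were independent, the chance that a cell survives all four stages is the product of the per-stage accuracies. The accuracy values below are invented for illustration and are not measurements of any system; independence is also an assumption that real pipelines only approximate.

```python
from math import prod

# Hypothetical per-stage accuracies, chosen only to illustrate the arithmetic.
stage_accuracy = {
    "table_detection": 0.98,
    "structure_recognition": 0.95,
    "cell_content_extraction": 0.99,
    "semantic_typing": 0.96,
}

end_to_end = prod(stage_accuracy.values())
print(round(end_to_end, 3))  # 0.885
```

Even with every stage at 95% or better, the pipeline as a whole lands below 90% under these assumptions, which is the motivation for joint models.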

LLM-Based Document Understanding

Recent systems bypass the traditional pipeline entirely. A vision-language model (GPT-4V, Gemini) receives the document image directly and answers questions about it. This is simpler to deploy but less controllable: you cannot easily guarantee structured output or audit which parts of the document informed the answer.
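One common mitigation for the lack of output guarantees is to validate whatever the model returns before it enters downstream systems. The sketch below assumes the model was asked to reply with a JSON object; the field names and the `validate_extraction` helper are hypothetical, and no model is actually called.

```python
import json
from datetime import date
from decimal import Decimal, InvalidOperation

# Hypothetical schema: field name -> parser that raises on malformed input.
FIELD_PARSERS = {
    "invoice_number": str,
    "date": date.fromisoformat,
    "total": Decimal,
}


def validate_extraction(raw_response):
    """Return (record, errors); record is None if anything failed."""
    try:
        data = json.loads(raw_response)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["top-level value is not an object"]
    record, errors = {}, []
    for field, parse in FIELD_PARSERS.items():
        if field not in data:
            errors.append(f"missing field: {field}")
            continue
        try:
            record[field] = parse(str(data[field]))
        except (ValueError, InvalidOperation):
            errors.append(f"unparseable {field}: {data[field]!r}")
    return (record if not errors else None), errors


good, good_errors = validate_extraction(
    '{"invoice_number": "INV-001", "date": "2024-01-05", "total": "1249.50"}'
)
bad, bad_errors = validate_extraction('{"invoice_number": "INV-001", "total": "about 1200"}')
print(bad_errors)  # ['missing field: date', "unparseable total: 'about 1200'"]
```

Validation catches malformed output but not plausible-looking wrong values, so it complements rather than replaces auditing against the source page.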

Unified Generative Document Models

A second generation of OCR-free systems collapses detection, OCR, layout parsing, and KIE into a single generative model that emits structured text (Markdown, HTML, or JSON) directly from a page image:

- UDOP (Tang et al., 2023) unifies vision, text, and layout under a prompt-conditioned encoder-decoder that generates structured output.

- Pix2Struct (Lee et al., 2023) pretrains by predicting screenshot HTML, transferring to charts, infographics, UIs, and documents.

- Kosmos-2.5 (Lv et al., 2023) targets text-rich images with both Markdown and grounded text outputs.

- GOT-OCR 2.0 (Wei et al., 2024) treats OCR as a general "image to arbitrary-format text" generation task, supporting plain text, Markdown, LaTeX, and table HTML in a single decoder.

- olmOCR (Allen AI, 2025) is an open-weight vision-language model for PDF-to-text conversion, fine-tuned on a few hundred thousand pages from crawled PDFs and optimized for high-throughput pretraining-data extraction.

- DocLLM (Wang et al., 2024) extends a decoder-only LLM with disentangled spatial attention over text bounding boxes, producing layout-aware language modeling without a separate vision encoder.

This pattern, amortizing the entire document understanding pipeline by pretraining a single conditional model, is structurally identical to the amortized-inference-via-pretraining idea behind tabular foundation models: spend compute upfront on a synthetic-or-scraped distribution so that a single forward pass replaces a multi-stage pipeline at deployment time.

Open-Source Document Conversion Stacks

Production systems increasingly ship as full conversion pipelines rather than single models:

- Marker (Datalab, 2024) converts PDFs to Markdown using layout detection, OCR fallbacks, and equation/table sub-models.

- Docling (IBM Research, 2024) is an open Python toolkit producing unified Markdown/JSON output from PDF, DOCX, HTML, and images, with TableFormer-style structure recognition.

- MinerU (Shanghai AI Lab, 2024) targets scientific PDFs with strong formula and table extraction, designed for LLM pretraining-data prep.

Canonical Examples

Invoice processing

An invoice has a predictable schema: vendor name, invoice number, date, line items (description, quantity, unit price, amount), subtotal, tax, total. A KIE model trained on labeled invoices can extract these fields with 90-95% F1. The spatial structure (line items in a table, total at the bottom) provides strong signal beyond the text alone.
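The schema also implies arithmetic relationships that can be used to sanity-check extracted values. A minimal sketch, assuming the fields have already been parsed into `Decimal` values; the `check_invoice` helper and the sample figures are invented for illustration.

```python
from decimal import Decimal as D


def check_invoice(line_items, subtotal, tax, total):
    """Return a list of arithmetic inconsistencies among extracted fields."""
    problems = []
    for index, item in enumerate(line_items):
        if item["quantity"] * item["unit_price"] != item["amount"]:
            problems.append(f"line {index}: quantity * unit_price != amount")
    if sum((item["amount"] for item in line_items), D("0")) != subtotal:
        problems.append("sum of line amounts != subtotal")
    if subtotal + tax != total:
        problems.append("subtotal + tax != total")
    return problems


items = [
    {"quantity": D("2"), "unit_price": D("19.99"), "amount": D("39.98")},
    {"quantity": D("1"), "unit_price": D("5.00"), "amount": D("5.00")},
]
print(check_invoice(items, D("44.98"), D("3.60"), D("48.58")))  # []
print(check_invoice(items, D("44.98"), D("3.60"), D("48.85")))  # ['subtotal + tax != total']
```

Exact equality is used here for simplicity; invoices with per-line rounding or discounts would need a tolerance or extra fields.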

Common Confusions

OCR accuracy is not the bottleneck

Modern OCR (from Google, AWS, Azure) achieves over 99% character accuracy on clean documents. The bottleneck is understanding structure: which text belongs to which table cell, which lines form a logical paragraph, what the reading order is. Document intelligence is about layout and semantics, not character recognition.

Digital PDFs are not easier than scanned documents

Digital PDFs contain embedded text, so OCR is unnecessary. But extracting structure from digital PDFs is still hard: text is stored as positioned characters with no semantic markup, tables have no explicit structure (just positioned strings), and multi-column layouts require non-trivial reading order inference. The PDF format was designed for display, not for information extraction.
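To illustrate what "positioned strings" means in practice, the sketch below rebuilds text lines from words that carry only coordinates. It assumes each word is given as `(text, x0, y0)` with `y0` increasing down the page (native PDF coordinates usually run bottom-up and would need flipping first); the function name and tolerance are illustrative.

```python
def group_into_lines(words, y_tolerance=3.0):
    """Cluster (text, x0, y0) words into lines by vertical position."""
    lines = []
    for text, x0, y0 in sorted(words, key=lambda w: (w[2], w[1])):
        if lines and abs(lines[-1]["y"] - y0) <= y_tolerance:
            lines[-1]["words"].append((x0, text))  # same baseline, same line
        else:
            lines.append({"y": y0, "words": [(x0, text)]})
    # Within each line, order words left to right.
    return [" ".join(text for _, text in sorted(line["words"])) for line in lines]


words = [
    ("Total", 50, 700.0),
    ("Qty", 50, 100.4),
    ("Price", 200, 100.0),
    ("42.00", 200, 700.5),
]
lines = group_into_lines(words)
print(lines)  # ['Qty Price', 'Total 42.00']
```

This recovers lines but not columns or table cells: "Qty Price" is still a flat string with no indication that it is a header row.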

LLMs do not replace document AI pipelines (yet)

Vision-language models can answer questions about documents, but they struggle with precise extraction tasks: exact dollar amounts, complete table parsing, consistent field extraction across thousands of documents. For enterprise-scale processing where accuracy and consistency matter, specialized document AI models still outperform general-purpose LLMs on structured extraction.

Exercises

Problem

A LayoutLM model uses discretized bounding box coordinates with 1000 bins per axis. How many total position embedding parameters does the model learn, assuming separate embedding tables for $x_0$, $y_0$, $x_1$, $y_1$, width, and height, each of dimension $d$?

Problem

Why does a text-only model (ignoring layout) fail on key information extraction from invoices? Give a concrete example where two tokens have identical text context but different meanings due to spatial position.

References

Canonical:

- Xu et al., LayoutLM: Pre-training of Text and Layout for Document AI (2020), KDD

- Xu et al., LayoutLMv3: Pre-training for Document AI with Unified Text and Image Masking (2022), ACM MM

- Huang et al., A Comprehensive Survey on Document AI (2023), arXiv:2305.08098

- Smock et al., PubTables-1M: Towards Comprehensive Table Extraction from Unstructured Documents (2022), CVPR

Unified generative models:

- Tang et al., Unifying Vision, Text, and Layout for Universal Document Processing (UDOP) (2023), CVPR, arXiv:2212.02623

- Lee et al., Pix2Struct: Screenshot Parsing as Pretraining for Visual Language Understanding (2023), ICML, arXiv:2210.03347

- Lv et al., Kosmos-2.5: A Multimodal Literate Model (2023), arXiv:2309.11419

- Wei et al., General OCR Theory: Towards OCR-2.0 via a Unified End-to-end Model (GOT-OCR 2.0) (2024), arXiv:2409.01704

- Wang et al., DocLLM: A Layout-Aware Generative Language Model for Multimodal Document Understanding (2024), arXiv:2401.00908

- Poznanski et al., olmOCR: Unlocking Trillions of Tokens in PDFs with Vision Language Models (Allen AI, 2025), arXiv:2502.18443

Open-source conversion stacks:

- Datalab, Marker: Convert PDFs to Markdown Quickly and Accurately (2024), github.com/VikParuchuri/marker

- IBM Research, Docling: Document Conversion Toolkit (2024), arXiv:2408.09869, github.com/DS4SD/docling

- Shanghai AI Lab, MinerU: A Comprehensive Tool for High-Quality PDF Content Extraction (2024), arXiv:2409.18839

Last reviewed: April 26, 2026
