
Table Extraction and Structure Recognition

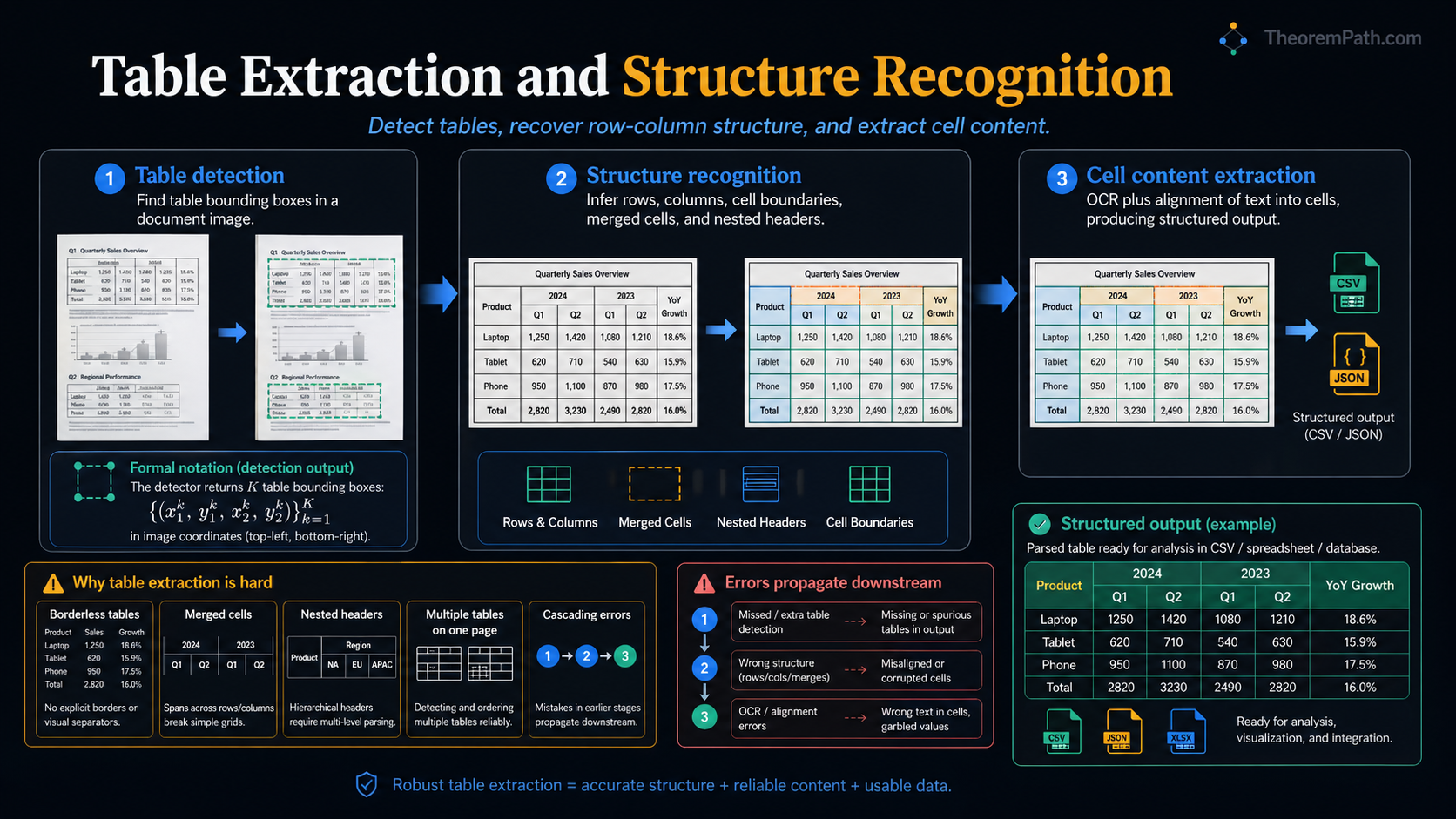

Detecting tables in documents, recognizing row and column structure, and extracting cell content. Why tables are hard: merged cells, borderless layouts, nested headers, and cascading pipeline errors.


Why This Matters

Tables encode structured data in a spatial format. Financial reports, research papers, invoices, and government forms all use tables to present information that is inherently relational: rows are records, columns are fields, cells are values. Extracting this structure programmatically is required for any document intelligence pipeline that needs to convert documents into queryable databases.


The problem is harder than it appears. Tables vary wildly in style: some have visible borders, some have none. Cells can span multiple rows or columns. Headers can be nested. A single page can contain multiple tables mixed with body text. Each of these variations breaks naive extraction approaches.

Mental Model

Table extraction is a three-stage pipeline:

- Table detection: where on the page is the table? (object detection)

- Structure recognition: what is the row/column grid? (graph prediction)

- Cell content extraction: what text is in each cell? (OCR + alignment)

Each stage passes its output to the next. Stages 2 and 3 cannot recover from errors at stage 1 (a missed table or a wrong boundary). Errors at stage 2 (a wrong row/column split) produce garbage in the output spreadsheet even if every character is read correctly.

Formal Setup and Notation

Table Detection

Given a document image $I$, table detection produces bounding boxes $\{b_1, \dots, b_k\}$ for the $k$ tables in the image. This is a standard object detection problem. The detection model (typically DETR or Faster R-CNN) is trained on annotated document images where tables are labeled with bounding boxes.

Evaluation uses standard detection metrics: IoU-thresholded precision and recall. A detection is correct if and only if its IoU with a ground-truth table exceeds a threshold (typically 0.5 or 0.75).
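The following is a minimal sketch of IoU-thresholded precision and recall in plain Python. It assumes boxes are `(x0, y0, x1, y1)` tuples, that predictions arrive sorted by descending confidence, and that matching is greedy and one-to-one, so each ground-truth table can be claimed by at most one prediction. This matching rule is a common convention in detection benchmarks, but it is an assumption here and not the exact protocol of any particular benchmark.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x0, y0, x1, y1), with x1 > x0 and y1 > y0

def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def detection_pr(preds: List[Box], gts: List[Box], thresh: float = 0.5) -> Tuple[float, float]:
    """Precision and recall with greedy one-to-one matching at an IoU threshold."""
    matched = set()
    tp = 0
    for p in preds:
        # find the best still-unmatched ground-truth box whose IoU exceeds thresh
        best, best_iou = None, thresh
        for k, g in enumerate(gts):
            if k in matched:
                continue
            v = iou(p, g)
            if v > best_iou:
                best, best_iou = k, v
        if best is not None:
            matched.add(best)
            tp += 1
    precision = tp / len(preds) if preds else 0.0
    recall = tp / len(gts) if gts else 0.0
    return precision, recall

gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
preds = [(1, 1, 10, 10), (50, 50, 60, 60)]  # one good box, one false positive
print(detection_pr(preds, gts, 0.5))  # (0.5, 0.5)
print(detection_pr(preds, gts, 0.9))  # (0.0, 0.0): the IoU of 0.81 no longer counts
```

The same prediction counts as correct at 0.5 and incorrect at 0.9. A detection score is therefore only meaningful when it is reported together with its threshold.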

Table Structure

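A table structure is a tuple $T = (R, C, S)$ where:

- $R$ is a set of row separators (horizontal lines)

- $C$ is a set of column separators (vertical lines)

- $S$ is a set of spanning cells, where $(r_1, r_2, c_1, c_2) \in S$ means a single cell spans from row $r_1$ to row $r_2$ and column $c_1$ to column $c_2$.

A simple table without spanning cells has $S = \emptyset$ and $(|R| + 1)(|C| + 1)$ cells, counting only interior separators in $R$ and $C$.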
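The $(R, C, S)$ tuple maps directly onto a small data structure. The sketch below makes three assumptions of its own: `row_seps` and `col_seps` hold only interior separator coordinates, spans use inclusive 0-based indices, and spans do not overlap. The class name and its fields are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple

Span = Tuple[int, int, int, int]  # (r1, r2, c1, c2), inclusive, 0-based

@dataclass
class TableStructure:
    row_seps: List[float]                  # R: y-coordinates of interior row separators
    col_seps: List[float]                  # C: x-coordinates of interior column separators
    spans: FrozenSet[Span] = frozenset()   # S: spanning cells

    @property
    def n_rows(self) -> int:
        return len(self.row_seps) + 1

    @property
    def n_cols(self) -> int:
        return len(self.col_seps) + 1

    def cells(self) -> List[Span]:
        """Every logical cell as (r1, r2, c1, c2); ordinary cells have r1 == r2 and c1 == c2."""
        covered = set()
        for r1, r2, c1, c2 in self.spans:
            for r in range(r1, r2 + 1):
                for c in range(c1, c2 + 1):
                    covered.add((r, c))
        # grid slots not absorbed by any span remain single cells
        singles = [(r, r, c, c)
                   for r in range(self.n_rows)
                   for c in range(self.n_cols)
                   if (r, c) not in covered]
        return sorted(singles + list(self.spans))

plain = TableStructure(row_seps=[10, 20, 30, 40], col_seps=[50, 100, 150])
print(plain.n_rows, plain.n_cols, len(plain.cells()))  # 5 4 20
merged = TableStructure([10, 20, 30, 40], [50, 100, 150], frozenset({(0, 0, 1, 3)}))
print(len(merged.cells()))  # 18: three grid slots collapse into one header cell
```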

Core Definitions

Bordered tables have visible lines separating rows and columns. These are easier to parse: line detection (Hough transform or CNN-based) identifies the grid directly.
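The sketch below is a simpler stand-in for the Hough transform or a CNN: a projection profile over a binarized page. It counts the dark pixels in each image row and each image column. Any row or column that is almost fully dark is treated as a ruling line, and runs of adjacent hits (thick lines) collapse to their midpoint. It assumes a deskewed image whose rules span nearly the full table width or height, and the `min_fill` default is an arbitrary choice.

```python
import numpy as np

def ruling_positions(ink: np.ndarray, axis: int, min_fill: float = 0.9) -> list:
    """Pixel indices of ruling lines in a boolean ink mask (True = dark pixel).

    axis=1 averages across each image row, giving horizontal rules;
    axis=0 averages down each image column, giving vertical rules."""
    fill = ink.mean(axis=axis)
    idx = np.flatnonzero(fill >= min_fill)
    lines, start = [], None
    for k, i in enumerate(idx):
        if start is None:
            start = i
        # close the current run when the next hit is not adjacent
        if k == len(idx) - 1 or idx[k + 1] != i + 1:
            lines.append(int((start + i) // 2))
            start = None
    return lines

ink = np.zeros((30, 40), dtype=bool)
ink[[0, 15, 29], :] = True   # three horizontal rules
ink[:, [0, 20, 39]] = True   # three vertical rules
print(ruling_positions(ink, axis=1))  # [0, 15, 29]
print(ruling_positions(ink, axis=0))  # [0, 20, 39]
```

The intersections of the two lists define the grid. Because a line only registers when it is nearly solid across the page, this simple version misses dashed or partial rules. A Hough transform or a learned line detector tolerates those better.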

Borderless tables use whitespace alignment instead of lines. The challenge is inferring implicit column boundaries from text positions. Slight misalignment or inconsistent spacing breaks column detection.
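Inferring implicit column boundaries can be sketched as finding empty stretches of the x-axis. The code sorts every word's horizontal extent, merges overlapping extents, and places a separator in the middle of each gap at least `min_gap` wide. The input format (one `(x0, x1)` pair per word) and the threshold are assumptions made for illustration. This is a simplified version of the whitespace idea, not the algorithm of any particular library.

```python
from typing import List, Tuple

def column_gaps(words: List[Tuple[float, float]], min_gap: float = 5.0) -> List[float]:
    """Column separators for a borderless table, from word x-extents (x0, x1).

    A separator is placed at the midpoint of every stretch of the x-axis
    at least `min_gap` wide that no word covers."""
    if not words:
        return []
    spans = sorted(words)
    seps = []
    cur_end = spans[0][1]
    for x0, x1 in spans[1:]:
        if x0 - cur_end >= min_gap:
            seps.append((cur_end + x0) / 2)
        cur_end = max(cur_end, x1)
    return seps

words = [(0, 25), (0, 30), (50, 70), (52, 80), (100, 130), (105, 120)]
print(column_gaps(words))               # [40.0, 90.0]
print(column_gaps(words + [(20, 60)]))  # [90.0]: one straddling word hides a column
```

The second call shows the fragility described above. A single word that bridges a gap, such as a wide header or a misaligned value, erases a column boundary entirely.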

Spanning cells (merged cells) occupy multiple rows, columns, or both. A header reading "Financial Results Q1-Q4" might span four columns. Detecting which cells are merged requires understanding both the visual layout and the semantic grouping.

Nested headers have multiple levels: a top-level header ("Revenue") spanning sub-headers ("Q1", "Q2", "Q3", "Q4"). Representing this requires a tree structure, not a flat grid.
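A header tree can be flattened into one label path per data column. Each leaf becomes one column, and an internal node's column span equals its number of leaves. The `Header` class and the example labels are hypothetical, chosen only to mirror the "Revenue" example.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Header:
    label: str
    children: List["Header"] = field(default_factory=list)

def leaf_paths(node: Header, prefix: tuple = ()) -> List[tuple]:
    """One label path per leaf, i.e. per data column, from the root down."""
    path = prefix + (node.label,)
    if not node.children:
        return [path]
    out = []
    for child in node.children:
        out.extend(leaf_paths(child, path))
    return out

header = [Header("Region"),
          Header("Revenue", [Header(q) for q in ("Q1", "Q2", "Q3", "Q4")])]
columns = [p for h in header for p in leaf_paths(h)]
print(columns[:2])                 # [('Region',), ('Revenue', 'Q1')]
print(len(leaf_paths(header[1])))  # 4, the colspan of "Revenue"
```

Keeping the full path, for example `('Revenue', 'Q1')`, preserves the hierarchy that a flat grid would lose.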

Main Theorems

Table Structure Recognition as Cell Adjacency Prediction

Statement

Table structure can be formulated as a graph prediction problem. Given detected text segments (from OCR) within a table region, define a graph $G = (V, E)$ where:

- $V = \{v_1, \dots, v_n\}$ are text segments

- $E \subseteq V \times V$ are adjacency edges

For each pair $(v_i, v_j)$, the model predicts

$$P(\text{same-row} \mid v_i, v_j) \quad \text{and} \quad P(\text{same-column} \mid v_i, v_j)$$

using features derived from text content, bounding box geometry (distance, alignment, relative position), and visual context. The predicted edges define equivalence classes. Text segments connected by same-row edges belong to the same row, and segments connected by same-column edges belong to the same column.

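The full table grid is reconstructed by finding connected components under each relation type. Spanning cells need one extra step. A merged cell's text has same-column edges to every column it spans (and same-row edges to every row it spans), so a plain connected-components pass would fuse those columns into one. One way to handle this is to exclude spanning segments when forming the components, then attach each of them to every row or column it links to.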
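The sketch below shows grid reconstruction with union-find and why spanning segments need separate treatment. It assumes the pairwise predictions have already been thresholded into an edge list. It also assumes the set of spanning segments is known in advance. That set is a hypothetical input: in practice it would come from the model or from a heuristic. The function names are illustrative.

```python
from collections import defaultdict

def components(n, edges, skip=frozenset()):
    """Union-find over n segments; edges touching a segment in `skip` are ignored."""
    parent = list(range(n))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for i, j in edges:
        if i in skip or j in skip:
            continue
        parent[find(i)] = find(j)
    groups = defaultdict(list)
    for v in range(n):
        if v not in skip:
            groups[find(v)].append(v)
    return sorted(groups.values(), key=min)  # deterministic order

def attach_spanning(groups, edges, spanning):
    """For each spanning segment, the indices of the groups it has an edge to."""
    member = {v: k for k, g in enumerate(groups) for v in g}
    spans = {}
    for s in spanning:
        linked = set()
        for i, j in edges:
            if i == s and j in member:
                linked.add(member[j])
            elif j == s and i in member:
                linked.add(member[i])
        spans[s] = sorted(linked)
    return spans

# Header row: 0 "Name", 1 "Revenue" (spans columns 1-2); data row: 2 "Acme", 3 "10", 4 "20".
col_edges = [(0, 2), (1, 3), (1, 4)]
print(components(5, col_edges))               # [[0, 2], [1, 3, 4]]: columns 1 and 2 fused
cols = components(5, col_edges, skip={1})
print(cols)                                   # [[0, 2], [3], [4]]
print(attach_spanning(cols, col_edges, {1}))  # {1: [1, 2]}
```

Run naively, the pass finds two columns where the table has three. With the spanning segment set aside, the three columns come out correctly, and "Revenue" is recorded as spanning columns 1 and 2.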

Intuition

Instead of predicting the grid directly (which requires knowing the number of rows and columns in advance), predict pairwise relationships between text segments. Two text chunks are "in the same row" if they are horizontally aligned, and "in the same column" if they are vertically aligned. This local prediction is easier than global grid prediction, and it handles irregular tables where the grid is not uniform.
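The alignment intuition can be made concrete with a rule-based stand-in for the learned pairwise classifier. Two boxes are treated as same-row when their vertical extents overlap substantially, and as same-column when their horizontal extents do. This baseline and its 0.5 threshold are hypothetical. It is not the learned model the formulation describes, and it is easily fooled by wrapped or indented text.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x0, y0, x1, y1)

def overlap_ratio(a0: float, a1: float, b0: float, b1: float) -> float:
    """1-D overlap of [a0, a1] and [b0, b1], divided by the shorter interval."""
    inter = max(0.0, min(a1, b1) - max(a0, b0))
    shorter = min(a1 - a0, b1 - b0)
    return inter / shorter if shorter > 0 else 0.0

def same_row(a: Box, b: Box, thresh: float = 0.5) -> bool:
    # horizontally aligned: the vertical extents overlap substantially
    return overlap_ratio(a[1], a[3], b[1], b[3]) >= thresh

def same_col(a: Box, b: Box, thresh: float = 0.5) -> bool:
    # vertically aligned: the horizontal extents overlap substantially
    return overlap_ratio(a[0], a[2], b[0], b[2]) >= thresh

a = (0, 0, 20, 10)    # a cell in the first row
b = (50, 1, 70, 11)   # same row, next column (1 px vertical jitter)
c = (2, 30, 18, 40)   # next row, same column
print(same_row(a, b), same_col(a, b))  # True False
print(same_row(a, c), same_col(a, c))  # False True
```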

Proof Sketch

No formal proof; this is an architectural formulation. Empirically, adjacency-based methods (e.g., on the PubTables-1M benchmark) achieve 95%+ cell adjacency F1 on tables with regular structure, but performance drops to 75-85% on tables with heavy spanning and nested headers.
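Cell adjacency F1 can be sketched as set overlap between adjacency relations. Each non-empty cell is related to its nearest non-empty neighbour to the right ("H") and below ("V"), and precision and recall are computed over those relation tuples. This is a simplified illustration under two assumptions: there are no spanning cells, and cell texts are unique so they can serve as identifiers. It is not the exact scoring code of any benchmark.

```python
from typing import List, Set, Tuple

Relation = Tuple[str, str, str]  # (cell_text, neighbour_text, "H" or "V")

def adjacency_relations(grid: List[List[str]]) -> Set[Relation]:
    """Nearest non-empty right ("H") and lower ("V") neighbour of every cell."""
    rels = set()
    for row in grid:
        cells = [t for t in row if t]
        rels.update((a, b, "H") for a, b in zip(cells, cells[1:]))
    for col in zip(*grid):  # assumes a rectangular grid
        cells = [t for t in col if t]
        rels.update((a, b, "V") for a, b in zip(cells, cells[1:]))
    return rels

def adjacency_f1(pred: List[List[str]], gold: List[List[str]]) -> float:
    p, g = adjacency_relations(pred), adjacency_relations(gold)
    tp = len(p & g)
    if tp == 0:
        return 0.0
    precision, recall = tp / len(p), tp / len(g)
    return 2 * precision * recall / (precision + recall)

gold = [["Name", "Q1", "Q2"], ["Acme", "10", "20"], ["Beta", "30", "40"]]
pred = [["Name", "Q1", "Q2"], ["Acme", "10", "20"], ["Beta", "40", "30"]]  # last row swapped
print(adjacency_f1(gold, gold))  # 1.0
print(adjacency_f1(pred, gold))  # 8 of 12 relations match, so F1 is about 0.667
```

A single swapped pair of values costs a third of the score, because it breaks relations in both the horizontal and vertical directions.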

Why It Matters

This formulation decouples structure recognition from the number of rows and columns. A model trained on tables with 3-10 rows can generalize to tables with 50 rows because it only predicts local pairwise relationships. The graph-based approach also provides interpretable intermediate outputs: you can visualize which text segments the model believes belong to the same row or column and debug errors.

Failure Mode

Pairwise prediction has $O(n^2)$ complexity in the number of text segments $n$. Large tables with hundreds of cells become expensive. The approach also assumes OCR has correctly segmented text into meaningful units (words or lines). If OCR merges two columns into one text segment or splits a single cell into multiple segments, the graph structure is corrupted at the input level.
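The quadratic growth is easy to quantify: $n$ segments give $n(n-1)/2$ unordered pairs per relation type. The sketch below also includes a hypothetical pruning step, not taken from any specific model. It sends only pairs whose vertical extents overlap to a same-row classifier, using a sort-and-sweep that stops scanning once boxes start below the current one.

```python
from typing import List, Tuple

def n_pairs(n: int) -> int:
    """Unordered pairs among n text segments: n(n-1)/2, i.e. O(n^2)."""
    return n * (n - 1) // 2

def row_candidates(boxes: List[Tuple[float, float, float, float]]) -> List[Tuple[int, int]]:
    """Hypothetical pruning: keep only pairs whose vertical extents overlap.

    Boxes are (x0, y0, x1, y1). After sorting by top edge, once a box starts at
    or below the current box's bottom edge, no later box can overlap it."""
    order = sorted(range(len(boxes)), key=lambda i: boxes[i][1])
    pairs = []
    for a, i in enumerate(order):
        for j in order[a + 1:]:
            if boxes[j][1] >= boxes[i][3]:
                break
            pairs.append((min(i, j), max(i, j)))
    return pairs

print(n_pairs(100), n_pairs(1000))  # 4950 499500
# 10 rows x 10 columns of word boxes, rows 20 px apart and 10 px tall
boxes = [(30 * c, 20 * r, 30 * c + 25, 20 * r + 10) for r in range(10) for c in range(10)]
print(len(row_candidates(boxes)))   # 450 instead of 4950
```

Pruning like this trades recall for speed. A multi-line cell whose segments do not overlap vertically would never be scored as same-row, which compounds the OCR segmentation problem described above.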

Tools and Approaches

TableTransformer (DETR-based)

TableTransformer (Microsoft, 2022) uses a DETR architecture for both table detection and structure recognition. For structure recognition, it detects rows, columns, and spanning cells as separate object classes within the table image. The model is trained on the PubTables-1M dataset (1 million tables from scientific papers).

Strengths: end-to-end, handles spanning cells explicitly, pretrained model available. Weaknesses: trained primarily on scientific papers, so it struggles with invoices, financial documents, and other non-academic layouts.

PaddleOCR Table Module

PaddleOCR includes a table recognition module that combines SLANet (Structure Location Alignment Network) for structure prediction with its standard OCR pipeline for cell content extraction. SLANet predicts HTML table tags directly, producing output like <table><tr><td>...</td></tr></table>. This bypasses explicit grid prediction by generating the table markup as a sequence.

TableFormer

TableFormer (Nassar et al., 2022) treats structure recognition as a sequence-to-sequence problem: given a cropped table image, generate a serialized HTML representation that encodes rows, columns, and spanning cells. The decoder produces both the structural tags and pointers to cell bounding boxes, allowing direct alignment to OCR text. TableFormer is the backbone of IBM's Docling document conversion stack.

UniTable

UniTable (Peng et al., 2024) unifies the three table tasks (structure recognition, cell bounding-box detection, and cell-content extraction) under a single language-modeling objective, initialized by self-supervised pretraining on unannotated table images. The unification reduces the cascading-error problem that plagues stage-wise pipelines and achieves state-of-the-art results on PubTabNet and FinTabNet.

Camelot (for Digital PDFs)

Camelot is a Python library that extracts tables from digital PDFs (where text is embedded, not scanned). It uses two strategies:

- Lattice mode: detects visible lines using image processing, then parses the grid formed by intersecting lines.

- Stream mode: detects columns from whitespace gaps in text positioning.

Camelot does not work on scanned documents (it needs embedded text coordinates). For digital PDFs with clear table formatting, it is fast and accurate. For complex layouts, it fails silently.

Why Tables Are Hard

Specific failure cases that break table extraction:

- Multi-line cells: a cell contains a paragraph of text wrapping across multiple lines. OCR sees multiple text segments that should be one cell.

- Rotated text: column headers rotated 90 degrees to save space. Standard OCR and structure models do not expect rotated text.

- Tables spanning pages: a table starts on page 3 and continues on page 4. Each page is processed independently, so the system produces two separate tables with inconsistent structure.

- Tables within tables: a cell contains a nested sub-table. Most models detect only one level of table structure.

- Color-coded structure: rows are distinguished by alternating background colors with no border lines. Models relying on line detection miss the structure entirely.
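For the page-spanning case, one simple post-processing heuristic is to treat a table as a continuation when its first row exactly repeats the previous table's header. This heuristic is hypothetical and not taken from any specific tool. It will not catch continuations that omit the header, so it illustrates the idea rather than fixing the problem.

```python
from typing import List

Table = List[List[str]]  # list of rows; row 0 is the header

def stitch_pages(tables: List[Table]) -> List[Table]:
    """Merge per-page tables when a table's first row repeats the previous header."""
    merged: List[Table] = []
    for t in tables:
        if merged and merged[-1] and t and t[0] == merged[-1][0]:
            # continuation: drop the repeated header, append copies of the body rows
            merged[-1].extend(row[:] for row in t[1:])
        else:
            merged.append([row[:] for row in t])
    return merged

page3 = [["Item", "Cost"], ["A", "1"]]
page4 = [["Item", "Cost"], ["B", "2"]]  # continuation repeats the header
other = [["X", "Y", "Z"], ["1", "2", "3"]]
result = stitch_pages([page3, page4, other])
print(len(result), result[0])  # 2 [['Item', 'Cost'], ['A', '1'], ['B', '2']]
```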

Common Confusions

Table detection is not table structure recognition

Finding a table on a page (detection) tells you where the table is. It says nothing about the internal structure: how many rows and columns, which cells are merged, where headers end and data begins. These are separate problems requiring separate models. High detection accuracy does not imply high structure recognition accuracy.

HTML output does not mean correct structure

Models that generate HTML table tags (like SLANet) can produce valid HTML that encodes the wrong structure. A <td colspan="3"> is correct syntax but wrong if the cell actually spans 2 columns. Always evaluate against ground-truth cell adjacency, not HTML validity.
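Checking structure rather than validity starts with expanding the markup into a grid. The sketch below uses Python's standard-library `html.parser` to place each `<td>`/`<th>` into an occupancy grid, honouring `rowspan` and `colspan`. It compares two HTML strings that are both well-formed but encode different tables. The `GridBuilder` and `to_grid` names are illustrative, and the sketch ignores cell text and nested tables.

```python
from html.parser import HTMLParser

class GridBuilder(HTMLParser):
    """Expand <tr>/<td>/<th> with rowspan/colspan into a (row, col) -> cell id map."""

    def __init__(self):
        super().__init__()
        self.grid = {}
        self.row = -1
        self.col = 0
        self.next_id = 0

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.row += 1
            self.col = 0
        elif tag in ("td", "th"):
            a = dict(attrs)
            rs, cs = int(a.get("rowspan") or 1), int(a.get("colspan") or 1)
            while (self.row, self.col) in self.grid:  # skip slots filled by rowspans above
                self.col += 1
            for r in range(self.row, self.row + rs):
                for c in range(self.col, self.col + cs):
                    self.grid[(r, c)] = self.next_id
            self.next_id += 1
            self.col += cs

def to_grid(html: str):
    b = GridBuilder()
    b.feed(html)
    if not b.grid:
        return []
    n_rows = max(r for r, _ in b.grid) + 1
    n_cols = max(c for _, c in b.grid) + 1
    return [[b.grid.get((r, c)) for c in range(n_cols)] for r in range(n_rows)]

right = ('<table><tr><td colspan="2">Revenue</td><td>Cost</td></tr>'
         '<tr><td>1</td><td>2</td><td>3</td></tr></table>')
wrong = right.replace('colspan="2"', 'colspan="3"')  # still valid HTML
print(to_grid(right))  # [[0, 0, 1], [2, 3, 4]]
print(to_grid(wrong))  # [[0, 0, 0, 1], [2, 3, 4, None]]: a ragged, wrong grid
```

Both strings parse without complaint. Only the expanded grids, or adjacency relations derived from them, reveal that the second encodes the wrong table.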

Digital PDFs are not easy

Embedded text in digital PDFs provides character positions but not table structure. Characters are stored as positioned glyphs with no semantic grouping into cells, rows, or columns. Reconstructing the table from a soup of positioned characters is a non-trivial spatial clustering problem.
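The first step of that clustering can be sketched in two passes. Glyphs are grouped into text lines by baseline, and each line is then split into words wherever the horizontal gap between consecutive glyphs exceeds a threshold. The glyph tuple layout `(char, x0, x1, baseline_y)` and both tolerances are assumptions; real PDF extraction libraries expose richer glyph objects. Grouping the resulting words into cells and columns would be a further step.

```python
from typing import List, Tuple

Glyph = Tuple[str, float, float, float]  # (char, x0, x1, baseline_y)

def glyphs_to_lines(glyphs: List[Glyph], y_tol: float = 2.0, gap: float = 3.0) -> List[List[str]]:
    """Group glyphs into lines (baselines within y_tol), then into words (x-gap > gap)."""
    lines: List[List[Glyph]] = []
    for g in sorted(glyphs, key=lambda g: (g[3], g[1])):
        if lines and abs(lines[-1][0][3] - g[3]) <= y_tol:
            lines[-1].append(g)
        else:
            lines.append([g])
    result = []
    for line in lines:
        line.sort(key=lambda g: g[1])  # left to right
        words, cur = [], line[0][0]
        for prev, g in zip(line, line[1:]):
            if g[1] - prev[2] > gap:  # whitespace between glyphs starts a new word
                words.append(cur)
                cur = g[0]
            else:
                cur += g[0]
        words.append(cur)
        result.append(words)
    return result

glyphs = [("Q", 0, 5, 100), ("1", 5, 10, 100), ("1", 40, 45, 100), ("0", 45, 50, 100),
          ("Q", 0, 5, 120), ("2", 5, 10, 120), ("2", 40, 45, 120), ("0", 45, 50, 120)]
print(glyphs_to_lines(list(reversed(glyphs))))  # [['Q1', '10'], ['Q2', '20']]
```

The input order does not matter, since PDFs need not store glyphs in reading order. The thresholds do matter: a tight column gap or a superscript with a shifted baseline is enough to merge or split cells incorrectly.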

Exercises

Problem

A table has 5 rows and 4 columns with no spanning cells. A pairwise cell adjacency model must predict same-row and same-column relationships for all pairs of cells. How many pairwise predictions does the model make?

Problem

A table has a header row where one cell spans columns 2 through 4 (colspan=3). Below this header, columns 2, 3, and 4 each have separate data cells. Explain how the cell adjacency graph represents this spanning cell and why naive row/column counting would fail.

Evaluation

Standard evaluation moved away from raw HTML diff metrics toward structure-aware ones. GriTS (Smock et al., 2023) compares predicted and ground-truth tables directly as 2D grids of cells. Instead of tree edit distance over HTML (the approach of TEDS, Zhong et al., 2020), it uses a two-dimensional most-similar-substructures matching and reports separate scores for cell topology, content, and location. It is now the default metric on PubTables-1M and FinTabNet.

For end-to-end document parsing (not tables alone), OmniDocBench (Ouyang et al., 2024) provides a unified benchmark across nine document types with fine-grained annotations for layout, tables, formulas, and reading order.

References

Canonical:

- Smock et al., PubTables-1M: Towards Comprehensive Table Extraction from Unstructured Documents (2022), CVPR

- Zhong et al., Image-based Table Recognition: Data, Model, and Evaluation (2020), ECCV

- Nassar et al., TableFormer: Table Structure Understanding with Transformers (2022), CVPR, arXiv:2203.01017

Current:

- Smock et al., GriTS: Grid Table Similarity Metric for Table Structure Recognition (2023), ICDAR, arXiv:2203.12555

- Peng et al., UniTable: Towards a Unified Framework for Table Recognition via Self-Supervised Pretraining (2024), arXiv:2403.04822

- Ouyang et al., OmniDocBench: Benchmarking Diverse PDF Document Parsing with Comprehensive Annotations (2024), arXiv:2412.07626

- PaddleOCR Table Recognition documentation, github.com/PaddlePaddle/PaddleOCR

- Ly et al., Camelot: PDF Table Extraction for Humans (2019), github.com/camelot-dev/camelot

Last reviewed: April 26, 2026

Canonical graph


Required prerequisites

- Document Intelligence (layer 5 · tier 2)

- PaddleOCR and Practical OCR (layer 5 · tier 2)

Derived topics

No published topic currently declares this as a prerequisite.