Concentration Probability

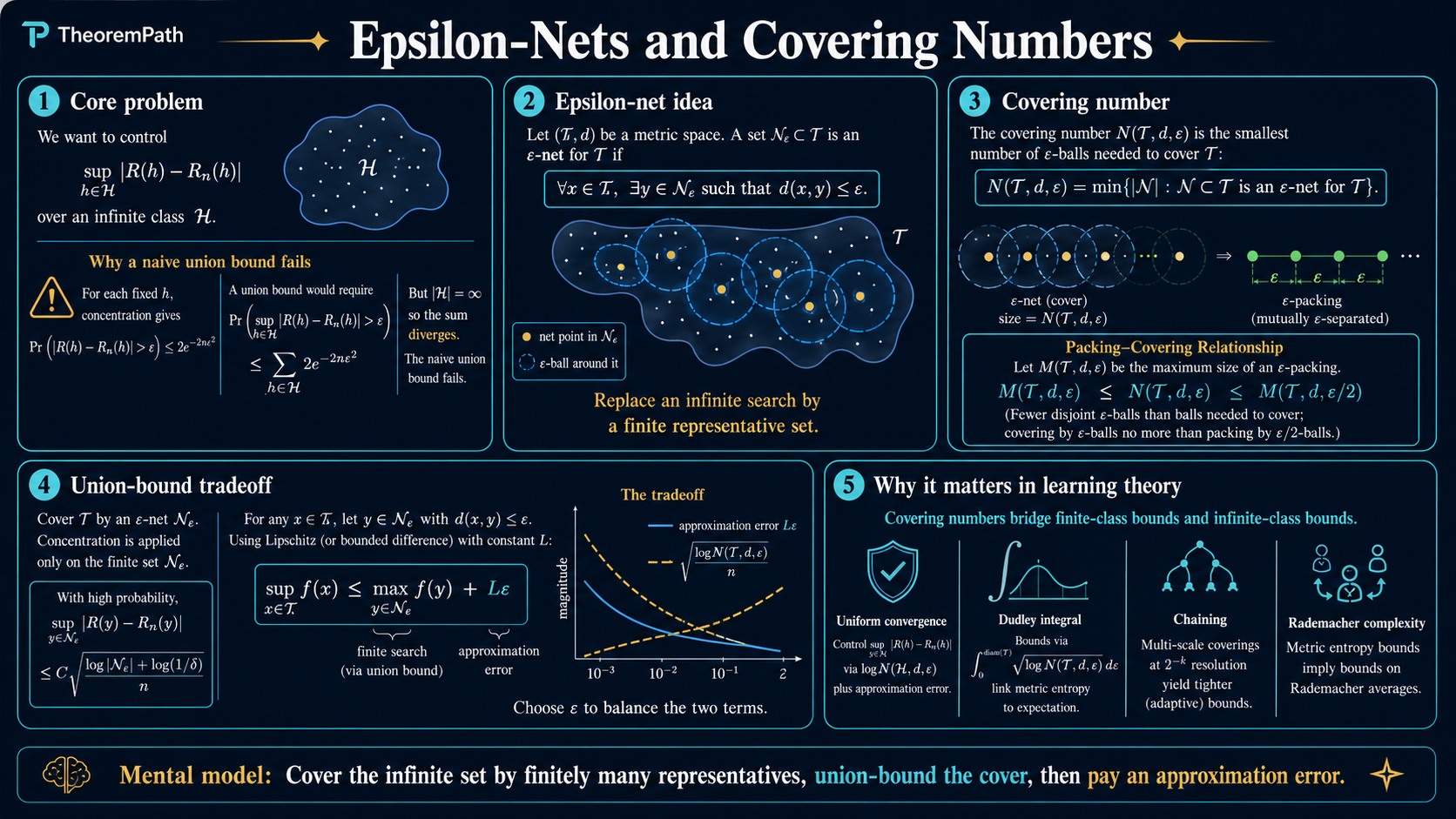

Epsilon-Nets and Covering Numbers

Discretizing infinite sets for concentration arguments: epsilon-nets, covering numbers, packing numbers, the Dudley integral, and the connection to Rademacher complexity.

Prerequisites

Why This Matters

The fundamental challenge in learning theory is controlling the supremum of a random process over an infinite set:

Hide overviewShow overview

For a finite class , you apply a concentration inequality to each and take a union bound. For an infinite class, the naive union bound over uncountably many hypotheses is useless.

Epsilon-nets solve this by discretizing: approximate the infinite set by a finite set of points (the epsilon-net), apply the union bound to the finite set, and bound the error of the approximation. The covering number measures how large this finite set needs to be. It is the bridge between "finite class" bounds and "infinite class" bounds.

Mental Model

Imagine you want to bound where is an infinite set and is "roughly continuous." Strategy:

- Pick a finite set of points such that every point in is within of some point in .

- Bound using a union bound over points.

- Bound for nearby points (Lipschitz continuity or similar).

- Combine: .

The covering number tells you how many points you need in step 1. Steps 2-4 trade the "approximation error" against the "union bound cost" .

Formal Definitions

Epsilon-Net (Covering)

An -net (or -covering) of a set in a metric space is a finite set such that for every , there exists with .

Equivalently, where is the closed ball of radius centered at .

Covering Number

The covering number is the minimum size of an -net of with respect to metric :

Smaller requires more points. As , the covering number grows. The rate of growth captures the "metric complexity" of .

Packing Number

The -packing number is the maximum size of a subset of whose points are mutually -separated:

A maximal packing is always a covering (if the packing is maximal, every point in is within of some packing point). This gives the fundamental relationship between covering and packing numbers.

Main Theorems

Covering-Packing Duality

Statement

For any set in a metric space and any :

That is, the covering number at scale is sandwiched between packing numbers at scales and .

Intuition

Upper bound (): A maximal -packing is automatically an -covering. If you cannot add any more -separated points, then every point in must be within of an existing packing point.

Lower bound (): If you have points that are pairwise -separated, then each requires its own "representative" in any -net (two -separated points cannot share the same -net point). So the covering must have at least points.

Proof Sketch

Upper bound: Take a maximal -packing . If some has for all , then is a larger -packing, contradicting maximality. So is an -covering and .

Lower bound: Let be a -packing with . Any -covering must contain a distinct point within of each (since points in are -apart, their -balls are disjoint). So .

Why It Matters

This duality is why covering and packing numbers are interchangeable (up to a factor of 2 in ) in most applications. You can use whichever is easier to compute. Packing numbers are often easier to bound by volume arguments; covering numbers are what you directly need in discretization arguments.

Failure Mode

The factor of 2 between the scales matters in precise calculations. In some information-theoretic lower bounds (Fano, Le Cam), you need packing numbers specifically, and the factor of 2 affects the final constants. For upper bounds in learning theory, the factor of 2 is absorbed into constants.

Volume-Based Covering Number Bounds

Covering Numbers for Euclidean Balls

For the unit ball in metric:

Proof idea (upper bound): Take a maximal -packing of (this is also an -covering, so ). By the packing property, the open balls of radius around points of are disjoint, and by the triangle inequality they are all contained in . Volume comparison gives , so:

where the final inequality uses (equivalently, iff ).

Proof idea (lower bound): A packing argument. Each -ball centered at a packing point has volume , and they are disjoint within . So the packing size is at most .

The logarithm of the covering number for Euclidean balls is : it grows linearly in the dimension and logarithmically in .

The Discretization Argument

The standard template for using covering numbers in learning theory:

Setup: Let be a random process indexed by , where each is centered and sub-Gaussian with parameter . We want to bound .

Step 1 (Discretize): Fix and let be an -net of .

Step 2 (Union bound on net): For each , sub-Gaussian concentration gives . By union bound over points:

Setting gives the bound with high probability.

Step 3 (Approximation): For any , let be its nearest net point. If (Lipschitz condition), then:

Step 4 (Optimize ): Balance the union bound cost against the approximation error .

The Dudley Integral

Instead of using a single epsilon-net at one scale, chaining uses nets at all scales simultaneously. This is Dudley's entropy integral.

Dudley Entropy Integral Bound

Statement

Let be a centered random process such that is sub-Gaussian with parameter for all . Then:

where is a universal constant and .

Intuition

The Dudley integral sums contributions from all scales . At coarse scales (large ), the covering number is small but the approximation captures only the rough structure. At fine scales (small ), the covering number is large but the approximation is precise. The integral optimally balances these scales.

The at each scale comes from the sub-Gaussian union bound: to control over sub-Gaussian variables, you pay . Integrating this across scales gives the Dudley bound.

Proof Sketch

Chaining construction: Choose epsilon-nets at geometrically decreasing scales: for Let be the nearest point to in the -net.

For any , telescope:

Each increment is sub-Gaussian with parameter .

At scale , the increment takes at most possible values. The sub-Gaussian maximum over these values is controlled by .

Summing over all scales and converting the sum to an integral gives the Dudley bound.

Why It Matters

The Dudley integral is the main tool for bounding the expected supremum of empirical processes. In learning theory, the empirical process is of this form. The Dudley integral converts the problem of bounding this supremum into computing (or bounding) covering numbers of the hypothesis class.

For the -dimensional unit ball: , so the Dudley integral gives . recovering the rates of VC theory.

Failure Mode

The Dudley integral can be loose by a factor compared to the tightest possible bound (given by the generic chaining / majorizing measures theorem of Talagrand). The Dudley integral uses a "worst-case" analysis at each scale, while generic chaining adapts to the local geometry of . For most applications in learning theory, Dudley is sufficient.

Connection to Rademacher Complexity

The Rademacher complexity of a function class on a sample is:

where are i.i.d. Rademacher () variables.

The process is sub-Gaussian with the metric on the function class (viewed as vectors in ).

Applying Dudley:

where is the restriction of to the sample. This connects covering numbers to generalization bounds via Rademacher complexity.

Canonical Examples

Covering number of a finite set

If (a finite set), then for all (the set is its own covering). For less than the minimum pairwise distance, . The Dudley integral reduces to . recovering the finite-class union bound.

Covering the unit sphere in R^d

The unit sphere has:

and . This is used in bounding the operator norm of random matrices: to control , discretize the sphere and union-bound.

Covering linear function classes

Let with data . The restriction to a sample gives vectors in with norm at most .

The covering number is: .

Plugging into Dudley gives Rademacher complexity , illustrating the chaining method on a parametric class.

Caveat — covering vs.\ direct bound. This rate has a spurious dimension dependence. A direct calculation gives the sharper, dimension-free bound: by Cauchy-Schwarz,

So the canonical Rademacher rate for norm-bounded linear classes is , independent of . The covering-number route is valid but loose in this example — useful for parametric classes whose geometry is otherwise opaque, but not the right benchmark for what is known about linear predictors. State Dudley as a general tool whose output may be improved by direct calculations when more structure is available.

Common Confusions

Covering numbers depend on the metric

The same set can have vastly different covering numbers under different metrics. Covering the ball in metric vs. metric vs. metric gives different numbers. Always specify the metric. In learning theory, the metric is usually the empirical norm on the sample.

The net does not have to be a subset of T

Some definitions require (internal covering), others allow (external covering, where is the ambient metric space). The difference is at most a factor of 2 in (an internal -net is an external -net, and an external -net of can be projected to an internal -net). Most learning theory results hold with either definition.

Covering number growth rate, not the number itself, is what matters

A covering number of looks huge, but if the log is , then the union bound cost is modest. What matters is as a function of and the dimension/complexity of , not the raw number.

Summary

- -net: finite set that approximates all of within

- Covering number : minimum epsilon-net size

- Packing number : maximum epsilon-separated subset

- Duality:

- Euclidean ball:

- Discretization argument: union bound on net + Lipschitz approximation

- Dudley integral:

- Connects to Rademacher complexity via covering numbers of

Exercises

Problem

Compute the covering number for the unit interval under the absolute value metric. What is its logarithm?

Problem

Use a covering number argument to bound where is a finite set and each is sub-Gaussian with parameter . Recover the standard result .

Problem

Let be the unit ball in with metric. Using the volume argument, prove that . Then compute the Dudley integral and show it is .

Related Comparisons

References

Canonical:

- Vershynin, High-Dimensional Probability (2018), Chapter 4

- van der Vaart & Wellner, Weak Convergence and Empirical Processes (1996), Chapter 2.5

- Dudley, Uniform Central Limit Theorems (1999)

Current:

-

Wainwright, High-Dimensional Statistics (2019), Chapter 5

-

Talagrand, Upper and Lower Bounds for Stochastic Processes (2014)

-

Boucheron, Lugosi, Massart, Concentration Inequalities (2013), Chapters 2-6

Next Topics

Building on epsilon-nets and covering numbers:

- Rademacher complexity: the data-dependent complexity measure that uses covering numbers

- VC dimension: combinatorial complexity connected to covering numbers via Sauer's lemma

- Generic chaining: Talagrand's refinement of the Dudley integral

Last reviewed: April 26, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

5- Concentration Inequalitieslayer 1 · tier 1

- Sub-Exponential Random Variableslayer 2 · tier 1

- Sub-Gaussian Random Variableslayer 2 · tier 1

- Symmetrization Inequalitylayer 3 · tier 1

- Contraction Inequalitylayer 3 · tier 2

Derived topics

3- Measure Concentration and Geometric Functional Analysislayer 3 · tier 1

- Empirical Processes and Chaininglayer 3 · tier 2

- Random Matrix Theory Overviewlayer 4 · tier 2