Sampling MCMC

Gibbs Sampling

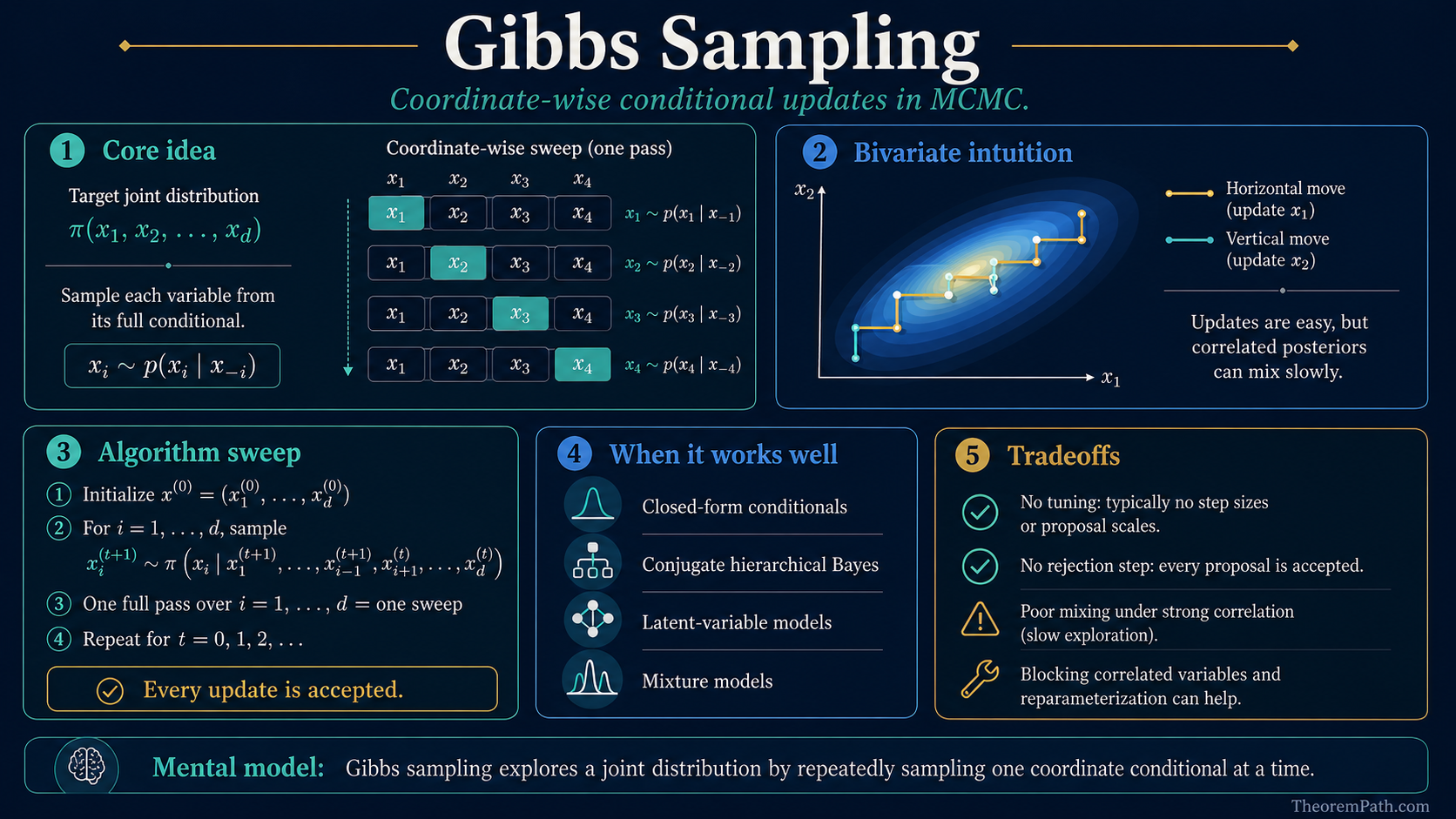

The classical conditional-update MCMC algorithm: sample each variable from its full conditional, accept every proposal, and then pay close attention to mixing, blocking, and hierarchical parameterization.

Prerequisites

Why This Matters

Gibbs sampling is a foundational MCMC algorithm with a long history in applied Bayesian statistics. It powered earlier-generation tools like BUGS and JAGS, and remains standard whenever a model has full conditionally conjugate structure. Modern general-purpose Bayesian workflows more often reach for Hamiltonian Monte Carlo (Stan defaults to NUTS) because Gibbs mixes poorly in high-dimensional or strongly correlated posteriors. Gibbs still wins when the conditionals are closed-form: hierarchical models with conjugate priors, mixture models, LDA-style latent-variable models, and any setting where sampling from is cheap.

Hide overviewShow overview

Its appeal is simplicity: instead of designing a and tuning acceptance rates, you just sample each variable from its conditional distribution given the rest. No tuning parameters, no rejections.

Mental Model

The target is a joint distribution that is hard to sample from directly. The are often tractable when the joint is not, for example in Bayesian models with conjugate priors, where each reduces to a standard distribution.

Gibbs sampling cycles through the coordinates, drawing each from its full conditional given the current values of the others. The resulting Markov chain has as its .

Formal Setup and Notation

Let be the target joint distribution. We assume we can sample from each full conditional distribution.

Full Conditional Distribution

The full conditional distribution of variable given all other variables is:

where denotes all variables except . In practice, to derive the full conditional for , you write out the full joint density, treat everything that does not involve as a constant, and recognize the resulting kernel as a known distribution.

Gibbs Sampler (Systematic Scan)

Given target and initial state :

At iteration , update each component in sequence:

- Draw

- Draw

- Continue through all variables...

- Draw

Note: each update uses the most recent values of all other variables.

Random Scan Gibbs Sampler

An alternative to systematic scan: at each iteration, choose an index uniformly at random (or with specified probabilities), and update only from .

Random scan has cleaner theoretical properties (it satisfies detailed balance directly), while systematic scan is more commonly used in practice because it updates all variables every iteration.

Gibbs as a Special Case of Metropolis-Hastings

The central theoretical result about Gibbs sampling is that it is a special case of MH where the acceptance probability is always 1.

Gibbs Sampling Has Acceptance Probability 1

Statement

Consider a single Gibbs update for variable : propose from . This is equivalent to a Metropolis-Hastings step with proposal and acceptance probability:

Every proposed move is accepted.

Intuition

The proposal distribution is exactly the full conditional. The optimal distribution for given everything else. There is no mismatch between proposal and target to correct for, so the acceptance ratio evaluates to 1. You are proposing from exactly the right distribution.

Proof Sketch

By definition of the full conditional: .

The acceptance ratio becomes:

The and terms cancel perfectly.

Why It Matters

A single Gibbs update is a valid MH step with acceptance probability 1, so it preserves as the stationary distribution and avoids proposal tuning and rejected samples. The full guarantees, however, depend on the scan type: random-scan Gibbs is reversible (satisfies detailed balance with respect to ) and is most directly an MH kernel; systematic-scan Gibbs preserves as a composition of MH kernels but is generally only -invariant and not reversible. Convergence in either case still requires the standard Markov-chain assumptions on top of -invariance: -irreducibility, Harris recurrence on general state spaces, aperiodicity where relevant, and connected positive-density support of . Compatibility of the full conditionals (a single joint that realizes them all) is implicit and matters in practice.

Failure Mode

The acceptance rate of 1 does not mean Gibbs sampling is always efficient. The chain can still mix slowly if the variables are highly correlated. When and are strongly correlated, updating one at a time while fixing the other leads to slow, diffusive exploration. The chain takes small steps along the correlation ridge. This is the main weakness of component-wise Gibbs sampling.

Convergence of the Gibbs Sampler

Statement

Under mild regularity conditions, the Gibbs sampler is ergodic: starting from any initial state , the distribution of converges to in total variation:

For random scan, the chain satisfies detailed balance with respect to . For systematic scan, the chain satisfies the weaker condition of -invariance (but not necessarily detailed balance).

Intuition

Each Gibbs update preserves as the stationary distribution (since it is a valid MH step). As long as the chain can reach any state, guaranteed when the support is connected and the full conditionals have positive density, the chain converges. Random scan gives reversibility automatically; systematic scan gives faster practical convergence but sacrifices reversibility.

Proof Sketch

For random scan: the transition kernel is a mixture of MH kernels, each satisfying detailed balance, so the mixture does too. Irreducibility follows from the positivity of full conditionals on the support.

For systematic scan: the transition kernel is a composition (not mixture) of MH kernels. Each kernel preserves , so the composition does too. Detailed balance may fail, but -invariance plus irreducibility and aperiodicity suffice for convergence.

Why It Matters

This theorem ensures that Gibbs sampling produces valid samples from the target distribution, justifying its use in Bayesian computation. The distinction between random and systematic scan matters for theoretical analysis (e.g., proving CLTs for MCMC estimators) but rarely affects practice.

Failure Mode

Convergence can be extremely slow when variables are strongly correlated or when the posterior has multiple well-separated modes. In the latter case, the chain may get trapped in one mode for a very long time. Blocking (grouping correlated variables and updating them jointly) can help with correlations; tempering or other advanced methods are needed for multimodality.

Blocking for Correlated Variables

When variables and are highly correlated, updating them one at a time leads to slow mixing. Blocking (or collapsed Gibbs) groups correlated variables together and samples them jointly:

Instead of updating then , update as a block. This requires sampling from the joint conditional , which may itself require specialized methods, but the resulting chain mixes much faster when and are correlated.

The general principle: block together variables that are strongly correlated a posteriori.

Diagnostics Matter Even When Every Proposal Is Accepted

The most seductive Gibbs-sampling mistake is to see a 100% acceptance rate and infer that the sampler must be efficient. It is not. Gibbs can accept every single update and still produce a terrible effective sample size.

That happens when the conditional updates move only a tiny distance along a strong posterior ridge. The bivariate normal example above shows the core mechanism: when is close to 1, the conditional variance shrinks and each update becomes a small creep rather than a meaningful exploration move. The result is high , which makes the much smaller than the chain length suggests.

So the right workflow is:

- derive the conditionals cleanly,

- run multiple chains,

- inspect burn-in and convergence diagnostics,

- and if ESS remains poor, change the geometry of the update, not just the iteration count.

For hierarchical models this often means blocking, collapsing, or changing the parameterization itself.

Hierarchical Models: Centered, Non-Centered, and Conjugacy

Gibbs sampling has a special relationship to hierarchical models. In one direction, it is often the easiest exact MCMC method when the hierarchy is conditionally conjugate: normal-normal random effects, Gaussian state-space models, latent-class assignments, and older BUGS/JAGS workflows all lean on that structure.

But there is a catch. The parameterization that is best for Gibbs is not always the one that is best for HMC or NUTS.

- A centered hierarchy often preserves clean conditional conjugacy, so the Gibbs updates stay closed-form.

- A non-centered hierarchy often reduces posterior correlation between local effects and the global scale, which is better geometry for HMC and NUTS, but may destroy the simple conditional form that made Gibbs attractive in the first place.

So "use the non-centered form" is not a universal rule. It is a rule about a particular geometry-sampler pairing. For the full comparison, see Centered vs. Non-Centered Hierarchical Models.

Gibbs for Conjugate Models

The power of Gibbs sampling is most apparent in conjugate models, where each full conditional belongs to a standard distribution family.

Normal-Normal model (known variance)

Model: for , with prior .

The full conditional for is:

This is a one-block model, so one Gibbs draw gives an exact posterior sample. The power of Gibbs becomes clear in hierarchical extensions with additional latent variables.

Beta-Binomial model

Model: , with prior .

The full conditional is:

Again conjugacy gives a closed-form full conditional. In a hierarchical model with multiple groups sharing a common prior, the Gibbs sampler alternates between updating group-level parameters and the hyperparameters.

Gibbs for a bivariate normal

Target: .

The full conditionals are:

When is close to 1, the chain moves in small steps along the diagonal, illustrating the slow-mixing problem for correlated variables. The autocorrelation of the chain is approximately per iteration.

Common Confusions

Gibbs requires KNOWN full conditionals

Gibbs sampling requires that you can derive and sample from each full conditional distribution. If the full conditional does not have a recognizable closed form, you cannot use standard Gibbs for that variable. The solution is MH within Gibbs: use a Metropolis-Hastings step (with some proposal distribution) to update that variable, while using exact Gibbs updates for the variables where full conditionals are known. This hybrid approach is extremely common in practice.

Gibbs is not always better than MH

The 100% acceptance rate of Gibbs does not mean it is always more efficient than MH. In high-correlation scenarios, a well-tuned MH proposal that accounts for correlations (or Hamiltonian Monte Carlo, which uses gradient information) can vastly outperform component-wise Gibbs. Gibbs excels when the model has conditionally conjugate structure and moderate correlations.

Acceptance probability 1 does not mean i.i.d. draws

Every Gibbs proposal is accepted because it is drawn from the correct full conditional. That says nothing about how correlated successive full states are. A Gibbs chain can have severe autocorrelation and a tiny effective sample size even though the acceptance rate is exactly 1.

Systematic scan Gibbs does not satisfy detailed balance

A common misconception is that Gibbs sampling always satisfies detailed balance. In fact, only random scan Gibbs satisfies detailed balance. Systematic scan Gibbs, where you cycle through variables in a fixed order, satisfies the weaker property of -invariance: where is the transition kernel. This is sufficient for convergence but means some theoretical tools (e.g., certain CLT results) require more care.

Gibbs updates use the MOST RECENT values

In systematic scan, when updating , you condition on the values of that have already been updated in the current iteration, and the values of from the previous iteration. This is not the same as conditioning on all values from the previous iteration (which would be a different, valid but less efficient, algorithm sometimes called "synchronous" or "parallel" Gibbs).

Summary

- Gibbs sampling updates each variable from its full conditional, cycling through all variables

- It is a special case of MH with acceptance probability 1

- Requires known, tractable full conditional distributions

- Random scan satisfies detailed balance; systematic scan only satisfies -invariance

- Excels in conditionally conjugate models and older hierarchical Bayesian workflows

- Blocking, collapsing, and parameterization matter because 100% acceptance does not guarantee good ESS

- When full conditionals are not tractable, use MH within Gibbs or move to a geometry-aware method like HMC

Exercises

Problem

Derive the Gibbs sampler for the bivariate normal distribution:

Find both full conditional distributions and .

Problem

Prove that the Gibbs update for variable , viewed as an MH step with proposal , has acceptance probability exactly 1.

Problem

Consider a mixture model: with , , and priors . Derive the full conditional distributions for the Gibbs sampler: and .

References

Canonical:

- Geman & Geman (1984), "Stochastic Relaxation, Gibbs Distributions, and the Bayesian Restoration of Images"

- Gelfand & Smith (1990), "Sampling-Based Approaches to Calculating Marginal Densities"

- Casella & George (1992), "Explaining the Gibbs Sampler," The American Statistician 46(3), 167-174. Still one of the cleanest expositions of why the algorithm works.

Current:

- Robert & Casella, Monte Carlo Statistical Methods (2004), Chapter 10

- Gelman, Carlin, Stern, Dunson, Vehtari, Rubin, Bayesian Data Analysis (3rd ed., 2013), Chapter 11

- Brooks et al., Handbook of MCMC (2011), Chapters 1-5

- Betancourt & Girolami, "Hamiltonian Monte Carlo for Hierarchical Models" (2013, arXiv:1312.0906). Useful contrast for when conditional conjugacy stops being the dominant consideration.

Next Topics

The natural next steps from Gibbs sampling:

- Griddy Gibbs: approximate Gibbs when full conditionals are not available in closed form

- Burn-in and Convergence Diagnostics: how to assess whether the chain has converged and whether ESS is actually usable

- Hamiltonian Monte Carlo: when gradient-guided global moves beat component-wise conditional updates

- Centered vs. Non-Centered Hierarchical Models: when the best parameterization for Gibbs is not the best one for NUTS

Last reviewed: May 6, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

2- Markov Chain Monte Carlolayer 2 · tier 1

- Metropolis-Hastings Algorithmlayer 2 · tier 1

Derived topics

6- Burn-in and Convergence Diagnosticslayer 2 · tier 2

- Hamiltonian Monte Carlolayer 3 · tier 2

- No-U-Turn Sampler and Neal's Funnellayer 3 · tier 2

- Griddy Gibbs Samplinglayer 2 · tier 3

- MCMC for Markov Random Fieldslayer 3 · tier 3

+1 more on the derived-topics page.