Sampling MCMC

Griddy Gibbs Sampling

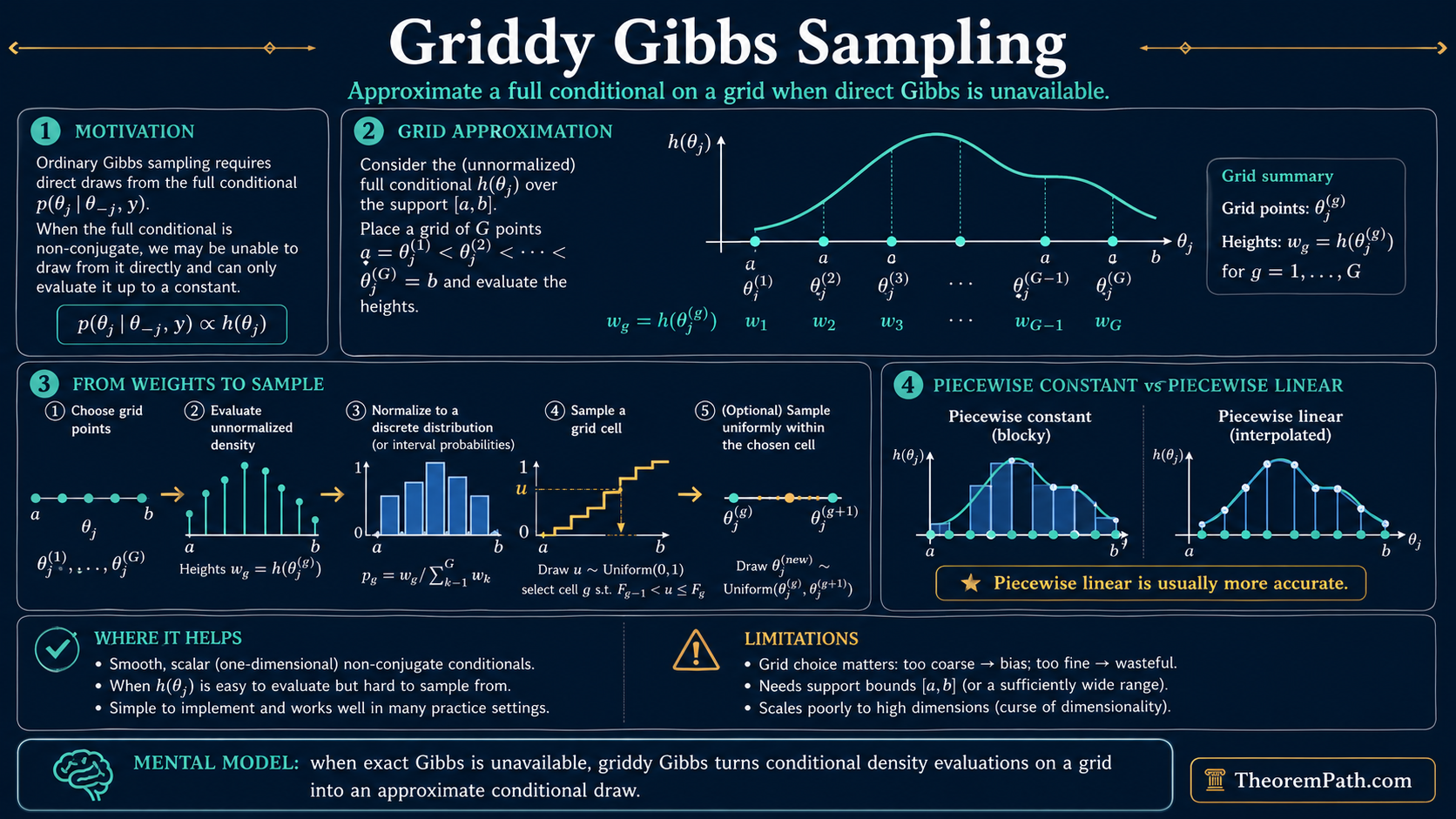

When Gibbs sampling encounters a non-conjugate full conditional, approximate it on a grid and sample from the piecewise constant or piecewise linear approximation. Simple, effective for smooth univariate conditionals.

Prerequisites

Why This Matters

Standard Gibbs sampling requires that you can sample from each full conditional distribution directly. In conjugate models, these conditionals have known forms (normal-normal, gamma-Poisson, etc.). In non-conjugate models, the full conditional may have no closed-form sampling algorithm.

Hide overviewShow overview

Griddy Gibbs (Ritter and Tanner, 1992) handles this by evaluating the conditional on a grid, constructing a discrete approximation, and sampling from that approximation. It is the simplest approach to non-conjugate conditionals, requiring nothing beyond the ability to evaluate the conditional density (up to a normalizing constant) at specified points.

Mental Model

Suppose you need to sample from but cannot do so directly. You can evaluate for any value of .

Place a grid of points over the support. Evaluate at each grid point. Normalize these values to form a discrete distribution. Sample a grid cell, then (optionally) sample uniformly within that cell.

The result is an approximate draw from the full conditional. If the grid is fine enough and the conditional is smooth, the approximation error is small.

Formal Setup

Griddy Gibbs Algorithm

To sample from where is a scalar:

- Choose grid points spanning the effective support of .

- Evaluate for .

- Piecewise constant: Set for each interval. Sample interval with probability , then sample uniformly within the interval.

- Piecewise linear: Interpolate linearly between within each interval. The CDF within an interval is a quadratic, so inversion sampling is exact.

Main Theorems

Convergence of Griddy Gibbs

Statement

Let denote the griddy Gibbs approximation to the full conditional using grid points. If the full conditional is continuous on a compact interval , then the total variation distance satisfies:

where is the maximum grid spacing and is the modulus of continuity of . For Lipschitz conditionals with constant , this gives .

Intuition

A smooth function is well approximated by a piecewise constant function when the grid is fine. The error per interval is bounded by the maximum variation of the function within that interval, times the interval width. Summing over intervals gives the total error.

Proof Sketch

On each interval , the piecewise constant approximation differs from by at most pointwise. Integrating this pointwise error over the interval and summing over all intervals gives the total variation bound.

Why It Matters

The bound tells you how to choose the grid: for a target accuracy in total variation, you need grid spacing . For smooth conditionals, a moderate grid (50-200 points) suffices.

Failure Mode

If the full conditional has sharp spikes or multiple narrow modes, the grid may miss them entirely. The grid must be fine enough to resolve all features of the conditional. For multimodal conditionals, you need prior knowledge of where the modes are to place grid points appropriately.

Canonical Examples

Non-conjugate logistic regression

In a Bayesian logistic regression with a normal prior on coefficients, the full conditional for each coefficient is proportional to:

This has no closed form. Place a grid of 100 points over where is the MLE and is the asymptotic standard error. Evaluate at each grid point, normalize, and sample. The conditional is typically unimodal and smooth, so the grid approximation is accurate.

Common Confusions

Griddy Gibbs is an approximation, not exact

Unlike standard Gibbs sampling, griddy Gibbs does not sample from the exact full conditional. The Markov chain targets an approximate posterior, not the true posterior. The approximation error depends on grid resolution. This error is in addition to MCMC sampling variability.

Griddy Gibbs is only for scalar conditionals

The method requires evaluating the conditional on a grid. In dimensions, a grid with points per dimension has points total. For , this is impractical. Griddy Gibbs is designed for the case where each full conditional is univariate (or at most bivariate), which is the standard Gibbs setting where you cycle through one variable at a time.

Summary

- Evaluate the non-conjugate full conditional on a grid and sample from the discrete approximation

- Piecewise constant is simplest; piecewise linear gives better accuracy for the same grid size

- Grid spacing controls approximation error: smoother conditionals need fewer grid points

- Only works for scalar (or low-dimensional) conditionals

- For log-concave conditionals, adaptive rejection sampling is often preferable because it automatically refines the approximation

Exercises

Problem

Suppose a full conditional is Lipschitz with constant on the interval . How many equally spaced grid points do you need to achieve total variation error at most 0.01?

Problem

Explain why griddy Gibbs with a piecewise linear approximation is exact (zero approximation error) when the full conditional is itself piecewise linear on the grid intervals. What class of conditionals does this characterize?

References

Canonical:

- Ritter & Tanner, "Facilitating the Gibbs Sampler: The Gibbs Stopper and the Griddy-Gibbs Sampler" (1992), Journal of the American Statistical Association

Current:

-

Robert & Casella, Monte Carlo Statistical Methods (2004), Chapter 10.3

-

Gilks, Richardson & Spiegelhalter, Markov Chain Monte Carlo in Practice (1996), Chapter 5

-

Gelman et al., Bayesian Data Analysis (2013), Chapters 10-12

-

Brooks et al., Handbook of MCMC (2011), Chapters 1-5

Next Topics

- Adaptive rejection sampling: automatic envelope refinement for log-concave conditionals

- Hamiltonian Monte Carlo: gradient-based MCMC that avoids grid discretization entirely

Last reviewed: April 14, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

1- Gibbs Samplinglayer 2 · tier 1

Derived topics

2- Hamiltonian Monte Carlolayer 3 · tier 2

- Adaptive Rejection Samplinglayer 2 · tier 3

Graph-backed continuations