LLM Construction

Megakernels

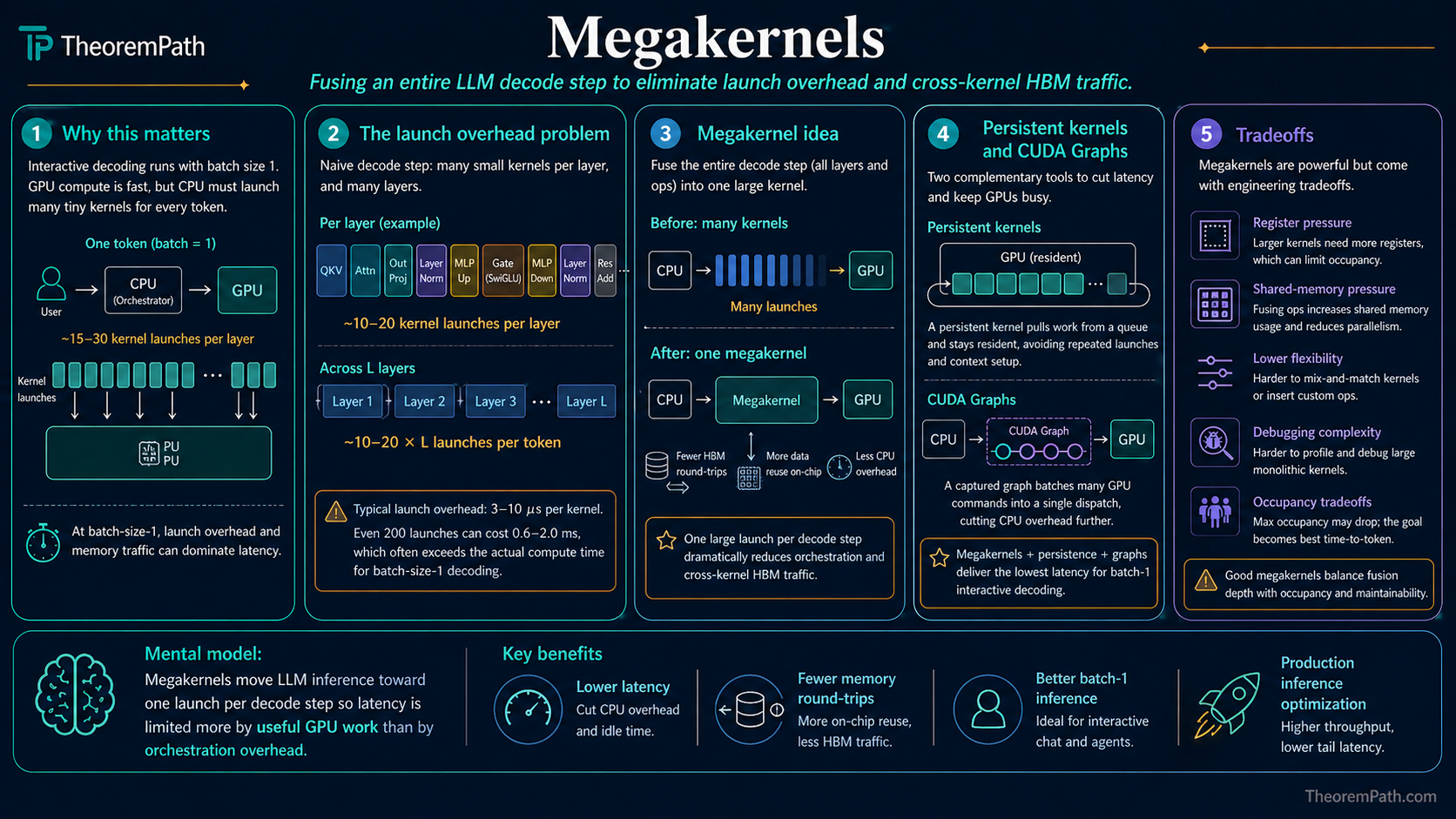

Fuse an entire LLM forward pass or decode step into a single GPU kernel launch to eliminate kernel launch overhead and cross-kernel HBM round-trips. Why persistent kernels and CUDA Graphs dominate low-latency inference.

Prerequisites

Why This Matters

Fused kernels eliminate HBM round-trips between consecutive operations. A megakernel goes further: fuse everything in the transformer forward pass, or at least the entire decode step, into a single GPU kernel launch.

Hide overviewShow overview

This is the right optimization when the bottleneck is no longer memory traffic between ops but the number of kernel launches themselves. At batch size 1 with short prompts and long generation (the typical interactive-chat regime), an autoregressive decode step spends a surprising amount of wall-clock time on CPU-side kernel dispatch: each layer issues dozens of small CUDA kernels, each paying 2-10 microseconds of launch overhead, and on a small model that overhead approaches or exceeds the GPU compute time.

Megakernel-style implementations drive that number toward one launch per decode step. Low-latency Llama inference work from Stanford's Hazy Research group, NVIDIA's TensorRT-LLM, and the CUDA Graphs feature all target this regime. The theoretical ceiling is dictated by arithmetic-intensity ratios (the FLOPs/byte of the decode step), not by launch overhead at all.

The Launch Overhead Problem

Kernel Launch Overhead

The CPU-side cost of preparing and submitting a GPU kernel for execution: argument marshaling, stream synchronization, command buffer append, and eventually a doorbell write to the GPU. Typical measured cost on modern GPUs is 2-10 microseconds per launch, dominated by the CUDA runtime and driver path rather than the GPU itself.

Consider a Llama-style decoder with 32 layers. A naive implementation of a single decode step issues kernels for each sublayer: QKV projection, attention, output projection, residual, layer norm, MLP up-projection, SwiGLU gate multiply, MLP down-projection, residual, layer norm. If each sublayer is one or two kernels, the total is 10-20 launches per layer times 32 layers = 320-640 launches per token. At 5 microseconds per launch that is 1.6-3.2 ms of pure CPU-side overhead per token, before any GPU work begins.

Meanwhile the actual compute for one decode token on a 7B model is about 14 GB of BF16 weight reads and around GFLOPs. On an H100 (roughly 3 TB/s HBM), the weight-read time is about 4-5 ms. The 1.6-3.2 ms of launch overhead is a substantial fraction of this, and grows more significant under quantization (4-bit reduces HBM traffic to about 1 ms while launch overhead is unchanged).

What a Megakernel Does

Megakernel

A single GPU kernel that performs the entire forward pass (or decode step) of a model. All layers, all sublayers, and all reductions are expressed as one persistent kernel that runs from the start of the computation to the end without returning control to the host. The kernel internally sequences the work across thread blocks or warps using on-device synchronization.

Persistent Kernel

A kernel launched once with a grid sized to fully occupy the GPU, which then stays resident and processes work items in a loop (often from a work queue in global memory). The model's forward pass is encoded as a sequence of work items the persistent kernel consumes. In many designs the kernel persists across many decode steps, not just one.

Two broad strategies realize this:

- Single-kernel fusion (ThunderKittens-style megakernels): write one kernel whose source code contains all the operations for a full forward pass, with explicit tile-level scheduling. Requires a tile-oriented DSL because raw CUDA is impractical at this size.

- CUDA Graphs: let the runtime record a graph of kernel launches once, then replay the graph as a single API call. The GPU side still runs many kernels, but the CPU side issues one launch. This is the "free" megakernel in the sense that existing kernels need not be rewritten.

Both aim at the same observable effect: one CPU-to-GPU dispatch per step. The difference is whether the GPU side is also fused (strategy 1) or stays as a sequence of independent kernels (strategy 2).

Launch-Savings Theorem

Latency Floor from Kernel Launches

Statement

With kernels per decode step, the wall-clock latency per token is at least where is the per-launch overhead and is the total GPU-side work. Reducing kernel count to saves microseconds regardless of whether the ops themselves are faster.

A megakernel with attains the lower bound . When , the saving is at least a factor of reduction in latency; when , the reduction approaches .

Intuition

Kernel launches are a serial cost the GPU cannot hide, because the command must reach the GPU before the kernel can run. On a stream with no overlap, the costs add: launch, run, launch, run. Merge the runs into one, and you pay launch once.

Overlap via asynchronous streams does not change the conclusion for a single decode step because subsequent kernels depend on the previous kernel's output. A CUDA Graph replay amortizes many launches into one graph launch, which has similar effect.

Proof Sketch

Let be the execution time of kernel . Sequential execution takes . A single fused kernel takes where (the fused kernel does at least the same work, possibly more due to register-pressure penalties). Subtract to get savings . If the fused kernel does not add work, and savings are exactly .

Why It Matters

At batch size 1 on small models, and are comparable, so megakernels give factor-of-2-ish latency wins. At large batch sizes on large models, and megakernels give a few percent, which is often not worth the implementation cost. The payoff regime is narrow but important: interactive inference on edge devices, low-latency agents, high-frequency speculative-decoding verifier steps.

Failure Mode

The savings estimate assumes the fused kernel does no extra work. In practice:

- Register pressure. A kernel that must hold all intermediate state for many operations may exceed the register budget per thread, forcing spills to local memory (global memory in disguise). This can add more HBM traffic than it saves.

- Occupancy drop. A huge kernel may need so many registers per thread that fewer thread blocks fit on each SM, reducing the GPU's ability to hide memory latency. This makes .

- Scheduling constraints. On-device synchronization across thread blocks is expensive and often requires reading and writing global memory. A cleanly fused kernel needs block-level synchronization only at coarse boundaries; a poorly fused one may serialize everything.

Example Techniques in Practice

ThunderKittens megakernels (Spector et al., Stanford Hazy Research, 2024). ThunderKittens is a tile-centric CUDA framework that targets tensor-core throughput with a small number of primitives (tile load, tile matmul, tile store). Because the abstraction is at the tile-and-warp level rather than the individual-thread level, a full attention layer fits in a single kernel of a few hundred lines. Published kernels reach throughput competitive with FlashAttention-3 on H100 at substantially smaller source size.

CUDA Graphs (NVIDIA). An existing sequence of kernel launches is

captured into a graph object, then replayed by a single cudaGraphLaunch

call. The GPU side still runs each kernel, but the CPU submits once.

PyTorch supports this via torch.cuda.make_graphed_callables and

inference frameworks (vLLM, TensorRT-LLM) use it by default for decode.

Persistent kernel inference. A kernel is launched once on startup with a grid that fills the GPU. It reads work items (weights, inputs) from a queue and writes outputs back. The host sees a single long-running kernel and communicates via memory rather than launches. This pattern appears in parts of TensorRT-LLM's runtime and in research prototypes for low-latency decoding.

Superoptimizer-driven fusion. Automated search over tensor-program rewrites can discover fused regions that a human would not write by hand. The Mirage line of work (Wu et al., CMU, 2024) searches at the algebraic, block, and thread levels and emits a single kernel per fused region, sometimes covering an entire sub-graph of a transformer.

When Megakernels Pay and When They Do Not

Megakernels pay when:

- Batch size is small (launch overhead is a large fraction of total time).

- Model is small enough that per-layer GPU work is short (so launch is comparable to work).

- The decode step is autoregressive and latency-critical (serial generation means launch costs are not amortized across tokens).

- The deployment is a dedicated GPU with no contention (persistent kernels block other work).

Megakernels do not pay when:

- Batch size is large enough that each kernel saturates the GPU and launch overhead is amortized across many sequences.

- The model is compute-bound (enormous MLP matmuls dominate; launch is negligible).

- The hardware already provides a lower-level solution (CUDA Graphs often capture most of the benefit with much less engineering).

- The code is changing frequently and the fused kernel would need to be rewritten for each experiment.

Common Confusions

Megakernels do not reduce FLOPs

Like ordinary kernel fusion, megakernels do the exact same arithmetic as the unfused pipeline. The wins are in launch overhead and cross-op HBM traffic, not in FLOP count. Any claim that a megakernel is faster because it "does less work" is misframing; the correct statement is that it dispatches less work.

CUDA Graphs are not a megakernel in the strong sense

A CUDA Graph replays a recorded sequence of kernel launches as one API call. The CPU overhead drops to near zero but the GPU still runs the same kernels with the same on-GPU boundaries. A megakernel (in the ThunderKittens or persistent-kernel sense) removes those boundaries on the GPU side too. Both target launch overhead; only the megakernel also lets the GPU keep state in registers across what were previously kernel boundaries.

Persistent kernel is not the same as megakernel

A persistent kernel is a kernel that does not exit: it processes work from a queue indefinitely. A megakernel is a kernel whose body covers an entire model forward pass. The two overlap in practice (inference servers use persistent megakernels) but the concepts are independent: you can have a persistent small kernel, and you can have a one-shot megakernel.

Register pressure can reverse the win

Fusing more ops into one kernel increases the register footprint per thread. On H100, each SM has 65,536 registers shared across up to 2048 threads. If a megakernel uses 256 registers per thread, only 256 threads can reside per SM, cutting occupancy by 8x. Latency hiding collapses and the kernel becomes slower than the unfused version despite the launch savings. Measuring register count is the first diagnostic when a fused kernel underperforms.

Summary

- Megakernels eliminate kernel launch overhead by fusing an entire forward pass or decode step into one kernel launch

- The latency floor is ; reducing to 1 saves microseconds per decode step

- Pays off when batch size is small and model is small (interactive inference, speculative-decoding verifier steps, edge deployment)

- CUDA Graphs provide most of the CPU-side benefit with far less engineering; true GPU-side megakernels (ThunderKittens, persistent kernels) require tile-level DSLs

- Register pressure and occupancy drop are the main failure modes; a kernel too large to schedule is slower than the unfused baseline

Exercises

Problem

A decoder issues 400 kernel launches per decode step. Each launch costs 5 microseconds and the GPU spends 2 ms per step on actual work.

(a) What fraction of wall-clock time is launch overhead?

(b) A CUDA Graph replay reduces to 1 launch per step with GPU-side work unchanged. What is the speedup in wall-clock latency per token?

(c) A megakernel pushes GPU-side work down by 10% (removed cross-kernel HBM spills) in addition to reducing launches to 1. What is the speedup relative to the baseline?

Problem

An H100 SM provides 65,536 registers and supports up to 2048 resident threads. Consider two megakernel designs:

- Design A: 32 registers per thread, 256 threads per block.

- Design B: 128 registers per thread, 256 threads per block.

For each design, compute the register limit on resident threads per SM, determine how many blocks fit per SM, and compute the occupancy as (resident threads) / 2048. Comment on which design is more likely to hide HBM latency and why this matters for megakernel construction.

References

Canonical:

- NVIDIA, CUDA Programming Guide, sections on kernel launch overhead and CUDA Graphs (graph capture, instantiation, and replay).

- Spector et al., ThunderKittens: Simple, Fast, and Adorable AI Kernels (Stanford Hazy Research, 2024). Tile-centric CUDA framework supporting megakernel-style fusion.

- Dao, Fu, Ermon, Rudra, Ré, FlashAttention (NeurIPS 2022, arXiv:2205.14135). Fused attention as the first tile-level megakernel-adjacent kernel for transformers.

Current:

- Shah et al., FlashAttention-3: Fast and Accurate Attention with Asynchrony and Low-precision (2024, arXiv:2407.08608). Extends fused attention with warp-specialization and FP8 support on Hopper GPUs.

- Wu et al., Mirage: A Multi-Level Superoptimizer for Tensor Programs (CMU, 2024, arXiv:2405.05751). Automated search for fused tensor programs spanning sub-graphs of a model.

- NVIDIA, TensorRT-LLM documentation (inference engine that combines CUDA Graphs, fused kernels, and persistent-kernel inference for production LLM serving).

- Kwon et al., Efficient Memory Management for Large Language Model Serving with PagedAttention (SOSP 2023, arXiv:2309.06180). vLLM, which uses CUDA Graphs by default for decode steps.

- Tillet, Kung, Cox, Triton: An Intermediate Language and Compiler for Tiled Neural Network Computations (MLSys 2019). The original Triton compiler paper; the tiling abstraction underlies TorchInductor's fusion strategy.

- Ansel et al., PyTorch 2: Faster Machine Learning Through Dynamic Python Bytecode Transformation and Graph Compilation (ASPLOS 2024). TorchInductor fuses pointwise and reduction regions into Triton megakernels as part of torch.compile.

Next Topics

- Fused Kernels: the op-by-op fusion that underlies megakernel construction.

- Inference Systems Overview: how megakernels fit into the broader serving stack (batching, scheduling, KV caching).

- Speculative Decoding and Quantization: the other primary low-latency inference techniques that compose with megakernels.

Last reviewed: May 29, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

2- Fused Kernelslayer 5 · tier 2

- GPU Compute Modellayer 5 · tier 2

Derived topics

2- Inference Systems Overviewlayer 5 · tier 2

- Speculative Decoding and Quantizationlayer 5 · tier 2

Graph-backed continuations