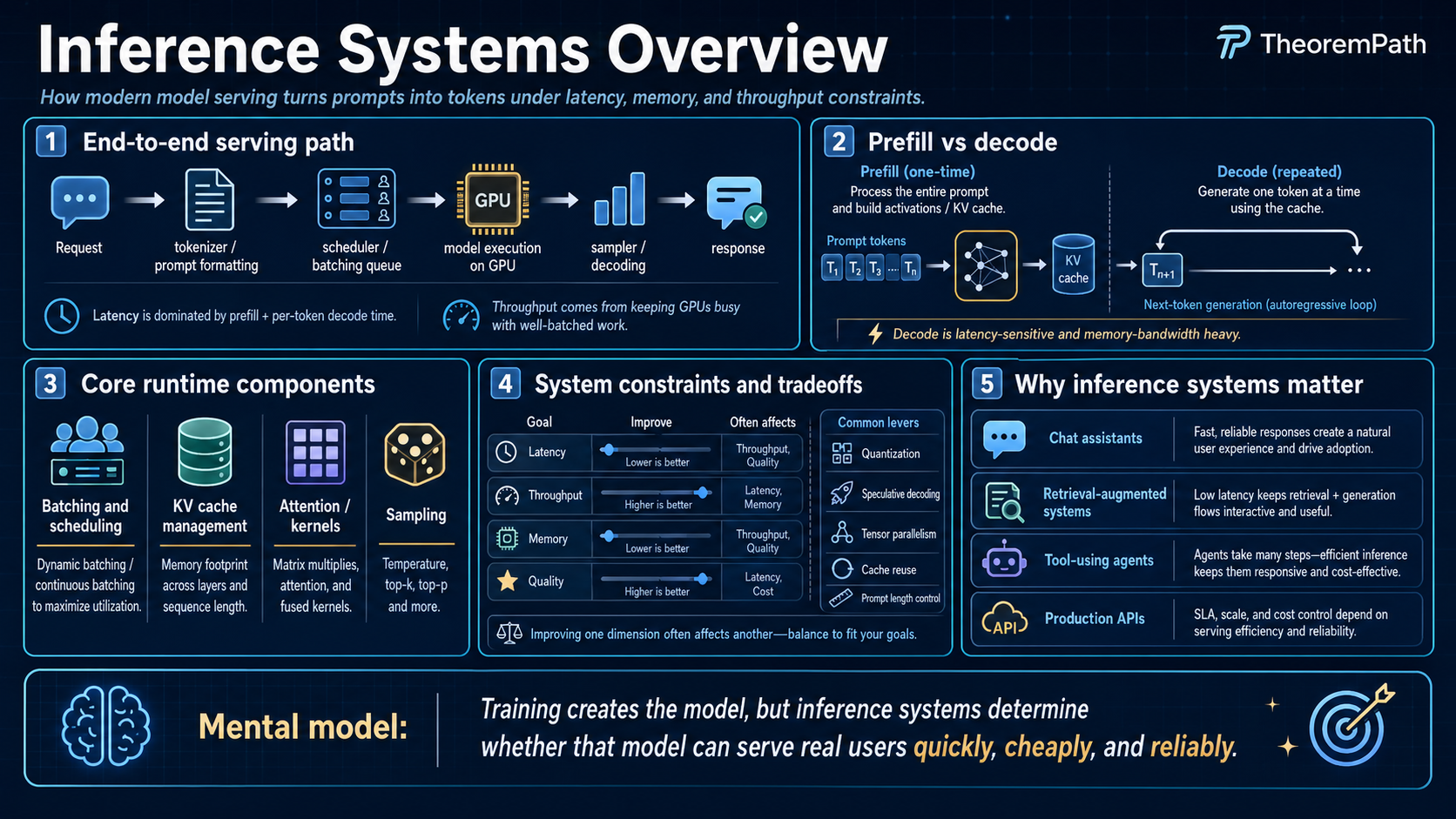

Inference Systems Overview

The modern LLM inference stack: batching strategies, scheduling, memory management with paged attention, model parallelism for serving, and why FLOPs do not equal latency when memory bandwidth is the bottleneck.

Why This Matters

Training a large model costs millions of dollars but happens once. Serving that model costs money on every single request and runs continuously. For most organizations, inference cost dominates the total cost of ownership of an LLM system. Understanding the inference stack is essential for making informed decisions about model deployment, hardware selection, and performance optimization.

The inference stack is also where the theory meets reality: FLOPs per parameter, memory bandwidth limits, and batching strategies determine what is actually achievable on real hardware, regardless of what the model can theoretically do.

Mental Model

Think of an LLM inference server as a restaurant kitchen. Orders (requests) arrive at varying times. The kitchen has limited equipment (GPU memory and compute). The chef (the model) can prepare multiple dishes simultaneously (batching) but each dish requires certain ingredients to stay warm on the counter (KV cache in memory). The challenge is maximizing the number of dishes served per hour (throughput) while keeping wait times acceptable (latency).

The Two Phases of Inference

Every autoregressive inference request has two distinct phases with very different computational profiles:

Prefill Phase

The prefill phase processes the entire input prompt in a single forward pass. All input tokens are processed in parallel (like a training step). This phase is compute-bound: the GPU's arithmetic units are the bottleneck because many tokens are processed simultaneously, providing high arithmetic intensity.

Decode Phase

The decode phase generates output tokens one at a time. Each step processes a single new token, attending to the full KV cache. This phase is memory-bandwidth-bound: the GPU must read the entire KV cache and model weights from HBM for each generated token, but performs relatively little computation per byte read.

This asymmetry is the central fact of LLM inference optimization. Optimizing prefill requires maximizing compute utilization. Optimizing decode requires maximizing memory bandwidth utilization. Most serving systems spend the bulk of their GPU time in decode: the prompt is processed in one parallel pass, but every output token requires its own sequential pass over the weights and the KV cache.

Why FLOPs Do Not Equal Latency

The Arithmetic Intensity Bottleneck

Statement

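For an operation with arithmetic intensity $I$ (FLOPs per byte of memory traffic) running on hardware with peak compute throughput $P$ (FLOP/s) and memory bandwidth $\beta$ (bytes/s), the execution time is bounded by:

$$T \geq \max\left(\frac{\text{FLOPs}}{P}, \frac{\text{Bytes}}{\beta}\right)$$

The operation is compute-bound if and only if $I > P/\beta$ (the operation has more FLOPs per byte than the hardware can sustain) and memory-bound if and only if $I < P/\beta$.

For autoregressive decode with a single sequence, the arithmetic intensity is approximately:

$$I_{\text{decode}} \approx \frac{2N \ \text{FLOPs}}{2N \ \text{bytes}} = 1 \ \text{FLOP/byte}$$

where $N$ is the number of parameters, each stored in FP16 (2 bytes) and used in one multiply and one accumulate.

For an A100 GPU: $P = 312$ TFLOP/s, $\beta \approx 2$ TB/s, so $P/\beta \approx 156$ FLOP/byte. Since $1 \ll 156$, the decode phase is massively memory-bandwidth-bound. The GPU's compute units are idle more than 99% of the time during single-sequence decode.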
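The bound can be checked numerically. The following is a minimal sketch, assuming A100-class figures (312 TFLOP/s peak FP16 compute, roughly 2 TB/s of HBM bandwidth) and a hypothetical 13B-parameter FP16 model; the function names are illustrative and the KV cache is ignored.

```python
def ridge_point(peak_flops: float, bandwidth: float) -> float:
    """FLOPs per byte at which an operation shifts from memory-bound to compute-bound."""
    return peak_flops / bandwidth


def roofline_time(flops: float, bytes_moved: float,
                  peak_flops: float, bandwidth: float) -> float:
    """Lower bound on execution time: the slower of compute and memory traffic."""
    return max(flops / peak_flops, bytes_moved / bandwidth)


# Assumed hardware figures (A100-class): 312 TFLOP/s FP16, ~2 TB/s HBM.
PEAK = 312e12
BW = 2e12

# Single-sequence decode for a hypothetical 13B-parameter FP16 model:
# 2 FLOPs per parameter, 2 bytes read per parameter (KV cache ignored).
params = 13e9
flops = 2 * params
bytes_moved = 2 * params

intensity = flops / bytes_moved                    # FLOPs per byte
t = roofline_time(flops, bytes_moved, PEAK, BW)    # seconds per token (lower bound)
compute_utilization = (flops / PEAK) / t           # fraction of time the ALUs are busy

print(f"ridge point: {ridge_point(PEAK, BW):.0f} FLOP/byte")
print(f"decode intensity: {intensity:.0f} FLOP/byte")
print(f"time per token >= {t * 1e3:.1f} ms, compute utilization {compute_utilization:.2%}")
```

Under these assumptions the memory term sets the time per token, and the compute term accounts for well under 1% of it.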

Intuition

Generating one token requires reading every model weight from memory (billions of bytes) but performing only two FLOPs per weight (multiply and accumulate). The GPU can compute much faster than it can read from memory. The result: the GPU spends almost all its time waiting for data to arrive from HBM, not doing math. More TFLOP/s does not help when memory bandwidth is the bottleneck.

Why It Matters

This explains why a GPU that can theoretically perform 312 TFLOP/s generates text much slower than the FLOP count suggests. It also explains why batching is so important: processing multiple sequences simultaneously amortizes the cost of reading model weights, increasing the arithmetic intensity toward the compute-bound regime.

Failure Mode

This analysis assumes the model weights are read from HBM. With model parallelism, weights are distributed across GPUs, so each GPU reads only its own shard and interconnect bandwidth enters the picture. With quantization (INT8, INT4), the bytes per parameter decrease relative to FP16, roughly doubling or quadrupling the arithmetic intensity of the weight reads.
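The effect of batching and quantization on arithmetic intensity can be sketched in a few lines. This is a simplified model, assuming weight reads dominate memory traffic (KV cache reads are ignored) and the same A100-class ridge point of about 156 FLOP/byte; `decode_intensity` is an illustrative helper, not a library function.

```python
def decode_intensity(batch_size: int, bytes_per_param: float = 2.0) -> float:
    """FLOPs per byte of weight traffic for one decode step (KV cache reads ignored).

    Each weight is read once per step but used for one multiply-accumulate
    (2 FLOPs) per sequence in the batch.
    """
    flops_per_param = 2.0 * batch_size
    return flops_per_param / bytes_per_param


# Assumed ridge point: 312 TFLOP/s divided by ~2 TB/s.
RIDGE = 312e12 / 2e12

for b in (1, 8, 64, 256):
    for name, width in (("FP16", 2.0), ("INT8", 1.0)):
        i = decode_intensity(b, width)
        regime = "compute-bound" if i > RIDGE else "memory-bound"
        print(f"batch={b:<4} {name}: {i:6.1f} FLOP/byte ({regime})")
```

In this model the intensity scales linearly with batch size, so FP16 decode only crosses into the compute-bound regime at batch sizes in the low hundreds. In practice the KV cache limits batch size well before that for long sequences.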

Batching Strategies

Batching multiple requests together is the primary way to increase GPU utilization during decode.

Static Batching

Static batching groups a fixed number of requests into a batch and processes them together. All requests in the batch must wait until the longest request finishes before any response is returned. New requests cannot join a running batch.

Continuous Batching

Continuous batching (also called iteration-level batching) allows requests to enter and leave the batch at every decode step. When one request finishes generating, a new request immediately takes its slot. This eliminates the "waiting for the slowest request" problem of static batching.

Throughput Gain from Continuous Batching

Statement

For requests with variable output lengths, continuous batching achieves throughput up to $B$ times higher than sequential processing, where $B$ is the batch size and $\bar{L}$ is the average output length. Compared to static batching, continuous batching improves throughput by a factor of:

$$\frac{L_{\max}}{\bar{L}}$$

where $L_{\max}$ is the maximum output length in a batch. This ratio is large when output lengths vary widely (e.g., some requests produce 10 tokens, others 1000).

Intuition

In static batching, all requests must wait for the longest one. GPU cycles spent on "padding" short requests to match the longest are wasted. Continuous batching fills those wasted slots with new requests. The more variable the output lengths, the more waste static batching incurs and the larger the improvement from continuous batching.
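A toy simulation makes the difference concrete. This sketch counts decode steps only (prefill, memory limits, and arrival times are ignored), assumes every output length is at least 1, and uses made-up output lengths; both function names are illustrative.

```python
from collections import deque


def static_batching_steps(output_lengths, batch_size):
    """Decode steps when each fixed batch runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(output_lengths), batch_size):
        steps += max(output_lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(output_lengths, batch_size):
    """Decode steps when a finished request's slot is refilled before the next step."""
    queue = deque(output_lengths)
    active = []  # remaining tokens for each running request
    steps = 0
    while queue or active:
        # Admit waiting requests into free slots.
        while queue and len(active) < batch_size:
            active.append(queue.popleft())
        steps += 1
        # Every running request emits one token; requests on their last token leave.
        active = [r - 1 for r in active if r > 1]
    return steps


# Hypothetical output lengths (tokens), in arrival order.
lengths = [10, 1000, 20, 15, 900, 30, 25, 12]
static_steps = static_batching_steps(lengths, batch_size=2)
continuous_steps = continuous_batching_steps(lengths, batch_size=2)
print(f"static: {static_steps} steps, continuous: {continuous_steps} steps")
```

With two slots, the static schedule pays for the longest request in every pair, while the continuous schedule stays close to the lower bound of total tokens divided by the number of slots.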

Why It Matters

In real workloads, output lengths vary by orders of magnitude (a simple answer might be 20 tokens; a detailed explanation might be 2000). Continuous batching is what makes high-throughput LLM serving feasible. It is the default in the major production serving frameworks (vLLM, TGI, TensorRT-LLM).

Failure Mode

Continuous batching adds scheduling complexity. Prefill and decode operations have different compute profiles, and mixing them in a single batch can cause interference. Some systems (e.g., Splitwise) separate prefill and decode onto different GPUs to avoid this interference; others (e.g., Sarathi) keep them on the same GPU but split prefill into chunks to bound it.

Memory Management: Paged Attention

The KV cache is the dominant memory consumer during inference. Efficient memory management determines how many requests can be batched simultaneously.

The problem with naive allocation: Each request pre-allocates a contiguous KV cache block for its maximum possible length. Most requests do not reach maximum length, wasting memory. Memory cannot be shared between requests, even if they share the same system prompt.

Paged attention (vLLM) solves this by dividing the KV cache into fixed-size pages, allocated on demand:

- No pre-allocation waste: Pages are allocated only as the sequence grows

- Non-contiguous storage: Pages can be anywhere in GPU memory, managed by a page table

- Copy-on-write sharing: Requests with shared prefixes (same system prompt) share KV cache pages until they diverge

- Near-optimal utilization: Memory waste is at most one page per request

In published benchmarks (Kwon et al.), paged attention lets a server hold substantially more concurrent requests than naive allocation, which translates to roughly 2-4x higher throughput at comparable latency.
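The on-demand allocation described above can be sketched as a small page-table bookkeeper. This is a toy model under stated assumptions: it tracks page ids only (no tensors), omits copy-on-write sharing, and the class and method names are hypothetical rather than vLLM's actual API.

```python
class PagedKVAllocator:
    """Toy page-table allocator for KV cache pages (bookkeeping only, no tensors)."""

    def __init__(self, num_pages: int, page_size: int):
        self.page_size = page_size                # tokens per page
        self.free_pages = list(range(num_pages))  # physical page ids not in use
        self.page_tables = {}                     # request id -> list of physical page ids
        self.num_tokens = {}                      # request id -> tokens stored so far

    def append_token(self, request_id: str) -> int:
        """Reserve space for one more token; returns the physical page that holds it."""
        table = self.page_tables.setdefault(request_id, [])
        count = self.num_tokens.get(request_id, 0)
        if count % self.page_size == 0:           # no page yet, or the last page is full
            if not self.free_pages:
                raise MemoryError("no free KV cache pages")
            table.append(self.free_pages.pop())   # pages need not be contiguous
        self.num_tokens[request_id] = count + 1
        return table[-1]

    def free(self, request_id: str) -> None:
        """Return all pages of a finished request to the free pool."""
        self.free_pages.extend(self.page_tables.pop(request_id, []))
        self.num_tokens.pop(request_id, None)


alloc = PagedKVAllocator(num_pages=4, page_size=16)
for _ in range(17):                               # 17 tokens need two 16-token pages
    alloc.append_token("req-a")
print(alloc.page_tables["req-a"], "free:", len(alloc.free_pages))
alloc.free("req-a")
```

A request holding 17 tokens occupies two pages, so the unused tail of its last page is the only waste, which matches the "at most one page per request" bound.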

Scheduling

The scheduler decides which requests to process at each step and how to allocate GPU resources.

First-Come-First-Served (FCFS): Process requests in arrival order. Simple but can cause head-of-line blocking: a long request holds a batch slot that could serve many short requests.

Shortest-Job-First (SJF): Prioritize requests with shorter expected output lengths. Minimizes average latency but requires output length prediction, which is inherently uncertain.
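The latency difference between the two orderings shows up even in a single-slot toy model. The sketch below assumes output lengths are known exactly (which, as noted, they are not in practice) and uses made-up job lengths; `mean_completion_time` is an illustrative helper.

```python
def mean_completion_time(durations):
    """Average completion time when jobs run one after another in the given order."""
    elapsed = 0
    total = 0
    for d in durations:
        elapsed += d        # this job finishes after everything ahead of it
        total += elapsed
    return total / len(durations)


# Hypothetical decode steps per request, in arrival order.
jobs = [1000, 20, 30, 10]

fcfs = mean_completion_time(jobs)           # arrival order
sjf = mean_completion_time(sorted(jobs))    # shortest first
print(f"FCFS mean completion: {fcfs}, SJF mean completion: {sjf}")
```

Under FCFS the long first request delays every short request behind it, which is the head-of-line blocking described above.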

Preemptive scheduling: Pause a running request to make room for a higher-priority one. The paused request's KV cache can be swapped to CPU memory and restored later. Adds complexity but enables priority-based serving.

Prefill-decode disaggregation: Run prefill on one set of GPUs and decode on another. Prefill is compute-bound and benefits from high-compute GPUs. Decode is memory-bound and benefits from high-bandwidth memory. This avoids the interference that occurs when prefill and decode share the same GPU.

Chunked prefill (Sarathi): Split a long prefill into smaller chunks and interleave each chunk with one or more decode steps in the same batch. This converts a single large compute-bound prefill into a sequence of mixed batches that keep decode latency stable while still making progress on prefill. See Agrawal et al., "SARATHI" (arXiv:2308.16369).
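The chunking idea can be sketched as a per-iteration token budget shared between one prefill chunk and the ongoing decodes. This is a simplified illustration, not Sarathi's actual scheduling policy: it assumes a single long prompt, a constant number of decoding requests, and a hypothetical `token_budget` parameter.

```python
def chunked_prefill_schedule(prompt_len, num_decoding, token_budget):
    """Return per-iteration (prefill_tokens, decode_tokens) pairs for one long prompt.

    Each iteration carries one token per decoding request plus as many prefill
    tokens as still fit in the budget.
    """
    if token_budget <= num_decoding:
        raise ValueError("token budget must leave room for prefill tokens")
    schedule = []
    remaining = prompt_len
    while remaining > 0:
        chunk = min(remaining, token_budget - num_decoding)
        schedule.append((chunk, num_decoding))
        remaining -= chunk
    return schedule


# A 1000-token prompt sharing each iteration with 16 decoding requests,
# under an assumed budget of 256 tokens per iteration.
plan = chunked_prefill_schedule(prompt_len=1000, num_decoding=16, token_budget=256)
for prefill_tokens, decode_tokens in plan:
    print(f"prefill chunk: {prefill_tokens:4d} tokens, decode: {decode_tokens} tokens")
```

Because every iteration is capped at the same token budget, the decoding requests see roughly constant step times instead of stalling behind one large prefill.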

Prefix and Prompt Caching

Many real workloads repeat the same prefix across requests: a shared system prompt, a tool schema, a retrieved document, or a long chat history that grows by one turn per request. Recomputing the KV cache for the shared prefix on every request wastes prefill compute.

Prefix caching stores the KV cache for a shared prefix once and reuses it across requests that share that prefix. The first request pays full prefill cost. Subsequent requests with the same prefix skip prefill for the cached portion and only run prefill on the uncached suffix.

- vLLM prefix caching reuses the paged KV cache: pages corresponding to a matched prefix are retained and shared across requests.

- SGLang RadixAttention (Zheng et al. 2023, arXiv:2312.07104) stores cached KV entries in a radix tree keyed by token prefix, enabling automatic reuse across requests with shared prefixes and structured programs.

- Anthropic Prompt Caching (2024 API) exposes prefix caching to API callers: repeated long system prompts or documents are cached server-side with a separate price tier for cache reads versus writes.

- DeepSeek context caching provides a similar API-level caching layer for long contexts.

The throughput gain from prefix caching depends on prefix hit rate and prefix-to-suffix length ratio. For agents and RAG systems with long shared contexts, prefix caching often dominates other inference optimizations.
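A block-granular prefix lookup can be sketched as follows. This is a toy illustration loosely inspired by block-level prefix matching; it is not the implementation used by vLLM, SGLang, or any API provider, and the `PrefixCache` class is hypothetical. Keys are whole token prefixes for clarity, where a real system would use hashes or a radix tree.

```python
class PrefixCache:
    """Toy prefix cache: each full block of tokens, keyed by its entire prefix, maps to a page id."""

    def __init__(self, block_size: int):
        self.block_size = block_size
        self.pages = {}      # tuple of prefix tokens -> page id
        self.next_page = 0

    def match(self, tokens):
        """Return the number of leading tokens whose KV pages are already cached."""
        matched = 0
        for end in range(self.block_size, len(tokens) + 1, self.block_size):
            if tuple(tokens[:end]) not in self.pages:
                break        # the first miss ends the reusable prefix
            matched = end
        return matched

    def insert(self, tokens):
        """Register every full block of `tokens` as cached."""
        for end in range(self.block_size, len(tokens) + 1, self.block_size):
            key = tuple(tokens[:end])
            if key not in self.pages:
                self.pages[key] = self.next_page
                self.next_page += 1


cache = PrefixCache(block_size=4)
system_prompt = list(range(8))               # stand-in token ids for a shared system prompt
cache.insert(system_prompt + [100, 101])     # first request pays full prefill

second_request = system_prompt + [200, 201, 202, 203]
hit = cache.match(second_request)
print(f"{hit} of {len(second_request)} tokens can skip prefill")
```

Only full blocks are cached, so the reusable portion is rounded down to a block boundary and the suffix still runs through prefill.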

Speculative Decoding and Quantization in Serving

Serving systems routinely compose speculative decoding and quantization at the system level. Speculative decoding reduces the number of target-model forward passes per accepted token, attacking the bandwidth bottleneck of decode. Quantization reduces bytes per parameter, directly improving decode arithmetic intensity. The two are complementary: the draft model can also be quantized, and speculative decoding can sit on top of a quantized target model without algorithmic changes.

Model Parallelism for Serving

Large models that do not fit on a single GPU require parallelism:

Tensor parallelism (TP): Split each layer's weight matrices across multiple GPUs. Each GPU computes a portion of the matrix multiplication. Requires all-reduce communication between GPUs at every layer. Low latency per request but requires high-bandwidth interconnect (NVLink).

Pipeline parallelism (PP): Assign different layers to different GPUs. Requests flow through GPUs sequentially. Simpler communication pattern but adds pipeline latency (one GPU must wait for the previous to finish).

Expert parallelism (EP): For mixture-of-experts models, assign different experts to different GPUs. Each token is routed (typically via all-to-all communication) only to the GPUs that hold its activated experts. Natural fit for MoE architectures.

In practice, production systems use tensor parallelism within a node (connected by NVLink) and pipeline parallelism across nodes (connected by InfiniBand).
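To make the tensor-parallel communication pattern concrete, here is a minimal pure-Python sketch of a row-parallel linear layer, where each simulated GPU holds a slice of the input dimension and an elementwise sum stands in for the all-reduce. It assumes the input dimension divides evenly by the number of GPUs; the helper names are illustrative.

```python
def matvec(x, w):
    """Multiply row vector x (length d_in) by matrix w (d_in rows, d_out columns)."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]


def row_shards(x, w, num_gpus):
    """Split the input dimension into contiguous shards, one per simulated GPU."""
    step = len(x) // num_gpus
    return [(x[g * step:(g + 1) * step], w[g * step:(g + 1) * step])
            for g in range(num_gpus)]


x = [1.0, 2.0]
w = [[1.0, 2.0, 3.0, 4.0],
     [5.0, 6.0, 7.0, 8.0]]

# Each GPU computes a partial result of full output width from its shard.
partials = [matvec(xs, ws) for xs, ws in row_shards(x, w, num_gpus=2)]

# Summing the partials elementwise stands in for the all-reduce.
reduced = [sum(vals) for vals in zip(*partials)]

print(reduced == matvec(x, w))
```

Every GPU must receive the summed result before the next layer can start, which is why this pattern depends on a fast interconnect.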

Latency vs Throughput

These two metrics are in tension:

Latency (time to first token, time per output token): minimized by processing each request as fast as possible, which means using the entire GPU for one request.

Throughput (requests per second, tokens per second): maximized by batching many requests together, which increases per-request latency but serves more users.

The tradeoff is mediated by the SLO (service-level objective): "p99 latency must be under 200ms per token." The scheduler must find the maximum batch size that satisfies the latency SLO.

Time to first token (TTFT): Dominated by prefill time. Scales with input length. For long prompts, TTFT can be seconds.

Time per output token (TPOT): Dominated by decode time. More stable but increases with batch size (more KV caches to read).

Total latency: TTFT + (number of output tokens) × TPOT.
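These definitions translate directly into a small calculation. The sketch below assumes TPOT has been measured at a few batch sizes; the measurements shown are invented for illustration, and both helper names are hypothetical.

```python
def total_latency_ms(ttft_ms, tpot_ms, output_tokens):
    """End-to-end latency: time to first token plus per-token decode time."""
    return ttft_ms + output_tokens * tpot_ms


def max_batch_size_under_slo(tpot_by_batch, slo_ms):
    """Largest measured batch size whose TPOT meets the SLO, or None if none does."""
    ok = [batch for batch, tpot in tpot_by_batch.items() if tpot <= slo_ms]
    return max(ok) if ok else None


# Hypothetical profiling results: batch size -> measured TPOT in milliseconds.
measured_tpot = {1: 20.0, 8: 25.0, 32: 40.0, 64: 70.0}

best = max_batch_size_under_slo(measured_tpot, slo_ms=50.0)
latency = total_latency_ms(ttft_ms=500.0, tpot_ms=measured_tpot[best], output_tokens=200)
print(f"batch size {best}, total latency {latency} ms for 200 output tokens")
```

The scheduler's job is essentially this search performed online: push the batch size up until the measured TPOT approaches the SLO.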

For a new LLM serving deployment, start with vLLM or TGI. These implement continuous batching, paged attention, and tensor parallelism out of the box. Profile your workload before optimizing: measure TTFT, TPOT, throughput, and GPU memory utilization under realistic load. Only optimize the bottleneck. If you are memory-bound, try quantization (INT8 first) or paged attention tuning. If you are compute-bound during prefill, consider prefill-decode disaggregation. If you need lower latency, try speculative decoding. Do not optimize what is not the bottleneck.

Common Confusions

More TFLOP/s does not mean faster generation

A GPU with 2x the TFLOP/s does not generate tokens 2x faster if generation is memory-bandwidth-bound. For single-sequence decode, the bottleneck is memory bandwidth, not compute. A GPU with higher bandwidth (e.g., HBM3 vs HBM2) will generate faster, even if it has fewer TFLOP/s. This is why inference-optimized hardware (like Google's TPUv5e or Groq's LPU) focuses on memory bandwidth and efficient data movement, not peak FLOP/s.

Batch size is limited by memory, not compute

You cannot increase batch size indefinitely to improve throughput. Each request in the batch requires KV cache memory. Total KV cache grows as $O(B \cdot S)$, where $B$ is batch size and $S$ is sequence length. When KV cache memory exceeds GPU memory (minus model weights), you hit the batch size limit. This is why memory efficiency (paged attention, quantization, GQA) directly translates to higher throughput.
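The memory limit on batch size can be estimated with the standard KV cache size formula (keys and values, per layer, per KV head). The sketch below uses a hypothetical model configuration (32 layers, 8 KV heads, head dimension 128, FP16) and an assumed 40 GiB of memory left after weights; activations and fragmentation are ignored.

```python
def kv_cache_bytes(batch_size, seq_len, num_layers, num_kv_heads, head_dim,
                   bytes_per_value=2):
    """Total KV cache size in bytes; the leading 2 covers keys and values."""
    return (2 * batch_size * seq_len * num_layers
            * num_kv_heads * head_dim * bytes_per_value)


def max_batch_size(free_bytes, seq_len, **model):
    """Largest batch whose KV cache fits in the memory left after model weights."""
    per_request = kv_cache_bytes(1, seq_len, **model)
    return free_bytes // per_request


# Hypothetical model configuration, not tied to a specific released model.
model = dict(num_layers=32, num_kv_heads=8, head_dim=128)

per_token = kv_cache_bytes(1, 1, **model)
limit = max_batch_size(40 * 2**30, seq_len=4096, **model)
print(f"{per_token // 1024} KiB per token, batch limit {limit} at 4096 tokens")
```

Halving the number of KV heads or the bytes per value halves the per-token cost, which doubles the batch limit in this model.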

Prefill and decode need different optimizations

Prefill is compute-bound (many tokens processed in parallel). Decode is memory-bound (one token at a time). Optimizing for one does not help the other. Reducing model size helps decode (less memory to read) but may not help prefill (compute is the bottleneck). Flash Attention helps prefill (reduces memory traffic during the attention computation) but has less impact on decode (the KV cache read dominates).

Summary

- Prefill is compute-bound; decode is memory-bandwidth-bound

- FLOPs do not equal latency: arithmetic intensity determines the bottleneck

- Single-sequence decode uses less than 1% of GPU compute capacity

- Batching amortizes weight reads across requests, increasing utilization

- Continuous batching eliminates waste from variable output lengths

- Paged attention manages KV cache like virtual memory, enabling 2-4x more concurrent requests

- Tensor parallelism within a node, pipeline parallelism across nodes

- Latency and throughput are in tension; the SLO determines the operating point

- Start with vLLM or TGI; profile before optimizing

Exercises

Problem

A model has 7B parameters in FP16. The GPU has 2 TB/s memory bandwidth. Ignoring the KV cache, what is the maximum token generation rate for a single sequence during decode? (Assume each token requires reading all weights once.)

Problem

You are serving Llama-3-70B on 8 A100-80GB GPUs with tensor parallelism, running a batch of $B$ autoregressive decode requests (prefill already complete). The model uses GQA with 8 KV heads, $d_{\text{head}} = 128$, 80 layers, FP16 weights, and an FP16 KV cache. Every request has the same fixed sequence length $S$. Each GPU has 80 GB of HBM with sustained HBM bandwidth $\beta$ (in TB/s). Model weights consume 17.5 GB per GPU after tensor parallelism. The SLO requires TPOT under 50 ms.

Using only memory and bandwidth bounds (ignore communication, activation, and fragmentation overhead), what is the maximum batch size that satisfies both the memory budget and the TPOT SLO?

Problem

Prefill-decode disaggregation runs prefill on "prefill GPUs" and decode on "decode GPUs," transferring the KV cache between them. Analyze the tradeoff: what are the benefits of disaggregation, and under what workload conditions does the KV cache transfer overhead negate the benefits?

References

Canonical:

- Kwon et al., "Efficient Memory Management for Large Language Model Serving with PagedAttention" (vLLM, SOSP 2023)

- Yu et al., "Orca: A Distributed Serving System for Transformer-Based Generative Models" (iteration-level / continuous batching, OSDI 2022)

- Zheng et al., "SGLang: Efficient Execution of Structured Language Model Programs" (RadixAttention prefix caching, arXiv:2312.07104, 2023)

Current:

- Agrawal et al., "SARATHI: Efficient LLM Inference by Piggybacking Decodes with Chunked Prefills" (arXiv:2308.16369, 2023)

- Patel et al., "Splitwise: Efficient generative LLM inference using phase splitting" (2024)

- Anthropic, "Prompt Caching" API documentation (2024)

- DeepSeek, "Context Caching" API documentation (2024)

- NVIDIA TensorRT-LLM and HuggingFace TGI (text-generation-inference) documentation (2024)

Next Topics

The natural next steps from inference systems:

- Scaling laws: how model size and training compute determine the capabilities you are serving

- Context engineering: designing the full system around inference constraints

Last reviewed: April 18, 2026

Required prerequisites

- Model Compression and Pruning (layer 3 · tier 2)

- Edge and On-Device ML (layer 5 · tier 2)

- KV Cache (layer 5 · tier 2)

- Speculative Decoding and Quantization (layer 5 · tier 2)

- Docker and Containers for ML (layer 4 · tier 3)

Derived topics

- Scaling Laws (layer 4 · tier 1)

- Context Engineering (layer 5 · tier 2)