LLM Construction

Memory Systems for LLMs

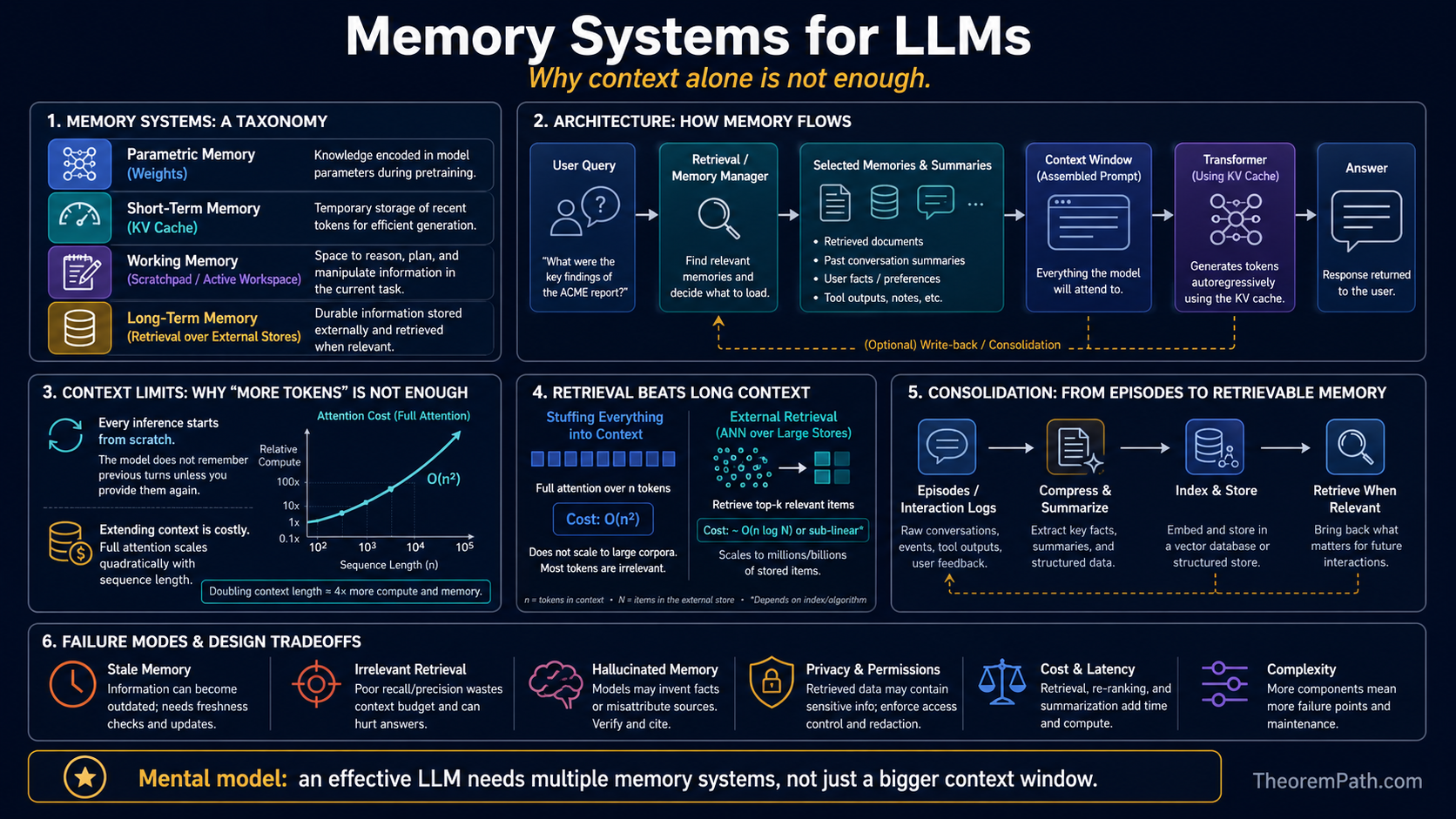

Taxonomy of LLM memory: short-term (KV cache), working (scratchpad), long-term (retrieval), and parametric (weights). Why extending context alone is insufficient and how memory consolidation works.

Why This Matters

LLMs have no persistent memory by default. Every inference call starts from scratch: the model sees the context window and nothing else. All "memory" must fit within the context window or be encoded in the model's parameters during training.

This is a severe limitation. A context window of 128K tokens covers roughly 200 pages of text. A useful assistant needs to remember months of conversations, thousands of documents, and evolving user preferences. Extending the context window alone does not solve this: attention is quadratic in sequence length, while approximate nearest neighbor retrieval over a store of $N$ items is sub-linear (typically $O(\log N)$). The engineering question is how to build memory systems that give LLMs access to unbounded information while keeping inference tractable.

Mental Model

Think of human memory as a guide. Humans have working memory (what you are actively thinking about, roughly 7 items), short-term memory (what happened in the last few minutes), long-term memory (accumulated over a lifetime), and procedural memory (skills encoded in neural pathways, analogous to model weights). LLMs need analogs of each.

Memory Taxonomy

Short-Term Memory (KV Cache)

Short-term memory in an LLM is the KV cache: the key-value pairs computed during the current inference pass. This covers the current context window and persists only for the duration of a single generation session. Capacity is bounded by the context window length. Access pattern: full attention over all cached positions.

Working Memory (Scratchpad)

Working memory is the model's ability to use generated text as a computation buffer. Chain-of-thought reasoning, scratchpads, and intermediate outputs serve as working memory. The model reads its own previous outputs to maintain state across reasoning steps. This consumes context window tokens.

Long-Term Memory (External Retrieval)

Long-term memory uses an external storage system (vector database, key-value store, or file system) to persist information beyond the context window. At inference time, a retrieval system fetches relevant items and injects them into the context. Capacity is unbounded. Access pattern: sparse retrieval (top-$k$ nearest neighbors), not full attention.
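The sparse access pattern can be illustrated with a minimal sketch. It assumes hand-written 3-dimensional vectors in place of real embeddings and uses an exact linear scan rather than an approximate nearest neighbor index; the names `cosine`, `top_k`, and `store` are hypothetical and chosen only for this example.

```python
import heapq
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query_vec, store, k):
    """Return the k stored texts most similar to query_vec, best first.

    `store` is a list of (text, vector) pairs. This is an exact linear scan;
    a production system would use an approximate nearest neighbor index.
    """
    scored = ((cosine(query_vec, vec), text) for text, vec in store)
    return [text for _, text in heapq.nlargest(k, scored)]

# Hypothetical "embeddings", hand-written for illustration only.
store = [
    ("User prefers Python", [0.9, 0.1, 0.0]),
    ("User lives in Lisbon", [0.0, 0.2, 0.9]),
    ("User dislikes Java", [0.8, 0.3, 0.1]),
]
query = [1.0, 0.0, 0.0]  # stands in for an embedded question about languages
retrieved = top_k(query, store, k=2)
print(retrieved)
```

Only the retrieved items would be injected into the context; the unrelated item about Lisbon never reaches the model.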

Parametric Memory

Parametric memory is information encoded in the model's weights during training. The model "knows" facts because they influenced weight updates during pretraining. Parametric memory is fixed at inference time (unless fine-tuned), hard to update, hard to audit, and can produce confident hallucinations when the memorized information is outdated or wrong.

Why Context Extension Is Insufficient

The naive solution to memory is "just extend the context window." Modern models support 128K, 200K, or even 1M+ tokens. But scaling context has diminishing returns for three reasons.

Computational Cost

Retrieval vs Attention Complexity

Statement

Full self-attention over $n$ tokens costs $O(n^2 d)$ computation and $O(nd)$ memory for the KV cache. Retrieval of $k$ items from an external store of $N$ items using approximate nearest neighbor search costs $O(\log N)$ for retrieval plus $O(k^2 d)$ for attention over the retrieved items. When $k \ll n$, retrieval is asymptotically cheaper: the model processes a short context with high-relevance items rather than a long context with everything.

Intuition

Attention reads everything in the context and computes pairwise interactions. Retrieval reads only the relevant items using an index. For a 1M-token context with 10 relevant passages, attention does on the order of $n^2 = 10^{12}$ pairwise operations over mostly irrelevant tokens. Retrieval does $O(\log N)$ index lookups and then attention over the 10 passages.
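A rough back-of-the-envelope comparison makes the gap concrete. The sketch below counts only pairwise token interactions and idealized index steps, ignoring constants and the hidden dimension. The passage length of 500 tokens and the store size of one million items are assumptions for illustration, not figures from the text, and both function names are hypothetical.

```python
import math

def attention_pair_ops(n_tokens):
    """Pairwise query-key interactions for full self-attention: n^2."""
    return n_tokens ** 2

def retrieval_then_attention_ops(store_size, k_passages, tokens_per_passage):
    """Idealized cost: about log2(N) index steps plus attention over retrieved tokens."""
    lookup = math.ceil(math.log2(store_size))
    retrieved_tokens = k_passages * tokens_per_passage
    return lookup + attention_pair_ops(retrieved_tokens)

# Full attention over a 1M-token context.
full = attention_pair_ops(1_000_000)

# Retrieve 10 passages (assumed 500 tokens each) from an assumed store of 1M items.
sparse = retrieval_then_attention_ops(
    store_size=1_000_000, k_passages=10, tokens_per_passage=500
)

ratio = full / sparse
print(f"full attention: {full:.2e} ops, retrieval path: {sparse:.2e} ops, ratio: {ratio:,.0f}x")
```

Under these assumptions the retrieval path does several orders of magnitude less work, and almost all of it is attention over the retrieved tokens rather than the lookup itself.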

Why It Matters

This is the core argument for retrieval-augmented memory over context extension. As the total information an LLM needs access to grows (all of a user's documents, conversation history, knowledge base), the cost gap between "put it all in context" and "retrieve what you need" widens superlinearly.

Failure Mode

Retrieval introduces a new failure mode: if the retrieval system fails to find the relevant items, the model cannot reason about them at all. Full-context attention at least sees all the information, even if it attends to it poorly. Retrieval precision is critical. A retrieval miss is worse than a lost-in-the-middle attention failure because the information is completely absent from the context.

Attention Degradation

As shown by the lost-in-the-middle phenomenon (Liu et al.), attention quality degrades with context length. Even if you can fit 1M tokens in context, the model will not attend to all of them effectively. Information in the middle of a long context is used far less reliably than information near the beginning or end.

Cost Scaling

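For a model with $L$ transformer blocks, hidden dimension $d = 8192$ (128 attention heads with head dimension 64), and FP16 storage, the KV cache for $n$ = 1M tokens requires approximately:

$$2 \times L \times n \times d \times 2\ \text{bytes} \approx 32L\ \text{GB}$$

per request, where the leading 2 counts keys and values and the trailing 2 is the number of bytes per FP16 value. That is about 32 GB per layer, summed over all $L$ layers. This is per-request memory that cannot be shared across users. At scale, context-window memory dominates GPU costs.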
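The estimate above can be reproduced with a few lines of arithmetic. This sketch assumes standard multi-head attention, where every head caches its own keys and values (grouped-query or multi-query attention would shrink the result), and it treats 1M tokens as $2^{20}$. The function name is hypothetical, and the layer count of 80 in the last line is an assumed value for illustration only, since the text leaves the number of layers symbolic.

```python
def kv_cache_bytes(n_tokens, n_layers, n_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for one request.

    Assumes standard multi-head attention: every head stores its own K and V.
    bytes_per_value=2 corresponds to FP16.
    """
    hidden = n_heads * head_dim  # 128 * 64 = 8192 in the example from the text
    return 2 * n_layers * n_tokens * hidden * bytes_per_value  # leading 2 = keys + values

# Per-layer cost for a 1M-token (2**20) context.
per_layer = kv_cache_bytes(n_tokens=2**20, n_layers=1, n_heads=128, head_dim=64)
per_layer_gib = per_layer / 2**30

# Whole-model cost for an ASSUMED 80 layers (not a figure from the text).
assumed_total_gib = kv_cache_bytes(2**20, 80, 128, 64) / 2**30

print(f"per layer: {per_layer_gib} GiB, 80 assumed layers: {assumed_total_gib} GiB")
```

Because the cost is linear in both layers and tokens, halving the context or sharing key-value heads cuts the total proportionally.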

Memory Consolidation

Memory consolidation is the process of compressing and persisting important information from the context window into long-term storage.

Summarization-based consolidation: After a conversation, generate a summary of key facts and store it. On future conversations, retrieve the summary instead of replaying the full history. Information is lost, but the summary is compact.

Embedding-based consolidation: Compute embedding vectors for important passages and store them in a vector database. Retrieval is by semantic similarity. This preserves more nuance than summarization, but the embedding itself is lossy: exact content is recoverable only if the original passage text is stored alongside its vector.

Structured extraction: Extract key-value pairs, facts, or entity relationships from the conversation. Store them in a structured database. Enables precise retrieval ("what is the user's preferred language?") but requires a reliable extraction system.
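As one illustration of the structured approach, the sketch below stores extracted facts with the date they were recorded, so that both "what is the current value?" and "what was the value at an earlier date?" can be answered. The extraction step itself (turning conversation text into key-value pairs) is assumed to exist and is out of scope here. `Fact`, `FactStore`, and the example keys are hypothetical names, not part of any library.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class Fact:
    key: str        # e.g. "preferred_language"
    value: str
    recorded: date  # date of the conversation the fact was extracted from

class FactStore:
    """Append-only store of extracted facts, queryable by key and date."""

    def __init__(self):
        self._facts = []

    def add(self, key, value, recorded):
        self._facts.append(Fact(key, value, recorded))

    def latest(self, key):
        """Most recent value for key, or None if it was never recorded."""
        matches = [f for f in self._facts if f.key == key]
        return max(matches, key=lambda f: f.recorded).value if matches else None

    def as_of(self, key, when):
        """Value that was current on the given date, or None."""
        matches = [f for f in self._facts if f.key == key and f.recorded <= when]
        return max(matches, key=lambda f: f.recorded).value if matches else None

facts = FactStore()
facts.add("preferred_language", "Java", date(2025, 1, 10))
facts.add("preferred_language", "Python", date(2025, 4, 2))

current = facts.latest("preferred_language")
earlier = facts.as_of("preferred_language", date(2025, 2, 1))
print(current, earlier)
```

Keeping old values instead of overwriting them is a deliberate choice in this sketch: it trades storage for the ability to answer time-scoped questions, which a single overwritten summary cannot do.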

Architectural Approaches

Memory-layer architectures: Replace fully-connected feed-forward blocks with key-value memory layers that do sparse lookup against a large learned memory bank. Product-Key Memory (Lample et al. 2019) was the canonical early form; MemoryFormer (Ding et al. 2024) is a recent Transformer variant in the same family. This bakes external memory into the architecture rather than bolting it on at inference via RAG.

Memory tokens: Append a fixed set of learnable "memory" tokens to the context. These tokens are updated across sessions to accumulate persistent state. Limited capacity but simple to implement.

Retrieval-augmented generation (RAG): The most widely deployed memory system. An external retrieval engine (vector database + embedding model) fetches relevant documents and inserts them into the context. The model treats retrieved content the same as any other context.
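The insertion step of RAG can be sketched as packing retrieved passages into a prompt under a token budget. This is a simplified illustration, not a description of any particular framework: `build_prompt` is a hypothetical helper, the prompt wording is made up, and the default `count_tokens` is a whitespace word count standing in for a real tokenizer.

```python
def build_prompt(question, passages, token_budget,
                 count_tokens=lambda s: len(s.split())):
    """Pack retrieved passages (assumed sorted best first) into a prompt.

    Stops at the first passage that would exceed token_budget.
    Returns the prompt string and the list of passages that were kept.
    """
    header = "Use the following context to answer.\n"
    footer = f"\nQuestion: {question}\nAnswer:"
    used = count_tokens(header) + count_tokens(footer)
    lines, kept = [], []
    for passage in passages:
        line = f"- {passage}"
        cost = count_tokens(line)
        if used + cost > token_budget:
            break  # remaining passages are dropped, not truncated
        lines.append(line)
        kept.append(passage)
        used += cost
    return header + "\n".join(lines) + footer, kept

passages = [
    "User prefers Python for scripting",
    "User dislikes Java",
    "User lives in Lisbon",
]
prompt, kept = build_prompt(
    "Which language should the example use?", passages, token_budget=25
)
print(prompt)
```

With a budget of 25 (by the crude word count), the first two passages fit and the third is dropped, which shows how retrieval ranking and context budget jointly decide what the model actually sees.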

RAG is a memory system, not just a knowledge system

RAG is often described as giving models access to external knowledge. But it is equally useful as a memory system: storing and retrieving conversation history, user preferences, and prior interactions. The same retrieval infrastructure serves both use cases.

Parametric memory is not reliable

Information stored in model weights is the result of statistical learning over the training corpus. The model does not store facts as a database does. It stores distributional patterns that correlate with facts. This is why models hallucinate: the parametric memory produces text that is distributionally plausible but factually wrong. Explicit memory systems (retrieval, structured storage) provide verifiable, updatable storage that parametric memory cannot.

Summary

- Four types of LLM memory: short-term (KV cache), working (scratchpad/CoT), long-term (retrieval), parametric (weights)

- Extending the context window has $O(n^2 d)$ compute and $O(nd)$ memory cost; retrieval has $O(\log N)$ lookup cost for $N$ stored items

- Attention quality degrades with context length; retrieval keeps the attended context short regardless of total store size, as long as retrieval precision holds

- Memory consolidation compresses session information into persistent storage

- Parametric memory is not updatable or verifiable without retraining

- Most production systems use RAG as the primary long-term memory mechanism

Exercises

Problem

A model has a 128K-token context window. A user has had 500 prior conversations averaging 2000 tokens each. Can all prior conversations fit in context? What is the alternative?

Problem

Compare the per-request memory cost of a 1M-token context (full KV cache) vs. a system that retrieves the top-20 passages of 512 tokens each into a 32K context window. Assume $L$ layers, $d = 8192$, 128 heads with head dimension 64, and FP16 storage.

Problem

Design a memory consolidation system that can answer the question "What did the user say about X three months ago?" with high reliability. What are the failure modes of summarization-based, embedding-based, and structured extraction approaches for this task?

References

Canonical:

- Lewis et al., "Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks" (2020)

- Liu et al., "Lost in the Middle: How Language Models Use Long Contexts," TACL 2024 (arXiv:2307.03172)

Current:

- Lample, Sablayrolles, Ranzato, Denoyer, Jégou, "Large Memory Layers with Product Keys" (NeurIPS 2019, arXiv:1907.05242)

- Ding et al., "MemoryFormer: Minimize Transformer Computation by Removing Fully-Connected Layers" (NeurIPS 2024, arXiv:2411.12992)

- Zhong et al., "MemoryBank: Enhancing Large Language Models with Long-Term Memory" (2024, arXiv:2305.10250)

- Gu, Dao, "Mamba: Linear-Time Sequence Modeling with Selective State Spaces" (COLM 2024, arXiv:2312.00752)

- Borgeaud et al., "Improving Language Models by Retrieving from Trillions of Tokens" (Retro; ICML 2022, arXiv:2112.04426)

Next Topics

The natural next steps from memory systems:

- Latent reasoning: complements memory by enabling deeper computation within the hidden state, reducing memory pressure from chain-of-thought

- Context engineering: the systems discipline of assembling context from multiple memory sources

Last reviewed: April 27, 2026
