LLM Construction

KV Cache

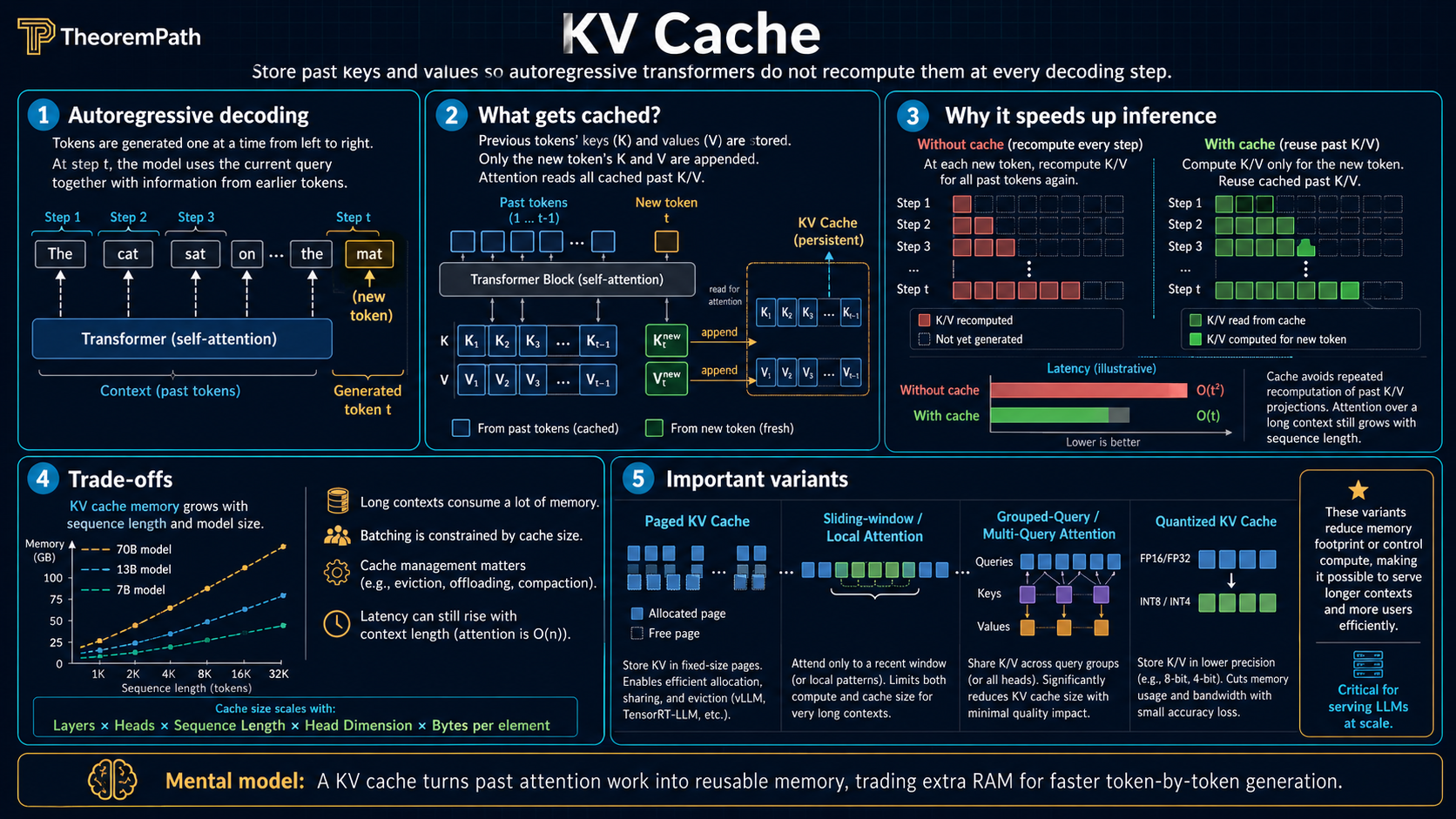

Why autoregressive generation recomputes attention at every step, how caching past key-value pairs makes it linear, and the memory bottleneck that drives MQA, GQA, and paged attention.

Prerequisites

Why This Matters

Training a transformer is compute-bound. Inference is memory-bound. The single biggest memory consumer during autoregressive generation is the KV cache: the stored key and value tensors from all previous tokens. For a 70B parameter model serving 100K-token contexts, the KV cache alone can require over 100 GB of GPU memory. Understanding the KV cache is essential for understanding why long-context inference is expensive, why models have context length limits in practice, and why architectural innovations like multi-query attention exist.

Hide overviewShow overview

Mental Model

When a transformer generates text autoregressively (one token at a time), each new token must attend to all previous tokens. Without caching, you would recompute the key and value projections for all previous tokens at every single generation step. wasting enormous compute on redundant matrix multiplications.

The KV cache stores the key and value vectors computed for past tokens, so that at each step you only compute the query, key, and value for the new token. The new query attends to all cached keys, the weighted sum uses all cached values, and the new key-value pair is appended to the cache.

The Redundant Recomputation Problem

During autoregressive generation, at step the model has produced tokens and needs to produce . The attention computation for token requires:

Without caching: At step , you compute and from scratch. At step , you compute and from scratch. recomputing rows through that were already computed in the previous step.

Over a generation of tokens, the total key/value computation is . This is wasteful because most of it is redundant.

The KV Cache Solution

KV Cache

The KV cache stores the computed key and value vectors for all past tokens. At generation step :

- Compute the new token's projections: , ,

- Append and to the cache: ,

- Attend using the full cache:

The key-value projections for tokens are never recomputed.

KV Cache Reduces Per-Step Compute from O(td) to O(d)

Statement

Without KV cache, generating token requires work for key/value projections (computing and ) plus for attention. Generating all tokens costs total.

With KV cache, generating token requires for the new key/value projections (only one token) plus for attending to the cache. Generating all tokens costs for attention operations. The key-value projection cost drops from to .

The memory cost of the cache is:

where is the number of layers, the factor of 2 accounts for both keys and values, and is the current sequence length.

Intuition

The KV cache trades memory for compute. Instead of recomputing past keys and values ( compute per step), you store them ( memory per layer) and only compute the single new token's projections ( compute). This is the classic time-space tradeoff, and for autoregressive LLMs it is overwhelmingly worthwhile.

Why It Matters

Without KV caching, generating a 1000-token response from a transformer would require roughly 500x more key/value projection compute than with caching. In practice, no production LLM system operates without a KV cache. The cache is what makes real-time text generation feasible.

Failure Mode

The KV cache grows linearly with sequence length. If Llama 2 70B used MHA with layers, KV heads, in fp16, the cache for a single sequence of length would be bytes . At : approximately 262 GB. This exceeds the memory of any single GPU. In practice Llama 2 70B uses GQA with 8 KV heads, reducing the actual cache by 8x to roughly 33 GB at the same context length, which is the precise reason GQA was adopted. The KV cache remains the primary bottleneck for long-context inference.

Prefill vs Decode Phases

Autoregressive inference has two distinct phases:

Prefill (prompt processing): The entire input prompt of length is processed in a single forward pass (parallel, like training). All key-value pairs are computed and stored in the cache. This phase is compute-bound because it processes many tokens in parallel.

Decode (generation): New tokens are generated one at a time. Each step processes a single token, attending to the full cache. This phase is memory-bandwidth-bound because the compute per token is small, but each step must read the entire KV cache from GPU memory.

The decode phase is why LLM inference throughput is often limited by memory bandwidth rather than compute. For a model with 80 layers, generating one token requires reading the entire KV cache (potentially tens of GB) from HBM each step. The compute per token is dominated by the dense projections and FFN ( per layer) plus attention against the cache ( per head), but the arithmetic intensity is low: most of the work per FLOP is moving KV bytes, so HBM bandwidth — not FLOPs — sets the throughput ceiling.

Multi-Query Attention and Grouped-Query Attention

Multi-Query Attention (MQA)

In standard multi-head attention (MHA), each of heads has its own and matrices. The KV cache stores separate key-value pairs per layer per token.

Multi-query attention (MQA) (Shazeer, 2019) shares a single set of keys and values across all heads:

Each head still has its own query projection but uses the same keys and values.

MQA Reduces KV Cache by Factor h

Statement

The KV cache size for different attention variants at sequence length :

| Variant | KV pairs per layer | Cache size per layer |

|---|---|---|

| MHA ( heads) | ||

| GQA ( groups) | ||

| MQA (1 shared) |

MQA reduces the KV cache by a factor of compared to MHA. GQA with groups reduces it by a factor of .

Intuition

The key insight is that in MHA, different heads often learn similar key-value patterns. Sharing keys and values across heads eliminates this redundancy at a small quality cost. GQA is the middle ground: instead of all heads sharing one KV set or each head having its own, groups of heads share KV sets.

Why It Matters

For Llama 2 70B with heads, MQA would reduce the KV cache by 64x. In practice, Llama 2 70B uses GQA with groups, reducing the cache by 8x. This makes the difference between requiring 262 GB (hypothetical MHA) and 33 GB (actual GQA-8) for a 100K-token context. The difference between infeasible and barely feasible on a single node.

The quality degradation from GQA is minimal. Ainslie et al. (2023) showed that GQA with small group counts (e.g., ) matches MHA quality to within roughly 0.2 points on standard benchmarks while achieving near-MQA inference speed. Llama 2 70B subsequently adopted GQA-8 in production.

Multi-Head Latent Attention (MLA)

The MQA/GQA progression reduces the number of stored KV heads. Multi-Head Latent Attention (MLA), introduced in DeepSeek-V2 (2024), instead compresses the KV representation through a low-rank joint projection. Per token per layer, MLA stores a small latent vector with , from which keys and values for all heads are reconstructed at attention time via learned up-projections. The reported result is a roughly 7x KV-cache reduction relative to GQA-8 at comparable or better quality on DeepSeek-V2 evaluations. The technique was the leading cache-compression method as of DeepSeek-V2 (2024) and represents a distinct axis from MQA/GQA: head sharing reduces the number of cached projections, while MLA reduces their dimensionality.

KV Cache Quantization

A parallel axis of compression is numerical precision. KVQuant (Hooper et al., 2024) stores KV pairs at 4-bit precision using per-channel and per-vector quantization strategies, enabling 10M+ token contexts on hardware that would otherwise run out of memory at fp16. KIVI (Liu et al., 2024) takes a different approach: 2-bit asymmetric quantization applied separately to K and V tensors, exploiting the observation that K and V have distinct statistical profiles. Both methods apply post-training quantization (PTQ) to the cache without retraining the base model; see quantization theory for PTQ methods that apply to KV cache.

Paged Attention (vLLM)

Paged Attention

Paged attention (Kwon et al., 2023) applies the operating system concept of virtual memory paging to KV cache management.

In standard serving, each sequence pre-allocates a contiguous memory block for its maximum possible KV cache size. This wastes memory because:

- Most sequences do not reach maximum length

- Memory cannot be shared between sequences

- Internal fragmentation from alignment requirements

Paged attention divides the KV cache into fixed-size pages (blocks of tokens). Pages are allocated on demand and can be stored non-contiguously in GPU memory, with a page table mapping logical token positions to physical memory locations.

Paged attention enables:

- Near-zero memory waste: pages are allocated only as needed

- Memory sharing: sequences with shared prefixes (e.g., the same system prompt) can share KV cache pages via copy-on-write

- Higher throughput: more sequences can be batched because memory utilization is close to optimal

In benchmarks, vLLM achieves 2-4x higher throughput than naive serving implementations, primarily by reducing KV cache memory waste.

Speculative decoding (Leviathan et al., 2023; Medusa, Cai et al., 2024) is orthogonal to KV cache optimization: it reduces the number of decode steps by drafting multiple tokens with a small model and verifying them in parallel, while KV caching reduces the per-step memory cost of attending to past tokens. The two compose in production systems.

Why KV Cache Is the Memory Bottleneck

For a concrete example, consider serving Llama 3 70B (80 layers, 64 heads, GQA with 8 KV heads, , fp16):

- Model weights: bytes GB

- KV cache per token per layer: bytes bytes

- KV cache per token (all layers): bytes KB

- KV cache for 128K context: GB

With batching (serving sequences simultaneously), the total KV cache is GB. At batch size 8: 320 GB for KV cache alone. more than double the model weight memory.

This is why KV cache size, not parameter count, determines the maximum batch size and context length for deployed LLMs.

Common Confusions

KV cache saves compute, not the attention operation itself

The KV cache avoids recomputing key and value projections for past tokens. The attention operation itself. computing and the weighted sum over values. still requires reading the full cache and scales as per step. KV caching does not change the complexity of the attention mechanism; it eliminates redundant projection computation.

MQA/GQA affect the KV cache but not the query projections

In MQA and GQA, each attention head still has its own query projection . Only the key and value projections are shared. This means the quality of each head's "question" is preserved. only the "dictionary" being searched is shared.

FlashAttention and KV cache solve different problems

FlashAttention reduces the memory required for the attention computation (the matrix) by computing it in tiles. The KV cache reduces redundant key-value projection compute during generation. They are complementary and both are used in production systems.

Summary

- Without KV cache: generating tokens costs in key/value projections

- With KV cache: store past K, V vectors; only compute new token projections per step

- KV cache memory:

- Prefill is compute-bound (parallel); decode is memory-bandwidth-bound (sequential)

- MQA shares one KV set across all heads (cache )

- GQA shares KV across groups of heads (cache ). The practical sweet spot

- Paged attention manages cache like virtual memory pages, reducing waste

- For long contexts, KV cache dominates GPU memory over model weights

Exercises

Problem

A model has layers, attention heads (full MHA, no grouping), , and uses fp16. How much memory does the KV cache require for a single sequence of length ?

Problem

During the decode phase, generating one token requires reading the entire KV cache from GPU HBM. For the model above (32 layers, GQA with 4 groups, , fp16) at sequence length , how many bytes must be read per generated token? If the GPU has 2 TB/s HBM bandwidth, what is the maximum generation speed (tokens per second) for a single sequence, ignoring all computation time?

Related Comparisons

References

Canonical:

- Vaswani et al., "Attention Is All You Need" (2017). attention mechanism that KV cache accelerates

Current:

- Shazeer, "Fast Transformer Decoding: One Write-Head is All You Need" (2019), arXiv:1911.02150. multi-query attention

- Ainslie, Lee-Thorp, de Jong, Zemlyanskiy, Lebrón, Sanghai, "GQA: Training Generalized Multi-Query Transformer Models from Multi-Head Checkpoints" (2023), arXiv:2305.13245

- Pope, Douglas, Chowdhery, Devlin, Bradbury, Heek, Xiao, Agrawal, Dean, "Efficiently Scaling Transformer Inference," MLSys 2023, arXiv:2211.05102. canonical analysis of inference cost, prefill/decode, and KV cache scaling

- Kwon et al., "Efficient Memory Management for Large Language Model Serving with PagedAttention," SOSP 2023, arXiv:2309.06180. vLLM

- DeepSeek-AI, "DeepSeek-V2: A Strong, Economical, and Efficient Mixture-of-Experts Language Model" (2024), arXiv:2405.04434. introduces Multi-Head Latent Attention (MLA)

- Hooper, Kim, Mohammadzadeh, Mahoney, Shao, Keutzer, Gholami, "KVQuant: Towards 10 Million Context Length LLM Inference with KV Cache Quantization" (2024), arXiv:2401.18079

- Liu, Zhao, Rael, Shrivastava, Chen, "KIVI: A Tuning-Free Asymmetric 2-bit Quantization for KV Cache" (2024), arXiv:2402.02750

- Leviathan, Kalman, Matias, "Fast Inference from Transformers via Speculative Decoding," ICML 2023, arXiv:2211.17192

- Cai, Li, Geng, Zhang, Lee, Chen, Dao, "Medusa: Simple LLM Inference Acceleration Framework with Multiple Decoding Heads" (2024), arXiv:2401.10774

Next Topics

The natural next steps from KV cache:

- Scaling laws: understanding compute-optimal training which determines the model sizes being served

- Positional encoding: how position information interacts with cached key-value pairs

Last reviewed: April 26, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

5- Linear Layer: Shapes, Bias, and Memorylayer 2 · tier 1

- Attention Is All You Need (Paper)layer 4 · tier 1

- Attention Mechanism Theorylayer 4 · tier 2

- Attention Variants and Efficiencylayer 4 · tier 2

- Efficient Transformers Surveylayer 4 · tier 2

Derived topics

6- Context Engineeringlayer 5 · tier 2

- Inference Systems Overviewlayer 5 · tier 2

- KV Cache Optimizationlayer 5 · tier 2

- Memory Systems for LLMslayer 5 · tier 2

- Prefix Cachinglayer 5 · tier 2

+1 more on the derived-topics page.