RL Theory

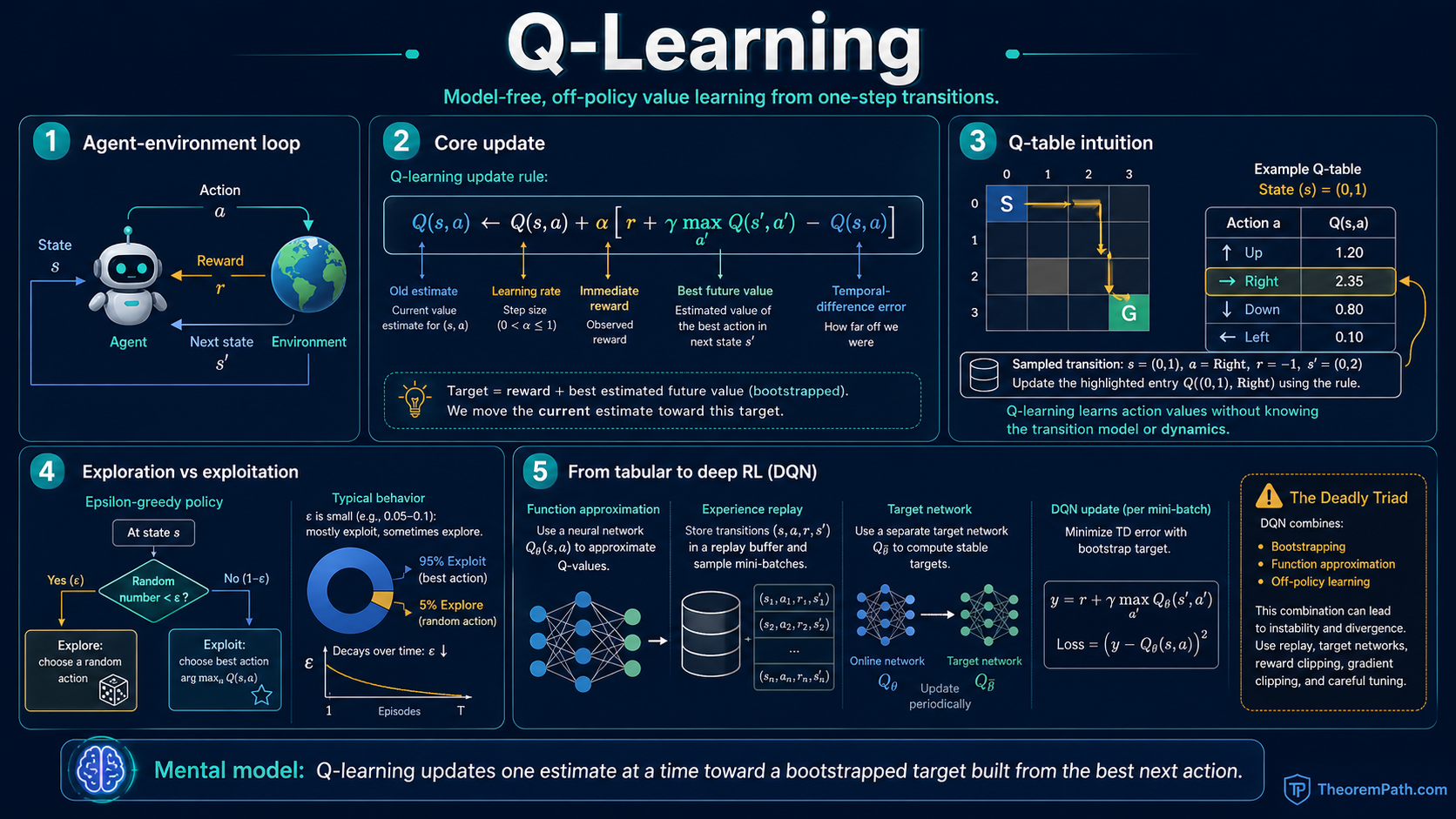

Q-Learning

Model-free, off-policy value learning: the Q-learning update rule, convergence under Robbins-Monro conditions, and the deep Q-network revolution that introduced function approximation, experience replay, and the deadly triad.

Prerequisites

Why This Matters

Value iteration requires knowing the transition model . You need to sum over all possible next states. In most real problems, you do not have this model. You interact with the environment and observe transitions one at a time.

Hide overviewShow overview

Q-learning solves this: it learns the optimal action-value function from individual transitions , without ever building a model of the environment. It is the most important model-free RL algorithm and the foundation of Deep Q-Networks (DQN), which achieved human-level performance on Atari games and launched the deep RL era.

The deadly triad: only the combination of function approximation, bootstrapping, and off-policy data diverges

Any two of the three components (function approximation, bootstrapping, off-policy) are safe. Tabular Q-learning removes function approximation; SARSA removes off-policy distribution mismatch. Combining all three creates a feedback loop where overestimation in one region propagates through shared parameters, and the Q-value norm can grow without bound. DQN's target network and replay buffer damp this failure mode but do not eliminate it. Curves are illustrative, not from a numerical simulation.

Mental Model

Value iteration updates by taking a max over all actions and summing over all next states weighted by their transition probabilities. Q-learning replaces this model-based expectation with a single sample: observe one transition and update toward .

Over many samples, the sample averages converge to the true expectations, and converges to . The key requirement: you must visit every state-action pair infinitely often (exploration) and decrease the step size appropriately (learning rate schedule).

Formal Setup and Notation

We work in a finite MDP but assume is unknown. The agent observes transitions by interacting with the environment.

Q-Learning Update Rule

Given a transition , the Q-learning update is:

where is the learning rate (step size) at time . The term in brackets is called the TD error (temporal difference error).

Off-Policy Learning

Q-learning is off-policy: the policy used to select actions during training (the behavior policy) can differ from the policy being learned (the target policy, which is greedy with respect to ). This is because the update uses , which does not depend on which action was actually taken in state .

Robbins-Monro Conditions

The Robbins-Monro conditions on the step sizes require:

The first condition ensures the steps are large enough to overcome any initial error. The second ensures the noise from stochastic updates eventually dies out. A common choice is .

Main Theorems

Q-Learning Convergence

Statement

Under the stated conditions, the Q-learning iterates converge to the optimal action-value function with probability 1:

where is the unique fixed point of the Bellman optimality operator on Q-values: .

Intuition

The Q-learning update is a stochastic approximation to the Bellman optimality update. At each step, the sample is a noisy estimate of . Since is a -contraction, the stochastic approximation theory of Robbins-Monro guarantees convergence to the fixed point as long as the noise decays (step sizes shrink) and every state-action pair is updated infinitely often.

Proof Sketch

The proof applies the general stochastic approximation theorem. Write the Q-learning update as:

where is zero-mean noise (conditioned on the history). The operator is a -contraction. By the ODE method for stochastic approximation, this converges to the fixed point of under the Robbins-Monro conditions on and the infinite-visitation assumption.

Why It Matters

This theorem is the theoretical foundation of model-free RL. It says you can learn optimal behavior without knowing the environment dynamics, just by trying things and observing results. The price you pay is the need to explore every state-action pair infinitely often. a condition that becomes impossible to satisfy in large state spaces.

Failure Mode

The convergence guarantee requires visiting every pair infinitely often. In practice, with epsilon-greedy exploration in a large state space, many pairs are visited rarely or never. More critically, this theorem applies only to the tabular case. It says nothing about function approximation.

From Tabular to Deep: DQN

For large or continuous state spaces, maintaining a table for every state-action pair is impossible. Deep Q-Networks (DQN) approximate with a neural network trained by stochastic gradient descent.

The naive approach. simply replacing the table with a network. fails catastrophically. DQN introduced two stabilization techniques:

Experience Replay. Store transitions in a replay buffer . At each training step, sample a random minibatch from and update. This breaks the correlation between consecutive samples (which violates the i.i.d. assumption of SGD) and reuses data efficiently.

Target Network. Maintain a separate target network that is updated slowly (e.g., copied from every steps). The training target becomes:

This prevents the moving-target problem where the update target changes with every gradient step.

The DQN loss for a minibatch is:

The Deadly Triad

The Deadly Triad

Statement

The combination of function approximation, bootstrapping, and off-policy learning can cause Q-value estimates to diverge. Specifically, there exist MDPs and linear function approximators where semi-gradient Q-learning with off-policy data produces unbounded Q-values:

Any two of the three components are safe; it is the combination of all three that creates instability.

Intuition

Bootstrapping means the update target depends on the current estimates (a moving target). Function approximation means updating one state-action pair affects the values of other pairs (generalization). Off-policy data means the distribution of updates does not match the distribution the current policy would generate. Together, these can create a feedback loop where overestimation in one region propagates and amplifies through the function approximator.

Why It Matters

DQN uses all three components. The target network and experience replay are engineering solutions that mitigate (but do not eliminate) instability. The deadly triad explains why DQN training can be brittle and why many subsequent algorithms (Double DQN, Dueling DQN, distributional RL) focus on stabilization. It also explains the appeal of on-policy methods like PPO, which avoid the off-policy component entirely.

DQN Variants

Double DQN. The max operator in causes systematic overestimation because (a consequence of Jensen's inequality applied to the convex max function). Double DQN decouples action selection from evaluation:

Dueling DQN. Decompose the Q-network into value and advantage streams: . This helps when the value of a state matters more than the relative value of actions.

Q-Learning vs. SARSA

Q-learning is off-policy: it always updates toward . SARSA is on-policy: it updates toward where is the action actually taken:

SARSA converges to (the value of the current policy) rather than . It is safer in practice. a SARSA agent with epsilon-greedy exploration learns to avoid dangerous states, while a Q-learning agent learns the optimal policy ignoring exploration costs.

Common Confusions

Q-learning is not the same as DQN

Q-learning is a tabular algorithm with convergence guarantees. DQN is Q-learning combined with neural network function approximation, experience replay, and target networks. The convergence guarantee of tabular Q-learning does not transfer to DQN. The deadly triad means DQN can diverge, and its success is empirical rather than theoretically guaranteed.

The max in Q-learning is not the same as the max in value iteration

In value iteration, is computed exactly over all next states. In Q-learning, uses a single sampled next state . The max over actions is still exact (in the tabular case), but the expectation over transitions is replaced by a single sample.

Exploration is not solved by Q-learning

Q-learning convergence assumes all state-action pairs are visited infinitely often. It does not specify how to achieve this. Epsilon-greedy is the simplest strategy (take random actions with probability ), but it is inefficient in large or sparse-reward environments. Efficient exploration (UCB, Thompson sampling, curiosity-driven methods) is a separate research area.

Summary

- Q-learning update:

- Converges to under Robbins-Monro step sizes and infinite exploration

- Off-policy: the behavior policy and target policy can differ

- DQN adds function approximation, experience replay, and target networks

- The deadly triad: function approximation + bootstrapping + off-policy can diverge

- Double DQN fixes overestimation; dueling DQN improves value/advantage decomposition

Exercises

Problem

In a deterministic MDP with two states and one action per state, , , , , , and . Starting from , compute after one observed transition from .

Problem

Why does experience replay help DQN training? Give two distinct reasons.

Problem

Explain why the deadly triad does not arise in tabular Q-learning. Which of the three components is missing, and why does its absence prevent divergence?

Related Comparisons

References

Canonical:

- Watkins & Dayan, "Q-Learning" (1992). original convergence proof

- Tsitsiklis, "Asynchronous Stochastic Approximation and Q-Learning" (1994)

Current:

- Mnih et al., "Human-Level Control through Deep Reinforcement Learning" (Nature, 2015). DQN

- van Hasselt et al., "Deep Reinforcement Learning with Double Q-Learning" (2016)

- Sutton & Barto, Reinforcement Learning: An Introduction (2018), Chapter 6

Next Topics

The natural next step from Q-learning:

- Policy gradient theorem: when value-based methods struggle (continuous actions, high-dimensional spaces), optimize the policy directly

Last reviewed: April 24, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

4- Bellman Equationslayer 2 · tier 1

- Value Iteration and Policy Iterationlayer 2 · tier 1

- Stochastic Approximation Theorylayer 2 · tier 2

- Temporal Difference Learninglayer 2 · tier 2

Derived topics

5- Policy Gradient Theoremlayer 3 · tier 1

- Actor-Critic Methodslayer 3 · tier 2

- DDPG: Deep Deterministic Policy Gradientlayer 3 · tier 2

- Offline Reinforcement Learninglayer 3 · tier 2

- TD3: Twin Delayed Deep Deterministic Policy Gradientlayer 3 · tier 2