Optimization Function Classes

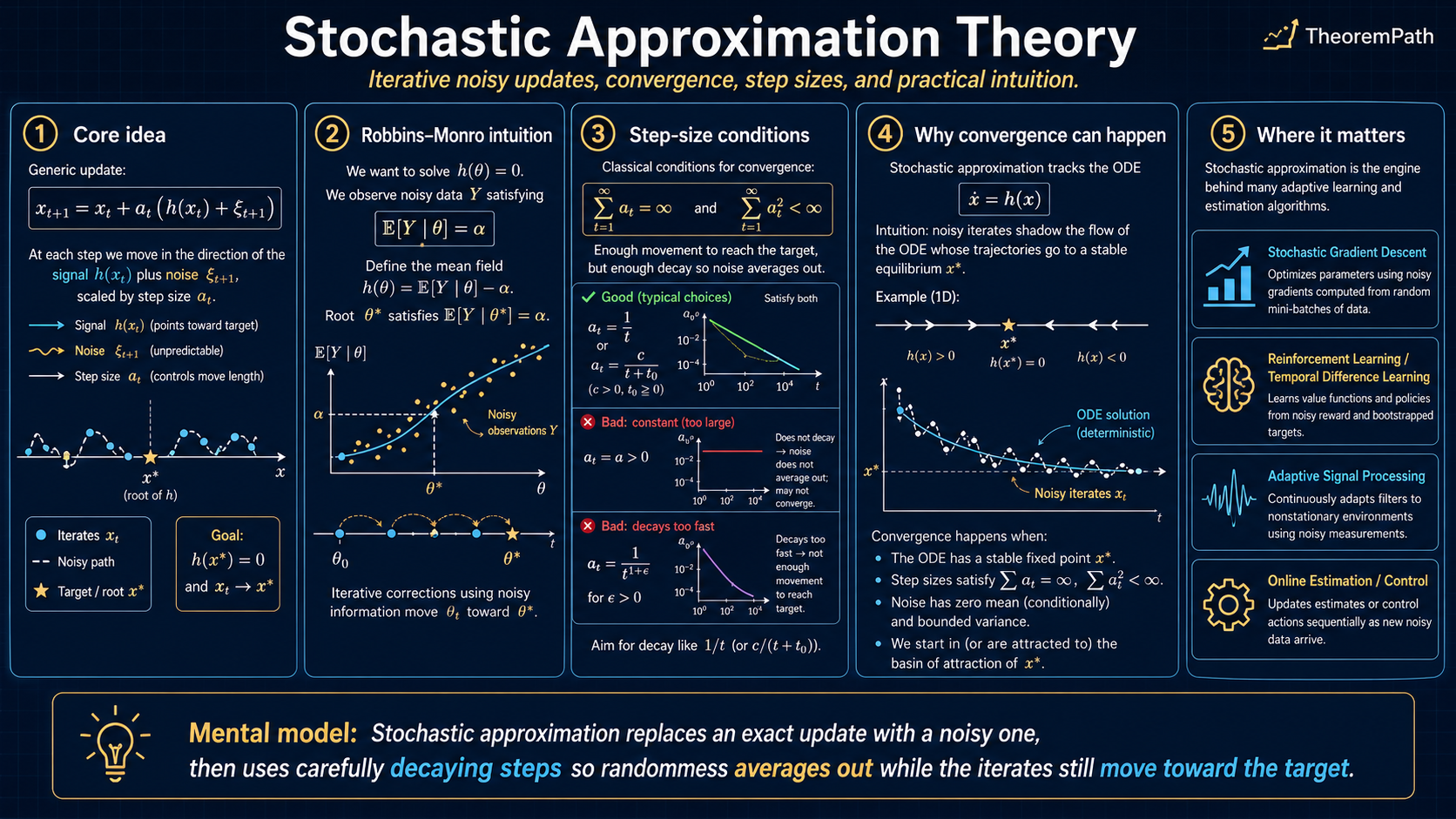

Stochastic Approximation Theory

The Robbins-Monro framework, ODE method, and Polyak-Ruppert averaging: the unified theory behind why SGD, Q-learning, and TD-learning converge.

Prerequisites

Why This Matters

Stochastic gradient descent, Q-learning, temporal difference learning, and dozens of other iterative algorithms in ML and RL share a common skeleton: an update rule that moves toward a target using noisy observations. Stochastic approximation is the theory that explains why these algorithms converge despite the noise, and how fast.

Hide overviewShow overview

If you understand stochastic approximation, you understand the convergence of SGD, Q-learning, and TD-learning as special cases of one theorem. Without it, each convergence proof looks like a separate miracle.

Stochastic approximation

Noisy updates can still track a stable mean field

Update rule

Signal keeps adding

The sum of step sizes diverges, so the mean field can still pull the iterate to the root.

Noise gets averaged out

The sum of squared step sizes is finite, so accumulated martingale noise has bounded variance.

Constant steps do not settle

With a fixed learning rate, the path usually stabilizes around the root instead of converging to it.

Mental Model

You want to find a root of some function , but you cannot evaluate directly. Instead, at each step you observe where is zero-mean noise. The question: under what conditions on the step sizes and the noise does the sequence converge to ?

The answer is the Robbins-Monro theorem: if the step sizes decay at the right rate (slow enough to integrate to infinity, fast enough for their squares to be summable) and the noise is controlled, then almost surely.

Formal Setup

Consider the iteration:

where is the parameter, is the step size, is the mean field (the function whose root we seek), and is a noise term.

Robbins-Monro Conditions

The step size sequence satisfies the Robbins-Monro conditions if and only if:

The first condition ensures the iterates can reach any target (the steps do not decay too fast). The second ensures the accumulated noise variance is finite (the steps decay fast enough to average out noise).

The canonical choice is for some , which satisfies both conditions. The harmonic series diverges () while the Basel series converges ().

Why not constant step size?

A constant step size satisfies but violates . The iterates will keep bouncing around with variance proportional to . They converge to a neighborhood of , not to itself. This is actually what practitioners use in SGD (constant learning rate with eventual decay), and the theory predicts exactly the stationary distribution the iterates reach.

The Robbins-Monro Theorem

Robbins-Monro Convergence

Statement

Under the above conditions, the stochastic approximation iterates satisfy:

where is the unique root of .

Intuition

The noise averages out because the step sizes decay (second Robbins-Monro condition). The signal accumulates because the step sizes decay slowly enough (first condition). The "pointing toward " condition () ensures that the mean field always pushes the iterate back toward the root.

Proof Sketch

The standard proof uses a supermartingale argument. Define . Expand:

Taking conditional expectation: the cross term with vanishes (martingale property). The inner product is negative by the stability condition. The term is summable. By the Robbins-Siegmund supermartingale convergence theorem, converges a.s., and since the negative drift is not summable unless , we conclude convergence.

Why It Matters

This single theorem implies the convergence of:

- SGD: set ,

- Q-learning: set where is the Bellman optimality operator

- TD(0): set where is the Bellman evaluation operator

All are stochastic approximation with different mean fields .

Failure Mode

If the noise variance grows faster than the stability condition can control (e.g., grows polynomially with ), the iterates can diverge. This is exactly what happens with Q-learning under function approximation (the "deadly triad"): the mean field is no longer a contraction in the right norm, and the variance grows with the approximation error.

The ODE Method

The ODE method, due to Ljung (1977) and refined by Borkar and Meyn (2000), analyzes the iteration through a continuous-time differential equation. It handles nonlinear , asymptotically negligible noise, and Markovian noise, where direct martingale arguments break down.

Associated ODE

For the stochastic approximation iteration , the associated ordinary differential equation is:

The ODE method says: if the ODE has a globally asymptotically stable equilibrium , then the stochastic approximation iterates track the ODE solution and converge to .

ODE Method for Stochastic Approximation

Statement

Under the above conditions, almost surely. The trajectory of the interpolated process (piecewise-linear through the iterates, with time rescaled by the step sizes) converges to the trajectory of the ODE .

Intuition

Over short time windows, the noise averages out (law of large numbers). What remains is the deterministic drift , which is exactly the ODE. The stochastic iterates "track" the ODE solution, with the noise causing fluctuations that shrink as the step size decreases.

Proof Sketch

- Interpolate the discrete iterates into a continuous trajectory where time increments are .

- Show the noise contribution converges to zero over any fixed time window as (by the martingale strong law).

- The remaining trajectory satisfies an integral equation that approximates the ODE.

- By Gronwall's inequality, the deviation from the ODE solution is controlled by the step size and noise residual.

- Conclude that converges to the ODE's stable equilibrium.

Why It Matters

The ODE method reduces stochastic convergence questions to deterministic stability questions. ODE stability is one ingredient, not the whole proof. For Q-learning specifically, convergence (Tsitsiklis 1994; Jaakkola, Jordan, Singh 1994) requires the full asynchronous-stochastic-approximation toolkit: (i) every state-action pair is visited infinitely often (sufficient exploration), (ii) the per-coordinate step sizes satisfy the Robbins-Monro conditions, (iii) the iterates and rewards are bounded a.s., and (iv) the update noise forms a martingale-difference sequence with bounded conditional variance. Given those, one can conclude that the iterates track the ODE , whose contraction in then gives a unique globally attracting fixed point. The ODE stability alone is not enough.

Failure Mode

The ODE method requires the iterates to remain bounded a.s. (the "stability" condition). This is the hardest assumption to verify in practice. For linear stochastic approximation (), stability holds when has eigenvalues with negative real parts. For nonlinear problems, verifying stability often requires constructing a Lyapunov function.

Polyak-Ruppert Averaging

The Robbins-Monro iterates converge at rate in general. Polyak (1990) and Ruppert (1988) independently discovered that averaging the iterates achieves the optimal rate with the optimal asymptotic constant, without requiring careful step size tuning.

Polyak-Ruppert Averaging

Statement

Define the averaged iterate . Then:

This is the optimal asymptotic covariance: it matches the Cramér-Rao lower bound for the stochastic approximation problem.

Intuition

Each individual iterate is noisy and converges slowly. But the noise in consecutive iterates is nearly independent (because the step size is small). Averaging nearly-independent estimates reduces variance by a factor of . The optimal asymptotic covariance is the best possible for any estimator that only observes the noisy function evaluations.

Why It Matters

Polyak-Ruppert averaging explains why "averaged SGD" works so well in practice: you can use a relatively large, slowly decaying step size (which gives fast initial progress) and then average to get optimal asymptotic variance. This is strictly better than carefully tuning the step size schedule. The result also connects stochastic approximation to classical statistics: the averaged iterate is asymptotically efficient in the sense of Cramér-Rao.

Failure Mode

The averaging result requires the step size exponent . If (the canonical ), the individual iterates already achieve the optimal rate (for the right constant ), but the optimal depends on the unknown Jacobian . Averaging with avoids this sensitivity: you do not need to know to get the optimal rate. If , the step sizes do not decay fast enough and the noise is not averaged out properly.

Linear Stochastic Approximation

The most tractable and well-understood case is when is linear: where and .

Linear Stochastic Approximation

The linear stochastic approximation iteration is:

where has all eigenvalues with strictly negative real parts (so is the unique root of ). The associated ODE is , which is a stable linear system.

This case is important because:

- TD(0) with linear function approximation is linear SA with and

- Least-squares SGD is linear SA with and

- For linear SA, the boundedness condition in the ODE method is automatic when is Hurwitz (all eigenvalues have negative real parts)

Connections and Special Cases

| Algorithm | Mean field | Noise | Contraction? |

|---|---|---|---|

| SGD | Gradient noise | Yes (if strongly convex) | |

| Q-learning | TD error | Yes (-contraction in ) | |

| TD(0) | TD error | Yes (-contraction in ) | |

| SARSA | TD error | Yes (-contraction) | |

| Empirical risk | Sampling noise | Problem-dependent |

Robbins-Monro vs Kiefer-Wolfowitz

The Robbins-Monro algorithm assumes you can observe directly (i.e., you have a noisy function evaluation). The Kiefer-Wolfowitz algorithm handles the case where you can only observe function values , not gradients. It estimates the gradient by finite differences, adding an extra layer of approximation. SGD is Robbins-Monro (you observe stochastic gradients). Gradient-free optimization is Kiefer-Wolfowitz.

Step size conditions are sufficient, not necessary

The Robbins-Monro conditions are sufficient for convergence but not necessary. Convergence can hold under weaker conditions (e.g., with does not satisfy for but can still give convergence in specific problems). The conditions are sharp for the general problem: there exist mean fields and noise sequences for which convergence fails if either condition is violated.

Key Results Timeline

- 1951: Robbins and Monro prove the original convergence theorem for scalar root-finding with noisy observations.

- 1952: Kiefer and Wolfowitz extend to optimization (gradient-free setting).

- 1977: Ljung introduces the ODE method, connecting stochastic approximation to dynamical systems theory.

- 1988-1990: Ruppert and Polyak independently discover iterate averaging achieves optimal rates without step size tuning.

- 1994: Tsitsiklis proves Q-learning convergence using the ODE method.

- 2000: Borkar and Meyn give the definitive treatment of the ODE method with weaker assumptions.

Exercises

Problem

Show that satisfies the Robbins-Monro conditions if and only if . For each boundary case ( and ), determine which condition holds and which fails.

Problem

Given a loss function and the SGD update , identify the mean field and the noise in the Robbins-Monro framework. What condition on ensures the stability condition ?

Problem

Consider the one-dimensional problem: find the root of (so ). With noise and step size , simulate 10,000 steps. Compare the MSE of vs the averaged over 100 independent runs. What rate does each achieve? What is the asymptotic variance of predicted by the Polyak-Ruppert theorem?

References

Canonical:

- Robbins, H. and Monro, S., "A Stochastic Approximation Method," Annals of Mathematical Statistics 22(3), 1951. The original paper.

- Borkar, V. S., Stochastic Approximation: A Dynamical Systems Viewpoint, Cambridge University Press, 2008, Ch. 2-5. Modern reference for the ODE method.

- Kushner, H. J. and Yin, G. G., Stochastic Approximation and Recursive Algorithms and Applications, 2nd ed., Springer, 2003, Ch. 1-6, 8.

- Benveniste, A., Metivier, M., Priouret, P., Adaptive Algorithms and Stochastic Approximations, Springer, 1990, Ch. 1-3. Classical treatment with martingale arguments.

- Polyak, B. T. and Juditsky, A. B., "Acceleration of Stochastic Approximation by Averaging," SIAM J. Control and Optimization 30(4), 1992. The averaging theorem.

- Ljung, L., "Analysis of Recursive Stochastic Algorithms," IEEE Trans. Automatic Control 22(4), 1977. Original ODE method paper.

Current:

- Szepesvari, C., Algorithms for Reinforcement Learning, Morgan and Claypool, 2010, Ch. 3. SA applied to RL.

- Bottou, L., "Large-Scale Machine Learning with Stochastic Gradient Descent," COMPSTAT 2010, §3-4. Practical SGD perspective.

- Bach, F. and Moulines, E., "Non-asymptotic Analysis of Stochastic Approximation Algorithms for Machine Learning," NeurIPS 2011, §3 on constant step-size behavior.

- Srikant, R. and Ying, L., "Finite-Time Error Bounds For Linear Stochastic Approximation and TD Learning," COLT 2019, §3-4.

Frontier:

- Mou, W., Li, C. J., Wainwright, M. J., Bartlett, P. L., Jordan, M. I., "On Linear Stochastic Approximation: Fine-grained Polyak-Ruppert and Non-asymptotic Concentration," COLT 2020.

- Chen, Z., Zhang, S., Doan, T. T., Clarke, J.-P., Maguluri, S. T., "Finite-Sample Analysis of Nonlinear Stochastic Approximation with Applications in Reinforcement Learning," Automatica 146, 2022.

Last reviewed: April 26, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

5- Borel-Cantelli Lemmaslayer 0B · tier 1

- Convex Optimization Basicslayer 1 · tier 1

- Stochastic Gradient Descent Convergencelayer 2 · tier 1

- Martingale Theorylayer 0B · tier 2

- Adaptive Learning Is Not IIDlayer 3 · tier 2

Derived topics

2- Q-Learninglayer 2 · tier 1

- Temporal Difference Learninglayer 2 · tier 2

Graph-backed continuations