Concentration Probability

Restricted Isometry Property

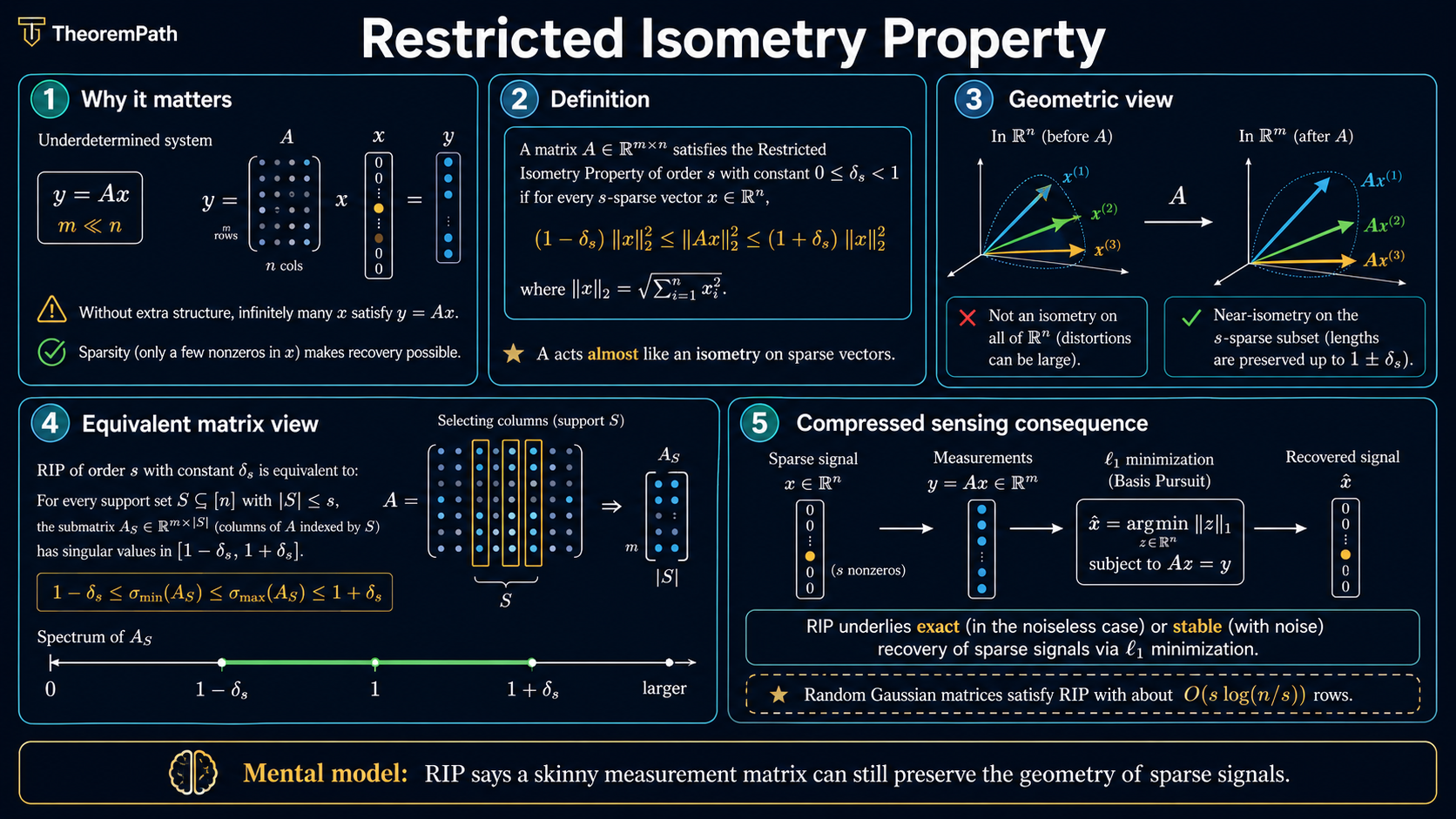

The restricted isometry property (RIP): when a measurement matrix approximately preserves norms of sparse vectors, enabling exact sparse recovery via L1 minimization. Random Gaussian matrices satisfy RIP with O(s log(n/s)) rows.

Prerequisites

Why This Matters

The restricted isometry property is the bridge between the abstract goal ("recover a sparse signal from few measurements") and the computational tool (L1 minimization). Without RIP or a similar condition, the system with is hopelessly underdetermined. With RIP, the system has a unique sparse solution that can be found by a polynomial-time algorithm.

Hide overviewShow overview

RIP is also the theoretical justification for why random measurement matrices work in compressed sensing. The same geometric phenomenon, random projections preserving structure, underlies both RIP and the Johnson-Lindenstrauss lemma.

Mental Model

A matrix satisfying RIP acts almost like an isometry (distance and norm preserving map) when restricted to sparse vectors. The "restricted" qualifier is crucial: is an matrix with , so it cannot be an isometry on all of . But it can be a near-isometry on the much smaller set of -sparse vectors, because this set has low "effective dimension" even though it lives in .

Formal Setup

Let with . For a set with , let denote the submatrix of formed by the columns indexed by .

Restricted Isometry Property (RIP)

The matrix satisfies the restricted isometry property of order with constant if and only if for every -sparse vector :

Equivalently, for every subset with , all singular values of lie in .

The RIP constant is the smallest for which this holds.

Spark of a Matrix

The spark of a matrix is the smallest number of linearly dependent columns. if and only if every columns of are linearly independent, which is a necessary (but not sufficient) condition for RIP of order to hold with .

Main Theorems

RIP Implies Exact Sparse Recovery via L1 Minimization

Statement

If satisfies RIP of order with , then for every -sparse vector , the solution to basis pursuit:

satisfies . Moreover, in the noisy case with , basis pursuit denoising recovers with:

where depends only on .

Intuition

RIP of order ensures that preserves distances between pairs of -sparse vectors (their difference is -sparse). This means no two distinct -sparse vectors can produce the same measurements. Among all vectors consistent with the measurements, the -sparse one is the unique L1 minimizer because L1 favors sparsity (the L1 ball has corners at the coordinate axes).

Proof Sketch

Let and partition the indices of into sets where is the support of (size ), contains the largest entries of , the next largest, and so on. L1 optimality gives (the "cone constraint"). The RIP applied to (which is -sparse) combined with the measurement constraint and the cone constraint forces .

Why It Matters

This theorem converts the NP-hard problem of finding the sparsest solution ( subject to ) into a polynomial-time linear program ( subject to ). The condition is the price of this computational tractability.

Failure Mode

The threshold is sufficient but not tight. Improved analyses (Cai & Zhang, 2014) sharpened this to , which is known to be tight for L1 minimization. Also, verifying that a specific matrix satisfies RIP is NP-hard (Bandeira et al., 2012), so in practice we rely on probabilistic constructions.

Gaussian Matrices Satisfy RIP with O(s log(n/s)) Rows

Statement

Let have i.i.d. entries from . There exist universal constants such that if:

then satisfies RIP of order with constant with probability at least .

Intuition

A random Gaussian matrix is "incoherent" with every sparse basis simultaneously. For any fixed -sparse vector, concentrates around by subgaussian concentration. The challenge is making this hold for all possible supports simultaneously. The epsilon-net argument handles this: cover the unit sphere restricted to each support by a finite net, apply concentration to each net point, then use a union bound over the net.

Proof Sketch

Step 1: Fix a support with . The submatrix has i.i.d. entries. For a fixed unit vector supported on , where . By subgaussian concentration: .

Step 2: Cover the unit sphere in by an -net of size .

Step 3: Union bound over all supports and all net points: the total number of events is . Taking logs: . Setting proportional to times this quantity makes the union bound work.

Step 4: Extend from net points to all unit vectors using a standard perturbation argument.

Why It Matters

This theorem says you can construct RIP matrices by simply drawing random entries. No careful design is needed. The measurement count is near-optimal in the strong sense: is the basic uniqueness / spark condition for exact recovery of an -sparse vector by linear algebra alone, but for uniform stable recovery over all -sparse supports, the matching information-theoretic / packing lower bounds scale like (Foucart & Rauhut 2013, Ch. 10; Garnaev–Gluskin 1984 for -widths; Donoho–Tanner 2009 for related phase transitions). The Gaussian RIP upper bound therefore matches the correct uniform-recovery lower bound up to constants and logarithmic factors, not merely the trivial uniqueness bound.

Failure Mode

The constant in the bound can be large in practice. For small and moderate , the bound may require more measurements than are practical. Also, Gaussian matrices are dense ( storage), which is problematic when is very large. Structured random matrices (partial Fourier, random sparse) achieve similar guarantees with or storage and faster matrix-vector products.

Connection to Johnson-Lindenstrauss

RIP and Johnson-Lindenstrauss Both Follow from Concentration of Random Projections

Statement

The Johnson-Lindenstrauss (JL) lemma states: for any set of points in , a random projection into with preserves all pairwise distances to within factor .

RIP states: a random projection into with preserves norms of all -sparse vectors to within factor .

Both results follow from the same concentration inequality for subgaussian random projections. The difference is what set of vectors must be preserved: JL preserves a finite point set; RIP preserves the (infinite) union of -dimensional subspaces.

Intuition

A random low-dimensional projection preserves structure because most of the "action" is in a low-dimensional subspace. For JL, the structure is points (effective dimension ). For RIP, the structure is -dimensional subspaces (effective dimension accounting for the possible supports).

Why It Matters

Understanding this connection reveals that RIP, JL, and random embedding results are all manifestations of the same phenomenon: subgaussian random projections approximately preserve low-dimensional geometry. This unifying view helps you apply the right tool: JL for dimensionality reduction of point sets, RIP for sparse recovery.

Failure Mode

The JL dimension depends on the number of points but not on the ambient dimension . The RIP dimension depends on both sparsity and ambient dimension. These are not interchangeable: JL does not imply RIP and RIP does not imply JL in general, though they share the same proof machinery.

Why Verifying RIP is Hard

Given a specific matrix , checking whether it satisfies RIP of order requires verifying the norm-preservation condition for all -sparse vectors. This is equivalent to computing the extreme singular values of all submatrices . For and , this is , an astronomically large number.

Bandeira et al. (2012) proved that certifying RIP is NP-hard. This is why we use random matrices: we do not verify RIP for a specific realization. Instead, we prove that RIP holds with high probability for the random ensemble.

Common Confusions

RIP is about the matrix, not about any particular signal

RIP is a property of that holds uniformly over all -sparse vectors. Once you know satisfies RIP, you can recover any -sparse signal without knowing its support in advance. This is what makes compressed sensing universal: the same random measurement matrix works for all sparse signals.

RIP of order s is not enough for sparse recovery; you need order 2s

The recovery guarantee requires (not ) to be small. This is because the error vector is the difference of two -sparse vectors, which is -sparse. A common mistake is to check when is what matters.

RIP does not require Gaussian matrices

Gaussian matrices are the simplest to analyze, but many other random matrix constructions satisfy RIP: subgaussian matrices, partial Fourier matrices, random Bernoulli matrices, and certain structured random matrices. The proof technique (concentration + epsilon-net + union bound) adapts to any matrix with independent subgaussian rows.

Summary

- RIP of order with constant : matrix preserves norms of -sparse vectors within factor

- For L1 recovery: need RIP of order with

- Gaussian matrices satisfy RIP with rows

- Proof uses: subgaussian concentration, epsilon-net covering, union bound over supports

- Connection to JL: both are about random projections preserving low-dimensional structure

- Verifying RIP for a specific matrix is NP-hard; we rely on probabilistic guarantees

Exercises

Problem

A matrix with Gaussian entries needs to satisfy RIP of order 20 with . Using the bound with , how many rows are needed?

Problem

Explain why the RIP measurement bound has a factor while the JL bound has a factor. Relate (number of points in JL) to the parameters and in RIP.

References

Canonical:

- Candes & Tao, "Decoding by linear programming" (IEEE Trans. Info. Theory, 2005). introduced RIP

- Baraniuk et al., "A simple proof of the restricted isometry property for random matrices" (Constructive Approximation, 2008)

Current:

-

Foucart & Rauhut, A Mathematical Introduction to Compressive Sensing (2013), Chapters 6 and 9

-

Cai & Zhang, "Sparse Representation of a Polytope and Recovery of Sparse Signals and Low-Rank Matrices" (IEEE Trans. Inform. Theory, vol. 60, no. 1, Jan 2014, pp. 122-132). sharpened RIP threshold to

-

Vershynin, High-Dimensional Probability (2018), Chapters 2-5

-

Boucheron, Lugosi, Massart, Concentration Inequalities (2013), Chapters 2-6

Next Topics

The natural next steps from RIP:

- Epsilon-nets and covering numbers: the proof tool behind RIP bounds

Last reviewed: April 26, 2026

Canonical graph

Required before and derived from this topic

These links come from prerequisite edges in the curriculum graph. Editorial suggestions are shown here only when the target page also cites this page as a prerequisite.

Required prerequisites

1- Sub-Gaussian Random Variableslayer 2 · tier 1

Derived topics

0No published topic currently declares this as a prerequisite.